Using what we have covered so far, train a neural network on the earlier credit project.

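# First, run the preprocessing code from the earlier lessons

import pandas as pd # Data processing and analysis; handles tabular data.

import numpy as np # Numerical computing with efficient array operations.

import matplotlib.pyplot as plt # Plotting all kinds of charts.

import seaborn as sns # High-level plotting library built on matplotlib; produces nicer statistical graphics.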

import warnings

warnings.filterwarnings("ignore")

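# Configure a font that can render Chinese (fixes garbled Chinese labels in plots)
plt.rcParams['font.sans-serif'] = ['SimHei'] # SimHei, a common font on Windows
plt.rcParams['axes.unicode_minus'] = False # Render the minus sign correctly

data = pd.read_csv('data.csv') # Load the data

# First select the string (object) columns
discrete_features = data.select_dtypes(include=['object']).columns.tolist()

# Home Ownership: label encoding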

home_ownership_mapping = {
    'Own Home': 1,
    'Rent': 2,
    'Have Mortgage': 3,
    'Home Mortgage': 4
}

data['Home Ownership'] = data['Home Ownership'].map(home_ownership_mapping)

# Years in current job: label encoding
years_in_job_mapping = {
    '< 1 year': 1,
    '1 year': 2,
    '2 years': 3,
    '3 years': 4,
    '4 years': 5,
    '5 years': 6,
    '6 years': 7,
    '7 years': 8,
    '8 years': 9,
    '9 years': 10,
    '10+ years': 11
}

data['Years in current job'] = data['Years in current job'].map(years_in_job_mapping)

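# Purpose: one-hot encoding; remember to convert the resulting bool columns to numeric
data = pd.get_dummies(data, columns=['Purpose'])

data2 = pd.read_csv("data.csv") # Re-read the raw data to compare column names

list_final = [] # Collects the names of the columns added by one-hot encoding
for i in data.columns:
    if i not in data2.columns:
        list_final.append(i) # i is a column that only exists after one-hot encoding

for i in list_final:
    data[i] = data[i].astype(int) # Cast each one-hot column from bool to int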
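The column-comparison loop above exists only to turn the boolean one-hot columns into integers. As a possible shortcut, `pd.get_dummies` accepts a `dtype` argument, so the cast can happen during encoding. The sketch below uses a small made-up frame (`toy`), not the real credit data:

```python
import pandas as pd

# Toy frame standing in for the credit data; the values are made up for illustration.
toy = pd.DataFrame({
    "Purpose": ["debt consolidation", "other", "debt consolidation"],
    "Annual Income": [50000.0, 42000.0, 61000.0],
})

# dtype=int asks get_dummies to emit 0/1 integers instead of booleans,
# so no follow-up astype(int) loop is needed.
encoded = pd.get_dummies(toy, columns=["Purpose"], dtype=int)

print(encoded.dtypes)
```

If the list of new column names is still needed later, it can be kept as it is in the original code; only the cast becomes unnecessary.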

# Term: 0/1 mapping
term_mapping = {
    'Short Term': 0,
    'Long Term': 1
}
data['Term'] = data['Term'].map(term_mapping)
data.rename(columns={'Term': 'Long Term'}, inplace=True) # Rename the column

continuous_features = data.select_dtypes(include=['int64', 'float64']).columns.tolist() # Convert the selected column names to a list

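# Fill missing values in the continuous features with the mode
for feature in continuous_features:
    mode_value = data[feature].mode()[0] # Mode (most frequent value) of the column
    data[feature] = data[feature].fillna(mode_value) # Fill the column's missing values with the mode and assign the result back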
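The loop above fills each column with its mode (`.mode()[0]`). For continuous features the median is a common alternative, and the two can differ noticeably. A minimal sketch with made-up numbers, using only the standard library:

```python
from statistics import median, mode

# Made-up income-like values with one repeated low value and one outlier.
values = [30, 30, 45, 50, 400]

print("mode:", mode(values))      # most frequent value
print("median:", median(values))  # middle value after sorting
```

In pandas the corresponding call would be `data[feature].median()`. Which statistic suits this dataset better is a modelling choice that the sketch does not settle.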

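# As noted at the start, many tuning utilities have cross-validation built in, sometimes as a required argument; avoiding it would actually take more work

# So here we still split the dataset only once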

from sklearn.model_selection import train_test_split

X = data.drop(['Credit Default'], axis=1) # Features; axis=1 drops a column

y = data['Credit Default'] # Labels

# Split into training and test sets at 8:2

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42) # 80% training, 20% test

# Print the shapes

print(X_train.shape)

print(y_train.shape)

print(X_test.shape)

print(y_test.shape)

Convert the data to suitable types

import torch

import torch.nn as nn

import torch.optim as optim


from sklearn.model_selection import train_test_split

from sklearn.preprocessing import MinMaxScaler

import time

import matplotlib.pyplot as plt

# Select the GPU if one is available, otherwise fall back to the CPU

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print(f"Using device: {device}")

scaler = MinMaxScaler()

X_train = scaler.fit_transform(X_train)

X_test = scaler.transform(X_test) # Reuse the scaling fitted on the training set so both sets are scaled identically
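The scaler is fitted on the training set only and then reused on the test set. To make that concrete, here is a hand-rolled sketch of the min-max formula `(x - min) / (max - min)`. The helpers `fit_min_max` and `apply_min_max` are hypothetical names for illustration, not scikit-learn functions, and the numbers are made up:

```python
def fit_min_max(column):
    """Return the (min, max) of a training column."""
    return min(column), max(column)

def apply_min_max(column, lo, hi):
    """Scale values with statistics learned elsewhere: (x - lo) / (hi - lo)."""
    return [(x - lo) / (hi - lo) for x in column]

train = [10.0, 20.0, 30.0]   # made-up training values
test = [15.0, 40.0]          # made-up test values; 40 exceeds the training max

lo, hi = fit_min_max(train)            # statistics come from the training data only
train_scaled = apply_min_max(train, lo, hi)
test_scaled = apply_min_max(test, lo, hi)

print(train_scaled)
print(test_scaled)
```

Because the statistics come from the training data, a test value beyond the training range lands outside [0, 1]. That is expected and avoids leaking test-set information into preprocessing.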

# Convert the data to PyTorch tensors, since PyTorch trains on tensors

# nn.CrossEntropyLoss expects integer class indices, so y_train and y_test are converted to long (int64); as float32 they would become 1.0 / 0.0

X_train = torch.FloatTensor(X_train).to(device)

y_train = torch.LongTensor(y_train.values).to(device)

X_test = torch.FloatTensor(X_test).to(device)

y_test = torch.LongTensor(y_test.values).to(device)

Model training

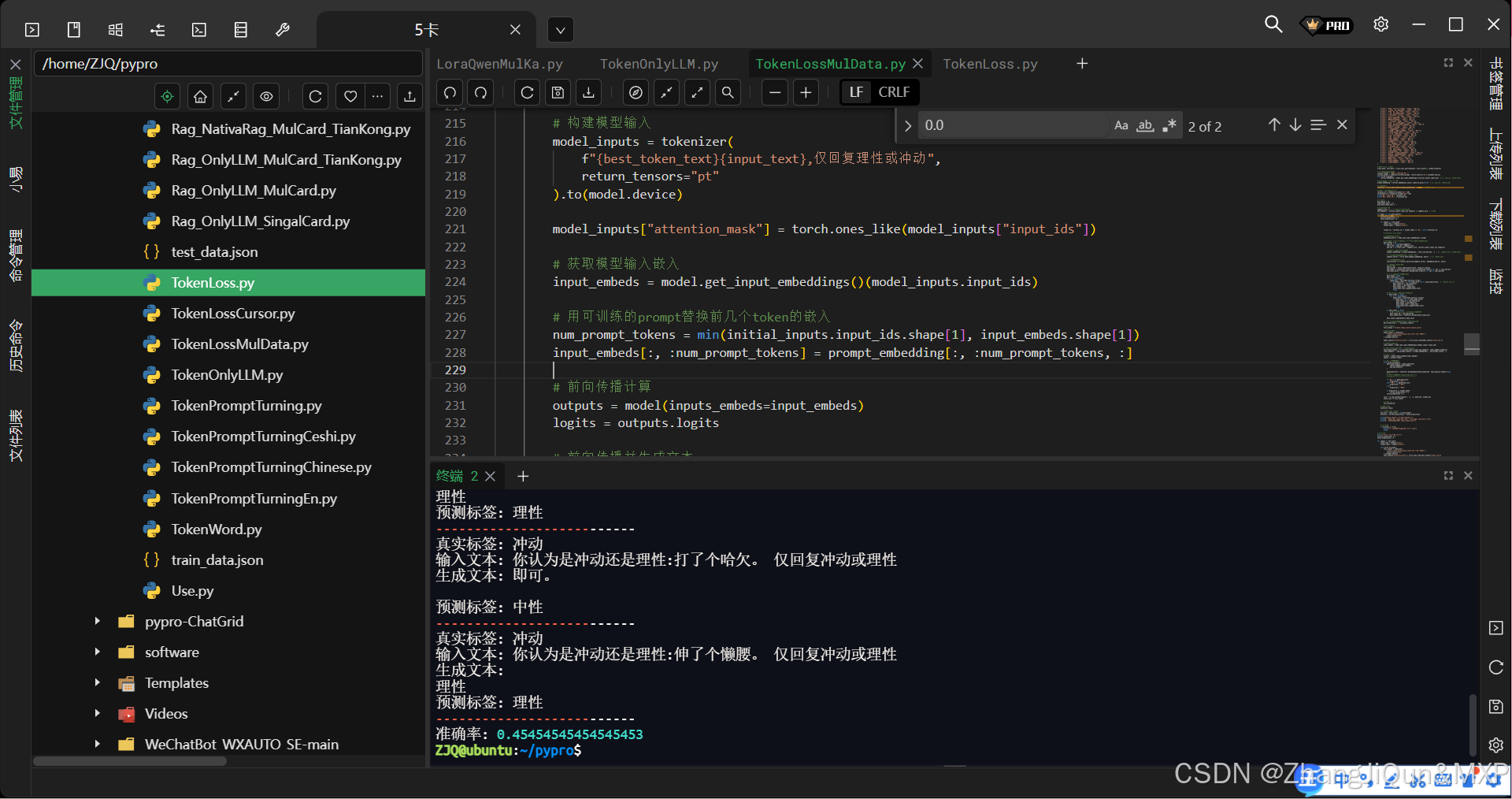

class MLP(nn.Module):
    def __init__(self):
        super(MLP, self).__init__()
        self.fc1 = nn.Linear(31, 10) # Input layer (31 features) to hidden layer
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(10, 2) # Hidden layer to output layer (2 classes)

    def forward(self, x):
        out = self.fc1(x)
        out = self.relu(out)
        out = self.fc2(out)
        return out

# Instantiate the model and move it to the selected device

model = MLP().to(device)
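The model above hard-codes 31 input features, which matches this dataset after one-hot encoding but breaks if preprocessing changes. One possible variation is to pass the input width as a constructor argument and read it from the data. `FlexibleMLP` and the random `features` tensor are hypothetical, introduced only for this sketch:

```python
import torch
import torch.nn as nn

class FlexibleMLP(nn.Module):  # hypothetical name, not part of the original code
    def __init__(self, input_dim, hidden_dim=10, num_classes=2):
        super().__init__()
        self.fc1 = nn.Linear(input_dim, hidden_dim)    # input layer to hidden layer
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(hidden_dim, num_classes)  # hidden layer to output layer

    def forward(self, x):
        return self.fc2(self.relu(self.fc1(x)))

# Stand-in for the scaled feature tensor: 8 random samples with 31 features.
features = torch.randn(8, 31)

# The input width is read from the data instead of being typed in by hand.
sketch_model = FlexibleMLP(input_dim=features.shape[1])
logits = sketch_model(features)
print(logits.shape)
```

With the real data, `input_dim=X_train.shape[1]` would play the same role.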

# Classification task, so use the cross-entropy loss

criterion = nn.CrossEntropyLoss()
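`nn.CrossEntropyLoss` takes raw scores (logits) and integer class labels. As a rough illustration of what is computed for a single sample, the sketch below reimplements the formula by hand with the standard library. The function `cross_entropy` and the logits are made up for this example, and this is not PyTorch's implementation:

```python
import math

def cross_entropy(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    shift = max(logits)                            # subtract the max for numerical stability
    exps = [math.exp(z - shift) for z in logits]
    prob_target = exps[target] / sum(exps)         # softmax probability of the true class
    return -math.log(prob_target)

# Made-up logits for a two-class output, like those a final linear layer produces.
confident_right = cross_entropy([2.0, -1.0], target=0)
confident_wrong = cross_entropy([2.0, -1.0], target=1)

print(f"{confident_right:.4f}  {confident_wrong:.4f}")
```

The loss is small when the larger logit belongs to the true class and large otherwise. By default PyTorch averages this quantity over the batch.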

# Use the stochastic gradient descent (SGD) optimizer

optimizer = optim.SGD(model.parameters(), lr=0.01)

# Train the model

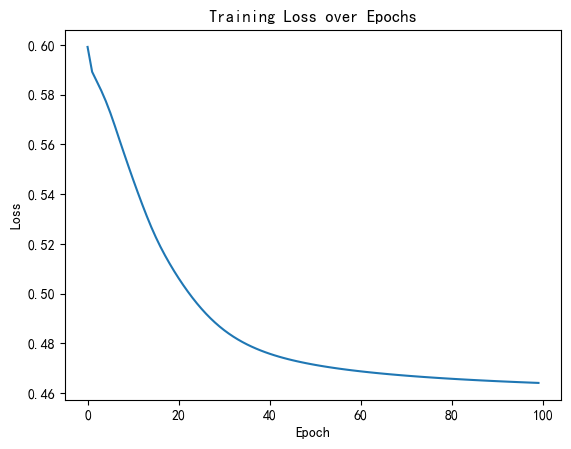

num_epochs = 20000 # Number of training epochs

# Stores the loss recorded every 200 epochs
losses = []

start_time = time.time() # Record the start time

for epoch in range(num_epochs):
    # Forward pass
    outputs = model(X_train) # Implicitly calls forward()
    loss = criterion(outputs, y_train)

    # Backward pass and optimization
    optimizer.zero_grad() # Reset gradients: PyTorch accumulates them across backward() calls, so they must be cleared every iteration (accumulation is what lets small batches simulate a larger batch size)
    loss.backward() # Backpropagate to compute gradients
    optimizer.step() # Update the parameters

    # Record the loss
    if (epoch + 1) % 200 == 0:
        losses.append(loss.item()) # item() returns a Python number; loss is a scalar tensor

    # Print training progress
    if (epoch + 1) % 100 == 0: # range starts at 0, so epoch + 1 is the 1-based epoch number; print every 100 epochs
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item():.4f}')

time_all = time.time() - start_time # Compute the training time
print(f'Training time: {time_all:.2f} seconds')
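The `optimizer.zero_grad()` call in the loop above matters because PyTorch adds each new gradient to whatever is already stored in `.grad`. A minimal sketch on a single made-up parameter `w` shows the difference:

```python
import torch

w = torch.tensor(1.0, requires_grad=True)

# Two backward passes without clearing: the gradients add up.
(3 * w).backward()
(3 * w).backward()
accumulated = w.grad.item()

# Clear the stored gradient, then run a single backward pass.
w.grad.zero_()
(3 * w).backward()
fresh = w.grad.item()

print(accumulated, fresh)
```

The derivative of `3 * w` with respect to `w` is 3, so the uncleared gradient is twice the correct value. Deliberately skipping the reset for a few steps is the basis of gradient accumulation, where several small batches stand in for one large batch.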

# Plot the loss curve (one recorded point per 200 epochs)

plt.plot([(i + 1) * 200 for i in range(len(losses))], losses)

plt.xlabel('Epoch')

plt.ylabel('Loss')

plt.title('Training Loss over Epochs')

plt.show()

# Evaluate the model
model.eval() # Switch the model to evaluation mode
with torch.no_grad(): # Disables gradient tracking, which saves memory and speeds up inference
    outputs = model(X_test) # Forward pass on the test data to get predictions
    _, predicted = torch.max(outputs, 1) # Returns each row's maximum value and its index
    correct = (predicted == y_test).sum().item() # Number of correct predictions
    accuracy = correct / y_test.size(0)
    print(f'Test accuracy: {accuracy * 100:.2f}%')

Test accuracy: 77.07%
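Accuracy alone can hide how well the positive class (defaults) is caught, especially if the classes are imbalanced. As a hedged follow-up, the sketch below computes precision and recall from plain Python lists. The helper `confusion_counts` and the labels are made up for illustration and are not results from the credit model:

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, FN, TN for binary labels where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fp, fn, tn

# Made-up labels, not output from the model above.
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
y_pred = [0, 0, 0, 0, 0, 1, 1, 0, 0, 0]

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
toy_accuracy = (tp + tn) / len(y_true)
precision = tp / (tp + fp) if (tp + fp) else 0.0   # share of predicted positives that are correct
recall = tp / (tp + fn) if (tp + fn) else 0.0      # share of actual positives that are found

print(toy_accuracy, precision, recall)
```

To apply this to the tensors from the evaluation step, `y_test.cpu().tolist()` and `predicted.cpu().tolist()` could be passed in as the two lists.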

@浙大疏锦行