Preface

Meta released Llama 3.1 on 2024-07-23

- https://ai.meta.com/blog/meta-llama-3-1/

Documentation

- https://llama.meta.com/docs/llama-everywhere/running-meta-llama-on-linux/

Git repository

- https://github.com/meta-llama/llama3

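Cloudflare offers a free entry point for trying the model

- https://linux.do/t/topic/70074

- https://playground.ai.cloudflare.com/

In practice, Llama 3.1 still has considerable room for improvement in its Chinese support

Fine-tuned Llama 3.1 variants with Chinese support are already available on Hugging Face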

- Git: https://github.com/Shenzhi-Wang/Llama3-Chinese-Chat?tab=readme-ov-file

- https://huggingface.co/shenzhi-wang/Llama3.1-8B-Chinese-Chat

- https://www.modelscope.cn/models/LLM-Research/Llama3-8B-Chinese-Chat

- Git: https://github.com/CrazyBoyM/llama3-Chinese-chat?tab=readme-ov-file

- https://huggingface.co/shareAI/llama3.1-8b-instruct-dpo-zh

- https://modelscope.cn/models/shareAI/llama3.1-8b-instruct-dpo-zh

Hardware preparation

Use Alibaba Cloud's "Platform for AI (PAI)"

PAI-DSW free trial

- https://free.aliyun.com/?spm=5176.14066474.J_5834642020.5.7b34754cmRbYhg&productCode=learn

- https://help.aliyun.com/document_detail/2261126.html

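GPU specification and image version (chosen by following the tutorial "Building an anime-style digital human with Wav2Lip + TPS-Motion-Model + CodeFormer"):

- pytorch-develop:1.12-gpu-py39-cu113-ubuntu20.04 (the officially recommended image appears to change over time)

- Instance type ecs.gn6v-c8g1.2xlarge, 1 × NVIDIA V100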

Hands-on

Download and install Ollama on Linux

The command given on the official site

- https://github.com/ollama/ollama

- https://github.com/ollama/ollama/blob/main/docs/linux.md

curl -fsSL https://ollama.com/install.sh | sh

Downloads can be very slow from mainland China

With a GitHub file accelerator (mirror), the download finishes within minutes

- https://github.com/ollama/ollama/issues/3973

- https://defagi.com/ai-case/ollama-installation-guide-china/

Reference commands

# Download the install script

/mnt/workspace/ollama_test> curl -fsSL https://ollama.com/install.sh -o install.sh

# Open install.sh and find the following two download URLs:

/mnt/workspace/ollama_test> cat install.sh | grep "https://ollama.com/download"

curl --fail --show-error --location --progress-bar -o $TEMP_DIR/ollama "https://ollama.com/download/ollama-linux-${ARCH}${VER_PARAM}"

curl --fail --show-error --location --progress-bar "https://ollama.com/download/ollama-linux-amd64-rocm.tgz${VER_PARAM}" \

/mnt/workspace/ollama_test>

# Change them to the following (pinned to v0.3.5 on amd64 and fetched through a GitHub mirror)

https://github.moeyy.xyz/https://github.com/ollama/ollama/releases/download/v0.3.5/ollama-linux-amd64

https://github.moeyy.xyz/https://github.com/ollama/ollama/releases/download/v0.3.5/ollama-linux-amd64-rocm.tgz

# That is, the two curl lines become (keep the trailing backslash on the second one, because the original line continues onto the next line of the script)

curl --fail --show-error --location --progress-bar -o $TEMP_DIR/ollama "https://github.moeyy.xyz/https://github.com/ollama/ollama/releases/download/v0.3.5/ollama-linux-amd64"

curl --fail --show-error --location --progress-bar "https://github.moeyy.xyz/https://github.com/ollama/ollama/releases/download/v0.3.5/ollama-linux-amd64-rocm.tgz" \

# Run

/mnt/workspace/ollama_test> sh install.sh

>>> Downloading ollama...

################################################################################################################################################################################################### 100.0%

>>> Installing ollama to /usr/local/bin...

>>> Creating ollama user...

>>> Adding ollama user to video group...

>>> Adding current user to ollama group...

>>> Creating ollama systemd service...

WARNING: Unable to detect NVIDIA/AMD GPU. Install lspci or lshw to automatically detect and install GPU dependencies.

>>> The Ollama API is now available at 127.0.0.1:11434.

>>> Install complete. Run "ollama" from the command line.

/mnt/workspace/ollama_test>
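The manual edit of install.sh described above can also be scripted. The following is only a minimal sketch, assuming the script still contains the two `https://ollama.com/download/...` URLs shown by the grep output; the helper names `rewrite_line` and `rewrite_install_script` are hypothetical, and the mirror prefix and the v0.3.5 release tag are copied from the commands above rather than taken from any official source.

```python
# Sketch: point the download URLs in install.sh at a GitHub mirror.
# MIRROR and RELEASE are assumptions copied from the walkthrough above.
OFFICIAL_PREFIX = "https://ollama.com/download/"
MIRROR = "https://github.moeyy.xyz/"
RELEASE = "https://github.com/ollama/ollama/releases/download/v0.3.5/"


def rewrite_line(line: str, arch: str = "amd64") -> str:
    """Rewrite one script line; lines without a download URL are returned unchanged."""
    if OFFICIAL_PREFIX not in line:
        return line
    line = line.replace(OFFICIAL_PREFIX, MIRROR + RELEASE)
    # The release assets have fixed names, so the shell variables are resolved here.
    return line.replace("${ARCH}", arch).replace("${VER_PARAM}", "")


def rewrite_install_script(text: str, arch: str = "amd64") -> str:
    """Apply rewrite_line to every line, preserving trailing backslashes."""
    return "\n".join(rewrite_line(line, arch) for line in text.split("\n"))


# Example usage (hypothetical file handling):
#   from pathlib import Path
#   script = Path("install.sh")
#   script.write_text(rewrite_install_script(script.read_text()))
```

Only the lines that contain a download URL are touched, so other uses of `${ARCH}` elsewhere in the script stay intact.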

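Check whether the installation succeeded (the connection warning is expected here, because the server has not been started yet)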

/mnt/workspace/ollama_test> ollama -v

Warning: could not connect to a running Ollama instance

Warning: client version is 0.3.5

/mnt/workspace/ollama_test>

Install the Llama 3.1 model with Ollama

# Start the server in the foreground, see https://github.com/ollama/ollama/issues/4184

/mnt/workspace/ollama_test> ollama serve

# Open a new terminal window and run

/mnt/workspace/ollama_test> ollama run llama3.1

/mnt/workspace/ollama_test> ollama list

NAME ID SIZE MODIFIED

llama3.1:latest 91ab477bec9d 4.7 GB 18 hours ago

/mnt/workspace/ollama_test>
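The same model list can be read from the running server over HTTP, which is handy for checking from a script that `ollama serve` is reachable. This is a minimal sketch, assuming the server listens on the default address 127.0.0.1:11434 reported by the installer and exposes the `/api/tags` endpoint; the function names are hypothetical.

```python
import json
import urllib.request


def parse_model_names(payload: dict) -> list:
    """Extract model names from an /api/tags response body."""
    return [model["name"] for model in payload.get("models", [])]


def list_models(base_url: str = "http://127.0.0.1:11434") -> list:
    """Ask a running Ollama server which models it has pulled."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return parse_model_names(json.load(resp))


# Example usage (requires `ollama serve` to be running):
#   print(list_models())
```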

The Chinese support does not look great

REST API

Install the Llama3.1-8B-Chinese-Chat model with Ollama

Reference

- https://github.com/Shenzhi-Wang/Llama3-Chinese-Chat?tab=readme-ov-file

# to use the Ollama model for our 8bit-quantized GGUF Llama3-8B-Chinese-Chat-v2.1

ollama run wangshenzhi/llama3-8b-chinese-chat-ollama-q8

/mnt/workspace/ollama_test> ollama run wangshenzhi/llama3-8b-chinese-chat-ollama-q8

pulling manifest

pulling 63159cb6b313... 100% ▕██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████▏ 8.5 GB

pulling fbbe9f68bba1... 100% ▕██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████▏ 256 B

pulling 4fc7dad80c9b... 100% ▕██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████▏ 30 B

pulling 50020e23ef83... 100% ▕██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████▏ 126 B

pulling f287048ff560... 100% ▕██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████▏ 483 B

verifying sha256 digest

writing manifest

removing any unused layers

success

>>>

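A more laborious installation method

Install the Llama3.1-8B-Chinese-Chat model directly

HuggingFace (requires a proxy from mainland China)

- https://huggingface.co/shenzhi-wang/Llama3.1-8B-Chinese-Chat

ModelScope (directly accessible from mainland China)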

- https://www.modelscope.cn/models/LLM-Research/Llama3-8B-Chinese-Chat

# Create a conda virtual environment (optional; the default environment also works)

conda create --name ollama_test python=3.11

# conda env list

/mnt/workspace/ollama_test> conda env list

# conda environments:

#

base /home/pai

ollama_test /home/pai/envs/ollama_test

# conda activate ollama_test

# If you get "CommandNotFoundError: Your shell has not been properly configured to use 'conda activate'.", run source activate ollama_test instead. After that, conda activate works normally for activating environments

/mnt/workspace/ollama_test> source activate ollama_test

(ollama_test) /mnt/workspace/ollama_test>

(ollama_test) /mnt/workspace/ollama_test> conda activate base

(base) /mnt/workspace/ollama_test> conda activate ollama_test

(ollama_test) /mnt/workspace/ollama_test>

# Download the model

(ollama_test) /mnt/workspace/ollama_test> pip install modelscope

(ollama_test) /mnt/workspace> python

Python 3.11.9 (main, Apr 19 2024, 16:48:06) [GCC 11.2.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> from modelscope import snapshot_download

>>> model_dir = snapshot_download('LLM-Research/Llama3-8B-Chinese-Chat',cache_dir="/mnt/workspace/LLM/")

# Check the downloaded files against the file list on ModelScope

https://www.modelscope.cn/models/LLM-Research/Llama3-8B-Chinese-Chat/file/view/master?fileName=README.md&status=1

/mnt/workspace/LLM/LLM-Research/Llama3-8B-Chinese-Chat/

/mnt/workspace/ollama_test> ll /mnt/workspace/LLM/LLM-Research/Llama3-8B-Chinese-Chat/

total 15693196

drwxrwxrwx 2 root root 4096 Aug 13 20:17 ./

drwxrwxrwx 3 root root 4096 Aug 13 20:14 ../

-rw-rw-rw- 1 root root 649 Aug 13 20:14 config.json

-rw-rw-rw- 1 root root 73 Aug 13 20:14 configuration.json

-rw-rw-rw- 1 root root 147 Aug 13 20:14 generation_config.json

-rw-rw-rw- 1 root root 7801 Aug 13 20:14 LICENSE

-rw-rw-rw- 1 root root 58 Aug 13 20:14 .mdl

-rw-rw-rw- 1 root root 4976698672 Aug 13 20:15 model-00001-of-00004.safetensors

-rw-rw-rw- 1 root root 4999802720 Aug 13 20:16 model-00002-of-00004.safetensors

-rw-rw-rw- 1 root root 4915916176 Aug 13 20:17 model-00003-of-00004.safetensors

-rw-rw-rw- 1 root root 1168138808 Aug 13 20:17 model-00004-of-00004.safetensors

-rw-rw-rw- 1 root root 23950 Aug 13 20:17 model.safetensors.index.json

-rw------- 1 root root 1013 Aug 13 20:17 .msc

-rw-rw-rw- 1 root root 36 Aug 13 20:17 .mv

-rw-rw-rw- 1 root root 43585 Aug 13 20:17 README.md

-rw-rw-rw- 1 root root 97 Aug 13 20:17 special_tokens_map.json

-rw-rw-rw- 1 root root 51272 Aug 13 20:17 tokenizer_config.json

-rw-rw-rw- 1 root root 9084490 Aug 13 20:17 tokenizer.json

/mnt/workspace/ollama_test>
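Beyond eyeballing the listing, the download can be checked against the shard index. In the listing above the four shards add up to 16,060,556,376 bytes (about 16.06 GB). The sketch below assumes the usual layout of `model.safetensors.index.json`, where a `weight_map` object maps each tensor name to the shard file that holds it; `check_shards` is a hypothetical helper name.

```python
import json
from pathlib import Path


def check_shards(model_dir: str):
    """Return (missing shard names, total bytes of the shards present)."""
    root = Path(model_dir)
    index = json.loads((root / "model.safetensors.index.json").read_text())
    # Every distinct value in weight_map is a shard file that must exist on disk.
    shard_names = sorted(set(index["weight_map"].values()))
    missing = [name for name in shard_names if not (root / name).is_file()]
    total_bytes = sum(
        (root / name).stat().st_size for name in shard_names if (root / name).is_file()
    )
    return missing, total_bytes


# Example usage:
#   missing, total = check_shards("/mnt/workspace/LLM/LLM-Research/Llama3-8B-Chinese-Chat")
#   print(missing, total)
```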

# Install PyTorch

# This post explains how to pick a PyTorch build that matches your CUDA version: https://blog.csdn.net/szw_yx/article/details/140826705?spm=1001.2014.3001.5502

(ollama_test) /mnt/workspace/ollama_test> conda install pytorch==2.3.1 torchvision==0.18.1 torchaudio==2.3.1 pytorch-cuda=11.8 -c pytorch -c nvidia

# Install the required packages, then run the model

#https://huggingface.co/shenzhi-wang/Llama3.1-8B-Chinese-Chat/blob/main/README.md

(ollama_test) /mnt/workspace/ollama_test> pip install accelerate

(ollama_test) /mnt/workspace/ollama_test> pip install --upgrade transformers

# Run

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

model_id = "/mnt/workspace/LLM/LLM-Research/Llama3-8B-Chinese-Chat"

tokenizer = AutoTokenizer.from_pretrained(model_id)

# Running the official snippet as-is raised NameError: name 'torch' is not defined; adjusting torch_dtype (with torch imported, as above) fixed it, see https://blog.csdn.net/weixin_42225889/article/details/140325755

model = AutoModelForCausalLM.from_pretrained(

model_id, torch_dtype=torch.float16, device_map="auto"

)

messages = [

{"role": "user", "content": "写一首诗吧"},

]

input_ids = tokenizer.apply_chat_template(

messages, add_generation_prompt=True, return_tensors="pt"

).to(model.device)

outputs = model.generate(

input_ids,

max_new_tokens=8192,

do_sample=True,

temperature=0.6,

top_p=0.9,

)

response = outputs[0][input_ids.shape[-1]:]

print(tokenizer.decode(response,skip_special_tokens=True))
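For more than one question it may be convenient to wrap the steps of the script above into a function. The following is only a sketch built from the same three calls (`apply_chat_template`, `generate`, `decode`) and the same sampling settings; the names `generate_reply` and `chat_turn` and the default of 512 new tokens are assumptions for illustration, not part of the model's README.

```python
def generate_reply(model, tokenizer, messages, max_new_tokens=512):
    """Generate one assistant reply for a list of chat messages."""
    input_ids = tokenizer.apply_chat_template(
        messages, add_generation_prompt=True, return_tensors="pt"
    ).to(model.device)
    outputs = model.generate(
        input_ids,
        max_new_tokens=max_new_tokens,
        do_sample=True,
        temperature=0.6,
        top_p=0.9,
    )
    # Keep only the tokens produced after the prompt.
    new_tokens = outputs[0][input_ids.shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)


def chat_turn(model, tokenizer, history, user_text, max_new_tokens=512):
    """Append a user message, generate a reply, and return (reply, new history)."""
    messages = history + [{"role": "user", "content": user_text}]
    reply = generate_reply(model, tokenizer, messages, max_new_tokens)
    return reply, messages + [{"role": "assistant", "content": reply}]


# Example usage with the model and tokenizer loaded above:
#   reply, history = chat_turn(model, tokenizer, [], "写一首诗吧")
#   print(reply)
```

Returning a new history list instead of mutating the old one keeps each turn easy to replay or discard.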

# Chinese support is better than the original model's

>>> messages = [

... {"role": "user", "content": "你好"},

... ]

>>> input_ids = tokenizer.apply_chat_template(

... messages, add_generation_prompt=True, return_tensors="pt"

... ).to(model.device)

>>> outputs = model.generate(

... input_ids,

... max_new_tokens=8192,

... do_sample=True,

... temperature=0.6,

... top_p=0.9,

... )

>>> response = outputs[0][input_ids.shape[-1]:]

>>> print(tokenizer.decode(response,skip_special_tokens=True))

你好,很高兴为您服务。有什么可以帮助您的吗?

>>>

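Import the Llama3.1-8B-Chinese-Chat model into Ollama

To have Ollama manage the model, you need a GGUF file

Download the GGUF file

Hugging Face hosts the GGUF files, but downloading them requires a proxy

- https://huggingface.co/shenzhi-wang/Llama3.1-8B-Chinese-Chat/tree/main/gguf

ModelScope does not have them yet; I opened an issue, so they may be uploaded before long

- https://www.modelscope.cn/models/LLM-Research/Llama3-8B-Chinese-Chat/feedback

One workable approach:

First download the file from Hugging Face to a local machine (through a proxy), then upload it to Aliyun Drive (阿里云盘, a cloud drive similar to Baidu Netdisk; put the file under "备份文件", the backup drive), and finally download it from Aliyun Drive on Alibaba Cloud PAI with a command-line client

Reference commands

# Example reference: https://gitcode.com/gh_mirrors/ali/aliyunpan/overview?utm_source=csdn_github_accelerator&isLogin=1

# Use a GitHub accelerator for a faster download: https://github.akams.cn/${url}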

/mnt/workspace/software> wget https://gh.llkk.cc/https://github.com/tickstep/aliyunpan/releases/download/v0.3.2/aliyunpan-v0.3.2-linux-amd64.zip

unzip aliyunpan-v0.3.2-linux-amd64.zip

cd aliyunpan-v0.3.2-linux-amd64

./aliyunpan

# aliyunpan命令手册 https://gitcode.com/gh_mirrors/ali/aliyunpan/blob/main/docs/manual.md#%E5%91%BD%E4%BB%A4%E5%88%97%E8%A1%A8%E5%8F%8A%E8%AF%B4%E6%98%8E

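# login: the client prints a link that is valid for 5 minutes; open it in a browser, complete one authorization and one QR-code scan, then press Enter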

aliyunpan > login

请在浏览器打开以下链接进行登录,链接有效时间为5分钟。

注意:你需要进行一次授权一次扫码的两次登录。

https://openapi.alipan.com/oauth/authorize?xxxx

请在浏览器里面完成扫码登录,然后再按Enter键继续...

阿里云盘登录成功: 150***393

aliyunpan:/ 150***393(备份盘)$

# download: the client reports the target path under /root/Downloads/ in its output

aliyunpan:/ 150***393(备份盘)$ download llama3.1_8b_chinese_chat_q8_0.gguf

[0] 当前文件下载最大并发量为: 5, 下载缓存为: 64.00KB

[1] 加入下载队列: /llama3.1_8b_chinese_chat_q8_0.gguf

[1] ----

文件ID: 66bc6a97cf27857dc5a64eb8a848b4b395626563

文件名: llama3.1_8b_chinese_chat_q8_0.gguf

文件类型: 文件

文件路径: /llama3.1_8b_chinese_chat_q8_0.gguf

[1] 准备下载: /llama3.1_8b_chinese_chat_q8_0.gguf

[1] 将会下载到路径: /root/Downloads/d0d78657f8914d2f9efb33fe8af6daf2/llama3.1_8b_chinese_chat_q8_0.gguf

[1] 下载开始

[1] ↓ 3.39GB/7.95GB(42.65%) 5.53MB/s(6.35MB/s) in 8m3.27s, left 14m5s .................

/mnt/workspace/software> mv /root/Downloads/d0d78657f8914d2f9efb33fe8af6daf2/llama3.1_8b_chinese_chat_q8_0.gguf /mnt/workspace/LLM/

/mnt/workspace/software> ll /mnt/workspace/LLM/llama3.1_8b_chinese_chat_q8_0.gguf

-rw-rw-rw- 1 root root 8540770560 Aug 14 17:01 /mnt/workspace/LLM/llama3.1_8b_chinese_chat_q8_0.gguf

/mnt/workspace/software>
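After a multi-hop transfer like this one, it is worth confirming that the file is still a GGUF file before importing it. The sketch below assumes the common GGUF layout in which the file starts with the 4-byte magic `GGUF` followed by a little-endian 32-bit version number; `read_gguf_version` is a hypothetical helper name, and the check does not validate the rest of the file.

```python
import struct


def read_gguf_version(path: str) -> int:
    """Return the GGUF format version, or raise ValueError if the magic is wrong."""
    with open(path, "rb") as f:
        header = f.read(8)
    if len(header) < 8 or header[:4] != b"GGUF":
        raise ValueError(f"{path} does not start with the GGUF magic bytes")
    # Bytes 4..8 hold the format version as an unsigned little-endian integer.
    (version,) = struct.unpack("<I", header[4:8])
    return version


# Example usage:
#   print(read_gguf_version("/mnt/workspace/LLM/llama3.1_8b_chinese_chat_q8_0.gguf"))
```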

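Import the model

Write a configuration file, e.g. llama3_8b_chinese_config.txt. Adjust the model file path in FROM "…" on the first line to your actual location; the rest of the template needs no changes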

/mnt/workspace/ollama_test/config> pwd

/mnt/workspace/ollama_test/config

/mnt/workspace/ollama_test/config> cat llama3_8b_chinese_config.txt

FROM "/mnt/workspace/LLM/llama3.1_8b_chinese_chat_q8_0.gguf"

TEMPLATE """{{- if .System }}

<|im_start|>system {{ .System }}<|im_end|>

{{- end }}

<|im_start|>user

{{ .Prompt }}<|im_end|>

<|im_start|>assistant

"""

SYSTEM """"""

PARAMETER stop <|im_start|>

PARAMETER stop <|im_end|>

/mnt/workspace/ollama_test/config>
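Since only the FROM line changes between machines, the configuration file can be generated from the GGUF path. This is a minimal sketch that reproduces the template shown above verbatim; `render_modelfile` is a hypothetical helper name, and the blank lines between directives are an assumption about the original layout.

```python
# Template body copied from the configuration file above; only FROM varies.
TEMPLATE_BODY = '''TEMPLATE """{{- if .System }}
<|im_start|>system {{ .System }}<|im_end|>
{{- end }}
<|im_start|>user
{{ .Prompt }}<|im_end|>
<|im_start|>assistant
"""

SYSTEM """"""

PARAMETER stop <|im_start|>
PARAMETER stop <|im_end|>
'''


def render_modelfile(gguf_path: str) -> str:
    """Build the configuration text for a given local GGUF file."""
    return f'FROM "{gguf_path}"\n\n' + TEMPLATE_BODY


# Example usage:
#   from pathlib import Path
#   text = render_modelfile("/mnt/workspace/LLM/llama3.1_8b_chinese_chat_q8_0.gguf")
#   Path("llama3_8b_chinese_config.txt").write_text(text)
```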

Then run the following command to import the model:

/mnt/workspace/ollama_test/config> ollama create llama3-8b-zh -f llama3_8b_chinese_config.txt

# List the models; llama3-8b-zh now appears

/mnt/workspace/ollama_test/config> ollama list

NAME ID SIZE MODIFIED

llama3-8b-zh:latest ce9ac3c678dd 8.5 GB 3 minutes ago

llama3.1:latest 91ab477bec9d 4.7 GB 20 hours ago

/mnt/workspace/ollama_test/config>

Run the model

Command-line chat

ollama run llama3-8b-zh

API

# https://ollama.fan/reference/api/#generate-a-completion-request-no-streaming

curl http://localhost:11434/api/generate -d '{

"model": "llama3-8b-zh",

"prompt": "你好,请介绍一下自己",

"stream": false

}'

# https://ollama.fan/reference/openai/#curl

curl http://localhost:11434/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"model": "llama3-8b-zh",

"messages": [

{

"role": "system",

"content": "You are a helpful assistant."

},

{

"role": "user",

"content": "你好,请介绍一下自己"

}

]

}'
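The two curl calls above translate directly to Python's standard library. The sketch below assumes the server runs on localhost:11434 as in the curl examples and that the imported model is named llama3-8b-zh; the helper names are hypothetical. The response fields mentioned in the usage comments (`response` for /api/generate, `choices[0].message.content` for the OpenAI-compatible endpoint) follow the respective API formats.

```python
import json
import urllib.request

BASE_URL = "http://localhost:11434"  # assumed default Ollama address


def build_request(path: str, payload: dict) -> urllib.request.Request:
    """Create a JSON POST request for the Ollama server."""
    data = json.dumps(payload, ensure_ascii=False).encode("utf-8")
    return urllib.request.Request(
        BASE_URL + path,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Native API: single non-streaming completion."""
    return build_request(
        "/api/generate", {"model": model, "prompt": prompt, "stream": False}
    )


def chat_request(model: str, user_text: str,
                 system_text: str = "You are a helpful assistant.") -> urllib.request.Request:
    """OpenAI-compatible API: chat completion with a system and a user message."""
    messages = [
        {"role": "system", "content": system_text},
        {"role": "user", "content": user_text},
    ]
    return build_request("/v1/chat/completions", {"model": model, "messages": messages})


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.load(resp)


# Example usage (requires a running server):
#   print(send(generate_request("llama3-8b-zh", "你好,请介绍一下自己"))["response"])
#   reply = send(chat_request("llama3-8b-zh", "你好,请介绍一下自己"))
#   print(reply["choices"][0]["message"]["content"])
```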

Enable the GPU

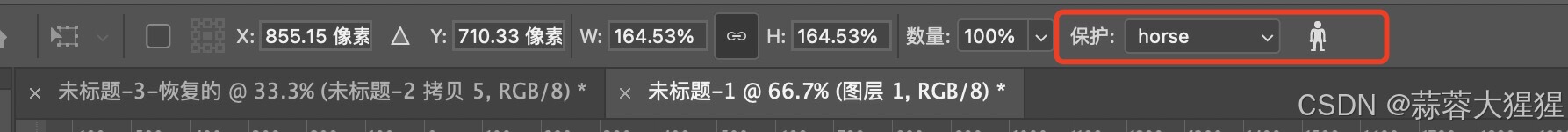

Edit the configuration file to switch the compute mode to GPU

# References: https://wenku.csdn.net/answer/63mxeomqsm, https://blog.csdn.net/weixin_46124467/article/details/136781232

# Configuration file

/mnt/workspace/LLM> ll /etc/systemd/system/ollama.service

-rw-rw-rw- 1 root root 533 Aug 13 19:54 /etc/systemd/system/ollama.service

/mnt/workspace/LLM>

# Append the following two lines

/mnt/workspace/LLM> tail -2 /etc/systemd/system/ollama.service

[computing]

mode=gpu

/mnt/workspace/LLM>

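# Restart the service (note: ollama serve started by hand does not read the systemd unit file, and Ollama normally detects NVIDIA GPUs on its own at startup, so the startup log is the reliable check)

/mnt/workspace/ollama_test> ollama serve

On startup, the log lists the available GPU

During a conversation, GPU utilization rises from 0.0% and responses become very fast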
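GPU utilization can also be sampled from a script while a prompt is running. This is a minimal sketch, assuming `nvidia-smi` is on the PATH of the instance; the function names are hypothetical, and the sample values in the usage comment are made up for illustration rather than measured on this machine.

```python
import subprocess

# Query name, utilization (%) and used memory (MiB) as bare CSV values.
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,utilization.gpu,memory.used",
    "--format=csv,noheader,nounits",
]


def parse_gpu_stats(csv_text: str) -> list:
    """Turn nvidia-smi CSV output into one dict per GPU."""
    stats = []
    for line in csv_text.strip().splitlines():
        name, util, mem = [field.strip() for field in line.split(",")]
        stats.append(
            {"name": name, "utilization_pct": float(util), "memory_used_mib": float(mem)}
        )
    return stats


def read_gpu_stats() -> list:
    """Run nvidia-smi once and parse its output."""
    result = subprocess.run(QUERY, check=True, capture_output=True, text=True)
    return parse_gpu_stats(result.stdout)


# Example usage (hypothetical values):
#   parse_gpu_stats("Tesla V100-SXM2-16GB, 37, 9120")
#   read_gpu_stats()
```

If utilization stays at 0 while the model is answering, the model is most likely running on the CPU.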

References:

HuggingFace + Ollama + Llama 3.1: installing the Chinese fine-tuned Llama 3.1 with ease

Cloudflare Llama 3.1 free trial: the Chinese results may surprise you