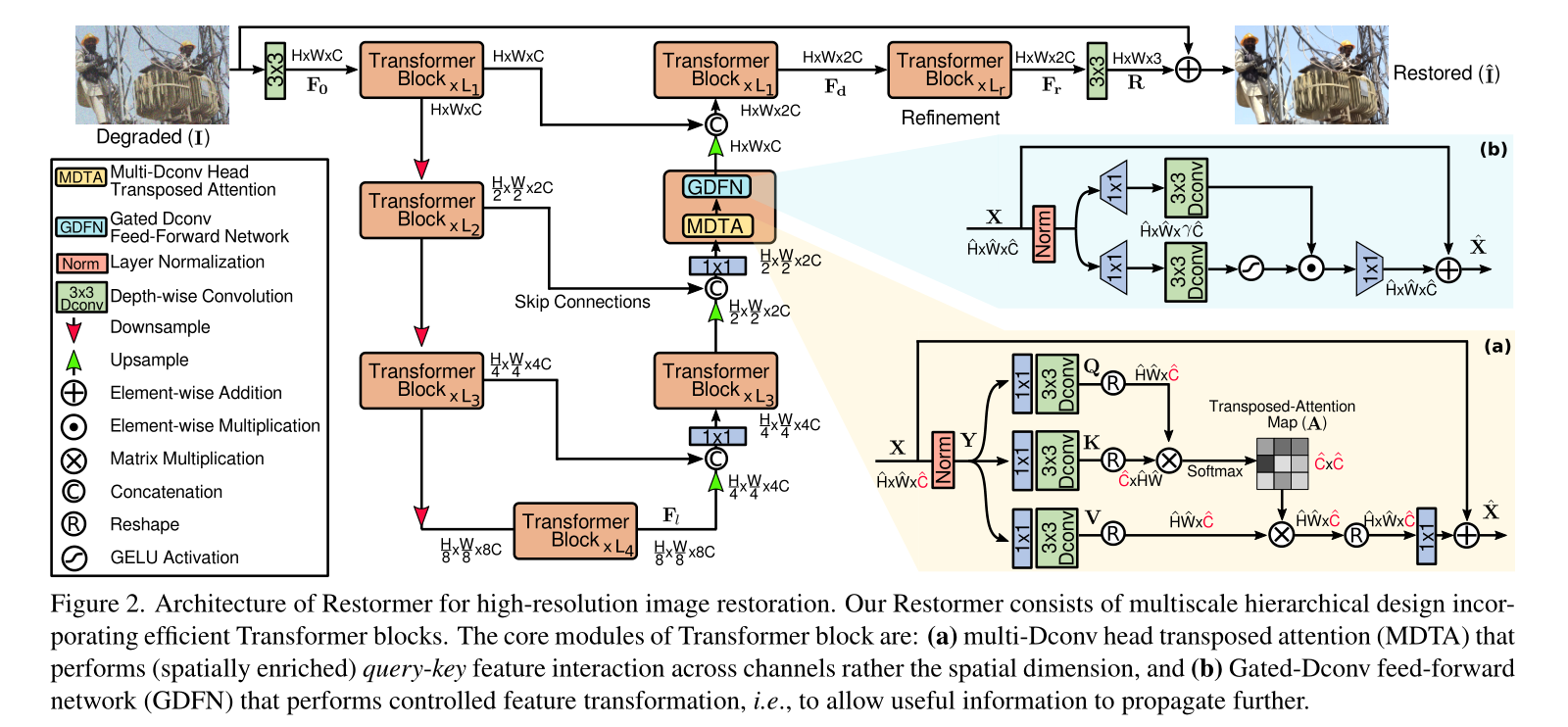

A Transformer Block consists of one MDTA module followed by one GDFN module.

Let's look at the code implementation:

    import torch.nn as nn

    # LayerNorm, Attention and FeedForward are the model's own modules, defined elsewhere in its code.
    class TransformerBlock(nn.Module):
        def __init__(self, dim, num_heads, ffn_expansion_factor, bias, LayerNorm_type):
            super(TransformerBlock, self).__init__()
            self.norm1 = LayerNorm(dim, LayerNorm_type)
            self.attn = Attention(dim, num_heads, bias)  # MDTA
            self.norm2 = LayerNorm(dim, LayerNorm_type)
            self.ffn = FeedForward(dim, ffn_expansion_factor, bias)  # GDFN

        def forward(self, x):
            # Each sublayer sees a normalized input and its output is added back to x.
            x = x + self.attn(self.norm1(x))
            x = x + self.ffn(self.norm2(x))
            return x

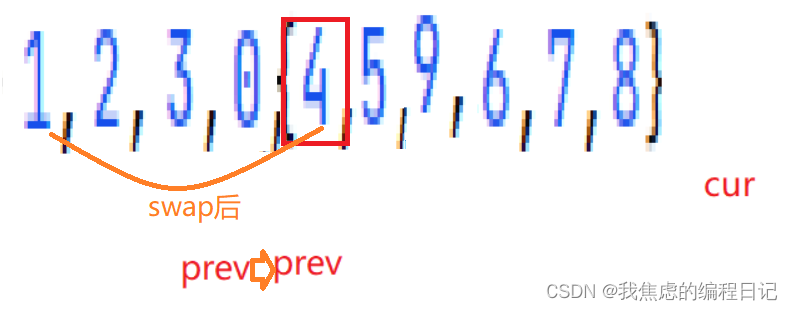

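Note that both MDTA and GDFN in the Transformer Block are wrapped in residual connections: the output of each module is added back to its input.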
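To make the residual pattern concrete, here is a minimal sketch that reproduces only the `x + sublayer(norm(x))` structure of `forward`. It is not the model's code. Plain Python lists stand in for feature tensors so it runs without PyTorch, and `residual`, `identity_norm`, `zero_branch`, `halve_branch`, and `transformer_block` are hypothetical names introduced purely for illustration.

```python
def residual(sublayer, norm):
    """Wrap a sublayer as x + sublayer(norm(x)), the pattern used in forward()."""
    def apply(x):
        y = sublayer(norm(x))
        # Element-wise addition of the branch output to the untouched input.
        return [xi + yi for xi, yi in zip(x, y)]
    return apply


def identity_norm(x):
    # Placeholder for LayerNorm (assumption): leaves the values unchanged.
    return list(x)


def zero_branch(x):
    # Stand-in sublayer whose output is all zeros.
    return [0.0 for _ in x]


def halve_branch(x):
    # Stand-in sublayer that returns half of its input.
    return [0.5 * v for v in x]


def transformer_block(x, attn, ffn):
    # Same two-step structure as TransformerBlock.forward:
    # attention branch first, then the feed-forward branch.
    x = residual(attn, identity_norm)(x)
    x = residual(ffn, identity_norm)(x)
    return x


x = [1.0, -2.0, 4.0]
out_zero = transformer_block(x, zero_branch, zero_branch)
out_half = transformer_block(x, halve_branch, halve_branch)
print(out_zero)  # [1.0, -2.0, 4.0]
print(out_half)  # [2.25, -4.5, 9.0]
```

With branches that output zeros, the block returns its input unchanged, which shows that the skip path always carries `x` through. With the halving branches, each stage multiplies the signal by 1.5, so the output is 2.25 times the input. In both cases the output has the same length as the input, which is why such blocks can be stacked.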
