Deep Learning Training Camp: Chinese Text Classification

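- Original link

- Environment

- Preliminaries

- Setting up the environment

- Setting up the GPU

- Loading the data

- Building the vocabulary

- Generating data batches and iterators

- Model definition

- Creating the model instance

- Defining the training and evaluation functions

- Model training

- Model prediction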

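Original link

- 🍨 This post is a study-log entry for the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍦 Reference article: 365天深度学习训练营-第N2周:pytorch中文文本分类识别 (Week N2: Chinese text classification with PyTorch)

- 🍖 Original author: K同学啊 (offers tutoring and custom projects)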

Environment

- Language: Python 3.9.12

- Editor: Jupyter Notebook

- Deep learning framework: PyTorch

前置工作

本次中文文本分类的大致内容是一样的,不一样的地方就在于本次使用的是中文的文本分类

文本分类大致过程如下:

设置环境

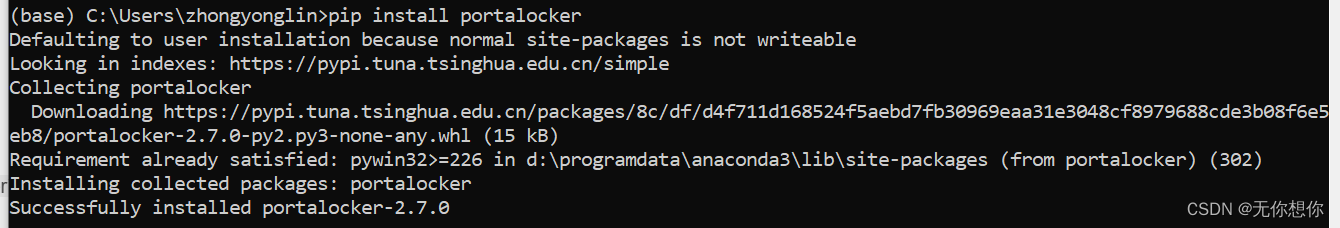

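Before starting, make sure the two required packages, torchtext and portalocker, are installed. I installed them from the Anaconda Prompt with the following commands:

pip install torchtext

pip install portalocker

This exercise additionally requires the jieba package, which is used for Chinese word segmentation.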

Setting up the GPU

Select the device to run on:

import torch
import torch.nn as nn
import warnings

warnings.filterwarnings("ignore")  # suppress warning messages

# Windows 10
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device

device(type='cpu')

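Loading the data

This exercise uses a locally downloaded file; if you need it, message me or join the deep learning training camp.

First, load the data:

import pandas as pd

# Load the custom Chinese dataset (tab-separated, no header row:
# column 0 holds the text, column 1 holds the label)
train_data = pd.read_csv('train.csv', sep='\t', header=None)
train_data.head()

# Build the dataset iterator
def coustom_data_iter(texts, labels):
    for x, y in zip(texts, labels):
        yield x, y

train_iter = coustom_data_iter(train_data[0].values[:], train_data[1].values[:])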

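For reference, torchtext.datasets.AG_NEWS() is the TorchText dataset class for loading the AG News dataset, which contains news articles in the World, Sci/Tech, Sports, and Business categories. This exercise uses the custom iterator over train.csv instead.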
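One thing to keep in mind about coustom_data_iter: it is a generator function, so the object it returns can be walked only once. The sketch below uses made-up sample rows (not the real train.csv) to show the effect, plus one possible workaround, a hypothetical make_iter helper that creates a fresh generator on demand.

```python
def coustom_data_iter(texts, labels):
    for x, y in zip(texts, labels):
        yield x, y

# Made-up rows for illustration only; the real data comes from train.csv
texts = ["播放一首歌", "明天天气怎么样"]
labels = ["Music-Play", "Weather-Query"]

train_iter = coustom_data_iter(texts, labels)
first_pass = list(train_iter)    # walks the generator to the end
second_pass = list(train_iter)   # the generator is now exhausted

def make_iter():
    # Hypothetical helper: returns a brand-new generator on every call
    return coustom_data_iter(texts, labels)

fresh_pass = list(make_iter())
print(len(first_pass), len(second_pass), len(fresh_pass))  # 2 0 2
```

This is why the training section calls coustom_data_iter again before building the dataset instead of reusing the earlier train_iter.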

Building the vocabulary

from torchtext.data.utils import get_tokenizer
from torchtext.vocab import build_vocab_from_iterator
# conda install jieba -y
import jieba

# Chinese word segmentation
tokenizer = jieba.lcut

def yield_tokens(data_iter):
    for text, _ in data_iter:
        yield tokenizer(text)

vocab = build_vocab_from_iterator(yield_tokens(train_iter), specials=["<unk>"])
vocab.set_default_index(vocab["<unk>"])  # tokens not in the vocabulary map to this default index

vocab(['我','想','看','和平','精英','上','战神','必备','技巧','的','游戏','视频'])

label_name = list(set(train_data[1].values[:]))
print(label_name)

text_pipeline = lambda x: vocab(tokenizer(x))
label_pipeline = lambda x: label_name.index(x)

print(text_pipeline('我想看和平精英上战神必备技巧的游戏视频'))
print(label_pipeline('Video-Play'))

['Music-Play', 'Alarm-Update', 'TVProgram-Play', 'FilmTele-Play', 'Video-Play', 'Weather-Query', 'HomeAppliance-Control', 'Other', 'Audio-Play', 'Calendar-Query', 'Travel-Query', 'Radio-Listen']

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0]

4

Every index in the second output line is 0, the index of <unk>, so in this run none of the tokens were found in the vocabulary. A likely cause is that train_iter, a one-shot generator, had already been consumed when the vocabulary was built (for example, after re-running a cell); recreating train_iter before calling build_vocab_from_iterator should give non-zero indices.
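To make the vocabulary lookup concrete, here is a minimal pure-Python sketch of the same idea: tokens are numbered, with "<unk>" at index 0, and any token missing from the vocabulary falls back to that index. The names toy_tokenizer (which splits on spaces), build_toy_vocab, lookup, and the sample sentences are stand-ins invented for this illustration; they are not jieba or torchtext, whose internals may differ.

```python
from collections import Counter

def toy_tokenizer(text):
    # Stand-in for jieba.lcut: assumes the text is already space-separated
    return text.split()

def build_toy_vocab(sentences, specials=("<unk>",)):
    counts = Counter(tok for s in sentences for tok in toy_tokenizer(s))
    # Specials first, then tokens by descending frequency (ties broken by code point)
    ordered = sorted(counts, key=lambda t: (-counts[t], t))
    itos = list(specials) + ordered
    return {tok: idx for idx, tok in enumerate(itos)}

def lookup(vocab, tokens, default_index=0):
    # Unknown tokens fall back to default_index, like set_default_index
    return [vocab.get(tok, default_index) for tok in tokens]

sentences = ["我 想 看 游戏 视频", "我 想 听 歌"]
toy_vocab = build_toy_vocab(sentences)
ids = lookup(toy_vocab, toy_tokenizer("我 想 看 电影"))
print(ids)  # the last id is 0 because "电影" never appeared in the sentences
```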

Generating data batches and iterators

from torch.utils.data import DataLoader

def collate_batch(batch):
    label_list, text_list, offsets = [], [], [0]

    for (_text, _label) in batch:
        # Label list
        label_list.append(label_pipeline(_label))

        # Text list
        processed_text = torch.tensor(text_pipeline(_text), dtype=torch.int64)
        text_list.append(processed_text)

        # Token count of this sentence; the cumulative sum below turns counts into start offsets
        offsets.append(processed_text.size(0))

    label_list = torch.tensor(label_list, dtype=torch.int64)
    text_list = torch.cat(text_list)
    offsets = torch.tensor(offsets[:-1]).cumsum(dim=0)  # cumulative sum along dim: start index of each sentence

    return text_list.to(device), label_list.to(device), offsets.to(device)

# Data loader, example call only (the loaders actually used are built in the training section)
dataloader = DataLoader(train_iter,
                        batch_size=8,
                        shuffle=False,
                        collate_fn=collate_batch)
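The offsets returned by collate_batch are easiest to see on a tiny example. The sketch below reproduces just that bookkeeping in plain Python, with made-up token ids: the sentences are concatenated into one flat list, and offsets records where each sentence starts.

```python
from itertools import accumulate

# Made-up token ids for three sentences of lengths 3, 2, and 4
batch = [[5, 8, 2], [7, 1], [4, 4, 9, 3]]

flat = [tok for sent in batch for tok in sent]
lengths = [0] + [len(sent) for sent in batch]
offsets = list(accumulate(lengths[:-1]))  # same role as offsets[:-1] followed by cumsum

# Recover each sentence from the flat list using the offsets
bounds = offsets + [len(flat)]
recovered = [flat[bounds[i]:bounds[i + 1]] for i in range(len(offsets))]

print(offsets)             # [0, 3, 5]
print(recovered == batch)  # True
```

Dropping the last length before the cumulative sum is what makes each entry a start position rather than an end position.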

Model definition

First define the classification model. It embeds the tokens, averages the token embeddings of each sentence (the default mean mode of nn.EmbeddingBag), and passes the result through a linear layer.

from torch import nn

class TextClassificationModel(nn.Module):

    def __init__(self, vocab_size, embed_dim, num_class):
        super(TextClassificationModel, self).__init__()

        self.embedding = nn.EmbeddingBag(vocab_size,    # vocabulary size
                                         embed_dim,     # embedding dimension
                                         sparse=False)  # use dense gradients

        self.fc = nn.Linear(embed_dim, num_class)
        self.init_weights()

    def init_weights(self):
        initrange = 0.5
        self.embedding.weight.data.uniform_(-initrange, initrange)
        self.fc.weight.data.uniform_(-initrange, initrange)
        self.fc.bias.data.zero_()

    def forward(self, text, offsets):
        embedded = self.embedding(text, offsets)
        return self.fc(embedded)

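self.embedding.weight.data.uniform_(-initrange, initrange) initializes the embedding layer's weights in place from a uniform distribution over [-initrange, initrange]. Random initialization gives each parameter a different starting point, and keeping the range small helps keep early activations and gradients at a reasonable scale, which reduces the risk of vanishing or exploding gradients.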
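In the forward pass, nn.EmbeddingBag in its default mean mode looks up the embedding of each token and averages them per sentence, using offsets to find the sentence boundaries. The following plain-Python sketch mimics that computation with a tiny hand-written embedding table. The table values and the helper name mean_bags are illustrative assumptions, the sketch assumes no bag is empty, and the real layer works on tensors rather than lists.

```python
def mean_bags(table, flat_ids, offsets):
    """Average the embedding rows of each bag; a bag runs from one offset to the next."""
    bounds = list(offsets) + [len(flat_ids)]
    dim = len(table[0])
    pooled = []
    for start, end in zip(bounds[:-1], bounds[1:]):
        rows = [table[i] for i in flat_ids[start:end]]  # embedding lookup
        pooled.append([sum(r[d] for r in rows) / len(rows) for d in range(dim)])
    return pooled

# Hypothetical 4-token vocabulary with 2-dimensional embeddings
table = [[0.0, 0.0], [1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
flat_ids = [1, 2, 3, 3]   # two sentences: [1, 2] and [3, 3]
offsets = [0, 2]

pooled = mean_bags(table, flat_ids, offsets)
print(pooled)  # [[2.0, 3.0], [5.0, 6.0]]
```

Each sentence ends up as one fixed-size vector regardless of its length, which is what lets a single linear layer classify it.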

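Creating the model instance

Instantiate the model defined above and name it model.

# train_iter has already been consumed, so count the classes from label_name instead
num_class = len(label_name)
vocab_size = len(vocab)
em_size = 64
model = TextClassificationModel(vocab_size, em_size, num_class).to(device)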

Defining the training and evaluation functions

import time

def train(dataloader):
    model.train()  # switch to training mode
    total_acc, train_loss, total_count = 0, 0, 0
    log_interval = 50
    start_time = time.time()

    for idx, (text, label, offsets) in enumerate(dataloader):

        predicted_label = model(text, offsets)

        optimizer.zero_grad()                    # reset gradients
        loss = criterion(predicted_label, label) # loss between the network output and the true label
        loss.backward()                          # backpropagation
        torch.nn.utils.clip_grad_norm_(model.parameters(), 0.1)  # gradient clipping
        optimizer.step()                         # update the parameters

        # Record accuracy and loss
        total_acc += (predicted_label.argmax(1) == label).sum().item()
        train_loss += loss.item()
        total_count += label.size(0)

        if idx % log_interval == 0 and idx > 0:
            elapsed = time.time() - start_time
            # epoch is the global loop variable from the training loop.
            # loss.item() is already a batch mean, so train_loss/total_count is
            # roughly the mean loss divided by the batch size.
            print('| epoch {:1d} | {:4d}/{:4d} batches '
                  '| train_acc {:4.3f} train_loss {:4.5f}'.format(epoch, idx, len(dataloader),
                                                                  total_acc/total_count, train_loss/total_count))
            total_acc, train_loss, total_count = 0, 0, 0
            start_time = time.time()

def evaluate(dataloader):
    model.eval()  # switch to evaluation mode
    total_acc, train_loss, total_count = 0, 0, 0

    with torch.no_grad():
        for idx, (text, label, offsets) in enumerate(dataloader):
            predicted_label = model(text, offsets)

            loss = criterion(predicted_label, label)  # compute the loss
            # Record evaluation statistics
            total_acc += (predicted_label.argmax(1) == label).sum().item()
            train_loss += loss.item()
            total_count += label.size(0)

    return total_acc/total_count, train_loss/total_count
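A note on the loss these functions report: criterion is nn.CrossEntropyLoss, whose default reduction averages over the batch, so loss.item() is already a per-sample mean. Summing those means and dividing by total_count (a sample count) therefore gives a value roughly one batch size smaller than the true average loss. The sketch below uses made-up per-batch numbers to compare that quantity with a sample-weighted average; it only illustrates the arithmetic and is not a change to the training code.

```python
# Made-up (batch mean loss, batch size) pairs for illustration
batches = [(2.4, 64), (2.0, 64), (1.6, 32)]

sum_of_means = sum(loss for loss, _ in batches)
total_count = sum(n for _, n in batches)

# What train() and evaluate() report: sum of batch means / number of samples
logged = sum_of_means / total_count

# Sample-weighted average: each batch mean counts in proportion to its size
weighted = sum(loss * n for loss, n in batches) / total_count

print(round(logged, 4), round(weighted, 4))
```

With 12 classes, an untrained classifier's cross-entropy is around ln 12 ≈ 2.48; divided by a batch size of 64 that is about 0.039, the same order of magnitude as the first train_loss values in the training log.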

Model training

from torch.utils.data.dataset import random_split
from torchtext.data.functional import to_map_style_dataset

# Hyperparameters
EPOCHS = 10      # number of epochs
LR = 5           # learning rate
BATCH_SIZE = 64  # batch size for training

criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=LR)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, 1.0, gamma=0.1)
total_accu = None

# Build the dataset (recreate the iterator, since the earlier one has been consumed)
train_iter = coustom_data_iter(train_data[0].values[:], train_data[1].values[:])
train_dataset = to_map_style_dataset(train_iter)

# 80/20 split; derive the second length from the first so the two always sum to len(train_dataset)
num_train = int(len(train_dataset) * 0.8)
split_train_, split_valid_ = random_split(train_dataset,
                                          [num_train, len(train_dataset) - num_train])

train_dataloader = DataLoader(split_train_, batch_size=BATCH_SIZE,
                              shuffle=True, collate_fn=collate_batch)

valid_dataloader = DataLoader(split_valid_, batch_size=BATCH_SIZE,
                              shuffle=True, collate_fn=collate_batch)

for epoch in range(1, EPOCHS + 1):
    epoch_start_time = time.time()
    train(train_dataloader)
    val_acc, val_loss = evaluate(valid_dataloader)

    # Get the current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    # Lower the learning rate only when validation accuracy falls below the best so far
    if total_accu is not None and total_accu > val_acc:
        scheduler.step()
    else:
        total_accu = val_acc
    print('-' * 69)
    print('| epoch {:1d} | time: {:4.2f}s | '
          'valid_acc {:4.3f} valid_loss {:4.3f} | lr {:4.6f}'.format(epoch,
                                                                    time.time() - epoch_start_time,
                                                                    val_acc, val_loss, lr))

    print('-' * 69)

| epoch 1 | 50/ 152 batches | train_acc 0.422 train_loss 0.03069

| epoch 1 | 100/ 152 batches | train_acc 0.698 train_loss 0.01958

| epoch 1 | 150/ 152 batches | train_acc 0.765 train_loss 0.01386

---------------------------------------------------------------------

| epoch 1 | time: 1.55s | valid_acc 0.799 valid_loss 0.012 | lr 5.000000

---------------------------------------------------------------------

| epoch 2 | 50/ 152 batches | train_acc 0.811 train_loss 0.01107

| epoch 2 | 100/ 152 batches | train_acc 0.832 train_loss 0.00908

| epoch 2 | 150/ 152 batches | train_acc 0.849 train_loss 0.00813

---------------------------------------------------------------------

| epoch 2 | time: 1.51s | valid_acc 0.849 valid_loss 0.008 | lr 5.000000

---------------------------------------------------------------------

| epoch 3 | 50/ 152 batches | train_acc 0.869 train_loss 0.00679

| epoch 3 | 100/ 152 batches | train_acc 0.886 train_loss 0.00646

| epoch 3 | 150/ 152 batches | train_acc 0.881 train_loss 0.00629

---------------------------------------------------------------------

| epoch 3 | time: 1.49s | valid_acc 0.874 valid_loss 0.007 | lr 5.000000

---------------------------------------------------------------------

| epoch 4 | 50/ 152 batches | train_acc 0.900 train_loss 0.00529

| epoch 4 | 100/ 152 batches | train_acc 0.910 train_loss 0.00488

| epoch 4 | 150/ 152 batches | train_acc 0.915 train_loss 0.00473

---------------------------------------------------------------------

| epoch 4 | time: 1.61s | valid_acc 0.881 valid_loss 0.006 | lr 5.000000

---------------------------------------------------------------------

| epoch 5 | 50/ 152 batches | train_acc 0.931 train_loss 0.00385

...

| epoch 10 | 150/ 152 batches | train_acc 0.983 train_loss 0.00128

---------------------------------------------------------------------

| epoch 10 | time: 1.71s | valid_acc 0.901 valid_loss 0.005 | lr 5.000000

---------------------------------------------------------------------

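TorchText is a PyTorch extension library focused on text data. Its to_map_style_dataset function converts an iterable dataset into a map-style one, so specific samples can be accessed directly by index. This simplifies data handling during training, validation, and testing; random_split, for example, needs a dataset that supports len() and indexing.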
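The learning-rate logic in the training loop is easy to miss: scheduler.step() is called only when the validation accuracy falls below the best value so far, and with StepLR at a step size of 1 each call multiplies the learning rate by gamma = 0.1. The sketch below replays that rule in plain Python on a made-up accuracy sequence; simulate_lr and the numbers are illustrative assumptions.

```python
def simulate_lr(val_accs, lr=5.0, gamma=0.1):
    """Return the learning rate reported for each epoch under the rule in the training loop."""
    best = None
    reported = []
    for acc in val_accs:
        reported.append(lr)       # the loop reads lr before the scheduler check
        if best is not None and best > acc:
            lr *= gamma           # one StepLR step with step_size=1
        else:
            best = acc
    return reported

# Made-up validation accuracies: the third epoch is worse than the best so far
history = simulate_lr([0.80, 0.85, 0.84, 0.86])
print([round(x, 6) for x in history])  # [5.0, 5.0, 5.0, 0.5]
```

In the log above, lr is still 5.000000 at epoch 10, which is consistent with the validation accuracy never dropping below its running best during that run.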

Model prediction

# Prediction function
def predict(text, text_pipeline):
    with torch.no_grad():
        # Keep the inputs on the same device as the model
        text = torch.tensor(text_pipeline(text), dtype=torch.int64).to(device)
        output = model(text, torch.tensor([0]).to(device))
        return output.argmax(1).item()

ex_text_str = "随便播放一首专辑阁楼里的佛里的歌"
#ex_text_str = "还有双鸭山到淮阴的汽车票吗13号的"

model = model.to(device)

print("The category of this text is: %s" % label_name[predict(ex_text_str, text_pipeline)])

The category of this text is: Music-Play
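One caveat for reusing the model later: label_name is built with list(set(...)), and the iteration order of a set of strings is not guaranteed to be the same from one Python session to the next, so the index-to-label mapping can change between runs. A minimal sketch of one way to make it stable, assuming you are free to sort the labels (the sample labels and the names stable_label_name and label_to_index are made up for this illustration):

```python
# Made-up subset of labels, with duplicates as they would appear in a label column
labels = ["Video-Play", "Music-Play", "Other", "Music-Play", "Video-Play"]

# Sorting the unique labels gives the same order in every run
stable_label_name = sorted(set(labels))
label_to_index = {name: idx for idx, name in enumerate(stable_label_name)}

print(stable_label_name)             # ['Music-Play', 'Other', 'Video-Play']
print(label_to_index["Video-Play"])  # 2
```

Whatever ordering is used during training has to be the one used at prediction time, since the model only outputs an index.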