第五章.与学习相关技巧

5.1 参数更新的最优化方法

- 神经网络学习的目的是找到使损失函数的值尽可能小的参数,这是寻找最优参数的问题,解决这个问题的过程称为最优化。

- 很多深度学习框架都实现了各种最优化方法,比如Lasagne深度学习框架,在update.py这个文件中以函数的形式集中实现了最优化方法,用户可以从中选择自己想用的最优化方法。

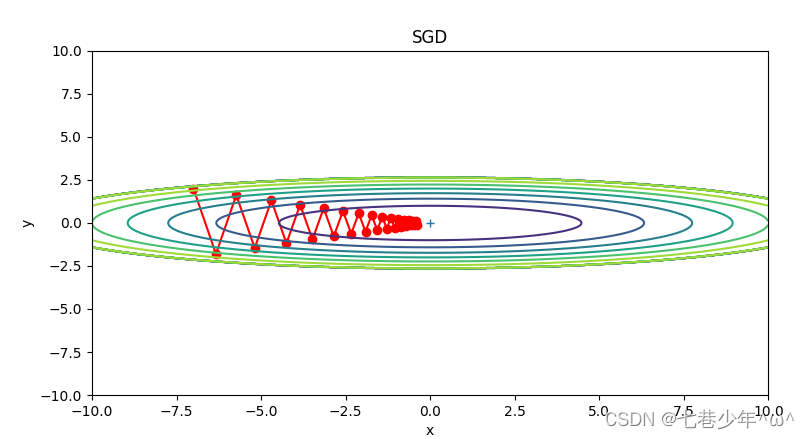

1.SGD

使用参数的梯度,沿着梯度方向更新参数,不断重复这个步骤多次,从而逐渐靠近最优参数,这个过程称为随机梯度下降法(SGD)

1).数学式:

- 参数说明:

①.W:待更新的权重参数

②.η:学习率

③.∂L/∂W:损失函数关于W的梯度

2).示例:

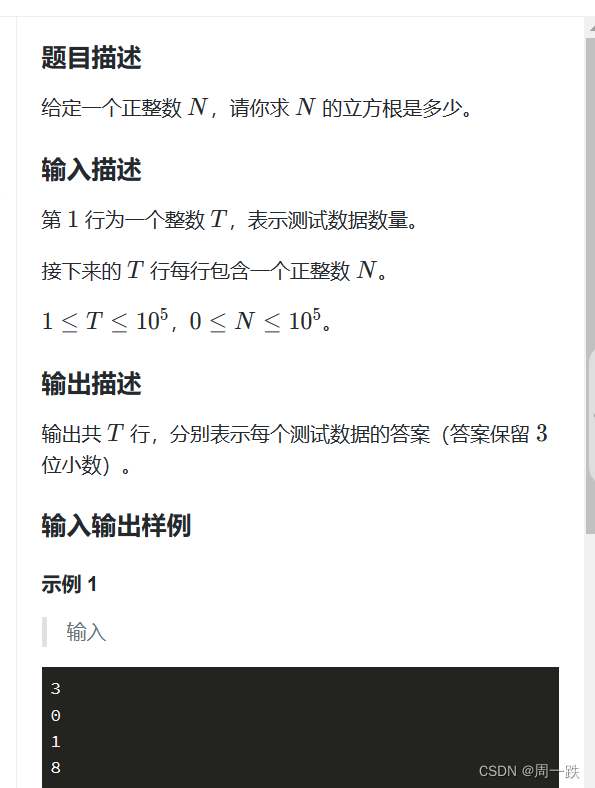

以 f(x,y)=1/20x2 + y2 为例:

-

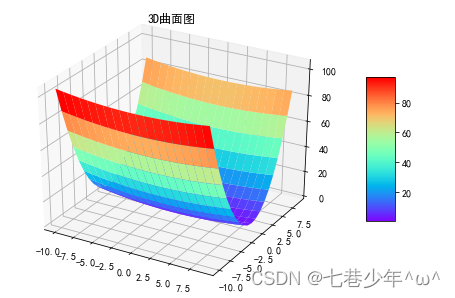

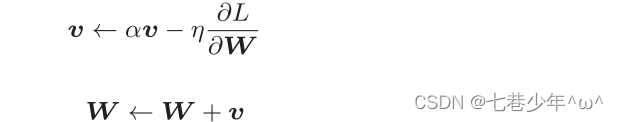

3D曲面图:

-

最优化的梯度更新路径图:

缺点:

如果函数的形状非均向,比如呈延伸状SGD,搜索路径就会非常低效。SGD低效的根本原因:

梯度的方向并没有指向最小值的方向。

3).代码实现:

class SGD:

def __init__(self, lr):

self.lr = lr

def update(self, params, grads):

for key in params.keys():

params[key] -= self.lr * grads[key]

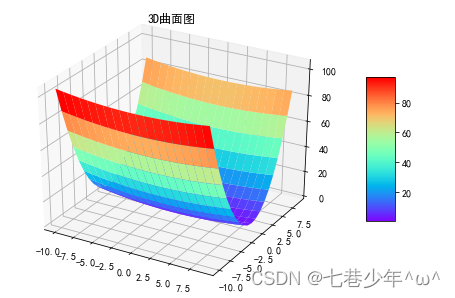

2.Momentum

Momentum可以解决SGD存在的问题:如果函数的形状非均向,搜索效率很低的情况。

1).数学式:

- 参数说明:

①.W:待更新的权重参数

②.η:学习率

③.∂L/∂W:损失函数关于W的梯度

④.v:对应物理上的速度,表示物体在梯度方向上的受力,在这个力的作用下,物体的速度增加这一物理量

⑤.α:对应物理上的地面摩擦力或空气阻力,α=0.9

2).示例:

以 f(x,y)=1/20x2 + y2 为例:

-

3D曲面图:

-

最优化的梯度更新路径图:

缺点:

在神经网络的学习中,η的值很重要,η值太小,学习花费更多时间;η值太大,学习发散不能正确进行。

3).代码实现:

class Momentum:

def __init__(self, lr, momentum):

self.lr = lr

self.momentum = momentum

self.v = None

def update(self, params, grads):

if self.v is None:

self.v = {}

for key, val in params.items():

self.v[key] = np.zeros_like(val)

for key in params.keys():

self.v[key] = self.momentum * self.v[key] - self.lr * grads[key]

params[key] += self.v[key]

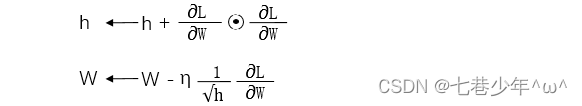

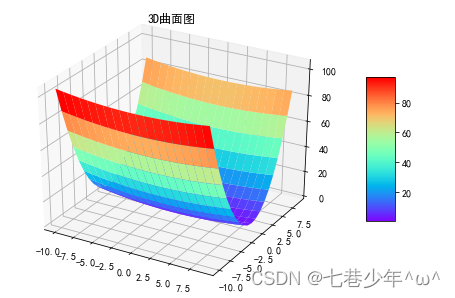

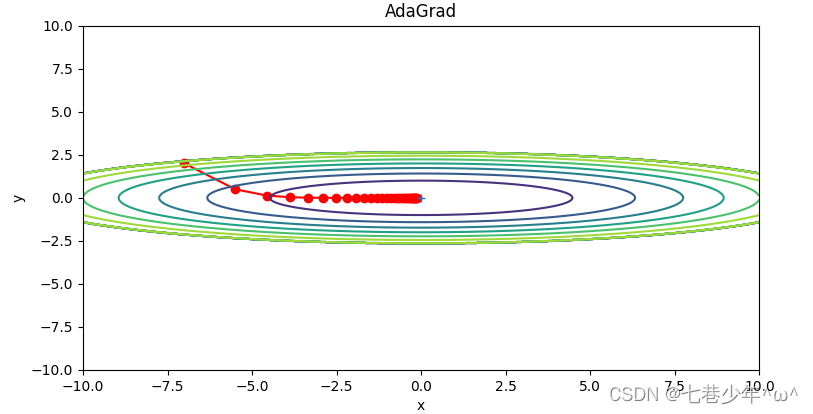

3.AdaGrad

AdaGrad方法可以解决Momentum存在的η难设置问题。AdaGrad方法在更新参数时,随着学习的进行,使学习率逐渐减小。

1).数学式:

- 参数说明:

①.W:待更新的权重参数

②.η:学习率

③.∂L/∂W:损失函数关于W的梯度

④.h:它保存了以前所有梯度值的平方和

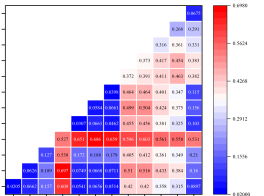

2).示例:

以 f(x,y)=1/20x2 + y2 为例:

-

3D曲面图:

-

最优化的梯度更新路径图:

图像描述:

函数的取值高效地向着最小值移动,由于y轴方向上的梯度较大,因此刚开始变动较大,但是后面会根据这个较大的变动按比例进行调整,减小更新的步伐。

3).代码实现:

class AdaGrad:

def __init__(self, lr):

self.lr = lr

self.h = None

def update(self, params, grads):

if self.h is None:

self.h = {}

for key, val in params.items():

self.h[key] = np.zeros_like(val)

for key in params.keys():

self.h[key] += grads[key] * grads[key]

params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)#加上1e-7是为了解决除数为0的情况

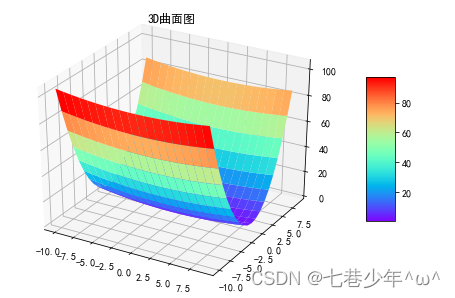

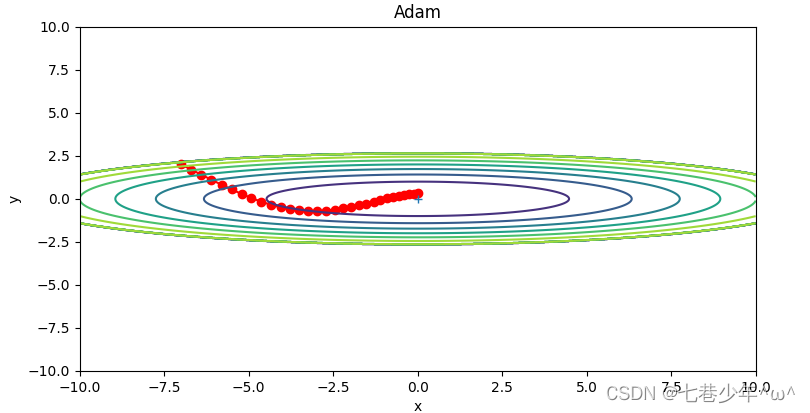

4.Adam

Adam是融合了Momentum与AdaGrad的方法。

1).示例:

以 f(x,y)=1/20x2 + y2 为例:

-

3D曲面图:

-

最优化的梯度更新路径图:

2).代码实现:

class Adam:

def __init__(self, lr, β1, β2):

self.lr = lr

self.β1 = β1

self.β2 = β2

self.iter = 0

self.h = None

self.v = None

def update(self, params, grads):

if self.h is None:

self.h, self.v = {}, {}

for key, val in params.items():

self.h[key] = np.zeros_like(val)

self.v[key] = np.zeros_like(val)

self.iter += 1

lr_t = self.lr * np.sqrt(1.0 - self.β2 ** self.iter) / (1.0 - self.β1 ** self.iter)

for key in params.keys():

self.h[key] += (1 - self.β1) * (grads[key] - self.h[key])

self.v[key] += (1 - self.β2) * (grads[key] ** 2 - self.v[key])

params[key] -= lr_t * self.h[key] / (np.sqrt(self.v[key]) + 1e-7) # 加上1e-7是为了解决除数为0的情况

5.各种最优化方法实现手写数字识别的完整代码:(直接替换上述类即可)

1).代码实现:

import numpy as np

import matplotlib.pyplot as plt

import sys, os

sys.path.append(os.pardir)

from dataset.mnist import load_mnist

from collections import OrderedDict

class Adam:

def __init__(self, lr, β1, β2):

self.lr = lr

self.β1 = β1

self.β2 = β2

self.iter = 0

self.h = None

self.v = None

def update(self, params, grads):

if self.h is None:

self.h, self.v = {}, {}

for key, val in params.items():

self.h[key] = np.zeros_like(val)

self.v[key] = np.zeros_like(val)

self.iter += 1

lr_t = self.lr * np.sqrt(1.0 - self.β2 ** self.iter) / (1.0 - self.β1 ** self.iter)

for key in params.keys():

self.h[key] += (1 - self.β1) * (grads[key] - self.h[key])

self.v[key] += (1 - self.β2) * (grads[key] ** 2 - self.v[key])

params[key] -= lr_t * self.h[key] / (np.sqrt(self.v[key]) + 1e-7) # 加上1e-7是为了解决除数为0的情况

class Affine:

def __init__(self, W, b):

self.W = W

self.b = b

self.x = None

self.original_x_shape = None

# 权重和偏置参数的导数

self.dW = None

self.db = None

# 向前传播

def forward(self, x):

self.original_x_shape = x.shape

x = x.reshape(x.shape[0], -1)

self.x = x

out = np.dot(self.x, self.W) + self.b

return out

# 反向传播

def backward(self, dout):

dx = np.dot(dout, self.W.T)

self.dW = np.dot(self.x.T, dout)

self.db = np.sum(dout, axis=0)

dx = dx.reshape(*self.original_x_shape) # 还原输入数据的形状(对应张量)

return dx

class ReLU:

def __init__(self):

self.mask = None

def forward(self, x):

self.mask = (x <= 0)

out = x.copy()

out[self.mask] = 0

return out

def backward(self, dout):

dout[self.mask] = 0

dx = dout

return dx

class SoftmaxWithLoss:

def __init__(self):

self.loss = None

self.y = None

self.t = None

# 输出层函数:softmax

def softmax(self, x):

if x.ndim == 2:

x = x.T

x = x - np.max(x, axis=0)

y = np.exp(x) / np.sum(np.exp(x), axis=0)

return y.T

x = x - np.max(x) # 溢出对策

y = np.exp(x) / np.sum(np.exp(x))

return y

# 误差函数:交叉熵误差

def cross_entropy_error(self, y, t):

if y.ndim == 1:

y = y.reshape(1, y.size)

t = t.reshape(1, t.size)

# 监督数据是one_hot_label的情况下,转换为正确解标签的索引

if t.size == y.size:

t = t.argmax(axis=1)

batch_size = y.shape[0]

return -np.sum(np.log(y[np.arange(batch_size), t] + 1e-7)) / batch_size

def forward(self, x, t):

self.t = t

self.y = self.softmax(x)

self.loss = self.cross_entropy_error(self.y, self.t)

return self.loss

def backward(self, dout=1):

batch_size = self.t.shape[0]

if self.t.size == self.y.size:

dx = (self.y - self.t) / batch_size

else:

dx = self.y.copy()

dx[np.arange(batch_size), self.t] -= 1

dx = dx / batch_size

return dx

class TwoLayerNet:

# 初始化

def __init__(self, input_size, hidden_size, output_size, weight_init_std=0.01):

# 初始化权重

self.params = {}

self.params['W1'] = weight_init_std * np.random.randn(input_size, hidden_size)

self.params['b1'] = np.zeros(hidden_size)

self.params['W2'] = weight_init_std * np.random.randn(hidden_size, output_size)

self.params['b2'] = np.zeros(output_size)

# 生成层

self.layers = OrderedDict()

self.layers['Affine1'] = Affine(self.params['W1'], self.params['b1'])

self.layers['ReLU'] = ReLU()

self.layers['Affine2'] = Affine(self.params['W2'], self.params['b2'])

self.lastLayer = SoftmaxWithLoss()

def predict(self, x):

for layer in self.layers.values():

x = layer.forward(x)

return x

def loss(self, x, t):

y = self.predict(x)

loss = self.lastLayer.forward(y, t)

return loss

def accuracy(self, x, t):

y = self.predict(x)

y = np.argmax(y, axis=1)

if t.ndim != 1: t = np.argmax(t, axis=1)

accuracy = np.sum(y == t) / float(t.shape[0])

return accuracy

# 微分函数

def numerical_gradient1(self, f, x):

h = 1e-4

grad = np.zeros_like(x)

it = np.nditer(x, flags=['multi_index'], op_flags=['readwrite'])

while not it.finished:

idx = it.multi_index

tmp_val = x[idx]

x[idx] = float(tmp_val) + h

fxh1 = f(x) # f(x+h)

x[idx] = tmp_val - h

fxh2 = f(x) # f(x-h)

grad[idx] = (fxh1 - fxh2) / (2 * h)

x[idx] = tmp_val # 还原值

it.iternext()

return grad

# 通过数值微分计算关于权重参数的梯度

def numerical_gradient(self, x, t):

loss_W = lambda W: self.loss(x, t)

grad = {}

grad['W1'] = self.numerical_gradient1(loss_W, self.params['W1'])

grad['b1'] = self.numerical_gradient1(loss_W, self.params['b1'])

grad['W2'] = self.numerical_gradient1(loss_W, self.params['W2'])

grad['b2'] = self.numerical_gradient1(loss_W, self.params['b2'])

return grad

# 通过误差反向传播法计算权重参数的梯度误差

def gradient(self, x, t):

# 正向传播

self.loss(x, t)

# 反向传播

dout = 1

dout = self.lastLayer.backward(dout)

layers = list(self.layers.values())

layers.reverse()

for layer in layers:

dout = layer.backward(dout)

# 设定

grads = {}

grads['W1'] = self.layers['Affine1'].dW

grads['b1'] = self.layers['Affine1'].db

grads['W2'] = self.layers['Affine2'].dW

grads['b2'] = self.layers['Affine2'].db

return grads

# 读入数据

def get_data():

(x_train, t_train), (x_test, t_test) = load_mnist(normalize=True, one_hot_label=True)

return (x_train, t_train), (x_test, t_test)

# 读入数据

(x_train, t_train), (x_test, t_test) = get_data()

network = TwoLayerNet(input_size=784, hidden_size=50, output_size=10)

# 超参数

iter_num = 10000

train_size = x_train.shape[0]

batch_size = 100

lr = 0.01

momentum = 0.9

β1 = 0.9

β2 = 0.999

iter_per_epoch = max(train_size / batch_size, 1)

train_loss_list = []

train_acc_list = []

test_acc_list = []

# 可替换的类

optimizer = Adam(lr, β1, β2)

for i in range(iter_num):

batch_mask = np.random.choice(train_size, batch_size)

x_batch = x_train[batch_mask]

t_batch = t_train[batch_mask]

grads = network.gradient(x_batch, t_batch)

params = network.params

optimizer.update(params, grads)

loss = network.loss(x_batch, t_batch)

train_loss_list.append(loss)

if i % iter_per_epoch == 0:

train_acc = network.accuracy(x_train, t_train)

train_acc_list.append(train_acc)

test_acc = network.accuracy(x_test, t_test)

test_acc_list.append(test_acc)

print('train_acc,test_acc|', str(train_acc) + ',' + str(test_acc))

# 绘制识别精度图像

plt.rcParams['font.sans-serif'] = ['SimHei'] # 解决中文乱码

plt.rcParams['axes.unicode_minus'] = False # 解决负号不显示的问题

plt.figure(figsize=(8, 4))

plt.subplot(1, 2, 1)

x_data = np.arange(0, len(train_acc_list))

plt.plot(x_data, train_acc_list, 'b')

plt.plot(x_data, test_acc_list, 'r')

plt.xlabel('epoch')

plt.ylabel('accuracy')

plt.ylim(0.0, 1.0)

plt.title('训练数据和测试数据的识别精度')

plt.legend(['train_acc', 'test_acc'])

plt.subplot(1, 2, 2)

x_data = np.arange(0, len(train_loss_list))

plt.plot(x_data, train_loss_list, 'g')

plt.xlabel('iters_num')

plt.ylabel('loss')

plt.title('损失函数')

plt.show()

6.各种最优化方法绘制梯度更新路径图的代码

1).代码实现:

import sys, os

sys.path.append(os.pardir) # 为了导入父目录的文件而进行的设定

import numpy as np

import matplotlib.pyplot as plt

from collections import OrderedDict

class SGD:

def __init__(self, lr=0.01):

self.lr = lr

def update(self, params, grads):

for key in params.keys():

params[key] -= self.lr * grads[key]

class Momentum:

def __init__(self, lr=0.01, momentum=0.9):

self.lr = lr

self.momentum = momentum

self.v = None

def update(self, params, grads):

if self.v is None:

self.v = {}

for key, val in params.items():

self.v[key] = np.zeros_like(val)

for key in params.keys():

self.v[key] = self.momentum * self.v[key] - self.lr * grads[key]

params[key] += self.v[key]

class AdaGrad:

def __init__(self, lr=0.01):

self.lr = lr

self.h = None

def update(self, params, grads):

if self.h is None:

self.h = {}

for key, val in params.items():

self.h[key] = np.zeros_like(val)

for key in params.keys():

self.h[key] += grads[key] * grads[key]

params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)

class Adam:

def __init__(self, lr=0.001, beta1=0.9, beta2=0.999):

self.lr = lr

self.beta1 = beta1

self.beta2 = beta2

self.iter = 0

self.m = None

self.v = None

def update(self, params, grads):

if self.m is None:

self.m, self.v = {}, {}

for key, val in params.items():

self.m[key] = np.zeros_like(val)

self.v[key] = np.zeros_like(val)

self.iter += 1

lr_t = self.lr * np.sqrt(1.0 - self.beta2 ** self.iter) / (1.0 - self.beta1 ** self.iter)

for key in params.keys():

self.m[key] += (1 - self.beta1) * (grads[key] - self.m[key])

self.v[key] += (1 - self.beta2) * (grads[key] ** 2 - self.v[key])

params[key] -= lr_t * self.m[key] / (np.sqrt(self.v[key]) + 1e-7)

def f(x, y):

return x ** 2 / 20.0 + y ** 2

def df(x, y):

return x / 10.0, 2.0 * y

init_pos = (-7.0, 2.0)

params = {}

params['x'], params['y'] = init_pos[0], init_pos[1]

grads = {}

grads['x'], grads['y'] = 0, 0

optimizers = OrderedDict()

optimizers["SGD"] = SGD(lr=0.95)

optimizers["Momentum"] = Momentum(lr=0.1)

optimizers["AdaGrad"] = AdaGrad(lr=1.5)

optimizers["Adam"] = Adam(lr=0.3)

idx = 1

plt.figure(figsize=(12, 10))

for key in optimizers:

optimizer = optimizers[key]

x_history = []

y_history = []

params['x'], params['y'] = init_pos[0], init_pos[1]

for i in range(30):

x_history.append(params['x'])

y_history.append(params['y'])

grads['x'], grads['y'] = df(params['x'], params['y'])

optimizer.update(params, grads)

x = np.arange(-10, 10, 0.01)

y = np.arange(-5, 5, 0.01)

X, Y = np.meshgrid(x, y)

Z = f(X, Y)

# for simple contour line

mask = Z > 7

Z[mask] = 0

# plot

plt.subplot(2, 2, idx)

idx += 1

plt.plot(x_history, y_history, 'o-', color="red")

plt.contour(X, Y, Z)

plt.ylim(-10, 10)

plt.xlim(-10, 10)

plt.plot(0, 0, '+')

plt.title(key)

plt.xlabel("x")

plt.ylabel("y")

plt.show()