A/B Testing Engineering for LLM Applications, 2026: How to Rigorously Evaluate Prompt and Model Changes

news2026/5/5 6:54:17

## Intuition-Driven Optimization Is a Trap

"I feel this prompt is better written" is a dangerous sentence in AI application development. LLM output is stochastic, human perception is biased, and small-sample tests are noisy. When intuition tells you a revised prompt works better, you have quite possibly tested only a handful of examples that happen to favor the new version.

In reality, the new prompt may be better on some types of questions and worse on others. Without a rigorous A/B testing system, you will never detect that kind of change.

This article builds a complete LLM A/B testing system from an engineering perspective.

## 1. Unique Challenges of LLM A/B Testing

The challenges of traditional A/B testing (statistics, traffic allocation, significance) all apply to LLMs. LLMs add several of their own:

**Output is text, not a click-through rate**: traditional A/B tests compare numeric metrics such as CTR and conversion rate. LLM output is natural language, so you first have to define what a "better" output is.

**Evaluation itself needs an LLM**: judging the quality of LLM output often requires another LLM to score it. How do you control evaluator bias?

**Strong context dependence**: the same prompt can perform very differently across input types.

**Asymmetric latency trade-offs**: model A is 200 ms faster than model B but 3% less accurate. How do you quantify that trade-off?

## 2. Building the Evaluation Metrics

### 2.1 Automatic Metrics

```python
from dataclasses import dataclass
from typing import List, Dict, Optional
import json
import re

import numpy as np


@dataclass
class EvaluationResult:
    """Result of a single evaluation."""
    response_id: str
    variant: str  # "control" or "treatment"
    input_text: str
    output_text: str
    latency_ms: float
    token_count: int
    cost_usd: float
    # Automatic metrics
    factual_accuracy: Optional[float] = None   # factual accuracy
    format_compliance: Optional[float] = None  # format compliance rate
    length_score: Optional[float] = None       # length appropriateness
    # LLM-judged metrics
    llm_quality_score: Optional[float] = None  # overall quality, 0-10
    llm_helpfulness: Optional[float] = None    # helpfulness, 0-10
    llm_accuracy: Optional[float] = None       # accuracy, 0-10


class AutomaticMetrics:
    """Automatic evaluation metrics."""

    @staticmethod
    def format_compliance_rate(output: str, expected_format: str) -> float:
        """Check whether the output matches the expected format.

        expected_format: "json", "markdown", "list", or "numbered_list"
        """
        if expected_format == "json":
            try:
                json.loads(output.strip())
                return 1.0
            except json.JSONDecodeError:
                # Partial credit if a JSON-like block is embedded in the text
                if re.search(r"\{.*\}", output, re.DOTALL):
                    return 0.5
                return 0.0
        elif expected_format == "markdown":
            has_headers = bool(re.search(r"^#{1,4}\s", output, re.MULTILINE))
            has_code = bool(re.search(r"`{3}", output))  # a fenced code block
            has_emphasis = bool(re.search(r"\*\*|\*|__", output))
            return (has_headers + has_code + has_emphasis) / 3
        elif expected_format == "numbered_list":
            items = re.findall(r"^\d+\.\s", output, re.MULTILINE)
            return min(1.0, len(items) / 3)  # three or more items counts as compliant
        return 0.5  # neutral score when the format cannot be judged

    @staticmethod
    def length_appropriateness(output: str, target_length: int,
                               tolerance: float = 0.3) -> float:
        """Score whether the output length is appropriate."""
        actual = len(output)
        ratio = actual / target_length
        if abs(ratio - 1.0) <= tolerance:
            return 1.0  # within tolerance
        elif ratio < (1 - tolerance):
            return ratio / (1 - tolerance)  # too short
        else:
            # Too long. Uses (1 + tolerance) so the score is continuous at the
            # upper boundary; the source text had (1 - tolerance) here.
            return (1 + tolerance) / ratio

    @staticmethod
    def contains_forbidden_phrases(output: str, forbidden: List[str]) -> float:
        """Return 1.0 when no forbidden phrase appears, lower as more appear."""
        output_lower = output.lower()
        violations = sum(1 for phrase in forbidden if phrase.lower() in output_lower)
        return 1.0 - (violations / len(forbidden)) if forbidden else 1.0
```

### 2.2 LLM-as-Judge Evaluation

Using an LLM to evaluate LLM output is currently the most practical approach, but the evaluation prompt needs careful design:

```python
import json
import random

from anthropic import Anthropic

evaluator_client = Anthropic()


def llm_judge_single(
    question: str,
    reference_answer: Optional[str],
    response_a: str,
    response_b: str,
    criteria: List[str],
) -> Dict:
    """Use an LLM as a judge to compare two responses.

    Uses pairwise comparison rather than absolute scoring to reduce bias.
    """
    # To reduce position bias, randomly swap which response is shown first.
    # The swap must happen before the prompt is built so that the positions
    # really change.
    swapped = random.random() < 0.5
    first, second = (response_b, response_a) if swapped else (response_a, response_b)

    criteria_text = "\n".join(f"{i + 1}. {c}" for i, c in enumerate(criteria))
    score_schema = ", ".join(f'"{c[:20]}": 0-10' for c in criteria)
    reference_block = f"Reference answer: {reference_answer}\n" if reference_answer else ""

    prompt = f"""You are a strict, impartial evaluator of AI answer quality.

Question: {question}
{reference_block}
Answer A: {first}

Answer B: {second}

Evaluation criteria:
{criteria_text}

Output the evaluation strictly in the following JSON format:
{{
  "winner": "A" or "B" or "tie",
  "confidence": a float between 0 and 1 (1 = extremely confident, 0 = cannot judge),
  "a_scores": {{{score_schema}}},
  "b_scores": {{{score_schema}}},
  "reasoning": "brief justification, 50 words or fewer"
}}

Evaluation rules:
- Judge quality only; do not consider stylistic preference
- If the two are close in quality (difference < 1 point), return "tie"
- You must give a concrete reason for the judgment"""

    response = evaluator_client.messages.create(
        model="claude-4-sonnet-20260101",  # model ID as given in the source
        max_tokens=1024,
        messages=[{"role": "user", "content": prompt}],
    )
    result = json.loads(response.content[0].text)

    # If the order was swapped, map the verdict and scores back
    if swapped:
        if result["winner"] in ("A", "B"):
            result["winner"] = "B" if result["winner"] == "A" else "A"
        result["a_scores"], result["b_scores"] = (
            result.get("b_scores", {}),
            result.get("a_scores", {}),
        )
    return result


def batch_llm_judge(
    test_cases: List[Dict],
    control_responses: List[str],
    treatment_responses: List[str],
    criteria: List[str],
    sample_size: int = 50,  # full evaluation is not required
) -> Dict:
    """Batch LLM evaluation. A = control, B = treatment."""
    # Randomly sample when there are more cases than sample_size
    indices = list(range(len(test_cases)))
    if len(indices) > sample_size:
        indices = random.sample(indices, sample_size)

    wins = {"A": 0, "B": 0, "tie": 0}
    all_scores = {"A": [], "B": []}

    for i in indices:
        result = llm_judge_single(
            question=test_cases[i]["question"],
            reference_answer=test_cases[i].get("reference"),
            response_a=control_responses[i],
            response_b=treatment_responses[i],
            criteria=criteria,
        )
        wins[result["winner"]] += 1

        # Collect per-criterion scores
        for crit in criteria:
            crit_key = crit[:20]
            if crit_key in result.get("a_scores", {}):
                all_scores["A"].append(result["a_scores"][crit_key])
            if crit_key in result.get("b_scores", {}):
                all_scores["B"].append(result["b_scores"][crit_key])

    total = sum(wins.values())
    return {
        "control_wins": wins["A"],
        "treatment_wins": wins["B"],
        "ties": wins["tie"],
        "control_win_rate": wins["A"] / total,
        "treatment_win_rate": wins["B"] / total,
        "tie_rate": wins["tie"] / total,
        "control_avg_score": np.mean(all_scores["A"]) if all_scores["A"] else None,
        "treatment_avg_score": np.mean(all_scores["B"]) if all_scores["B"] else None,
        "sample_size": total,
    }
```

## 3. Statistical Significance Testing

"Treatment won 52% versus control's 48%." Does that mean anything? It takes a statistical test to tell:

```python
import numpy as np
from scipy.stats import binomtest
from statsmodels.stats.power import NormalIndPower


class StatisticalAnalyzer:
    """Statistical analysis for A/B tests."""

    @staticmethod
    def proportion_z_test(
        control_wins: int,
        treatment_wins: int,
        ties: int,
        alpha: float = 0.05,
    ) -> Dict:
        """Test whether the treatment win rate is significantly above 50%.

        Ties are excluded. Despite the method name, this runs an exact
        one-sided binomial test rather than a z-test.
        """
        total = control_wins + treatment_wins
        if total == 0:
            return {"significant": False, "error": "no decisive comparisons"}

        # One-sided test: is the treatment win rate greater than 0.5?
        result = binomtest(treatment_wins, total, 0.5, alternative="greater")
        treatment_win_rate = treatment_wins / total

        return {
            "significant": result.pvalue < alpha,
            "p_value": result.pvalue,
            "treatment_win_rate": treatment_win_rate,
            "control_win_rate": control_wins / total,
            "confidence_level": (1 - alpha) * 100,
            "recommendation": (
                "Adopt the treatment" if result.pvalue < alpha
                else "Difference not significant; keep the status quo"
            ),
        }

    @staticmethod
    def compute_required_sample_size(
        baseline_rate: float = 0.5,
        minimum_detectable_effect: float = 0.05,
        alpha: float = 0.05,
        power: float = 0.8,
    ) -> int:
        """Compute the number of decisive (non-tie) comparisons required.

        baseline_rate: baseline win rate, normally 0.5
        minimum_detectable_effect: smallest effect to detect, e.g. 0.05
            for a 5-percentage-point lift in win rate
        """
        effect_size = minimum_detectable_effect / np.sqrt(
            baseline_rate * (1 - baseline_rate)
        )
        analysis = NormalIndPower()
        n = analysis.solve_power(
            effect_size=effect_size,
            alpha=alpha,
            power=power,
            ratio=0,               # one-sample test, matching the win-rate test above
            alternative="larger",  # one-sided, matching alternative="greater"
        )
        return int(np.ceil(n))
```

## 4. The Complete A/B Testing Pipeline

```python
import concurrent.futures
import random
import time


class LLMExperiment:
    """Manager for a complete LLM A/B test."""

    def __init__(self, experiment_name: str):
        self.name = experiment_name
        self.results = []

    def run_experiment(
        self,
        test_cases: List[Dict],
        control_fn,    # control: original prompt / model call
        treatment_fn,  # treatment: new prompt / model call
        evaluation_criteria: List[str],
        sample_size: Optional[int] = None,
    ) -> Dict:
        """Run the full experiment."""
        if sample_size and sample_size < len(test_cases):
            test_cases = random.sample(test_cases, sample_size)

        print(f"Experiment started: {self.name}")
        print(f"Sample count: {len(test_cases)}")

        # Run control and treatment in parallel
        with concurrent.futures.ThreadPoolExecutor(max_workers=5) as executor:
            control_futures = [
                executor.submit(self._timed_call, control_fn, tc)
                for tc in test_cases
            ]
            treatment_futures = [
                executor.submit(self._timed_call, treatment_fn, tc)
                for tc in test_cases
            ]
            control_results = [f.result() for f in control_futures]
            treatment_results = [f.result() for f in treatment_futures]

        control_responses = [r["response"] for r in control_results]
        treatment_responses = [r["response"] for r in treatment_results]
        control_latencies = [r["latency"] for r in control_results]
        treatment_latencies = [r["latency"] for r in treatment_results]

        # LLM evaluation
        print("Running LLM quality evaluation...")
        quality_results = batch_llm_judge(
            test_cases, control_responses, treatment_responses,
            evaluation_criteria,
        )

        # Statistical test. Uses the judge's raw counts; rebuilding counts from
        # win rates times len(test_cases) would overstate them whenever the
        # judge evaluated only a subsample.
        stats_result = StatisticalAnalyzer.proportion_z_test(
            quality_results["control_wins"],
            quality_results["treatment_wins"],
            quality_results["ties"],
        )

        # Summary report
        report = {
            "experiment_name": self.name,
            "sample_size": len(test_cases),
            "quality": quality_results,
            "statistics": stats_result,
            "performance": {
                "control_avg_latency_ms": np.mean(control_latencies),
                "treatment_avg_latency_ms": np.mean(treatment_latencies),
                "latency_difference_ms": (
                    np.mean(treatment_latencies) - np.mean(control_latencies)
                ),
            },
        }
        self._print_report(report)
        return report

    def _timed_call(self, fn, test_case: Dict) -> Dict:
        start = time.time()
        response = fn(test_case)
        latency = (time.time() - start) * 1000  # milliseconds
        return {"response": response, "latency": latency}

    def _print_report(self, report: Dict):
        print("\n" + "=" * 50)
        print(f"Experiment result: {report['experiment_name']}")
        print("=" * 50)

        q = report["quality"]
        print(f"Control win rate: {q['control_win_rate']:.1%}")
        print(f"Treatment win rate: {q['treatment_win_rate']:.1%}")
        print(f"Tie rate: {q['tie_rate']:.1%}")

        s = report["statistics"]
        print(f"\nStatistically significant: {'yes' if s['significant'] else 'no'}")
        if "p_value" in s:
            print(f"P-value: {s['p_value']:.4f}")
            print(f"Recommendation: {s['recommendation']}")

        p = report["performance"]
        print(f"\nLatency: control {p['control_avg_latency_ms']:.0f}ms vs "
              f"treatment {p['treatment_avg_latency_ms']:.0f}ms")
```

## 5. Practical Advice

**Building test cases**: do not use random text; use real user input. Sample from production logs and make sure every usage scenario is covered.

**Choosing the evaluator**: use a model stronger than the one under test as the evaluator (evaluator model ≥ tested model). Otherwise the evaluation results are unreliable.

**Minimum sample size**: compute the required number of samples with the sample-size formula first. Do not pick 100 or 1,000 cases off the top of your head.

**Avoid confounded changes**: do not test several variables at once. Change one dimension per experiment (prompt wording OR model OR temperature); otherwise you cannot tell which variable caused the effect.

## Conclusion

Optimizing an LLM application should rest on data, not on feel. Building a rigorous A/B testing system takes time, but it is a necessary cost of building a high-quality AI product. An AI team without a testing system is flying blind: nothing goes wrong while luck holds, and when it runs out the team discovers that an "optimization" has dragged product quality down.
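To make the minimum-sample-size advice from Section 5 concrete, here is a minimal, dependency-free sketch. It assumes the one-sided win-rate test from Section 3 and uses the standard normal-approximation formula for a one-sample proportion test. The function names `required_decisive_pairs` and `required_total_pairs`, and the tie-rate adjustment, are illustrative assumptions rather than part of the article's code.

```python
import math
from statistics import NormalDist


def required_decisive_pairs(p0: float = 0.5, p1: float = 0.55,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Non-tie comparisons needed for a one-sided, one-sample proportion test.

    p0 is the win rate under the null hypothesis and p1 is the smallest true
    treatment win rate we want to detect. Normal approximation, hypothetical
    helper for illustration only.
    """
    if not 0 < p0 < p1 < 1:
        raise ValueError("expected 0 < p0 < p1 < 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha)  # one-sided critical value
    z_power = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(p0 * (1 - p0))
                 + z_power * math.sqrt(p1 * (1 - p1)))
    return math.ceil((numerator / (p1 - p0)) ** 2)


def required_total_pairs(decisive_pairs: int, expected_tie_rate: float) -> int:
    """Inflate the count, since tied verdicts are dropped before the test."""
    if not 0 <= expected_tie_rate < 1:
        raise ValueError("expected_tie_rate must be in [0, 1)")
    return math.ceil(decisive_pairs / (1 - expected_tie_rate))


if __name__ == "__main__":
    n = required_decisive_pairs()  # detect a 55% win rate against 50%
    print("decisive comparisons:", n)
    print("total pairs at a 20% tie rate:", required_total_pairs(n, 0.2))
```

Under these assumptions, detecting a 55% win rate takes roughly 600 decisive comparisons, far more than the default `sample_size` of 50 in `batch_llm_judge`. A 50-case run can only be expected to detect much larger differences.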

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如若转载,请注明出处:http://www.coloradmin.cn/o/2584194.html

如若内容造成侵权/违法违规/事实不符,请联系多彩编程网进行投诉反馈,一经查实,立即删除!相关文章

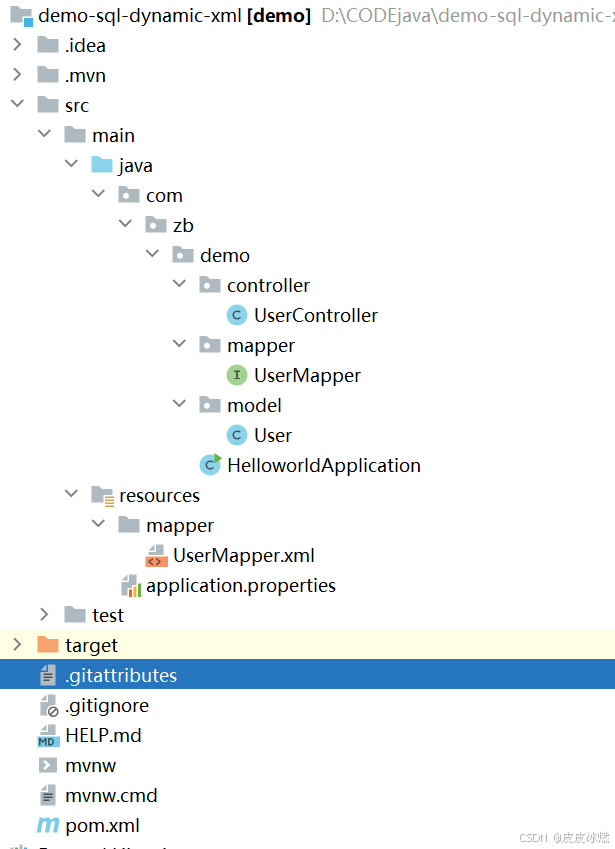

SpringBoot-17-MyBatis动态SQL标签之常用标签

文章目录 1 代码1.1 实体User.java1.2 接口UserMapper.java1.3 映射UserMapper.xml1.3.1 标签if1.3.2 标签if和where1.3.3 标签choose和when和otherwise1.4 UserController.java2 常用动态SQL标签2.1 标签set2.1.1 UserMapper.java2.1.2 UserMapper.xml2.1.3 UserController.ja…

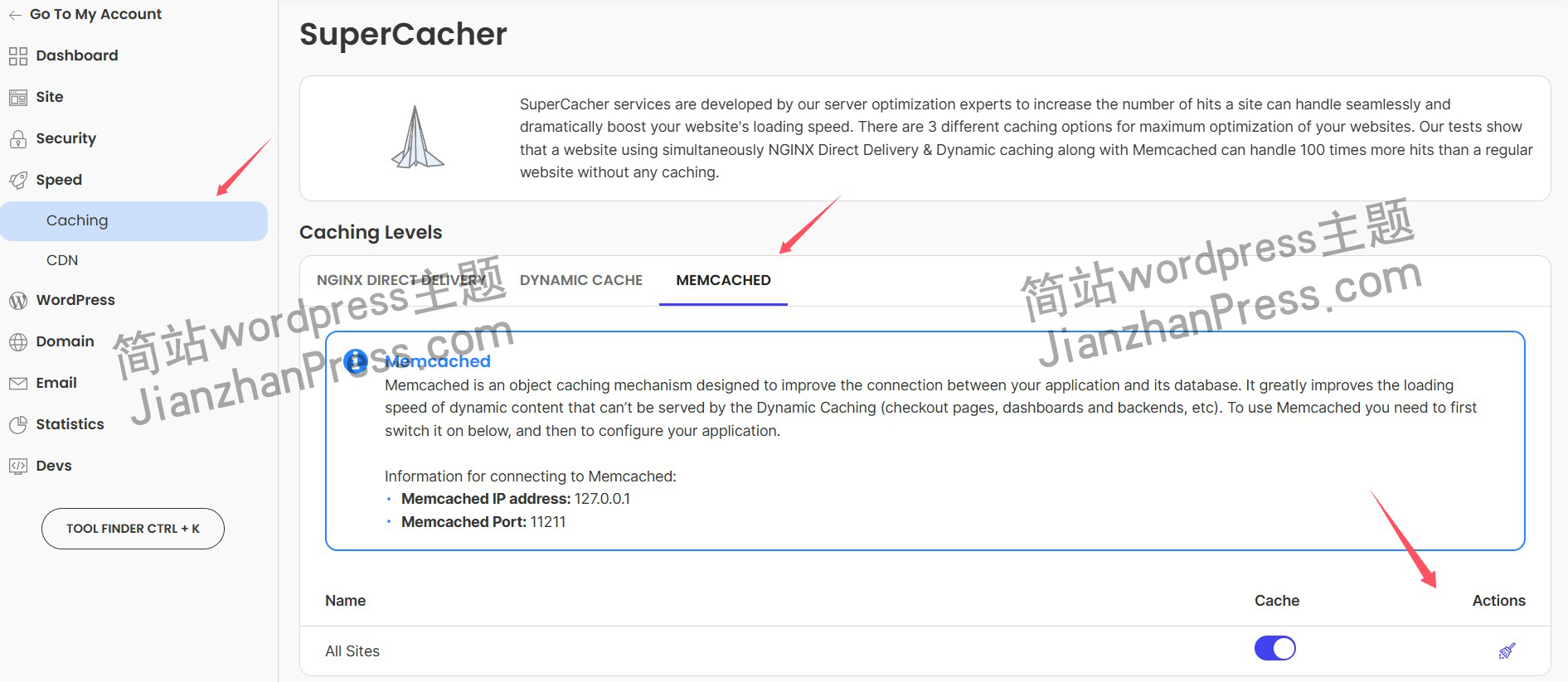

wordpress后台更新后 前端没变化的解决方法

使用siteground主机的wordpress网站,会出现更新了网站内容和修改了php模板文件、js文件、css文件、图片文件后,网站没有变化的情况。

不熟悉siteground主机的新手,遇到这个问题,就很抓狂,明明是哪都没操作错误&#x…

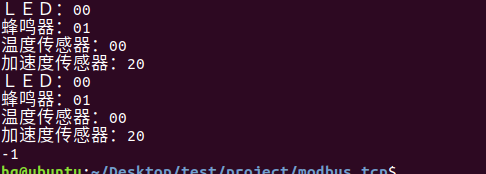

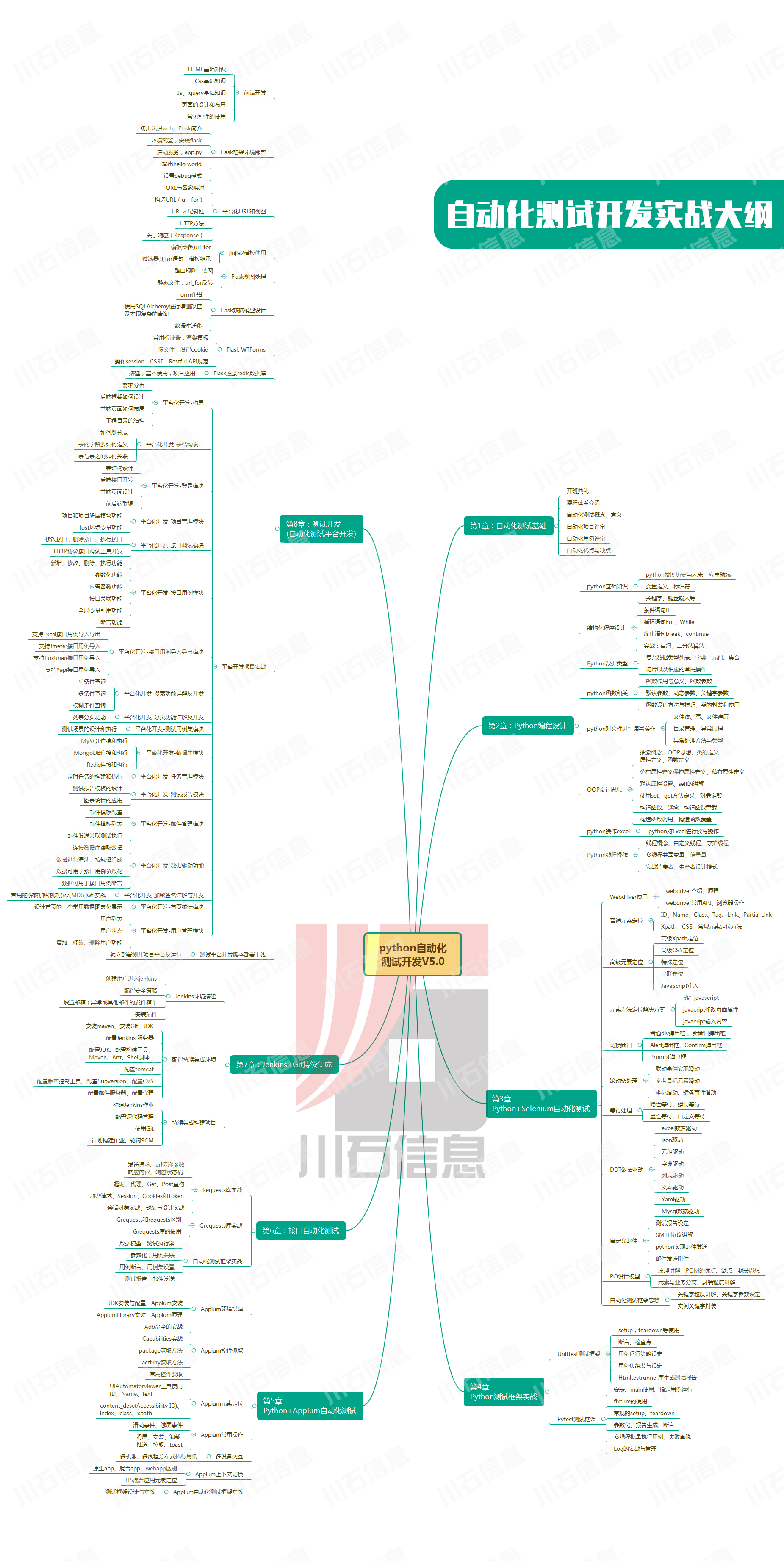

网络编程(Modbus进阶)

思维导图 Modbus RTU(先学一点理论)

概念 Modbus RTU 是工业自动化领域 最广泛应用的串行通信协议,由 Modicon 公司(现施耐德电气)于 1979 年推出。它以 高效率、强健性、易实现的特点成为工业控制系统的通信标准。 包…

UE5 学习系列(二)用户操作界面及介绍

这篇博客是 UE5 学习系列博客的第二篇,在第一篇的基础上展开这篇内容。博客参考的 B 站视频资料和第一篇的链接如下:

【Note】:如果你已经完成安装等操作,可以只执行第一篇博客中 2. 新建一个空白游戏项目 章节操作,重…

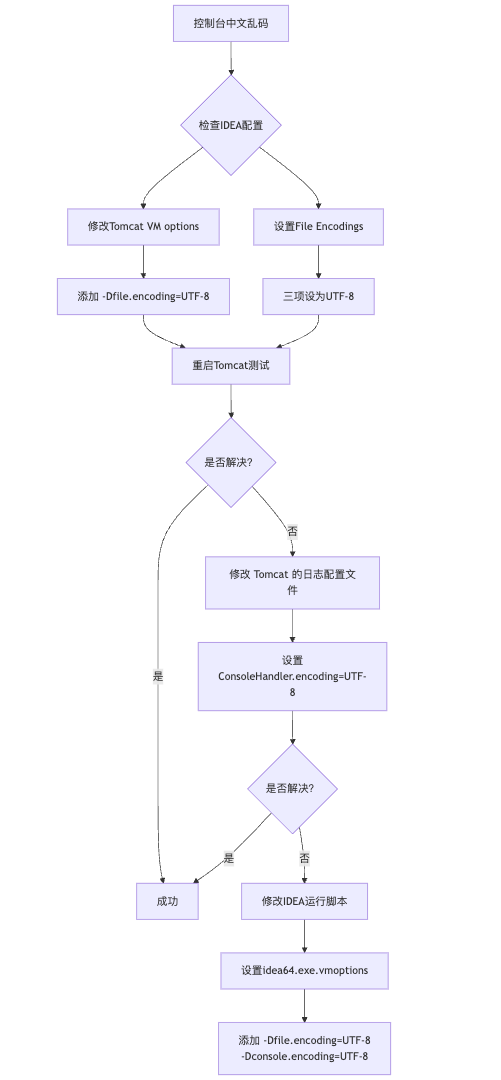

IDEA运行Tomcat出现乱码问题解决汇总

最近正值期末周,有很多同学在写期末Java web作业时,运行tomcat出现乱码问题,经过多次解决与研究,我做了如下整理:

原因:

IDEA本身编码与tomcat的编码与Windows编码不同导致,Windows 系统控制台…

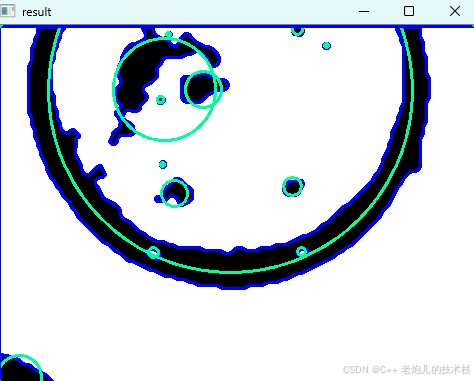

利用最小二乘法找圆心和半径

#include <iostream>

#include <vector>

#include <cmath>

#include <Eigen/Dense> // 需安装Eigen库用于矩阵运算 // 定义点结构

struct Point { double x, y; Point(double x_, double y_) : x(x_), y(y_) {}

}; // 最小二乘法求圆心和半径 …

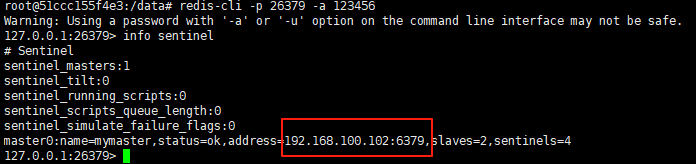

使用docker在3台服务器上搭建基于redis 6.x的一主两从三台均是哨兵模式

一、环境及版本说明

如果服务器已经安装了docker,则忽略此步骤,如果没有安装,则可以按照一下方式安装: 1. 在线安装(有互联网环境): 请看我这篇文章 传送阵>> 点我查看 2. 离线安装(内网环境):请看我这篇文章 传送阵>> 点我查看

说明:假设每台服务器已…

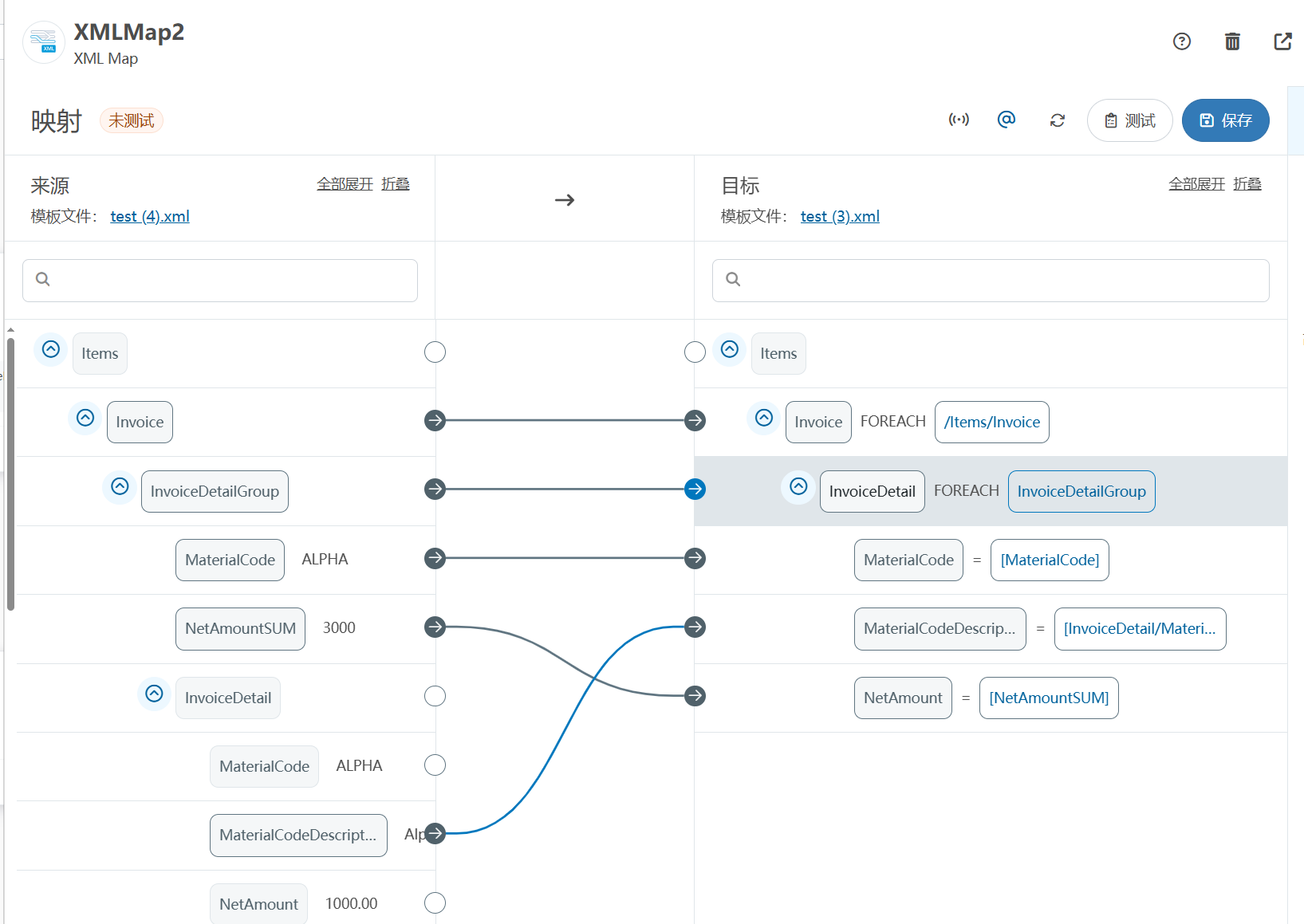

XML Group端口详解

在XML数据映射过程中,经常需要对数据进行分组聚合操作。例如,当处理包含多个物料明细的XML文件时,可能需要将相同物料号的明细归为一组,或对相同物料号的数量进行求和计算。传统实现方式通常需要编写脚本代码,增加了开…

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器的上位机配置操作说明

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器专为工业环境精心打造,完美适配AGV和无人叉车。同时,集成以太网与语音合成技术,为各类高级系统(如MES、调度系统、库位管理、立库等)提供高效便捷的语音交互体验。

L…

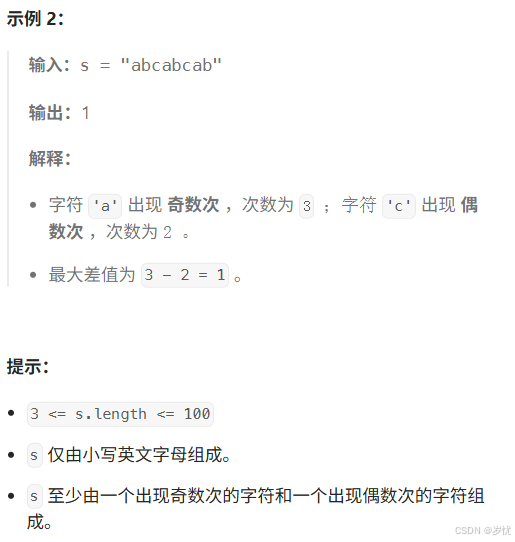

(LeetCode 每日一题) 3442. 奇偶频次间的最大差值 I (哈希、字符串)

题目:3442. 奇偶频次间的最大差值 I 思路 :哈希,时间复杂度0(n)。 用哈希表来记录每个字符串中字符的分布情况,哈希表这里用数组即可实现。

C版本:

class Solution {

public:int maxDifference(string s) {int a[26]…

【大模型RAG】拍照搜题技术架构速览:三层管道、两级检索、兜底大模型

摘要

拍照搜题系统采用“三层管道(多模态 OCR → 语义检索 → 答案渲染)、两级检索(倒排 BM25 向量 HNSW)并以大语言模型兜底”的整体框架: 多模态 OCR 层 将题目图片经过超分、去噪、倾斜校正后,分别用…

【Axure高保真原型】引导弹窗

今天和大家中分享引导弹窗的原型模板,载入页面后,会显示引导弹窗,适用于引导用户使用页面,点击完成后,会显示下一个引导弹窗,直至最后一个引导弹窗完成后进入首页。具体效果可以点击下方视频观看或打开下方…

接口测试中缓存处理策略

在接口测试中,缓存处理策略是一个关键环节,直接影响测试结果的准确性和可靠性。合理的缓存处理策略能够确保测试环境的一致性,避免因缓存数据导致的测试偏差。以下是接口测试中常见的缓存处理策略及其详细说明:

一、缓存处理的核…

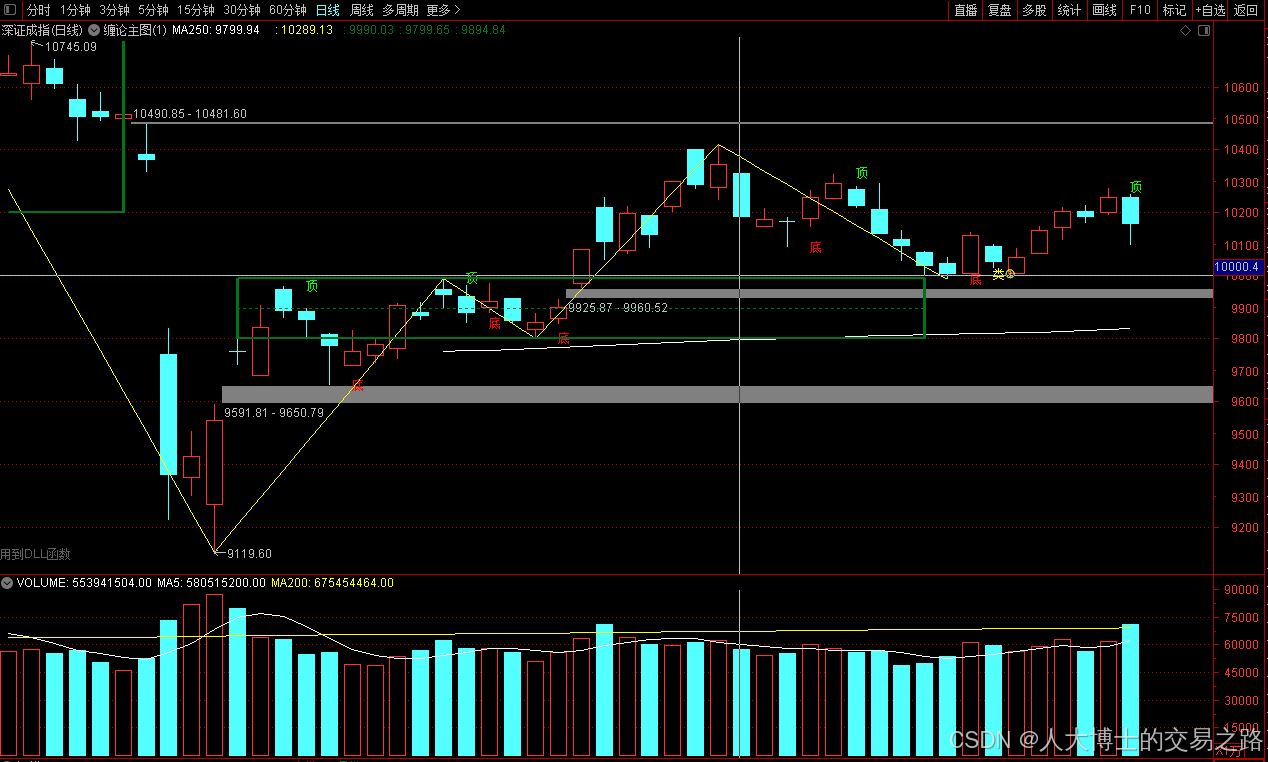

龙虎榜——20250610

上证指数放量收阴线,个股多数下跌,盘中受消息影响大幅波动。 深证指数放量收阴线形成顶分型,指数短线有调整的需求,大概需要一两天。 2025年6月10日龙虎榜行业方向分析 1. 金融科技

代表标的:御银股份、雄帝科技

驱动…

观成科技:隐蔽隧道工具Ligolo-ng加密流量分析

1.工具介绍

Ligolo-ng是一款由go编写的高效隧道工具,该工具基于TUN接口实现其功能,利用反向TCP/TLS连接建立一条隐蔽的通信信道,支持使用Let’s Encrypt自动生成证书。Ligolo-ng的通信隐蔽性体现在其支持多种连接方式,适应复杂网…

铭豹扩展坞 USB转网口 突然无法识别解决方法

当 USB 转网口扩展坞在一台笔记本上无法识别,但在其他电脑上正常工作时,问题通常出在笔记本自身或其与扩展坞的兼容性上。以下是系统化的定位思路和排查步骤,帮助你快速找到故障原因:

背景:

一个M-pard(铭豹)扩展坞的网卡突然无法识别了,扩展出来的三个USB接口正常。…

未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?

编辑:陈萍萍的公主一点人工一点智能 未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?RWM通过双自回归机制有效解决了复合误差、部分可观测性和随机动力学等关键挑战,在不依赖领域特定归纳偏见的条件下实现了卓越的预测准…

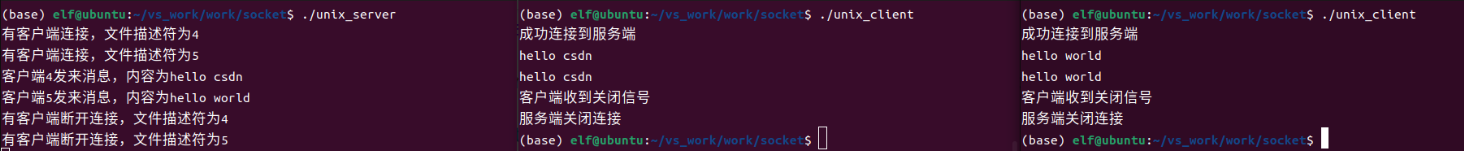

Linux应用开发之网络套接字编程(实例篇)

服务端与客户端单连接

服务端代码

#include <sys/socket.h>

#include <sys/types.h>

#include <netinet/in.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <arpa/inet.h>

#include <pthread.h>

…

华为云AI开发平台ModelArts

华为云ModelArts:重塑AI开发流程的“智能引擎”与“创新加速器”!

在人工智能浪潮席卷全球的2025年,企业拥抱AI的意愿空前高涨,但技术门槛高、流程复杂、资源投入巨大的现实,却让许多创新构想止步于实验室。数据科学家…

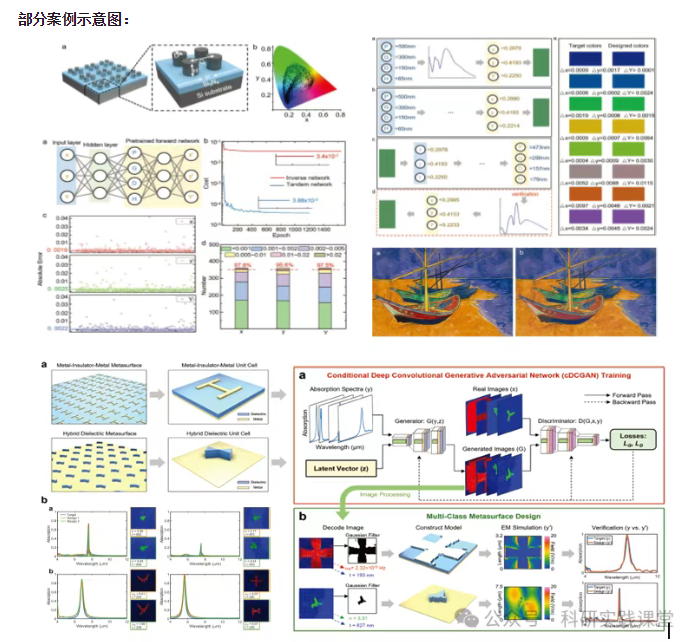

深度学习在微纳光子学中的应用

深度学习在微纳光子学中的主要应用方向

深度学习与微纳光子学的结合主要集中在以下几个方向:

逆向设计 通过神经网络快速预测微纳结构的光学响应,替代传统耗时的数值模拟方法。例如设计超表面、光子晶体等结构。

特征提取与优化 从复杂的光学数据中自…