Implementing skip-gram in Python: an example

news2026/4/2 19:57:50

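What is skip-gram?

In one sentence: use the center word to predict the words around it. It is one of the two training architectures in Word2Vec (the other is CBOW) and is widely used.

Example

1. Install dependencies

```shell
pip install matplotlib  # other dependencies such as torch were already installed
```

The script also imports numpy and scikit-learn (for PCA), so those must be installed as well.

2. Create the Python file skip_gram_demo.py

Code

```python
import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim
import matplotlib.pyplot as plt
from sklearn.decomposition import PCA

# 1. Data preparation and preprocessing
# A tiny toy corpus
corpus = """
deep learning is powerful
machine learning is a subset of artificial intelligence
deep learning models are inspired by the brain
natural language processing uses deep learning
"""

# Text cleaning and tokenization
words = corpus.lower().split()

# Build the vocabulary (word <-> index); set() order varies between runs
vocab = list(set(words))
word_to_idx = {w: i for i, w in enumerate(vocab)}
idx_to_word = {i: w for i, w in enumerate(vocab)}
vocab_size = len(vocab)
print(f"Vocabulary size: {vocab_size}")
print(f"Vocabulary: {vocab}")


# Generate training data (skip-gram: input center word -> output context word)
def create_dataloader(words, word_to_idx, window_size=2):
    inputs = []
    targets = []
    # Note: the first and last words are never used as center words here
    for i in range(1, len(words) - 1):
        center_word = words[i]
        center_idx = word_to_idx[center_word]
        # Context window: with window_size=2, take 2 words on each side
        for j in range(i - window_size, i + window_size + 1):
            if j != i and 0 <= j < len(words):
                context_word = words[j]
                context_idx = word_to_idx[context_word]
                inputs.append(center_idx)
                targets.append(context_idx)
    return (torch.tensor(inputs, dtype=torch.long),
            torch.tensor(targets, dtype=torch.long))


inputs, targets = create_dataloader(words, word_to_idx, window_size=2)


# 2. Define the skip-gram model
class SkipGramModel(nn.Module):
    def __init__(self, vocab_size, embedding_dim):
        super(SkipGramModel, self).__init__()
        # Center-word embedding layer (W)
        self.w_in = nn.Embedding(vocab_size, embedding_dim)
        # Context-word embedding layer (W')
        self.w_out = nn.Embedding(vocab_size, embedding_dim)
        # Initialize the weights
        nn.init.xavier_uniform_(self.w_in.weight)
        nn.init.xavier_uniform_(self.w_out.weight)

    def forward(self, x):
        # x: (batch_size,) -> center-word vectors: (batch_size, embedding_dim)
        embeds = self.w_in(x)
        return embeds

    def loss(self, x, y):
        # x: center-word indices, y: context-word indices
        # 1. Center-word vectors
        v_center = self.w_in(x)    # (batch_size, dim)
        # 2. Context-word vectors
        v_context = self.w_out(y)  # (batch_size, dim)
        # 3. Dot product (similarity): a larger dot product means a higher score
        score = torch.sum(torch.mul(v_center, v_context), dim=1)  # (batch_size,)
        # 4. Simplified objective (no negative sampling).
        # Large-scale training usually pairs skip-gram with Negative Sampling.
        # To keep the demo simple, this just maximizes the mean dot product of
        # the observed pairs. It is not a normalized negative log-likelihood,
        # so the loss can go negative and has no lower bound.
        loss = -torch.mean(score)
        return loss


# 3. Train the model
embedding_dim = 10  # word-vector dimension
learning_rate = 0.01
epochs = 1000

model = SkipGramModel(vocab_size, embedding_dim)
optimizer = optim.SGD(model.parameters(), lr=learning_rate)

print("\nTraining...")
for epoch in range(epochs):
    optimizer.zero_grad()
    # Forward pass
    loss = model.loss(inputs, targets)
    # Backward pass
    loss.backward()
    optimizer.step()
    if (epoch + 1) % 200 == 0:
        print(f"Epoch {epoch + 1}, Loss: {loss.item():.4f}")

# 4. Visualize and test the result
print("\nTraining finished. Word-vector similarities:")

# Fetch the embedding weights
embeddings = model.w_in.weight.data.numpy()


# Simple cosine similarity
def cosine_similarity(w1, w2):
    return np.dot(w1, w2) / (np.linalg.norm(w1) * np.linalg.norm(w2))


# Test a few words
test_words = ["learning", "deep", "artificial", "brain"]
for w1 in test_words:
    if w1 in word_to_idx:
        vec1 = embeddings[word_to_idx[w1]]
        print(f"\nWords most similar to {w1}:")
        similarities = []
        for w2 in vocab:
            if w1 != w2:
                vec2 = embeddings[word_to_idx[w2]]
                sim = cosine_similarity(vec1, vec2)
                similarities.append((w2, sim))
        # Sort and print the top 3
        similarities.sort(key=lambda x: x[1], reverse=True)
        for word, score in similarities[:3]:
            print(f"  {word}: {score:.4f}")

# 2D visualization (PCA dimensionality reduction)
pca = PCA(n_components=2)
reduced_embeds = pca.fit_transform(embeddings)

plt.figure(figsize=(10, 8))
for i, word in enumerate(vocab):
    plt.scatter(reduced_embeds[i, 0], reduced_embeds[i, 1])
    plt.annotate(word, (reduced_embeds[i, 0], reduced_embeds[i, 1]))
plt.title("Word Embeddings Visualization (PCA)")
plt.xlabel("PC1")
plt.ylabel("PC2")
plt.grid(True)
plt.show()
```

Output

```
Vocabulary size: 20
Vocabulary: ['artificial', 'inspired', 'brain', 'natural', 'is', 'are', 'learning', 'by', 'machine', 'powerful', 'processing', 'language', 'a', 'intelligence', 'uses', 'subset', 'deep', 'models', 'the', 'of']

Training...
Epoch 200, Loss: -0.0312
Epoch 400, Loss: -0.0661
Epoch 600, Loss: -0.1041
Epoch 800, Loss: -0.1467
Epoch 1000, Loss: -0.1957

Training finished. Word-vector similarities:

Words most similar to learning:
  inspired: 0.6657
  are: 0.4793
  is: 0.4745

Words most similar to deep:
  machine: 0.6026
  intelligence: 0.5229
  processing: 0.4629

Words most similar to artificial:
  is: 0.5218
  by: 0.5195
  the: 0.5013

Words most similar to brain:
  subset: 0.2076
  powerful: 0.1457
  language: 0.0755
```

Interpretation

Given nothing but a small pile of raw text, the model placed the words at different distances from one another. With a corpus this small and a simplified objective, the individual neighbors are noisy, but the pipeline works end to end. Success.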
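To make "use the center word to predict the words around it" concrete, here is a minimal sketch in plain Python that lists the (center, context) training pairs for one sentence of the corpus. The function name `skipgram_pairs` is hypothetical and not part of the demo. Unlike the demo's `create_dataloader`, which starts at index 1 and stops before the last word, this sketch uses every position as a center word.

```python
def skipgram_pairs(tokens, window_size=2):
    """Return (center, context) pairs for every position in tokens."""
    pairs = []
    for i, center in enumerate(tokens):
        # Clamp the window to the sentence boundaries.
        lo = max(0, i - window_size)
        hi = min(len(tokens), i + window_size + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((center, tokens[j]))
    return pairs


tokens = "deep learning is powerful".split()
for center, context in skipgram_pairs(tokens, window_size=1):
    print(f"{center} -> {context}")
```

Each printed line is one training example: the word on the left is the model input and the word on the right is the prediction target.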
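The demo's loss is the negative mean dot product of the observed pairs, which is why the printed values are negative and keep decreasing. For comparison, the sketch below shows the two objectives the code comments refer to: a full-softmax negative log-likelihood and a negative-sampling loss, both for a single (center, context) pair. It is written in plain Python so it runs without torch. All function names and the toy vectors are assumptions made for illustration, not part of the original script.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax_nll(v_center, w_out, target_idx):
    """Full-softmax negative log-likelihood: -log p(target | center)."""
    scores = [dot(v_center, u) for u in w_out]
    m = max(scores)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(s - m) for s in scores))
    return log_z - scores[target_idx]


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def negative_sampling_loss(v_center, u_context, u_negatives):
    """Negative-sampling loss for one pair and a list of sampled negatives."""
    # Pull the true context vector toward the center vector ...
    loss = -log_sigmoid(dot(v_center, u_context))
    # ... and push each sampled negative away from it.
    for u_neg in u_negatives:
        loss -= log_sigmoid(-dot(v_center, u_neg))
    return loss


# Toy vectors, chosen only for illustration.
v_center = [0.5, -0.2]
w_out = [[0.4, 0.1], [-0.3, 0.8], [0.0, 0.0]]

full = softmax_nll(v_center, w_out, target_idx=0)
sampled = negative_sampling_loss(v_center, w_out[0], [w_out[1], w_out[2]])
print(f"full-softmax NLL: {full:.4f}")
print(f"negative-sampling loss: {sampled:.4f}")
```

Both losses are bounded below by zero, so unlike the demo's objective they cannot be lowered indefinitely just by growing the vector norms.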

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如若转载,请注明出处:http://www.coloradmin.cn/o/2476463.html

如若内容造成侵权/违法违规/事实不符,请联系多彩编程网进行投诉反馈,一经查实,立即删除!相关文章

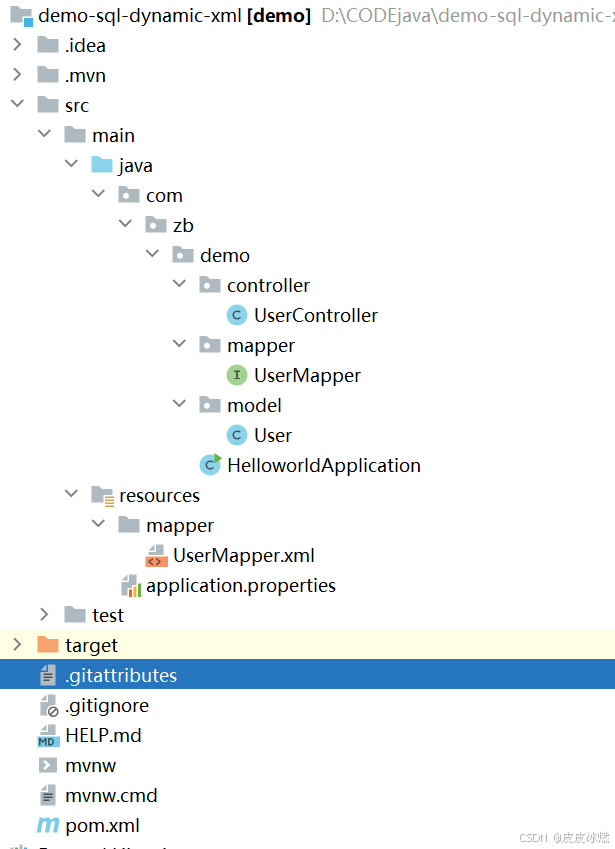

SpringBoot-17-MyBatis动态SQL标签之常用标签

文章目录 1 代码1.1 实体User.java1.2 接口UserMapper.java1.3 映射UserMapper.xml1.3.1 标签if1.3.2 标签if和where1.3.3 标签choose和when和otherwise1.4 UserController.java2 常用动态SQL标签2.1 标签set2.1.1 UserMapper.java2.1.2 UserMapper.xml2.1.3 UserController.ja…

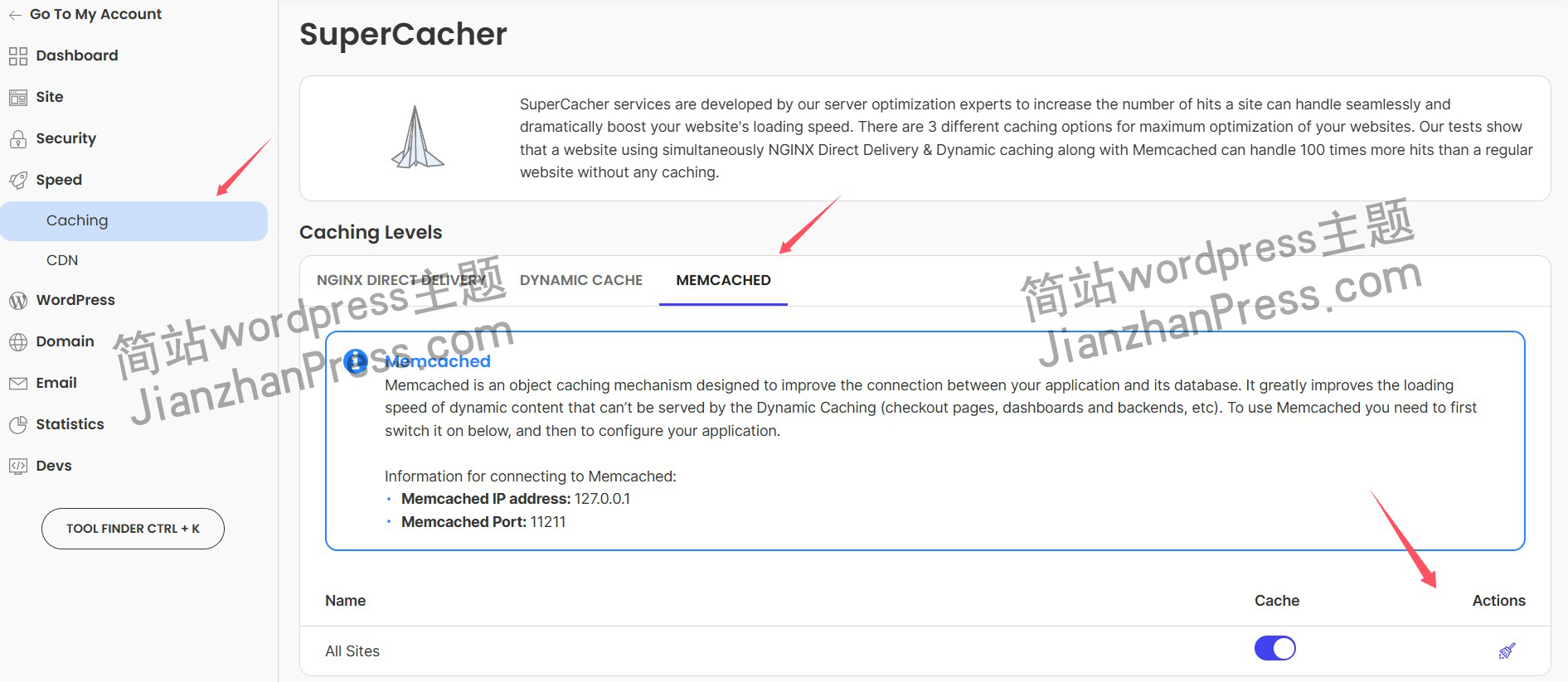

wordpress后台更新后 前端没变化的解决方法

使用siteground主机的wordpress网站,会出现更新了网站内容和修改了php模板文件、js文件、css文件、图片文件后,网站没有变化的情况。

不熟悉siteground主机的新手,遇到这个问题,就很抓狂,明明是哪都没操作错误&#x…

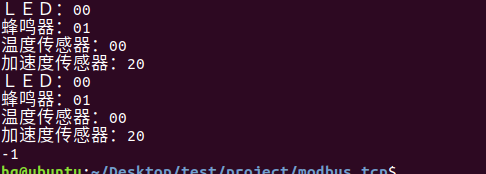

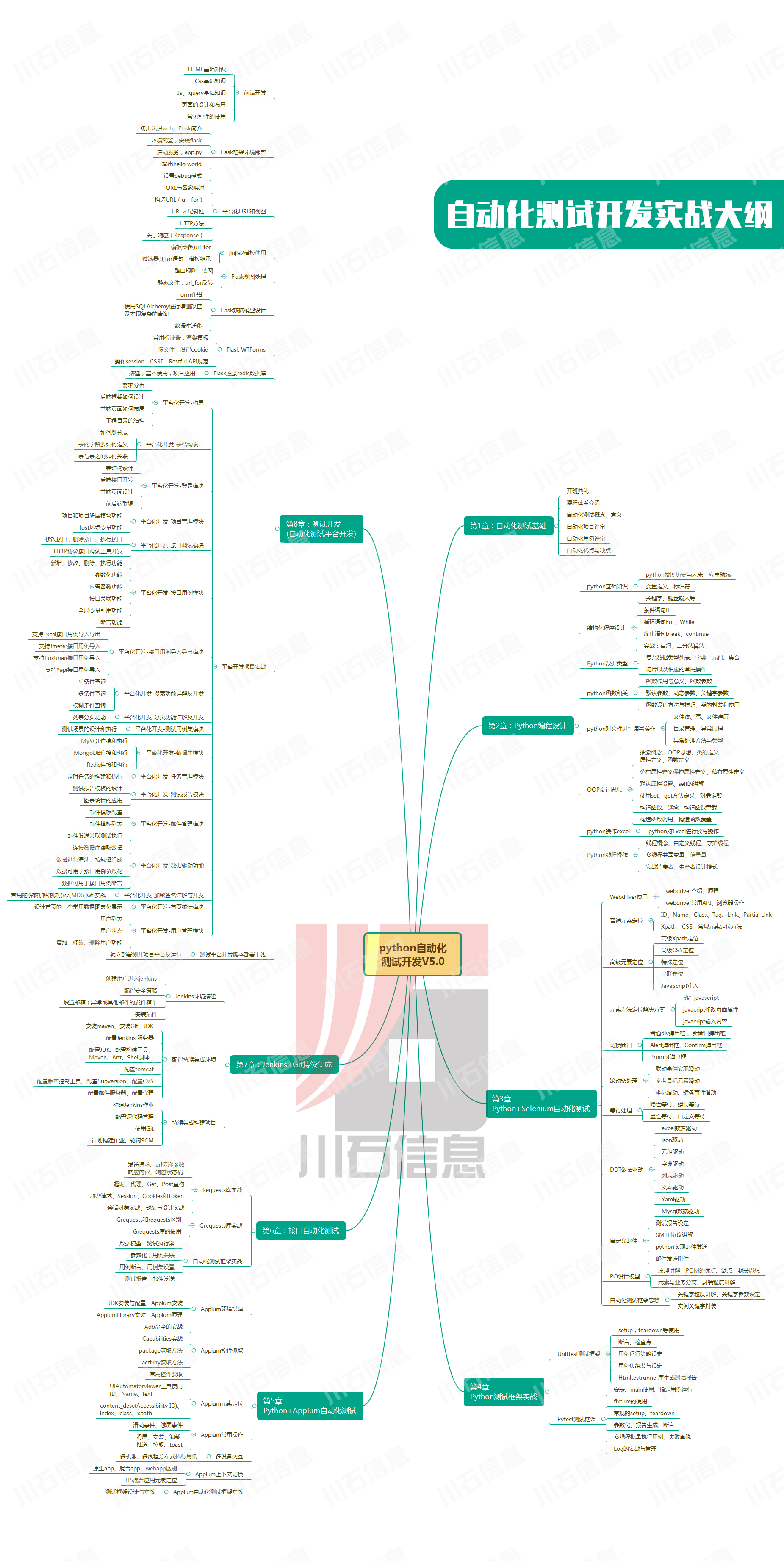

网络编程(Modbus进阶)

思维导图 Modbus RTU(先学一点理论)

概念 Modbus RTU 是工业自动化领域 最广泛应用的串行通信协议,由 Modicon 公司(现施耐德电气)于 1979 年推出。它以 高效率、强健性、易实现的特点成为工业控制系统的通信标准。 包…

UE5 学习系列(二)用户操作界面及介绍

这篇博客是 UE5 学习系列博客的第二篇,在第一篇的基础上展开这篇内容。博客参考的 B 站视频资料和第一篇的链接如下:

【Note】:如果你已经完成安装等操作,可以只执行第一篇博客中 2. 新建一个空白游戏项目 章节操作,重…

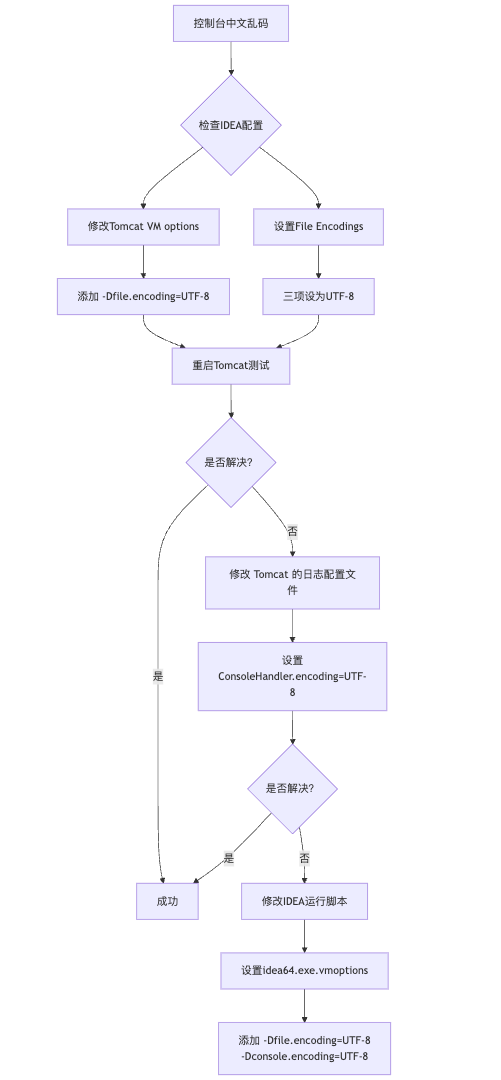

IDEA运行Tomcat出现乱码问题解决汇总

最近正值期末周,有很多同学在写期末Java web作业时,运行tomcat出现乱码问题,经过多次解决与研究,我做了如下整理:

原因:

IDEA本身编码与tomcat的编码与Windows编码不同导致,Windows 系统控制台…

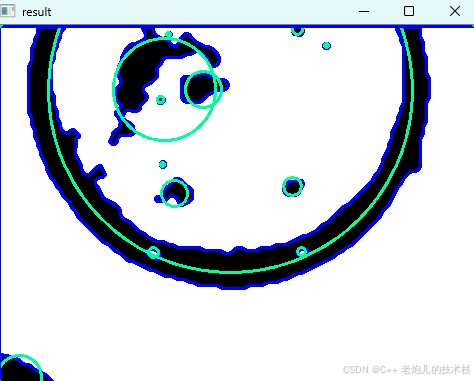

利用最小二乘法找圆心和半径

#include <iostream>

#include <vector>

#include <cmath>

#include <Eigen/Dense> // 需安装Eigen库用于矩阵运算 // 定义点结构

struct Point { double x, y; Point(double x_, double y_) : x(x_), y(y_) {}

}; // 最小二乘法求圆心和半径 …

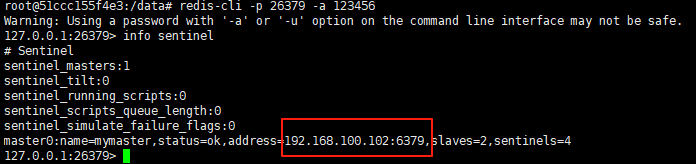

使用docker在3台服务器上搭建基于redis 6.x的一主两从三台均是哨兵模式

一、环境及版本说明

如果服务器已经安装了docker,则忽略此步骤,如果没有安装,则可以按照一下方式安装: 1. 在线安装(有互联网环境): 请看我这篇文章 传送阵>> 点我查看 2. 离线安装(内网环境):请看我这篇文章 传送阵>> 点我查看

说明:假设每台服务器已…

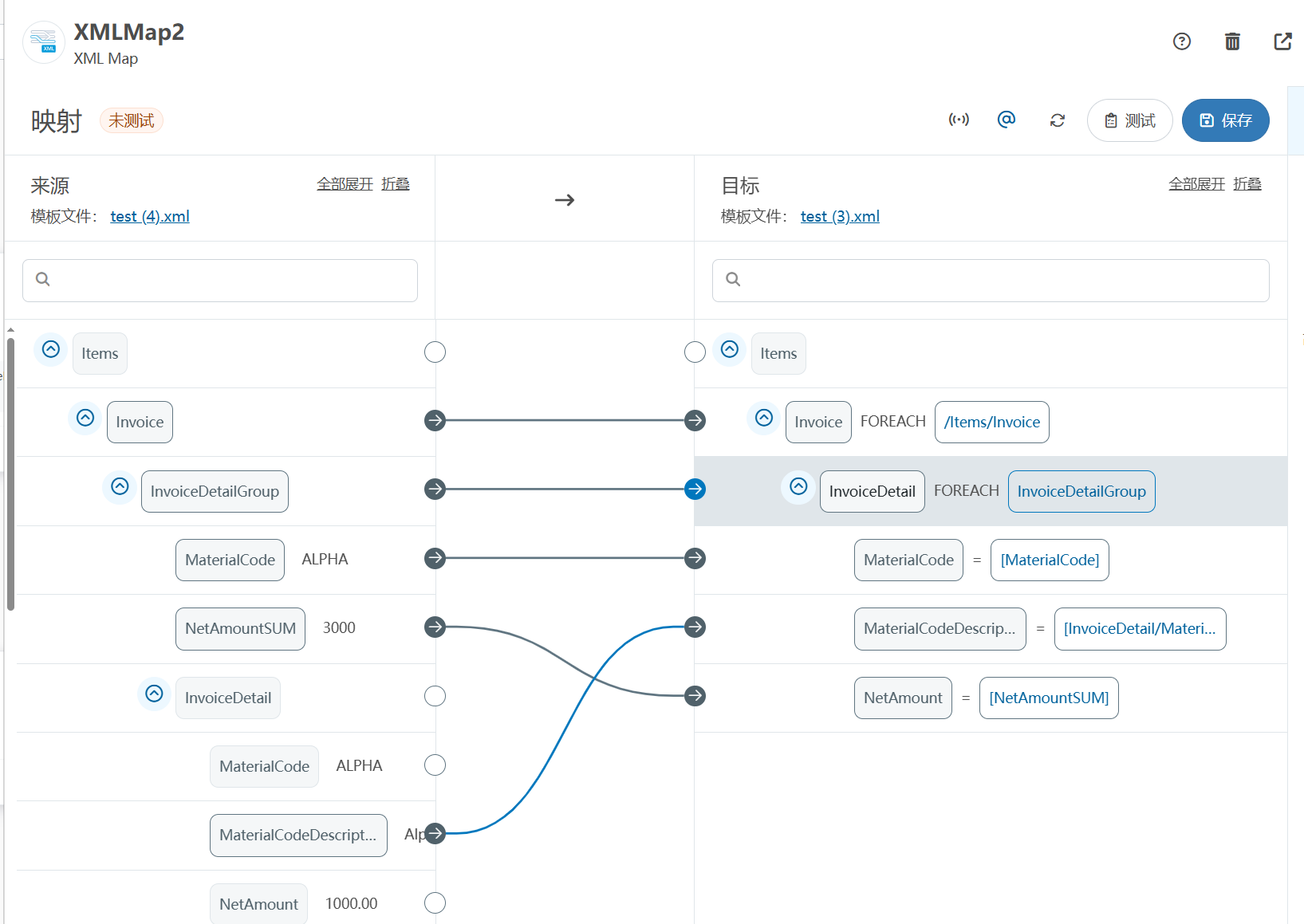

XML Group端口详解

在XML数据映射过程中,经常需要对数据进行分组聚合操作。例如,当处理包含多个物料明细的XML文件时,可能需要将相同物料号的明细归为一组,或对相同物料号的数量进行求和计算。传统实现方式通常需要编写脚本代码,增加了开…

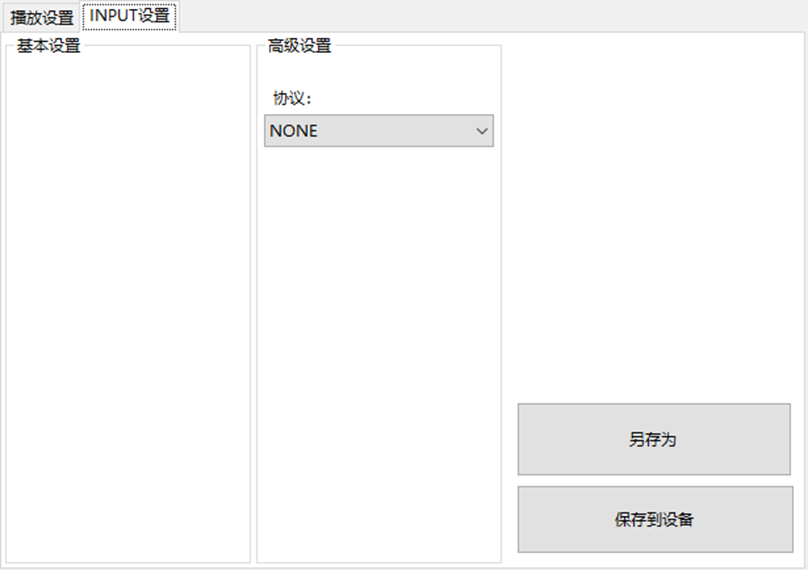

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器的上位机配置操作说明

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器专为工业环境精心打造,完美适配AGV和无人叉车。同时,集成以太网与语音合成技术,为各类高级系统(如MES、调度系统、库位管理、立库等)提供高效便捷的语音交互体验。

L…

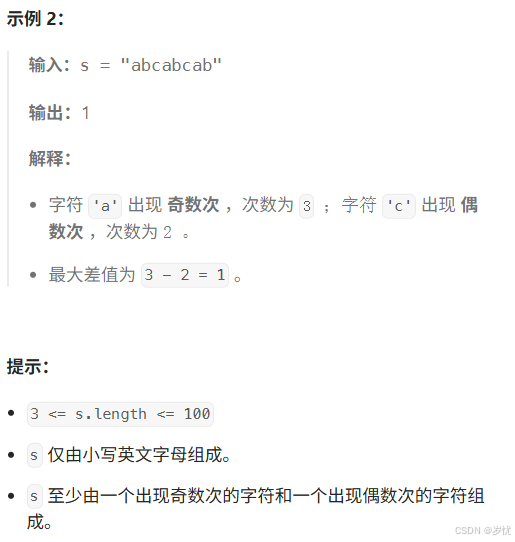

(LeetCode 每日一题) 3442. 奇偶频次间的最大差值 I (哈希、字符串)

题目:3442. 奇偶频次间的最大差值 I 思路 :哈希,时间复杂度0(n)。 用哈希表来记录每个字符串中字符的分布情况,哈希表这里用数组即可实现。

C版本:

class Solution {

public:int maxDifference(string s) {int a[26]…

【大模型RAG】拍照搜题技术架构速览:三层管道、两级检索、兜底大模型

摘要

拍照搜题系统采用“三层管道(多模态 OCR → 语义检索 → 答案渲染)、两级检索(倒排 BM25 向量 HNSW)并以大语言模型兜底”的整体框架: 多模态 OCR 层 将题目图片经过超分、去噪、倾斜校正后,分别用…

【Axure高保真原型】引导弹窗

今天和大家中分享引导弹窗的原型模板,载入页面后,会显示引导弹窗,适用于引导用户使用页面,点击完成后,会显示下一个引导弹窗,直至最后一个引导弹窗完成后进入首页。具体效果可以点击下方视频观看或打开下方…

接口测试中缓存处理策略

在接口测试中,缓存处理策略是一个关键环节,直接影响测试结果的准确性和可靠性。合理的缓存处理策略能够确保测试环境的一致性,避免因缓存数据导致的测试偏差。以下是接口测试中常见的缓存处理策略及其详细说明:

一、缓存处理的核…

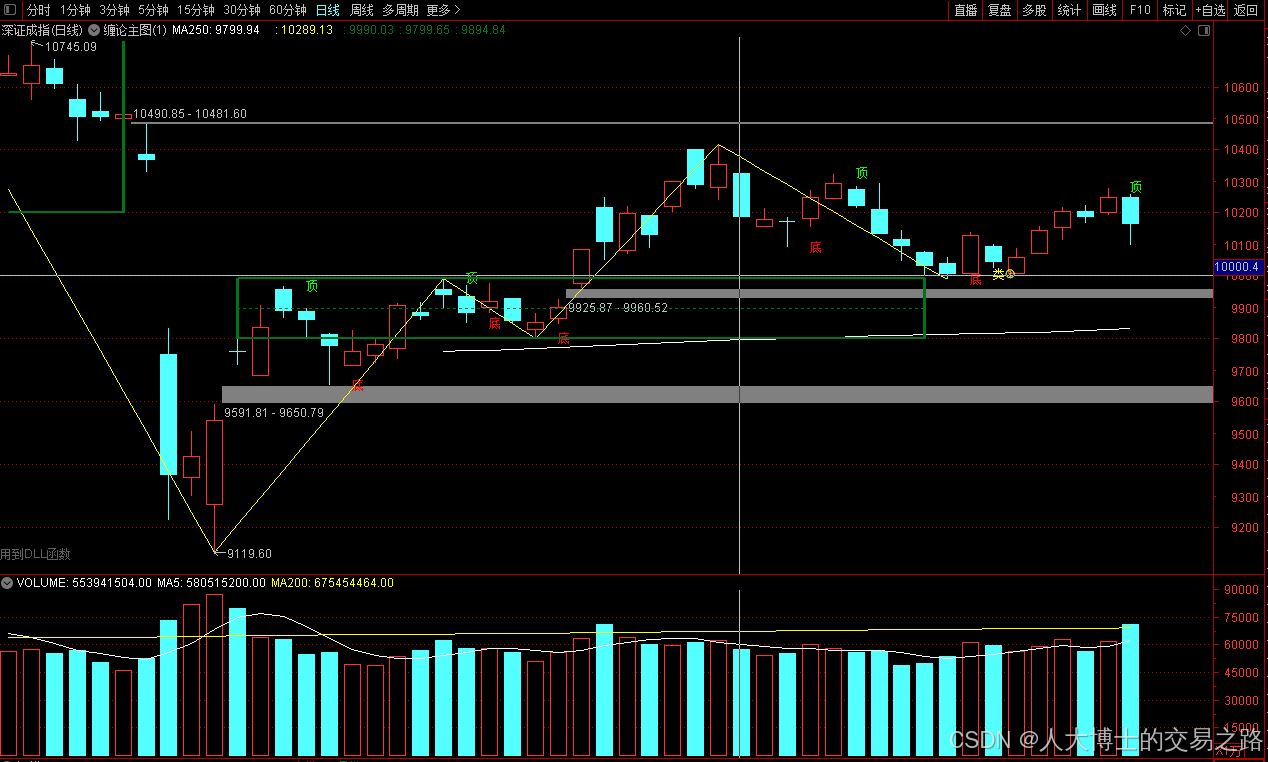

龙虎榜——20250610

上证指数放量收阴线,个股多数下跌,盘中受消息影响大幅波动。 深证指数放量收阴线形成顶分型,指数短线有调整的需求,大概需要一两天。 2025年6月10日龙虎榜行业方向分析 1. 金融科技

代表标的:御银股份、雄帝科技

驱动…

观成科技:隐蔽隧道工具Ligolo-ng加密流量分析

1.工具介绍

Ligolo-ng是一款由go编写的高效隧道工具,该工具基于TUN接口实现其功能,利用反向TCP/TLS连接建立一条隐蔽的通信信道,支持使用Let’s Encrypt自动生成证书。Ligolo-ng的通信隐蔽性体现在其支持多种连接方式,适应复杂网…

铭豹扩展坞 USB转网口 突然无法识别解决方法

当 USB 转网口扩展坞在一台笔记本上无法识别,但在其他电脑上正常工作时,问题通常出在笔记本自身或其与扩展坞的兼容性上。以下是系统化的定位思路和排查步骤,帮助你快速找到故障原因:

背景:

一个M-pard(铭豹)扩展坞的网卡突然无法识别了,扩展出来的三个USB接口正常。…

未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?

编辑:陈萍萍的公主一点人工一点智能 未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?RWM通过双自回归机制有效解决了复合误差、部分可观测性和随机动力学等关键挑战,在不依赖领域特定归纳偏见的条件下实现了卓越的预测准…

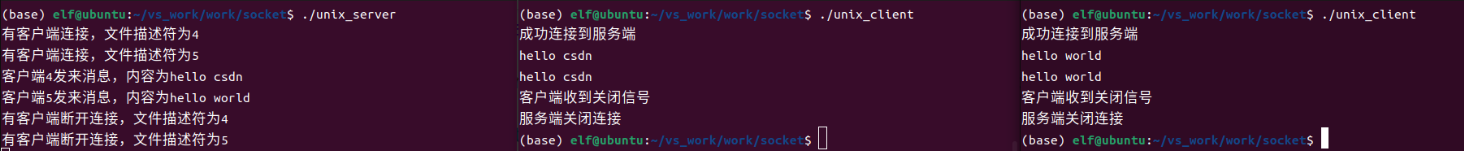

Linux应用开发之网络套接字编程(实例篇)

服务端与客户端单连接

服务端代码

#include <sys/socket.h>

#include <sys/types.h>

#include <netinet/in.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <arpa/inet.h>

#include <pthread.h>

…

华为云AI开发平台ModelArts

华为云ModelArts:重塑AI开发流程的“智能引擎”与“创新加速器”!

在人工智能浪潮席卷全球的2025年,企业拥抱AI的意愿空前高涨,但技术门槛高、流程复杂、资源投入巨大的现实,却让许多创新构想止步于实验室。数据科学家…

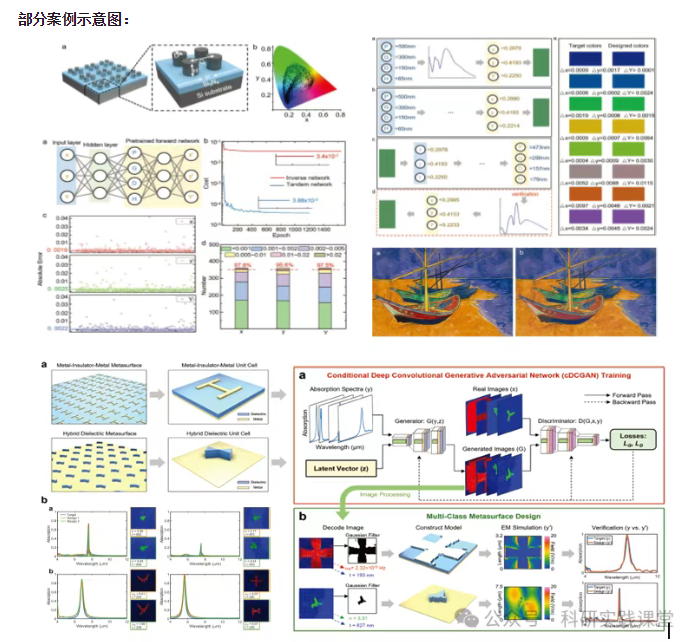

深度学习在微纳光子学中的应用

深度学习在微纳光子学中的主要应用方向

深度学习与微纳光子学的结合主要集中在以下几个方向:

逆向设计 通过神经网络快速预测微纳结构的光学响应,替代传统耗时的数值模拟方法。例如设计超表面、光子晶体等结构。

特征提取与优化 从复杂的光学数据中自…