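DriveVLA-W0: World Models Amplify Data Scaling Law in Autonomous Driving [Adding a visual self-supervised signal on top of the action signal improves VLA performance (both the diffusion world model and the autoregressive world model work well; Figure 4 shows the diffusion policy is slightly better)]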

news 2026/3/31 1:05:00

DRIVEVLA-W0: WORLD MODELS AMPLIFY DATA SCALING LAW IN AUTONOMOUS DRIVING

Yingyan Li1*, Shuyao Shang1*, Weisong Liu1*, Bing Zhan1*, Haochen Wang1*, Yuqi Wang1, Yuntao Chen1, Xiaoman Wang2, Yasong An2, Chufeng Tang2, Lu Hou2, Lue Fan1✉, Zhaoxiang Zhang1✉

1 NLPR, Institute of Automation, Chinese Academy of Sciences (CASIA)
2 Yinwang Intelligent Technology Co. Ltd.

{liyingyan2021,shangshuyao2024,liuweisong2024,zhanbing2024}@ia.ac.cn
{lue.fan, zhaoxiang.zhang}@ia.ac.cn

Code: https://github.com/BraveGroup/DriveVLA-W0

Figure 1: World modeling as a catalyst for VLA data scalability. (a): Unlike standard VLAs trained solely on action supervision, our DriveVLA-W0 is trained to predict both future actions and visual scenes. (b): This world modeling task provides a dense source of supervision, enabling our model to better harness the benefits of large-scale data.

ABSTRACT

Scaling Vision-Language-Action (VLA) models on large-scale data offers a promising path to achieving a more generalized driving intelligence. However, VLA models are limited by a “supervision deficit”: the vast model capacity is supervised by sparse, low-dimensional actions, leaving much of their representational power underutilized. To remedy this, we propose DriveVLA-W0, a training paradigm that employs world modeling to predict future images. This task generates a dense, self-supervised signal that compels the model to learn the underlying dynamics of the driving environment. We showcase the paradigm’s versatility by instantiating it for two dominant VLA archetypes: an autoregressive world model for VLAs that use discrete visual tokens, and a diffusion world model for those operating on continuous visual features. Building on the rich representations learned from world modeling, we introduce a lightweight action expert to address the inference latency for real-time deployment.
Extensive experiments on the NAVSIM v1/v2 benchmark and a 680× larger in-house dataset demonstrate that DriveVLA-W0 significantly outperforms BEV and VLA baselines. Crucially, it amplifies the data scaling law, showing that performance gains accelerate as the training dataset size increases.

1 INTRODUCTION

The promise of scaling laws (Kaplan et al., 2020; Zhai et al., 2022; Baniodeh et al., 2025; Dehghani et al., 2023) presents an attractive path toward more generalized driving intelligence, with the hope that petabytes of driving data can be harnessed to train powerful foundation models. In the current landscape, two dominant paradigms exist. On one side are specialized models (Hu et al., 2022; Jiang et al., 2023) centered around Bird’s-Eye-View (BEV) representations (Li et al., 2022; Huang et al., 2021). These models are built upon carefully designed geometric priors, which, while effective for driving-specific tasks, make it less straightforward to leverage non-driving datasets. In addition, their relatively compact architectures may constrain their potential for large-scale data scalability.
In response, Vision-Language-Action (VLA) models (Fu et al., 2025; Li et al., 2025b; Zhou et al., 2025c) have emerged as a promising alternative. By leveraging large-scale Vision-Language Models (VLMs) (Wang et al., 2024b; Bai et al., 2025) pretrained on internet-scale data, VLAs possess a significantly larger model size and a greater intrinsic potential for scaling.

However, this scaling potential is largely unrealized due to a critical challenge: the immense model size of VLA models is met with extremely sparse supervisory signals. The standard paradigm involves fine-tuning these VLM models solely on expert actions. This tasks the model with mapping high-dimensional sensory inputs to a few low-dimensional control signals (e.g., waypoints). This creates a severe “supervision deficit”. This deficit prevents the model from learning rich world representations, a fundamental limitation that cannot be overcome by simply increasing the volume of action-only training data. In fact, we observe that without sufficient supervision, large VLA models can even underperform smaller, specialized BEV models.

To address this supervision deficit, we harness the power of world modeling (Li et al., 2024a; Wang et al., 2025; Cen et al., 2025; Chen et al., 2024), integrating it as a dense self-supervised objective to supplement the sparse action signal. By tasking the model with predicting future images, we generate a dense and rich supervisory signal at every timestep. This objective forces the model to learn the underlying dynamics of the environment and build a rich, predictive world representation. To validate the effectiveness of our approach, we implement it across the two dominant VLA architectural families, which are primarily differentiated by their visual representation: discrete tokens versus continuous features. For VLAs that represent images as discrete visual tokens, world modeling is a natural extension.
We propose an autoregressive world model to predict the sequence of discrete visual tokens of future images. For VLAs that operate on continuous features, this task is more challenging as they lack a visual vocabulary, making a direct next-token prediction approach infeasible. To bridge this gap, we introduce a diffusion world model that generates future image pixels conditioned on the vision and action features produced at the current frame.

We validate our world modeling approach across multiple data scales, from academic benchmarks to a massive in-house dataset. First, experiments scaling at academic benchmarks reveal that world modeling is crucial for generalization, as it learns robust visual patterns rather than overfitting to dataset-specific action patterns. To study true scaling laws, we then leverage a massive 70M-frame in-house dataset, as Figure 1 shows. This confirms our central hypothesis: world modeling amplifies the data scaling law. This advantage stems from the dense visual supervision provided by future frame prediction, creating a qualitative gap that cannot be closed by purely scaling the quantity of action-only data. Finally, to enable real-time deployment, we introduce a lightweight, MoE-based Action Expert. This expert decouples action generation from the large VLA backbone, reducing inference latency to just 63.1% of the baseline VLA and creating an efficient testbed to study different action decoders at a massive scale. This reveals a compelling reversal of performance trends from smaller to larger data scales. While complex flow-matching decoders often hold an advantage on small datasets, we find that this relationship inverts at a massive scale, where the simpler autoregressive decoder emerges as the top performer.
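To make the autoregressive world-modeling objective concrete, here is a minimal sketch of a next-token cross-entropy loss over the discrete visual tokens of a future frame. It is an illustration under stated assumptions, not the authors' implementation: the function names, the plain-list data layout, and the tiny vocabulary in the usage example are all hypothetical, and a real model would compute the logits with its Transformer backbone.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one list of logits."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]


def ar_world_model_loss(logits_per_token, future_tokens):
    """Mean cross-entropy over the future frame's discrete visual tokens.

    logits_per_token: one list of vocabulary logits per future token position
                      (hypothetical stand-in for the backbone's output).
    future_tokens:    ground-truth visual token ids of the future image.
    """
    if len(logits_per_token) != len(future_tokens):
        raise ValueError("need exactly one logit vector per target token")
    nll = 0.0
    for logits, target in zip(logits_per_token, future_tokens):
        nll -= log_softmax(logits)[target]
    return nll / len(future_tokens)


if __name__ == "__main__":
    vocab_size = 4                      # toy value, chosen only for the demo
    future_tokens = [2, 0, 3]           # toy "future image" of three tokens
    uniform = [[0.0] * vocab_size for _ in future_tokens]
    # Uniform logits carry no information, so the loss equals log(vocab_size).
    print(ar_world_model_loss(uniform, future_tokens), math.log(vocab_size))
```

Every visual token of the future frame contributes one supervised target, which is where the "dense" signal comes from: a frame tokenized into hundreds of visual tokens supplies far more targets per timestep than a handful of action tokens.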
Our work makes three primary contributions:

• We identify the “supervision deficit” as a critical bottleneck for scaling VLAs and propose DriveVLA-W0, a paradigm that uses world modeling to provide a dense, self-supervised learning signal from visual prediction.
• Our experiments reveal two key scaling advantages of world modeling. First, it enhances generalization across domains with differing action distributions by learning transferable visual representations. Second, on a massive 70M-frame dataset, it amplifies the data scaling law, providing a benefit that simply scaling up action-only supervision cannot achieve.
• We introduce a lightweight MoE-based Action Expert that reduces inference latency to 63.1% of the baseline. Using this expert as a testbed, we uncover a compelling scaling law reversal for action decoders: simpler autoregressive models surpass more complex flow-matching ones at a massive scale, inverting the performance trend seen on smaller datasets.

Note on the autoregressive world model (for VLAs that represent images as discrete visual tokens): the current image is first quantized into discrete visual tokens. The text tokens, visual tokens, and past action tokens are fed together into the VLA backbone. The backbone's hidden states go to the action head, which predicts future actions, and also to the autoregressive world model head, which predicts the sequence of discrete visual tokens of the future image.

[Diagram: autoregressive world model pipeline. Input layer: language instruction L_t (text sequence), current image I_t [H, W, 3], future image I_{t+1} [H, W, 3], past actions A_{t-1} [T, 3]. Tokenization: MoVQGAN visual encoder (CNN + attention encoder, then a vector quantizer that looks up the nearest neighbor in a learnable codebook [K, D] with K = 8192), producing VQ index tokens [H', W'] (discrete integers, e.g. [18, 32]) for the current and future images; text tokenizer (vocabulary mapping), producing text tokens [L_txt]; FAST tokenizer (action discretization), producing action tokens [L_act]. Interleaved sequence construction: S_t = [L_{t-H}, V_{t-H}, A_{t-H-1}, ..., L_t, V_t, A_{t-1}], giving input_ids [B, L] (integer sequence). Emu3 Transformer (8B): token embedding [B, L, 4096], Transformer layers (self-attention + FFN), hidden states [B, L, 4096]. World-model prediction head: predicts the next frame's VQ tokens, predicted VQ indices [B, H', W'], cross-entropy loss L_WM-AR. Action expert: action decoder, action output [B, 8, 3].]

Note on the diffusion world model (for VLAs that operate on continuous visual features): the image is not quantized into visual tokens but encoded into continuous visual features. The visual hidden states and action hidden states output by the VLA backbone are extracted and fed to the diffusion model as conditioning, and the diffusion model learns to generate the future image pixels (or their VAE latent).

[Diagram: diffusion world model pipeline. Stages, from input to output: input layer; tokenization with a ViT visual encoder; sequence construction; Qwen2.5-VL Transformer (7B); ROSS hidden-state extraction; ROSS diffusion world model; action expert.]
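As a rough illustration of how a diffusion world model can be trained on future frames, the sketch below uses a DDPM-style noise-prediction loss on a flattened future-image latent. This is an assumption for illustration only: the text above does not state the exact diffusion objective, noise schedule, or denoiser architecture, and `denoiser`, `cond`, `alpha_bar`, `make_oracle`, and `zero_denoiser` are hypothetical names rather than anything from the DriveVLA-W0 code.

```python
import math
import random


def add_noise(x0, eps, alpha_bar):
    """Forward diffusion step: blend the clean target with Gaussian noise."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + b * e for x, e in zip(x0, eps)]


def diffusion_wm_loss(denoiser, future_latent, cond, alpha_bar, rng):
    """Mean squared error between predicted and true noise.

    future_latent: flattened future image (or its latent) as a list of floats.
    cond:          conditioning features from the current frame, e.g. the
                   vision and action hidden states (assumed layout).
    alpha_bar:     cumulative signal fraction in (0, 1) for the sampled step.
    """
    eps = [rng.gauss(0.0, 1.0) for _ in future_latent]
    x_t = add_noise(future_latent, eps, alpha_bar)
    eps_hat = denoiser(x_t, cond, alpha_bar)
    return sum((p - e) ** 2 for p, e in zip(eps_hat, eps)) / len(eps)


def make_oracle(x0):
    """Toy denoiser that already knows the clean target (sanity check only)."""
    def oracle(x_t, cond, alpha_bar):
        a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
        return [(xt - a * x) / b for xt, x in zip(x_t, x0)]
    return oracle


def zero_denoiser(x_t, cond, alpha_bar):
    """Toy denoiser that ignores its inputs and predicts zero noise."""
    return [0.0 for _ in x_t]


if __name__ == "__main__":
    future_latent = [0.5, -1.0, 2.0, 0.0]   # toy values
    cond = [1.0, 0.0]                        # toy conditioning features
    print(diffusion_wm_loss(make_oracle(future_latent), future_latent,
                            cond, 0.5, random.Random(0)))
    print(diffusion_wm_loss(zero_denoiser, future_latent,
                            cond, 0.5, random.Random(0)))
```

The oracle drives the loss to roughly zero while the zero predictor does not, which is the behavior a trained conditional denoiser should move toward. In a real system the denoiser would be a network that actually reads `cond`.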

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如若转载,请注明出处:http://www.coloradmin.cn/o/2466773.html

如若内容造成侵权/违法违规/事实不符,请联系多彩编程网进行投诉反馈,一经查实,立即删除!相关文章

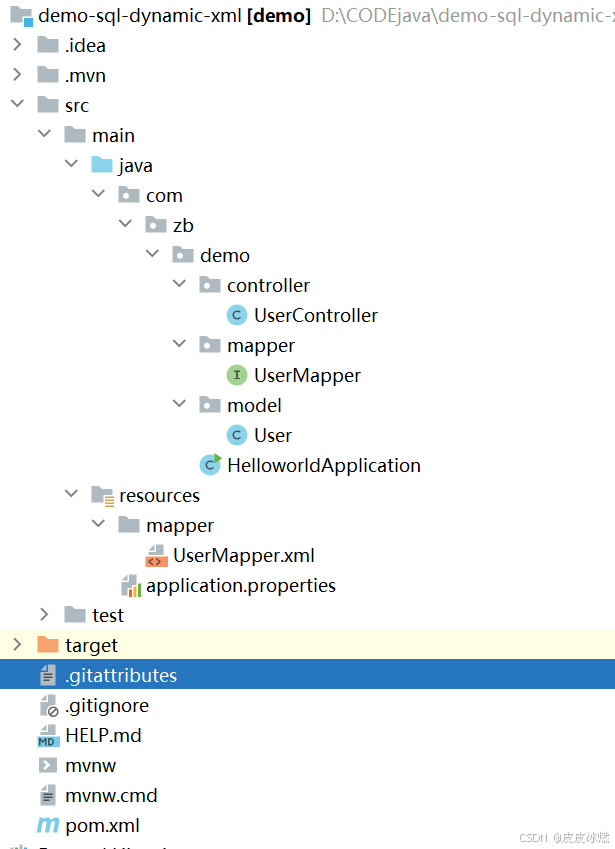

SpringBoot-17-MyBatis动态SQL标签之常用标签

文章目录 1 代码1.1 实体User.java1.2 接口UserMapper.java1.3 映射UserMapper.xml1.3.1 标签if1.3.2 标签if和where1.3.3 标签choose和when和otherwise1.4 UserController.java2 常用动态SQL标签2.1 标签set2.1.1 UserMapper.java2.1.2 UserMapper.xml2.1.3 UserController.ja…

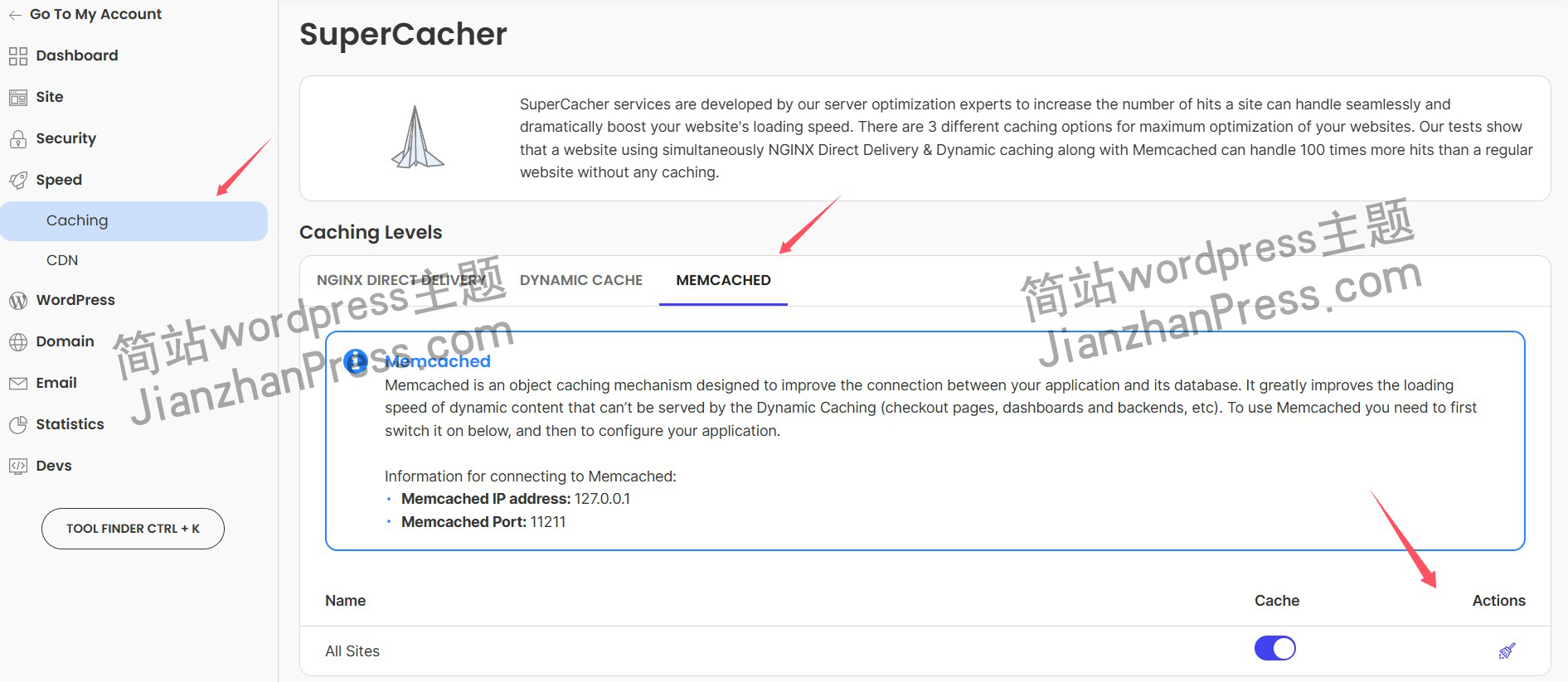

wordpress后台更新后 前端没变化的解决方法

使用siteground主机的wordpress网站,会出现更新了网站内容和修改了php模板文件、js文件、css文件、图片文件后,网站没有变化的情况。

不熟悉siteground主机的新手,遇到这个问题,就很抓狂,明明是哪都没操作错误&#x…

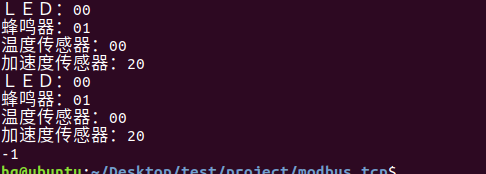

网络编程(Modbus进阶)

思维导图 Modbus RTU(先学一点理论)

概念 Modbus RTU 是工业自动化领域 最广泛应用的串行通信协议,由 Modicon 公司(现施耐德电气)于 1979 年推出。它以 高效率、强健性、易实现的特点成为工业控制系统的通信标准。 包…

UE5 学习系列(二)用户操作界面及介绍

这篇博客是 UE5 学习系列博客的第二篇,在第一篇的基础上展开这篇内容。博客参考的 B 站视频资料和第一篇的链接如下:

【Note】:如果你已经完成安装等操作,可以只执行第一篇博客中 2. 新建一个空白游戏项目 章节操作,重…

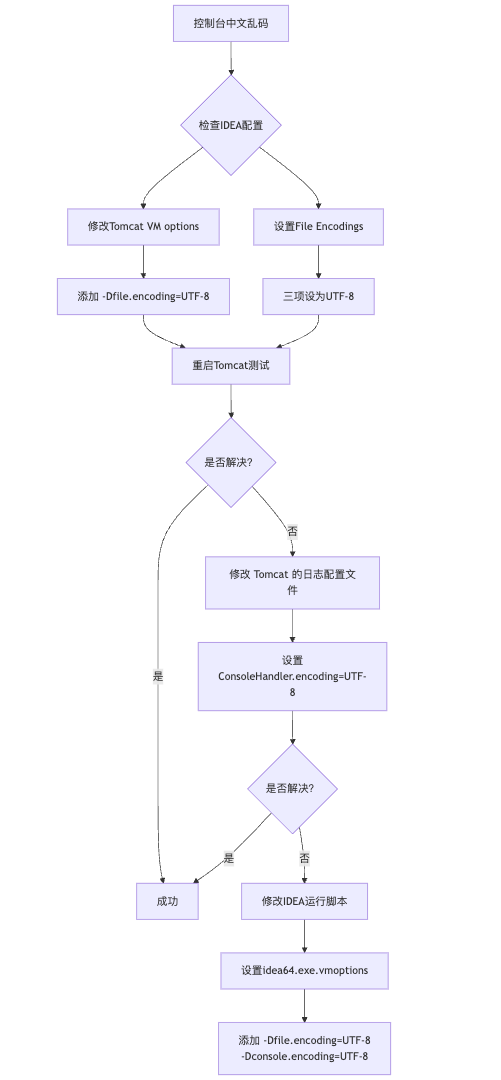

IDEA运行Tomcat出现乱码问题解决汇总

最近正值期末周,有很多同学在写期末Java web作业时,运行tomcat出现乱码问题,经过多次解决与研究,我做了如下整理:

原因:

IDEA本身编码与tomcat的编码与Windows编码不同导致,Windows 系统控制台…

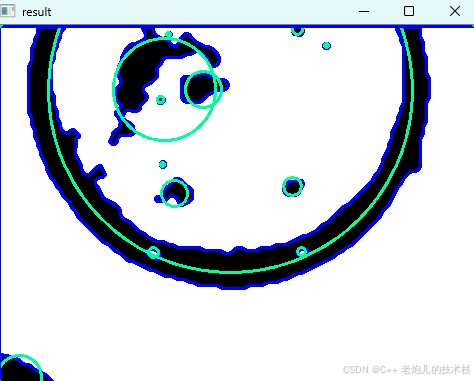

利用最小二乘法找圆心和半径

#include <iostream>

#include <vector>

#include <cmath>

#include <Eigen/Dense> // 需安装Eigen库用于矩阵运算 // 定义点结构

struct Point { double x, y; Point(double x_, double y_) : x(x_), y(y_) {}

}; // 最小二乘法求圆心和半径 …

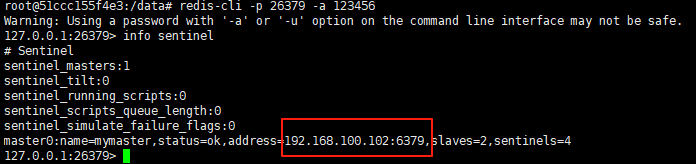

使用docker在3台服务器上搭建基于redis 6.x的一主两从三台均是哨兵模式

一、环境及版本说明

如果服务器已经安装了docker,则忽略此步骤,如果没有安装,则可以按照一下方式安装: 1. 在线安装(有互联网环境): 请看我这篇文章 传送阵>> 点我查看 2. 离线安装(内网环境):请看我这篇文章 传送阵>> 点我查看

说明:假设每台服务器已…

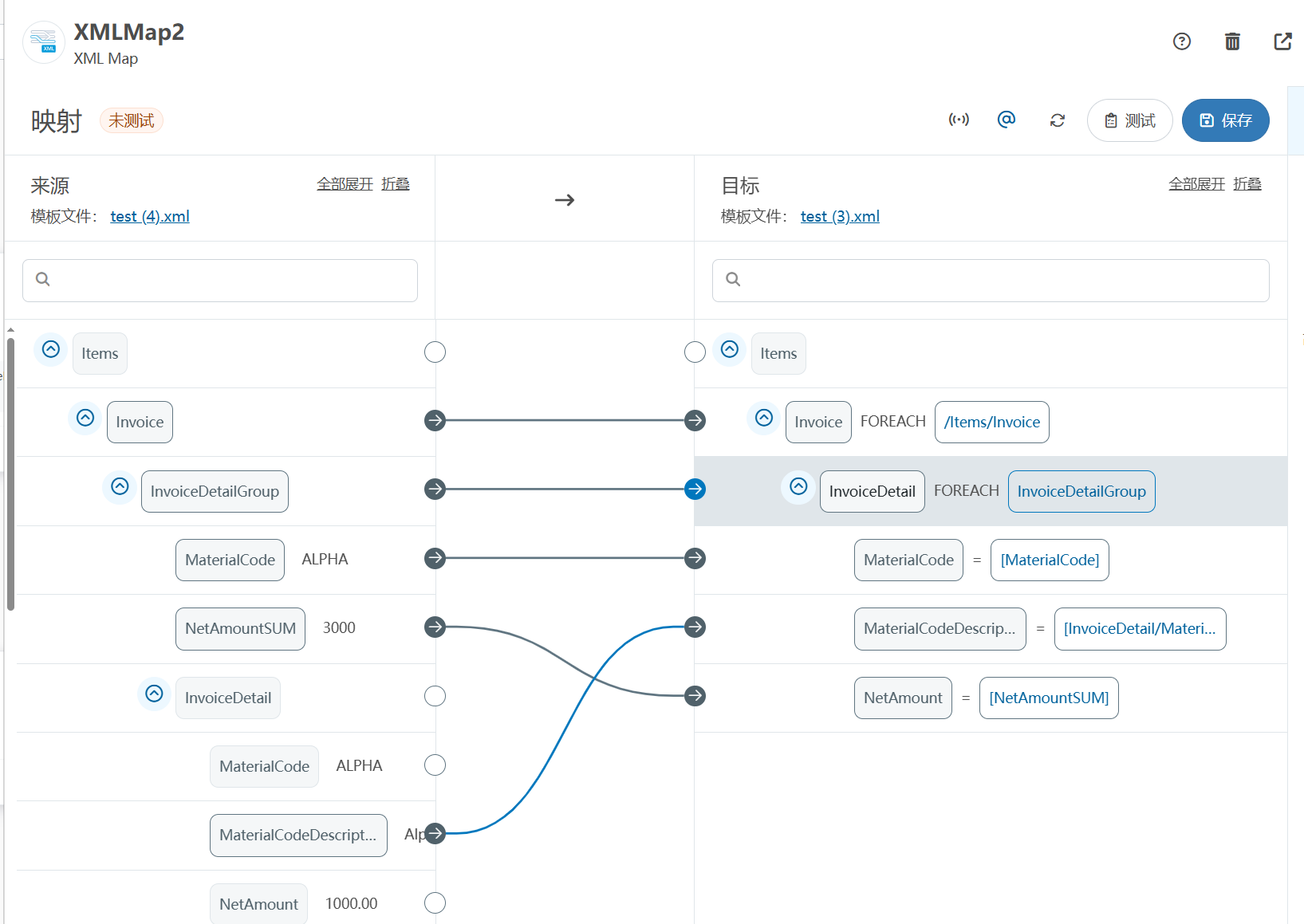

XML Group端口详解

在XML数据映射过程中,经常需要对数据进行分组聚合操作。例如,当处理包含多个物料明细的XML文件时,可能需要将相同物料号的明细归为一组,或对相同物料号的数量进行求和计算。传统实现方式通常需要编写脚本代码,增加了开…

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器的上位机配置操作说明

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器专为工业环境精心打造,完美适配AGV和无人叉车。同时,集成以太网与语音合成技术,为各类高级系统(如MES、调度系统、库位管理、立库等)提供高效便捷的语音交互体验。

L…

(LeetCode 每日一题) 3442. 奇偶频次间的最大差值 I (哈希、字符串)

题目:3442. 奇偶频次间的最大差值 I 思路 :哈希,时间复杂度0(n)。 用哈希表来记录每个字符串中字符的分布情况,哈希表这里用数组即可实现。

C版本:

class Solution {

public:int maxDifference(string s) {int a[26]…

【大模型RAG】拍照搜题技术架构速览:三层管道、两级检索、兜底大模型

摘要

拍照搜题系统采用“三层管道(多模态 OCR → 语义检索 → 答案渲染)、两级检索(倒排 BM25 向量 HNSW)并以大语言模型兜底”的整体框架: 多模态 OCR 层 将题目图片经过超分、去噪、倾斜校正后,分别用…

【Axure高保真原型】引导弹窗

今天和大家中分享引导弹窗的原型模板,载入页面后,会显示引导弹窗,适用于引导用户使用页面,点击完成后,会显示下一个引导弹窗,直至最后一个引导弹窗完成后进入首页。具体效果可以点击下方视频观看或打开下方…

接口测试中缓存处理策略

在接口测试中,缓存处理策略是一个关键环节,直接影响测试结果的准确性和可靠性。合理的缓存处理策略能够确保测试环境的一致性,避免因缓存数据导致的测试偏差。以下是接口测试中常见的缓存处理策略及其详细说明:

一、缓存处理的核…

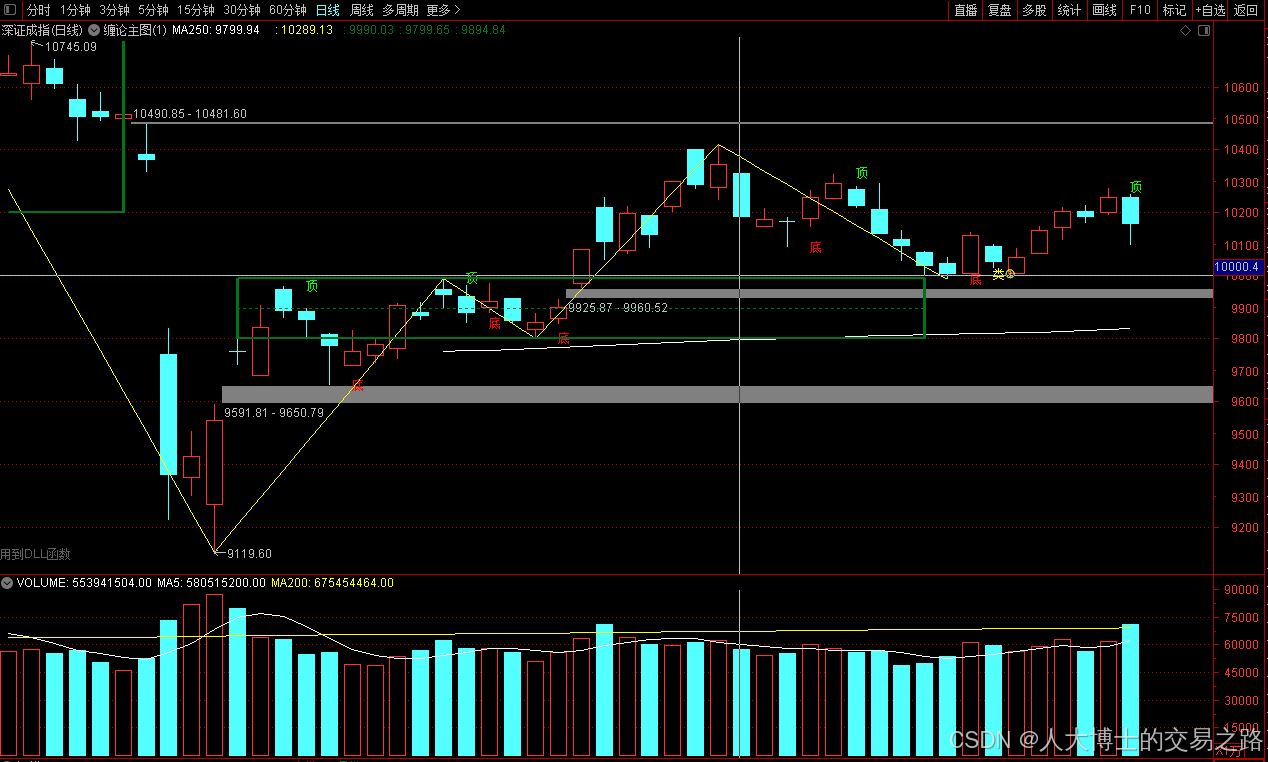

龙虎榜——20250610

上证指数放量收阴线,个股多数下跌,盘中受消息影响大幅波动。 深证指数放量收阴线形成顶分型,指数短线有调整的需求,大概需要一两天。 2025年6月10日龙虎榜行业方向分析 1. 金融科技

代表标的:御银股份、雄帝科技

驱动…

观成科技:隐蔽隧道工具Ligolo-ng加密流量分析

1.工具介绍

Ligolo-ng是一款由go编写的高效隧道工具,该工具基于TUN接口实现其功能,利用反向TCP/TLS连接建立一条隐蔽的通信信道,支持使用Let’s Encrypt自动生成证书。Ligolo-ng的通信隐蔽性体现在其支持多种连接方式,适应复杂网…

铭豹扩展坞 USB转网口 突然无法识别解决方法

当 USB 转网口扩展坞在一台笔记本上无法识别,但在其他电脑上正常工作时,问题通常出在笔记本自身或其与扩展坞的兼容性上。以下是系统化的定位思路和排查步骤,帮助你快速找到故障原因:

背景:

一个M-pard(铭豹)扩展坞的网卡突然无法识别了,扩展出来的三个USB接口正常。…

未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?

编辑:陈萍萍的公主一点人工一点智能 未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?RWM通过双自回归机制有效解决了复合误差、部分可观测性和随机动力学等关键挑战,在不依赖领域特定归纳偏见的条件下实现了卓越的预测准…

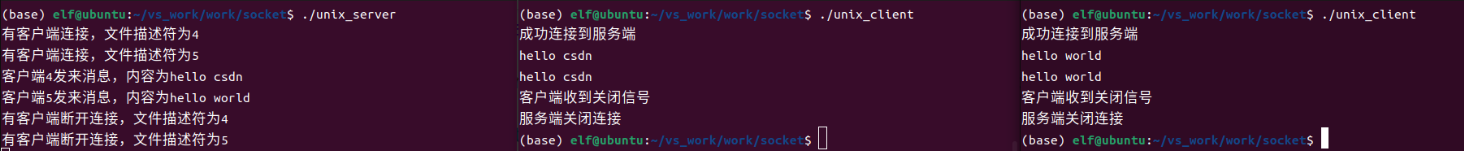

Linux应用开发之网络套接字编程(实例篇)

服务端与客户端单连接

服务端代码

#include <sys/socket.h>

#include <sys/types.h>

#include <netinet/in.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <arpa/inet.h>

#include <pthread.h>

…

华为云AI开发平台ModelArts

华为云ModelArts:重塑AI开发流程的“智能引擎”与“创新加速器”!

在人工智能浪潮席卷全球的2025年,企业拥抱AI的意愿空前高涨,但技术门槛高、流程复杂、资源投入巨大的现实,却让许多创新构想止步于实验室。数据科学家…

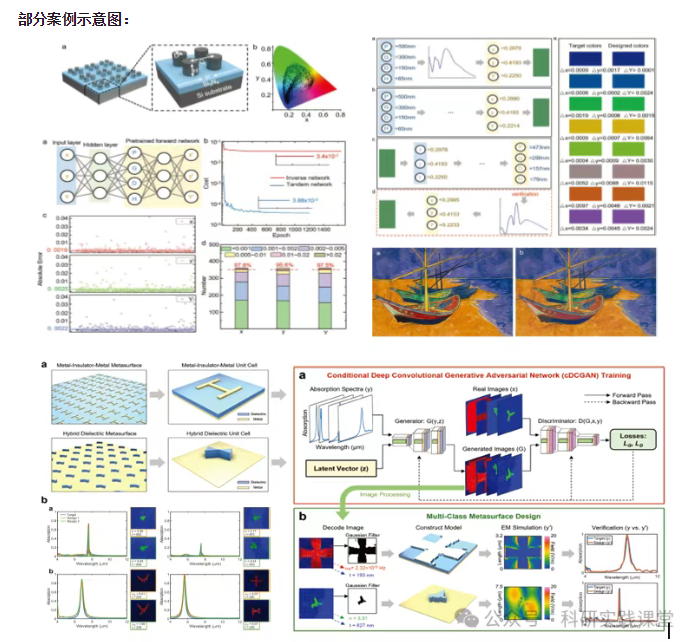

深度学习在微纳光子学中的应用

深度学习在微纳光子学中的主要应用方向

深度学习与微纳光子学的结合主要集中在以下几个方向:

逆向设计 通过神经网络快速预测微纳结构的光学响应,替代传统耗时的数值模拟方法。例如设计超表面、光子晶体等结构。

特征提取与优化 从复杂的光学数据中自…