Python Async Crawler in Practice: A Complete Tutorial on Concurrent Scraping with aiohttp and Asynchronous CAPTCHA Handling

news 2026/3/28 20:39:25

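## Preface

Crawler throughput matters to every data engineer. When you need to collect tens of thousands of pages, synchronous requests that queue up one after another are simply too slow. Python's asyncio + aiohttp combination can make an I/O-bound crawler 10-50x faster, and the code changes are modest. This article walks through the principles and practice of async crawling from scratch, including concurrency control, error handling, and how to handle CAPTCHAs inside an asynchronous flow.

## Sync vs. async: why the gap is so large

### The bottleneck of a synchronous crawler

```python
import time

import requests

urls = ["https://httpbin.org/delay/1" for _ in range(10)]

start = time.time()
for url in urls:
    resp = requests.get(url)
    print(f"{resp.status_code}", end=" ")
print(f"\nSync elapsed: {time.time() - start:.1f} s")
# Output: Sync elapsed: 10.3 s (each request waits about 1 s, queued serially)
```

The problem is obvious: every request waits for network I/O to return while the CPU sits idle.

### The advantage of an asynchronous crawler

```python
import asyncio
import time

import aiohttp


async def fetch(session, url):
    async with session.get(url) as resp:
        return resp.status


async def main():
    urls = ["https://httpbin.org/delay/1" for _ in range(10)]
    async with aiohttp.ClientSession() as session:
        tasks = [fetch(session, url) for url in urls]
        results = await asyncio.gather(*tasks)
        print(f"Status codes: {results}")


start = time.time()
asyncio.run(main())
print(f"Async elapsed: {time.time() - start:.1f} s")
# Output: Async elapsed: 1.2 s (10 requests run concurrently and finish almost together)
```

Ten requests drop from about 10 seconds to about 1 second. That is the power of async.

## aiohttp basics

### Installation

```bash
pip install aiohttp
```

### Session management

```python
import asyncio

import aiohttp


async def main():
    # Create one session and reuse its TCP connections for better performance
    async with aiohttp.ClientSession() as session:
        # GET request
        async with session.get("https://httpbin.org/get") as resp:
            data = await resp.json()
            print(data)

        # POST request
        async with session.post("https://httpbin.org/post", json={"key": "value"}) as resp:
            data = await resp.json()
            print(data)

        # Custom request headers
        headers = {
            "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/124.0.0.0",
            "Accept-Language": "zh-CN,zh;q=0.9,en;q=0.8",
        }
        async with session.get("https://httpbin.org/headers", headers=headers) as resp:
            print(await resp.json())


asyncio.run(main())
```

### Timeouts and proxies

```python
import asyncio

import aiohttp


async def main():
    # Set timeouts: 30 s for the whole request, 10 s to establish a connection
    timeout = aiohttp.ClientTimeout(total=30, connect=10)
    # Set a proxy (placeholder credentials and host)
    proxy = "http://user:pass@proxy:8080"

    async with aiohttp.ClientSession(timeout=timeout) as session:
        async with session.get("https://httpbin.org/ip", proxy=proxy) as resp:
            print(await resp.json())


asyncio.run(main())
```

## Concurrency control: Semaphore

Unbounded concurrency can make the target server refuse connections, or even get your own IP banned. Use a Semaphore to cap the number of concurrent requests:

```python
import asyncio

import aiohttp


async def fetch_with_limit(sem, session, url):
    async with sem:  # The semaphore caps concurrency
        try:
            async with session.get(url) as resp:
                text = await resp.text()
                return {"url": url, "status": resp.status, "length": len(text)}
        except Exception as e:
            return {"url": url, "error": str(e)}


async def main():
    urls = [f"https://httpbin.org/get?page={i}" for i in range(100)]
    sem = asyncio.Semaphore(10)  # At most 10 requests in flight
    connector = aiohttp.TCPConnector(limit=20)  # Upper bound on the TCP connection pool

    async with aiohttp.ClientSession(connector=connector) as session:
        tasks = [fetch_with_limit(sem, session, url) for url in urls]
        results = await asyncio.gather(*tasks)

    success = [r for r in results if "error" not in r]
    failed = [r for r in results if "error" in r]
    print(f"Succeeded: {len(success)}, failed: {len(failed)}")


asyncio.run(main())
```

## In practice: a complete async crawler template

```python
import asyncio
import logging
import random
from dataclasses import dataclass
from html.parser import HTMLParser

import aiohttp

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)


@dataclass
class ScrapedItem:
    url: str
    title: str
    content: str
    status: int


class AsyncScraper:
    def __init__(self, concurrency=10, delay_range=(0.5, 2.0), proxy=None):
        self.concurrency = concurrency
        self.delay_range = delay_range
        self.proxy = proxy
        self.sem = asyncio.Semaphore(concurrency)
        self.results = []
        self.errors = []

    async def fetch_page(self, session, url):
        async with self.sem:
            # Random delay so the request pattern is less regular
            await asyncio.sleep(random.uniform(*self.delay_range))
            try:
                async with session.get(url, proxy=self.proxy) as resp:
                    if resp.status == 200:
                        html = await resp.text()
                        item = self.parse(url, html, resp.status)
                        self.results.append(item)
                        logger.info(f"[OK] {url}")
                        return item
                    elif resp.status == 403:
                        logger.warning(f"[403] {url} - may need CAPTCHA handling")
                        self.errors.append({"url": url, "status": 403})
                    else:
                        logger.warning(f"[{resp.status}] {url}")
                        self.errors.append({"url": url, "status": resp.status})
            except asyncio.TimeoutError:
                logger.error(f"[TIMEOUT] {url}")
                self.errors.append({"url": url, "error": "timeout"})
            except Exception as e:
                logger.error(f"[ERROR] {url}: {e}")
                self.errors.append({"url": url, "error": str(e)})

    def parse(self, url, html, status):
        # Replace with your own parsing logic
        title = ""

        class TitleParser(HTMLParser):
            def handle_starttag(self, tag, attrs):
                self._in_title = tag == "title"

            def handle_data(self, data):
                nonlocal title
                if getattr(self, "_in_title", False):
                    title = data
                    self._in_title = False

        parser = TitleParser()
        parser.feed(html[:5000])
        return ScrapedItem(url=url, title=title, content=html[:500], status=status)

    async def run(self, urls):
        timeout = aiohttp.ClientTimeout(total=30)
        connector = aiohttp.TCPConnector(limit=self.concurrency * 2)
        headers = {
            "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/124.0.0.0",
            "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
            "Accept-Language": "zh-CN,zh;q=0.9,en;q=0.8",
        }
        async with aiohttp.ClientSession(
            timeout=timeout, connector=connector, headers=headers
        ) as session:
            tasks = [self.fetch_page(session, url) for url in urls]
            await asyncio.gather(*tasks, return_exceptions=True)

        logger.info(f"Done: {len(self.results)} succeeded, {len(self.errors)} failed")
        return self.results


# Usage
async def main():
    scraper = AsyncScraper(concurrency=5, delay_range=(1.0, 3.0))
    urls = [f"https://httpbin.org/get?id={i}" for i in range(50)]
    results = await scraper.run(urls)
    for item in results[:3]:
        print(f"{item.title} - {item.url}")


asyncio.run(main())
```

## Handling CAPTCHAs in an async flow

When an async crawler hits a CAPTCHA, it must not block the whole event loop. The right approach is to make CAPTCHA solving asynchronous as well:

```python
import asyncio
import re

import aiohttp
from passxapi import AsyncPassXAPI


class AsyncScraperWithCaptcha:
    def __init__(self, captcha_api_key, concurrency=10):
        self.sem = asyncio.Semaphore(concurrency)
        self.solver = AsyncPassXAPI(api_key=captcha_api_key)

    async def fetch_with_captcha(self, session, url):
        async with self.sem:
            async with session.get(url) as resp:
                html = await resp.text()

            # Detect a CAPTCHA widget in the page
            if "data-sitekey" in html:
                token = await self._solve_captcha(html, url)
                if token:
                    async with session.post(url, data={
                        "cf-turnstile-response": token,
                        "g-recaptcha-response": token,
                    }) as retry_resp:
                        return await retry_resp.text()
            return html

    async def _solve_captcha(self, html, url):
        match = re.search(r'data-sitekey="([^"]+)"', html)
        if not match:
            return None
        sitekey = match.group(1)

        # Pick the solver by the widget type found in the page
        if "cf-turnstile" in html:
            result = await self.solver.solve_turnstile(sitekey=sitekey, url=url)
        elif "h-captcha" in html:
            result = await self.solver.solve_hcaptcha(sitekey=sitekey, url=url)
        else:
            result = await self.solver.solve_recaptcha(sitekey=sitekey, url=url)
        return result.get("token")

    async def run(self, urls):
        async with aiohttp.ClientSession() as session:
            tasks = [self.fetch_with_captcha(session, url) for url in urls]
            return await asyncio.gather(*tasks, return_exceptions=True)


# Usage
async def main():
    scraper = AsyncScraperWithCaptcha(captcha_api_key="your_passxapi_key", concurrency=10)
    urls = ["https://protected-site.com/page/1", "https://protected-site.com/page/2"]
    results = await scraper.run(urls)


asyncio.run(main())
```

## Producer-consumer pattern

For large-scale crawls, an asyncio.Queue-based producer-consumer pattern is recommended:

```python
import asyncio

import aiohttp

NUM_WORKERS = 5  # Number of consumer workers


async def producer(queue, urls):
    for url in urls:
        await queue.put(url)
    # Send one stop signal per worker
    for _ in range(NUM_WORKERS):
        await queue.put(None)


async def consumer(queue, session, results, worker_id):
    while True:
        url = await queue.get()
        if url is None:
            queue.task_done()
            break
        try:
            async with session.get(url) as resp:
                data = await resp.text()
                results.append({"url": url, "length": len(data)})
                print(f"[Worker-{worker_id}] {url} - {len(data)} bytes")
        except Exception as e:
            print(f"[Worker-{worker_id}] Error: {url} - {e}")
        finally:
            queue.task_done()


async def main():
    urls = [f"https://httpbin.org/get?id={i}" for i in range(30)]
    queue = asyncio.Queue(maxsize=20)
    results = []

    async with aiohttp.ClientSession() as session:
        # Start 1 producer + 5 consumers
        producer_task = asyncio.create_task(producer(queue, urls))
        workers = [
            asyncio.create_task(consumer(queue, session, results, i))
            for i in range(NUM_WORKERS)
        ]
        await producer_task
        await asyncio.gather(*workers)

    print(f"Total pages collected: {len(results)}")


asyncio.run(main())
```

## Performance tips

### 1. Connection pool reuse

```python
# Configure the TCP connection pool
connector = aiohttp.TCPConnector(
    limit=100,            # Upper bound on total connections
    limit_per_host=10,    # Connections per host
    ttl_dns_cache=300,    # Cache DNS lookups for 5 minutes
    enable_cleanup_closed=True,
)
```

### 2. Stream large files

```python
async def download_file(session, url, filepath):
    async with session.get(url) as resp:
        with open(filepath, "wb") as f:
            # Read the body in 8 KiB chunks instead of loading it all into memory
            async for chunk in resp.content.iter_chunked(8192):
                f.write(chunk)
```

### 3. Graceful shutdown

```python
import asyncio
import signal


async def graceful_shutdown(scraper):
    print("Received exit signal, shutting down gracefully...")
    scraper.running = False  # Assumes the scraper checks a `running` flag
    # Give in-flight tasks time to finish
    await asyncio.sleep(2)


def install_sigint_handler(scraper):
    # Call from inside a running coroutine; add_signal_handler is Unix-only
    loop = asyncio.get_running_loop()
    loop.add_signal_handler(
        signal.SIGINT,
        lambda: asyncio.create_task(graceful_shutdown(scraper)),
    )
```

## Common pitfalls

- Do not use `time.sleep` inside an async function: it blocks the whole event loop. Use `await asyncio.sleep()` instead.
- Reuse the Session: creating a new Session per request wastes TCP connections.
- More concurrency is not always better: too much triggers anti-scraping defenses. A value of 5-20 is recommended.
- Catch exceptions: one task's failure should not affect the other tasks.

## Summary

- Async crawling is one of the most effective ways to raise collection throughput.
- aiohttp + asyncio can readily deliver a 10x or greater speedup.
- Use a Semaphore to cap concurrency and avoid getting your IP banned.
- CAPTCHA solving must be asynchronous too, so it does not block the event loop.
- The producer-consumer pattern suits large-scale collection.

For an async CAPTCHA solution, see passxapi-python, which provides the AsyncPassXAPI async client and fits into an asyncio workflow.

If this helped, please like and bookmark it. Questions are welcome in the comments.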
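Two of the points above, capping concurrency with a Semaphore and keeping one task's exception from affecting the others, can be checked without touching the network. The following is only a minimal sketch under stated assumptions: `fake_fetch`, `run_demo`, and the `state` dictionary are hypothetical names introduced for illustration, and `asyncio.sleep` stands in for a real aiohttp request.

```python
import asyncio


async def fake_fetch(sem, state, i):
    """Hypothetical stand-in for a network request: sleeps instead of doing I/O."""
    async with sem:
        state["active"] += 1
        state["peak"] = max(state["peak"], state["active"])
        try:
            # asyncio.sleep yields to the event loop; time.sleep would block it
            await asyncio.sleep(0.01)
            if i % 5 == 0:
                raise ValueError(f"simulated failure for task {i}")
            return i
        finally:
            state["active"] -= 1


async def run_demo(n_tasks=20, limit=4):
    sem = asyncio.Semaphore(limit)
    state = {"active": 0, "peak": 0}
    # return_exceptions=True turns failures into result values,
    # so one failing task does not cancel or hide the others
    results = await asyncio.gather(
        *(fake_fetch(sem, state, i) for i in range(n_tasks)),
        return_exceptions=True,
    )
    ok = [r for r in results if not isinstance(r, Exception)]
    failed = [r for r in results if isinstance(r, Exception)]
    return state["peak"], ok, failed


if __name__ == "__main__":
    peak, ok, failed = asyncio.run(run_demo())
    print(f"peak concurrency: {peak}, ok: {len(ok)}, failed: {len(failed)}")
```

With the defaults, the number of tasks inside the semaphore at any moment should never exceed `limit`, and the simulated failures come back as exception objects in the result list rather than aborting the whole batch. Swapping `fake_fetch` for a real `session.get` call keeps the same structure.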

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如若转载,请注明出处:http://www.coloradmin.cn/o/2459120.html

如若内容造成侵权/违法违规/事实不符,请联系多彩编程网进行投诉反馈,一经查实,立即删除!相关文章

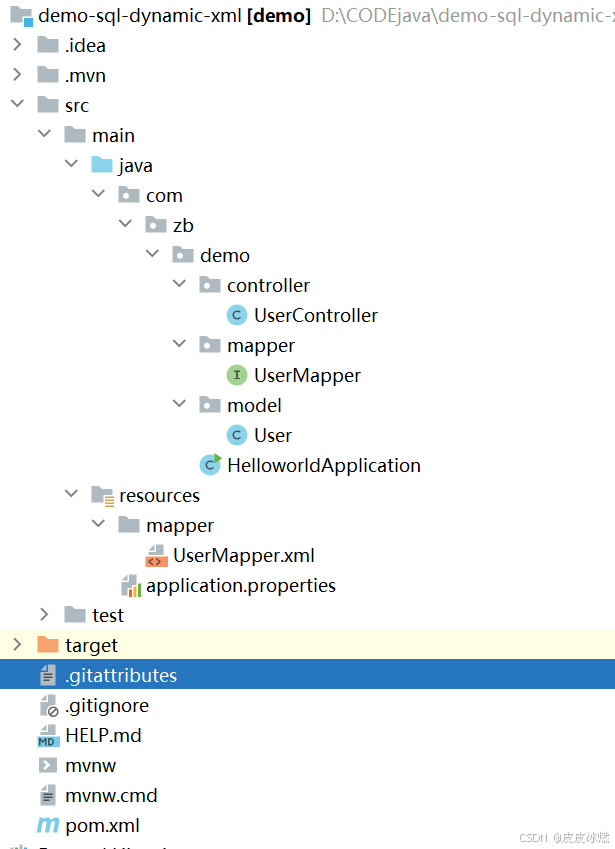

SpringBoot-17-MyBatis动态SQL标签之常用标签

文章目录 1 代码1.1 实体User.java1.2 接口UserMapper.java1.3 映射UserMapper.xml1.3.1 标签if1.3.2 标签if和where1.3.3 标签choose和when和otherwise1.4 UserController.java2 常用动态SQL标签2.1 标签set2.1.1 UserMapper.java2.1.2 UserMapper.xml2.1.3 UserController.ja…

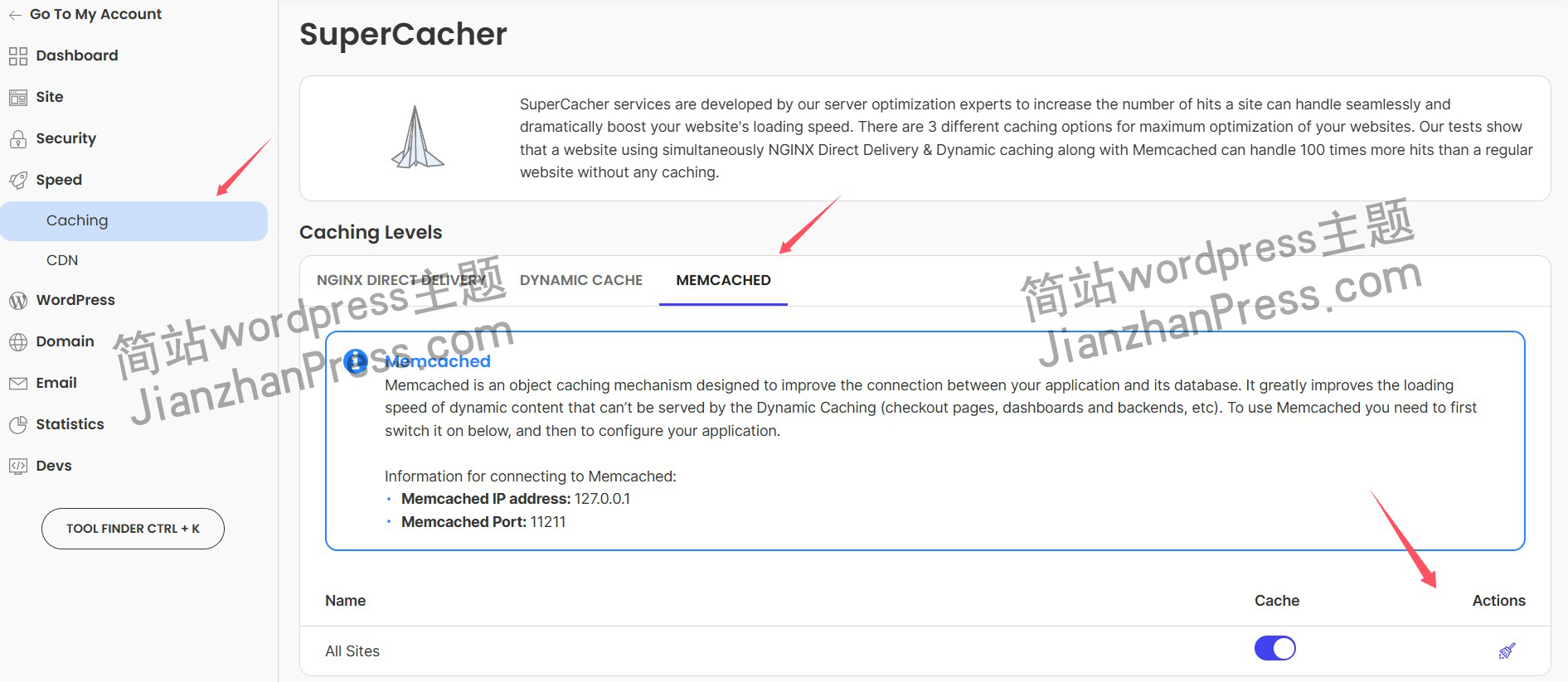

wordpress后台更新后 前端没变化的解决方法

使用siteground主机的wordpress网站,会出现更新了网站内容和修改了php模板文件、js文件、css文件、图片文件后,网站没有变化的情况。

不熟悉siteground主机的新手,遇到这个问题,就很抓狂,明明是哪都没操作错误&#x…

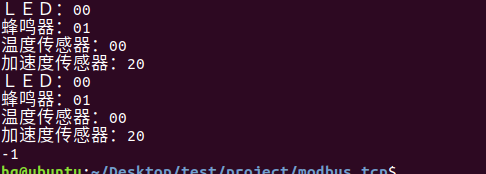

网络编程(Modbus进阶)

思维导图 Modbus RTU(先学一点理论)

概念 Modbus RTU 是工业自动化领域 最广泛应用的串行通信协议,由 Modicon 公司(现施耐德电气)于 1979 年推出。它以 高效率、强健性、易实现的特点成为工业控制系统的通信标准。 包…

UE5 学习系列(二)用户操作界面及介绍

这篇博客是 UE5 学习系列博客的第二篇,在第一篇的基础上展开这篇内容。博客参考的 B 站视频资料和第一篇的链接如下:

【Note】:如果你已经完成安装等操作,可以只执行第一篇博客中 2. 新建一个空白游戏项目 章节操作,重…

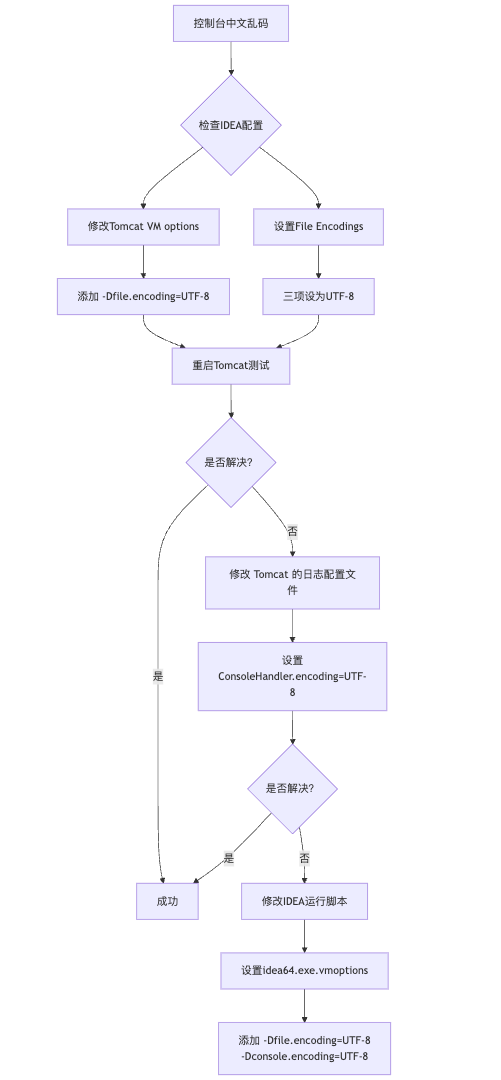

IDEA运行Tomcat出现乱码问题解决汇总

最近正值期末周,有很多同学在写期末Java web作业时,运行tomcat出现乱码问题,经过多次解决与研究,我做了如下整理:

原因:

IDEA本身编码与tomcat的编码与Windows编码不同导致,Windows 系统控制台…

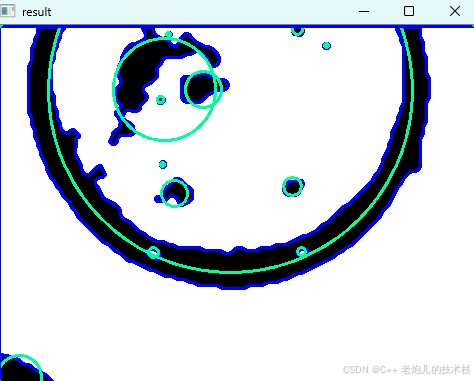

利用最小二乘法找圆心和半径

#include <iostream>

#include <vector>

#include <cmath>

#include <Eigen/Dense> // 需安装Eigen库用于矩阵运算 // 定义点结构

struct Point { double x, y; Point(double x_, double y_) : x(x_), y(y_) {}

}; // 最小二乘法求圆心和半径 …

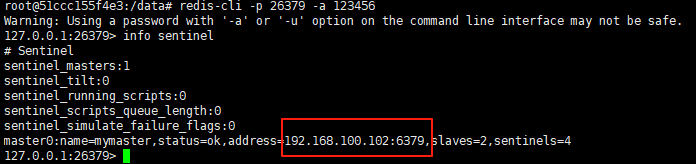

使用docker在3台服务器上搭建基于redis 6.x的一主两从三台均是哨兵模式

一、环境及版本说明

如果服务器已经安装了docker,则忽略此步骤,如果没有安装,则可以按照一下方式安装: 1. 在线安装(有互联网环境): 请看我这篇文章 传送阵>> 点我查看 2. 离线安装(内网环境):请看我这篇文章 传送阵>> 点我查看

说明:假设每台服务器已…

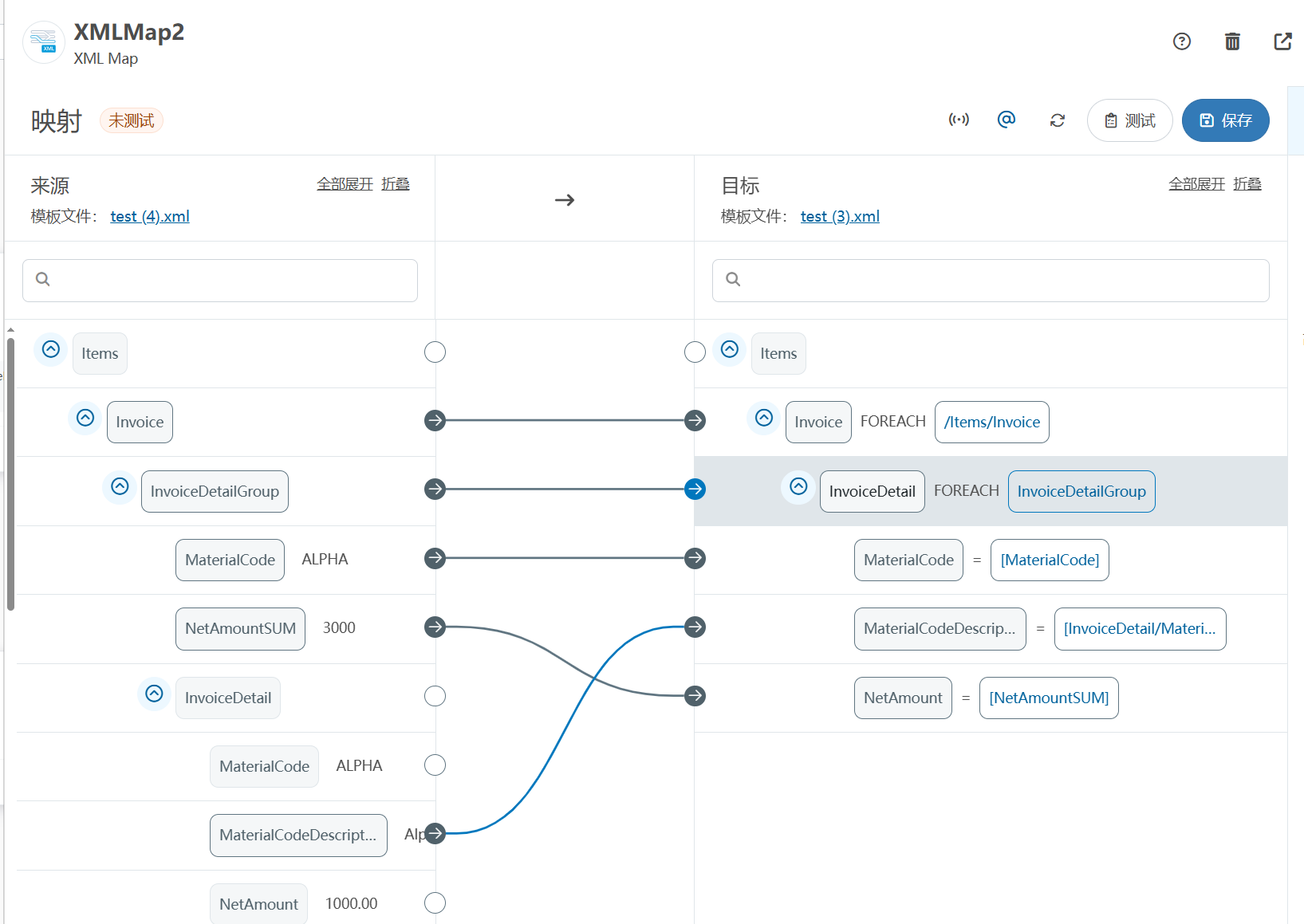

XML Group端口详解

在XML数据映射过程中,经常需要对数据进行分组聚合操作。例如,当处理包含多个物料明细的XML文件时,可能需要将相同物料号的明细归为一组,或对相同物料号的数量进行求和计算。传统实现方式通常需要编写脚本代码,增加了开…

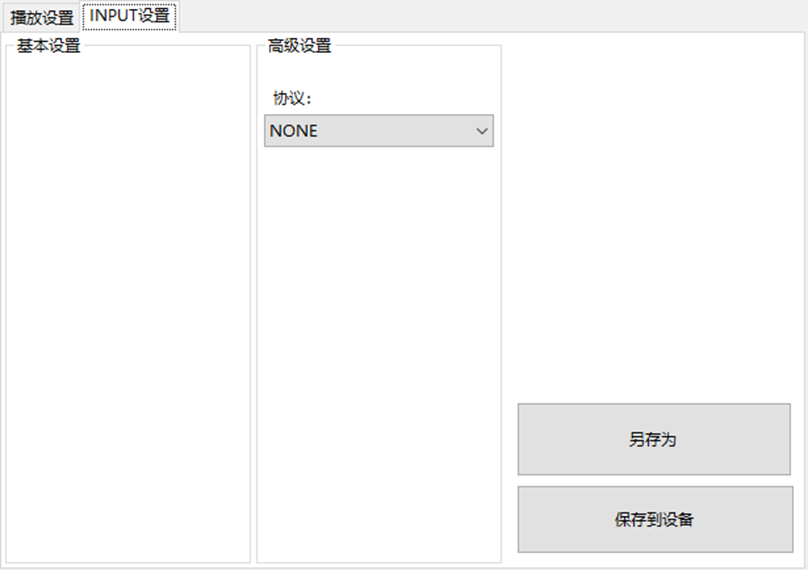

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器的上位机配置操作说明

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器专为工业环境精心打造,完美适配AGV和无人叉车。同时,集成以太网与语音合成技术,为各类高级系统(如MES、调度系统、库位管理、立库等)提供高效便捷的语音交互体验。

L…

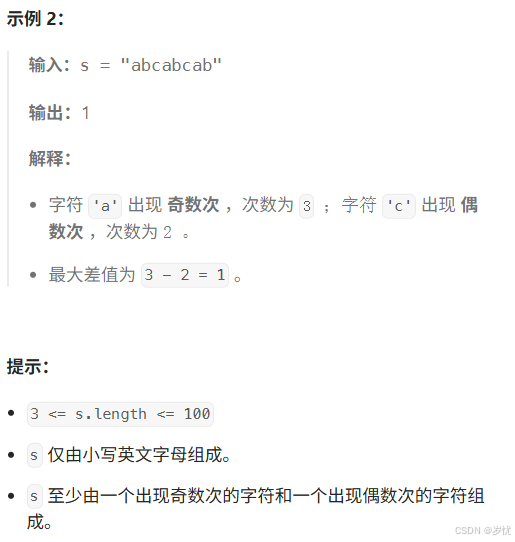

(LeetCode 每日一题) 3442. 奇偶频次间的最大差值 I (哈希、字符串)

题目:3442. 奇偶频次间的最大差值 I 思路 :哈希,时间复杂度0(n)。 用哈希表来记录每个字符串中字符的分布情况,哈希表这里用数组即可实现。

C版本:

class Solution {

public:int maxDifference(string s) {int a[26]…

【大模型RAG】拍照搜题技术架构速览:三层管道、两级检索、兜底大模型

摘要

拍照搜题系统采用“三层管道(多模态 OCR → 语义检索 → 答案渲染)、两级检索(倒排 BM25 向量 HNSW)并以大语言模型兜底”的整体框架: 多模态 OCR 层 将题目图片经过超分、去噪、倾斜校正后,分别用…

【Axure高保真原型】引导弹窗

今天和大家中分享引导弹窗的原型模板,载入页面后,会显示引导弹窗,适用于引导用户使用页面,点击完成后,会显示下一个引导弹窗,直至最后一个引导弹窗完成后进入首页。具体效果可以点击下方视频观看或打开下方…

接口测试中缓存处理策略

在接口测试中,缓存处理策略是一个关键环节,直接影响测试结果的准确性和可靠性。合理的缓存处理策略能够确保测试环境的一致性,避免因缓存数据导致的测试偏差。以下是接口测试中常见的缓存处理策略及其详细说明:

一、缓存处理的核…

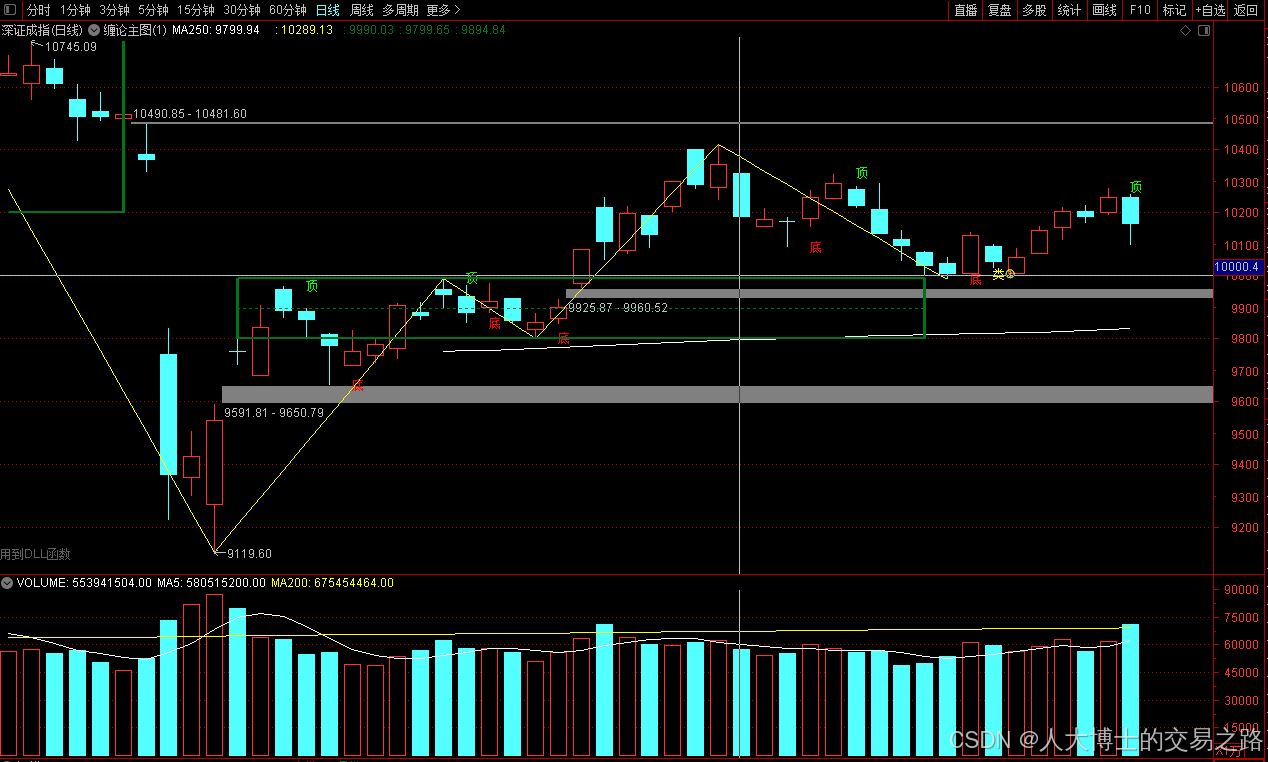

龙虎榜——20250610

上证指数放量收阴线,个股多数下跌,盘中受消息影响大幅波动。 深证指数放量收阴线形成顶分型,指数短线有调整的需求,大概需要一两天。 2025年6月10日龙虎榜行业方向分析 1. 金融科技

代表标的:御银股份、雄帝科技

驱动…

观成科技:隐蔽隧道工具Ligolo-ng加密流量分析

1.工具介绍

Ligolo-ng是一款由go编写的高效隧道工具,该工具基于TUN接口实现其功能,利用反向TCP/TLS连接建立一条隐蔽的通信信道,支持使用Let’s Encrypt自动生成证书。Ligolo-ng的通信隐蔽性体现在其支持多种连接方式,适应复杂网…

铭豹扩展坞 USB转网口 突然无法识别解决方法

当 USB 转网口扩展坞在一台笔记本上无法识别,但在其他电脑上正常工作时,问题通常出在笔记本自身或其与扩展坞的兼容性上。以下是系统化的定位思路和排查步骤,帮助你快速找到故障原因:

背景:

一个M-pard(铭豹)扩展坞的网卡突然无法识别了,扩展出来的三个USB接口正常。…

未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?

编辑:陈萍萍的公主一点人工一点智能 未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?RWM通过双自回归机制有效解决了复合误差、部分可观测性和随机动力学等关键挑战,在不依赖领域特定归纳偏见的条件下实现了卓越的预测准…

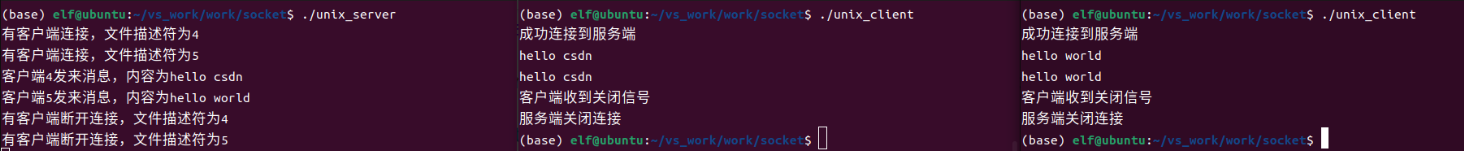

Linux应用开发之网络套接字编程(实例篇)

服务端与客户端单连接

服务端代码

#include <sys/socket.h>

#include <sys/types.h>

#include <netinet/in.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <arpa/inet.h>

#include <pthread.h>

…

华为云AI开发平台ModelArts

华为云ModelArts:重塑AI开发流程的“智能引擎”与“创新加速器”!

在人工智能浪潮席卷全球的2025年,企业拥抱AI的意愿空前高涨,但技术门槛高、流程复杂、资源投入巨大的现实,却让许多创新构想止步于实验室。数据科学家…

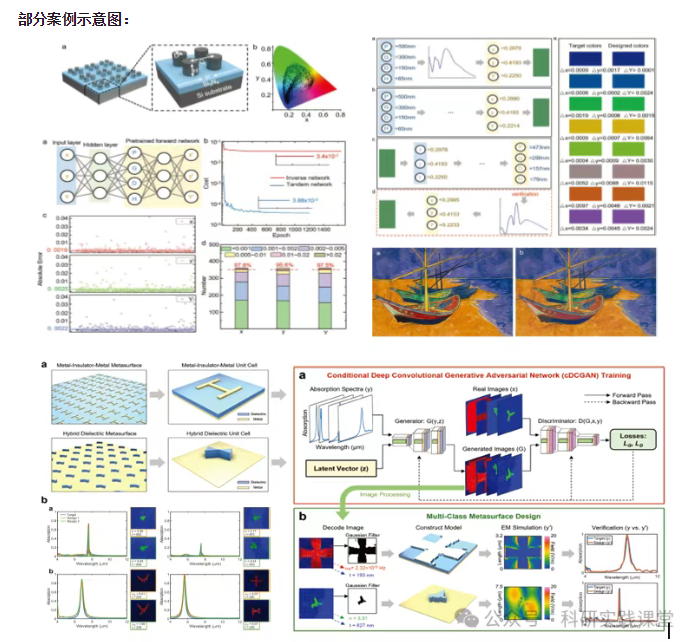

深度学习在微纳光子学中的应用

深度学习在微纳光子学中的主要应用方向

深度学习与微纳光子学的结合主要集中在以下几个方向:

逆向设计 通过神经网络快速预测微纳结构的光学响应,替代传统耗时的数值模拟方法。例如设计超表面、光子晶体等结构。

特征提取与优化 从复杂的光学数据中自…