GNN in Practice: A Hands-On Guide to the Cora, Citeseer, and PubMed Citation Datasets (with Code)

news2026/3/27 23:46:02

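GNN in Practice: A Deep Dive into the Cora, Citeseer, and PubMed Citation Datasets and Their Engineering Use

Introduction: why these three datasets became the gold standard of GNN research

Cora, Citeseer, and PubMed are the reference benchmarks of graph neural network (GNN) research. That status is no accident: these citation networks balance real-world complexity against experimental control, which makes them a convenient test bed for validating algorithms.

Cora contains 2,708 machine learning papers split into 7 subfields. Citeseer contains 3,312 computer science papers covering 6 areas. PubMed focuses on biomedicine, with 19,717 diabetes-related papers in 3 classes. The citations between papers form a natural graph: nodes are papers, edges are citations, node features are preprocessed word vectors, and labels are subject categories. This kind of structured knowledge network is exactly where GNNs do well.

Core comparison:

| Dimension | Cora | Citeseer | PubMed |
|---|---|---|---|
| Papers | 2,708 | 3,312 | 19,717 |
| Classes | 7 | 6 | 3 |
| Feature dimension | 1,433 | 3,703 | 500 |
| Average node degree | 3.90 | 2.74 | 4.50 |
| Characteristics | Fine-grained classes | High feature dimension | Large, class-imbalanced |

Average degree here is the directed edge count reported by PyG's Planetoid loader divided by the node count (10,556 / 2,708, 9,104 / 3,327, and 88,648 / 19,717). Note that the Planetoid version of Citeseer reports 3,327 nodes rather than 3,312.

For anyone new to GNNs, knowing how to use these three datasets efficiently is the quickest way into graph learning. This article walks through their internal structure, shows the full pipeline from data download to model training, and shares engineering tips for practical problems that the original papers and textbooks rarely cover.

1. Environment Setup and Data Acquisition

1.1 Choosing a toolchain: PyG vs DGL

PyTorch Geometric (PyG) and Deep Graph Library (DGL) are currently the two most widely used GNN frameworks, and they handle data differently:

```bash
# PyG install (install the matching PyTorch version first)
pip install torch-scatter torch-sparse torch-cluster torch-spline-conv -f https://data.pyg.org/whl/torch-1.10.0+cu113.html
pip install torch-geometric

# DGL install (CUDA 11.3 as an example)
pip install dgl-cu113 dglgo -f https://data.dgl.ai/wheels/repo.html
```

Framework comparison:

| Feature | PyG | DGL |
|---|---|---|
| Data loading | Built-in Dataset classes | General-purpose DGLGraph object |
| Message-passing paradigm | Message, aggregate, update stages | Send and receive primitives |
| Sparse matrix handling | Dedicated COO format | Several sparse formats |
| Multi-GPU support | Via DataParallel | Native DistributedDataParallel |
| Community | Many paper reproductions | Wide industry use |

1.2 Downloading and preprocessing the data

All three datasets are available in PyG through one interface, although the raw data needs some special handling:

```python
from torch_geometric.datasets import Planetoid

# Download and unpack the dataset automatically
dataset = Planetoid(root='/tmp/Cora', name='Cora')

# Inspect the dataset
print(f'Dataset: {dataset}')
print(f'Number of graphs: {len(dataset)}')
print(f'Number of classes: {dataset.num_classes}')
print(f'Node feature dimension: {dataset.num_node_features}')

# Take the first (and only) graph
data = dataset[0]
print('\nGraph structure:')
print('Nodes:', data.num_nodes)
print('Edges:', data.num_edges)
print('Training nodes:', int(data.train_mask.sum()))
print('Validation nodes:', int(data.val_mask.sum()))
print('Test nodes:', int(data.test_mask.sum()))
```

Common download problems and fixes:

- Certificate verification fails: add `import ssl; ssl._create_default_https_context = ssl._create_unverified_context` before the loading code. This disables certificate checks for the whole process, so use it only as a temporary workaround.
- Connection times out: download the matching files manually from https://github.com/kimiyoung/planetoid/raw/master/data and put them in `/tmp/Cora/Cora/raw/` (PyG looks in `<root>/<name>/raw`).
- Feature matrix normalization: use `F.normalize(data.x, p=1, dim=1)` (with `import torch.nn.functional as F`) to L1-normalize the bag-of-words features row by row.

Note: PubMed's raw files are the largest of the three, so its download takes the longest and a resumable download tool helps on a slow connection. The first load runs a one-time preprocessing pass and caches the result, so later loads are fast.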
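The row-wise L1 normalization mentioned in the download notes above can be sketched in plain Python to show what it does to a binary bag-of-words matrix. This is a minimal illustration, not PyG or PyTorch code: the helper name `l1_normalize_rows` and the toy matrix are assumptions made for the example, and the `eps` guard mirrors the way a zero row is kept at zero instead of dividing by zero.

```python
def l1_normalize_rows(rows, eps=1e-12):
    """Scale each row so its absolute values sum to 1; all-zero rows stay zero."""
    normalized = []
    for row in rows:
        total = sum(abs(v) for v in row)
        denom = max(total, eps)  # avoid division by zero for empty documents
        normalized.append([v / denom for v in row])
    return normalized


# Hypothetical toy bag-of-words matrix: 3 papers, 4 vocabulary words
bag_of_words = [
    [1, 0, 1, 0],  # paper with two words present
    [0, 0, 0, 0],  # paper with no known words
    [1, 1, 1, 1],  # paper with all four words
]
features = l1_normalize_rows(bag_of_words)
for row in features:
    print(row)
```

After normalization every non-empty row sums to 1, so papers with many vocabulary hits no longer dominate neighborhood aggregation purely because of their length.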
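2. Data Deep Dive and Feature Engineering

2.1 Anatomy of the datasets

All three datasets are citation networks, but they differ in the details.

Cora:

- `.content` file format: `paper_id word_attributes class_label`
- `.cites` file format: `cited_paper_id citing_paper_id`
- Feature processing: after stop-word removal, 1,433 frequent words are kept and each paper is encoded as a binary bag of words

```python
# Inspect the label distribution of Cora
import numpy as np
import matplotlib.pyplot as plt

labels = data.y.cpu().numpy()
unique, counts = np.unique(labels, return_counts=True)

plt.figure(figsize=(10, 5))
# NOTE: this index-to-name order follows the original article; the Planetoid
# label indices may be ordered differently, so verify before relying on it.
plt.bar(unique, counts, tick_label=[
    'Case Based', 'Genetic Algorithms', 'Neural Networks',
    'Probabilistic Methods', 'Reinforcement Learning',
    'Rule Learning', 'Theory'
])
plt.title('Cora class distribution')
plt.xlabel('Research area')
plt.ylabel('Number of papers')
plt.show()
```

Special handling for Citeseer:

- Some nodes are isolated, with no citation edges (about 5% by the original estimate; check with `data.has_isolated_nodes()`). Adding self-loops gives them at least their own features to aggregate. `GCNConv` already adds self-loops by default, so the explicit version below matters mainly for layers that do not:

```python
import torch

# Append one (i, i) edge per node
self_loops = torch.arange(data.num_nodes).repeat(2, 1)
edge_index = torch.cat([data.edge_index, self_loops], dim=1)
```

- Some paper titles contain non-ASCII characters, so specify the encoding when reading the raw file:

```python
with open('citeseer.content', 'r', encoding='latin1') as f:
    content = f.readlines()
```

2.2 Feature enhancement techniques

The raw bag-of-words features may not capture the deeper semantics of a paper. Three options are worth considering.

TF-IDF weighting:

```python
import torch
from sklearn.feature_extraction.text import TfidfTransformer

tfidf = TfidfTransformer(norm='l2')
# fit_transform returns a sparse matrix, so densify it before building the tensor
data.x = torch.FloatTensor(tfidf.fit_transform(data.x.cpu().numpy()).toarray())
```

Meta-path features: enrich the features by building meta-paths such as paper-author-paper or paper-venue-paper.

```python
import numpy as np

# Sketch only: needs real author/venue information, which the Planetoid files do not ship.
# Assumes paper IDs are integers 0..N-1.
def build_meta_path_features(paper_author_dict):
    num_papers = len(paper_author_dict)
    coauthor_adj = np.zeros((num_papers, num_papers))
    for paper1, authors1 in paper_author_dict.items():
        for paper2, authors2 in paper_author_dict.items():
            # Connect two papers when they share at least one author
            if len(set(authors1) & set(authors2)) > 0:
                coauthor_adj[paper1][paper2] = 1
    return coauthor_adj
```

Structural feature injection:

```python
import torch
from torch_geometric.utils import degree, scatter

src, dst = data.edge_index
num_nodes = data.num_nodes

# Add node degree as an extra feature (num_nodes keeps isolated nodes in range)
deg = degree(src, num_nodes=num_nodes, dtype=torch.float)
data.x = torch.cat([data.x, deg.view(-1, 1)], dim=1)

# Add a PageRank score computed by power iteration with damping
damping = 0.85
out_deg = deg.clamp(min=1)  # out-degree of the source node; avoids division by zero
pagerank = torch.ones(num_nodes) / num_nodes
for _ in range(20):
    # Each node splits its current score evenly across its outgoing edges
    msg = pagerank[src] / out_deg[src]
    pagerank = (1 - damping) / num_nodes + damping * scatter(
        msg, dst, dim=0, dim_size=num_nodes, reduce='sum')
data.x = torch.cat([data.x, pagerank.view(-1, 1)], dim=1)
```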
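To make the PageRank feature from the structural-feature step easier to reason about, here is a dependency-free sketch of the same power iteration on a toy citation graph. The function name `pagerank`, the damping value 0.85, and the four-node edge list are assumptions chosen for illustration; they do not come from the datasets above.

```python
def pagerank(edges, num_nodes, damping=0.85, iterations=50):
    """Power-iteration PageRank over a list of directed (src, dst) edges."""
    out_degree = [0] * num_nodes
    for src, _ in edges:
        out_degree[src] += 1

    rank = [1.0 / num_nodes] * num_nodes
    for _ in range(iterations):
        # Teleport term: every node receives the same base amount
        new_rank = [(1.0 - damping) / num_nodes] * num_nodes
        # Score held by nodes with no outgoing edges is spread uniformly
        dangling = sum(rank[n] for n in range(num_nodes) if out_degree[n] == 0)
        for src, dst in edges:
            new_rank[dst] += damping * rank[src] / out_degree[src]
        share = damping * dangling / num_nodes
        rank = [r + share for r in new_rank]
    return rank


# Hypothetical toy graph: node 1 is cited by everyone, node 3 is cited by nobody
edges = [(0, 1), (1, 0), (1, 2), (2, 1), (3, 1)]
scores = pagerank(edges, num_nodes=4)
print([round(s, 4) for s in scores])
```

The key detail is that a node divides its score by its own out-degree before sending it along each edge, which keeps the scores summing to 1 across iterations.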
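3. Model Building and Training Techniques

3.1 A baseline model

Taking GCN as the example, here is an implementation in PyG:

```python
import torch
import torch.nn.functional as F
from torch_geometric.nn import GCNConv

class GCN(torch.nn.Module):
    def __init__(self, num_features, hidden_dim, num_classes):
        super().__init__()
        self.conv1 = GCNConv(num_features, hidden_dim)
        self.conv2 = GCNConv(hidden_dim, num_classes)

    def forward(self, data):
        x, edge_index = data.x, data.edge_index
        x = self.conv1(x, edge_index)
        x = F.relu(x)
        x = F.dropout(x, p=0.5, training=self.training)
        x = self.conv2(x, edge_index)
        return F.log_softmax(x, dim=1)
```

Reference hyperparameters:

```python
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = GCN(
    num_features=dataset.num_features,
    hidden_dim=16,
    num_classes=dataset.num_classes
).to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)
data = data.to(device)
```

3.2 Tuning the training loop

The standard training loop needs a few adjustments for graph data: the loss is computed only on masked training nodes, and early stopping tracks validation accuracy.

```python
def train(model, data, optimizer):
    model.train()
    optimizer.zero_grad()
    out = model(data)
    loss = F.nll_loss(out[data.train_mask], data.y[data.train_mask])
    loss.backward()
    optimizer.step()
    return loss.item()

@torch.no_grad()
def test(model, data):
    model.eval()
    out = model(data)
    pred = out.argmax(dim=1)
    accs = []
    for mask in [data.train_mask, data.val_mask, data.test_mask]:
        correct = pred[mask] == data.y[mask]
        accs.append(int(correct.sum()) / int(mask.sum()))
    return accs

best_val_acc = 0
patience = 20
current_patience = 0

for epoch in range(1, 501):
    loss = train(model, data, optimizer)
    train_acc, val_acc, test_acc = test(model, data)

    if val_acc > best_val_acc:
        best_val_acc = val_acc
        current_patience = 0
        torch.save(model.state_dict(), 'best_model.pt')
    else:
        current_patience += 1
        if current_patience >= patience:
            print(f'Early stopping at epoch {epoch}')
            break

    if epoch % 20 == 0:
        print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}, '
              f'Train: {train_acc:.4f}, Val: {val_acc:.4f}, '
              f'Test: {test_acc:.4f}')

# Load the best checkpoint
model.load_state_dict(torch.load('best_model.pt'))
final_train, final_val, final_test = test(model, data)
print(f'\nFinal results: Train: {final_train:.4f}, '
      f'Val: {final_val:.4f}, Test: {final_test:.4f}')
```

Performance tips:

Neighbor sampling: for larger datasets such as PubMed, use `NeighborSampler` for mini-batch training (newer PyG releases recommend `NeighborLoader` for the same purpose):

```python
from torch_geometric.loader import NeighborSampler

train_loader = NeighborSampler(data.edge_index, node_idx=data.train_mask,
                               sizes=[10, 5], batch_size=256, shuffle=True)
```

Label smoothing: softens the one-hot targets to curb over-confident predictions, which can help on imbalanced data:

```python
def smooth_labels(labels, alpha=0.1):
    num_classes = int(labels.max()) + 1
    one_hot = F.one_hot(labels, num_classes).float()
    # The result is a soft target, so pair it with a loss that accepts
    # probability targets rather than F.nll_loss on class indices.
    return (1 - alpha) * one_hot + alpha / num_classes
```

Graph augmentation: edge dropout increases robustness:

```python
def random_edge_dropout(edge_index, p=0.2):
    # Keep each edge with probability 1 - p
    mask = torch.rand(edge_index.size(1), device=edge_index.device) > p
    return edge_index[:, mask]
```

4. Common Problems and Solutions

4.1 Typical errors and how to debug them

Problem 1: dimension mismatch

```
RuntimeError: size mismatch, m1: [2708 x 1433], m2: [16 x 64]
```

Checklist: make sure `num_features` matches the input dimension (remember to update it after appending extra features to `data.x`) and that hidden dimensions agree between consecutive layers.

Problem 2: out of memory

```
CUDA out of memory. Tried to allocate...
```

Solutions: train in mini-batches with `NeighborSampler`, reduce the hidden dimension, or enable gradient checkpointing:

```python
from torch.utils.checkpoint import checkpoint

x = checkpoint(self.conv1, x, edge_index)
```

Problem 3: exploding or vanishing gradients

Diagnosis:

```python
for name, param in model.named_parameters():
    if param.grad is not None:
        print(name, param.grad.data.norm())
```

Countermeasures: add gradient clipping with `torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)`, or use residual connections:

```python
class ResGCN(torch.nn.Module):
    def __init__(self, num_features, hidden_dim, num_classes):
        super().__init__()
        self.conv1 = GCNConv(num_features, hidden_dim)
        self.conv2 = GCNConv(hidden_dim, hidden_dim)
        self.conv3 = GCNConv(hidden_dim, num_classes)

    def forward(self, data):
        x0 = data.x
        x1 = F.relu(self.conv1(x0, data.edge_index))
        x2 = F.relu(self.conv2(x1, data.edge_index) + x1)  # residual connection
        x3 = self.conv3(x2, data.edge_index)
        return F.log_softmax(x3, dim=1)
```

4.2 A performance tuning roadmap

- Establish the baseline with a vanilla GCN: Cora 81.5%, Citeseer 71.2%, PubMed 79.0% (the GCN paper by Kipf and Welling reports 70.3% for Citeseer)
- Add self-attention: +1.5 to 3%
- Add residual connections: +0.5 to 1.5%

Advanced optimization strategies:

Curriculum learning: train on easy samples first and raise the difficulty gradually. The sketch below only grows a random subset of the training nodes over time; a real curriculum would rank nodes by some difficulty score.

```python
def get_curriculum_mask(data, epoch, total_epochs):
    # Ramp the fraction of training nodes used from 0 to 1 over the first 30% of epochs
    ratio = min(epoch / (total_epochs * 0.3), 1.0)
    selected = torch.rand(data.num_nodes, device=data.train_mask.device) < ratio
    return selected & data.train_mask
```

Adversarial training: improves robustness:

```python
def adversarial_perturb(model, data, epsilon=0.01):
    data.x.requires_grad_(True)
    out = model(data)
    loss = F.nll_loss(out[data.train_mask], data.y[data.train_mask])
    loss.backward()
    grad = data.x.grad
    # Scale the gradient direction to length epsilon (small constant avoids division by zero)
    perturb = epsilon * grad / (torch.norm(grad, p=2) + 1e-12)
    return perturb.detach()
```

Model explanation tools: GNNExplainer identifies important nodes and edges:

```python
# Older PyG API; in recent releases the explainer lives under torch_geometric.explain.
# It calls model(x, edge_index), so the model's forward must accept those
# arguments rather than a single `data` object as in the GCN class above.
from torch_geometric.nn import GNNExplainer

explainer = GNNExplainer(model, epochs=200)
node_idx = 10  # index of the node to explain
feat_mask, edge_mask = explainer.explain_node(node_idx, data.x, data.edge_index)
```

5. Beyond the Baseline: Directions to Explore

5.1 Heterogeneous graphs

Real citation networks contain several node types such as authors and venues, which a heterogeneous GNN can model. The Planetoid datasets do not include these node types, so the code below assumes a `HeteroData` object with `paper` and `author` nodes:

```python
from torch_geometric.nn import HeteroConv, GCNConv, SAGEConv

class HeteroGNN(torch.nn.Module):
    def __init__(self, metadata, hidden_dim):
        super().__init__()
        # -1 lets PyG infer input sizes lazily on the first forward pass
        self.conv1 = HeteroConv({
            ('paper', 'cites', 'paper'): GCNConv(-1, hidden_dim),
            ('paper', 'written_by', 'author'): SAGEConv((-1, -1), hidden_dim),
            ('author', 'writes', 'paper'): SAGEConv((-1, -1), hidden_dim)
        })
        self.conv2 = HeteroConv({
            ('paper', 'cites', 'paper'): GCNConv(-1, hidden_dim),
            ('paper', 'written_by', 'author'): SAGEConv((-1, -1), hidden_dim),
            ('author', 'writes', 'paper'): SAGEConv((-1, -1), hidden_dim)
        })

    def forward(self, x_dict, edge_index_dict):
        x_dict = self.conv1(x_dict, edge_index_dict)
        x_dict = {key: F.leaky_relu(x) for key, x in x_dict.items()}
        x_dict = self.conv2(x_dict, edge_index_dict)
        return x_dict['paper']  # return the paper node representations
```

5.2 Self-supervised learning

When labeled data is scarce, self-supervised pretraining can improve performance noticeably:

```python
from torch_geometric.nn import Node2Vec

# Node-level pretraining
def pretrain_node2vec(data, embedding_dim=128):
    device = 'cuda' if torch.cuda.is_available() else 'cpu'
    model = Node2Vec(data.edge_index, embedding_dim=embedding_dim,
                     walk_length=20, context_size=10,
                     walks_per_node=10).to(device)
    loader = model.loader(batch_size=128, shuffle=True)
    optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

    for epoch in range(1, 101):
        model.train()
        total_loss = 0
        for pos_rw, neg_rw in loader:
            optimizer.zero_grad()
            loss = model.loss(pos_rw.to(device), neg_rw.to(device))
            loss.backward()
            optimizer.step()
            total_loss += loss.item()
        if epoch % 10 == 0:
            print(f'Epoch: {epoch:02d}, Loss: {total_loss / len(loader):.4f}')
    return model.embedding.weight.detach()

# Use the pretrained embeddings as extra input features
pretrain_emb = pretrain_node2vec(data).to(data.x.device)
data.x = torch.cat([data.x, pretrain_emb], dim=1)
```

5.3 Dynamic graph modeling

Citation networks evolve over time, and a dynamic GNN can capture that change. `TGCN` is provided by the separate PyTorch Geometric Temporal package rather than by `torch_geometric.nn`, and it processes one graph snapshot per call while carrying a hidden state forward:

```python
from torch_geometric_temporal.nn.recurrent import TGCN

class DynamicGNN(torch.nn.Module):
    def __init__(self, num_features, hidden_dim, num_classes):
        super().__init__()
        self.tgcn = TGCN(num_features, hidden_dim)
        self.linear = torch.nn.Linear(hidden_dim, num_classes)

    def forward(self, x, edge_index, edge_weight=None, h=None):
        # h is the hidden state from the previous snapshot (None for the first one)
        h = self.tgcn(x, edge_index, edge_weight, h)
        return F.log_softmax(self.linear(h), dim=1), h

# Assume every edge has a timestamp (random here, purely for illustration)
timestamps = torch.randint(0, 10, (data.edge_index.size(1),))
model = DynamicGNN(data.num_features, 16, dataset.num_classes)

h = None
for t in range(10):
    # Snapshot t contains every edge that exists up to time t
    snapshot = data.edge_index[:, timestamps <= t]
    out, h = model(data.x, snapshot, h=h)
```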
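The dynamic-graph section assumes that every edge carries a timestamp. Before any temporal model can use that information, the edge list usually has to be turned into an ordered sequence of snapshots. The following is a minimal plain-Python sketch of that bucketing step; the function name `build_snapshots`, the `cumulative` flag, and the toy years are illustrative assumptions and not part of any library.

```python
from collections import defaultdict


def build_snapshots(edges, timestamps, cumulative=True):
    """Group (src, dst) edges by timestamp into time-ordered snapshots.

    With cumulative=True each snapshot also contains all earlier edges,
    which matches a citation network where citations never disappear.
    """
    buckets = defaultdict(list)
    for edge, t in zip(edges, timestamps):
        buckets[t].append(edge)

    snapshots = {}
    seen = []
    for t in sorted(buckets):
        if cumulative:
            seen = seen + buckets[t]
            snapshots[t] = list(seen)
        else:
            snapshots[t] = list(buckets[t])
    return snapshots


# Hypothetical toy data: four citation edges with publication years
edges = [(0, 1), (1, 2), (2, 0), (3, 1)]
timestamps = [2001, 2001, 2003, 2005]
snapshots = build_snapshots(edges, timestamps)
for year, snapshot_edges in snapshots.items():
    print(year, snapshot_edges)
```

Cumulative snapshots suit citation data because a citation, once made, stays in the graph; the non-cumulative mode is closer to interaction graphs where only the events of each time window matter.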


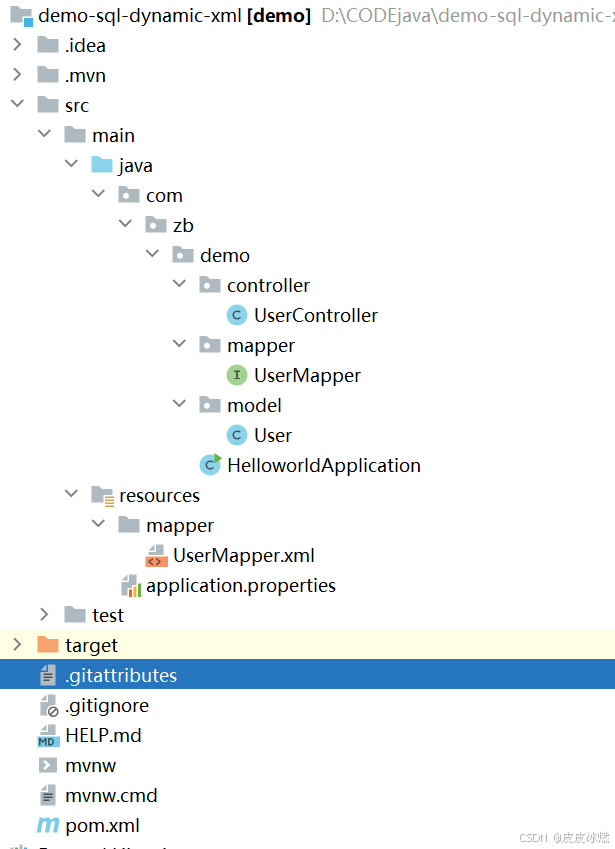

SpringBoot-17-MyBatis动态SQL标签之常用标签

文章目录 1 代码1.1 实体User.java1.2 接口UserMapper.java1.3 映射UserMapper.xml1.3.1 标签if1.3.2 标签if和where1.3.3 标签choose和when和otherwise1.4 UserController.java2 常用动态SQL标签2.1 标签set2.1.1 UserMapper.java2.1.2 UserMapper.xml2.1.3 UserController.ja…

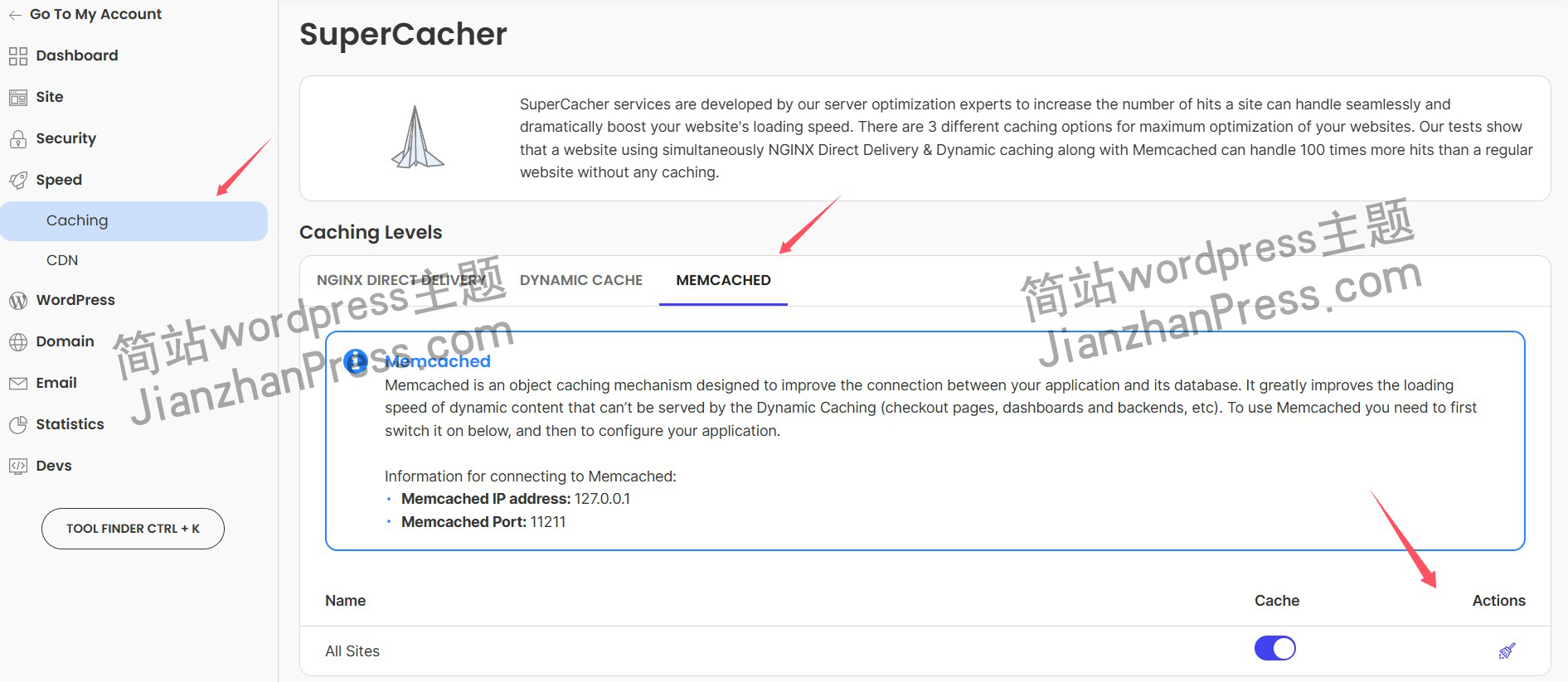

wordpress后台更新后 前端没变化的解决方法

使用siteground主机的wordpress网站,会出现更新了网站内容和修改了php模板文件、js文件、css文件、图片文件后,网站没有变化的情况。

不熟悉siteground主机的新手,遇到这个问题,就很抓狂,明明是哪都没操作错误&#x…

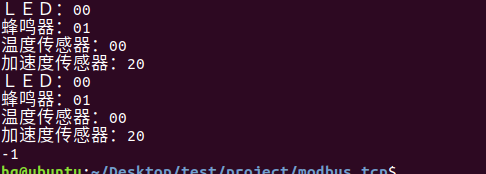

网络编程(Modbus进阶)

思维导图 Modbus RTU(先学一点理论)

概念 Modbus RTU 是工业自动化领域 最广泛应用的串行通信协议,由 Modicon 公司(现施耐德电气)于 1979 年推出。它以 高效率、强健性、易实现的特点成为工业控制系统的通信标准。 包…

UE5 学习系列(二)用户操作界面及介绍

这篇博客是 UE5 学习系列博客的第二篇,在第一篇的基础上展开这篇内容。博客参考的 B 站视频资料和第一篇的链接如下:

【Note】:如果你已经完成安装等操作,可以只执行第一篇博客中 2. 新建一个空白游戏项目 章节操作,重…

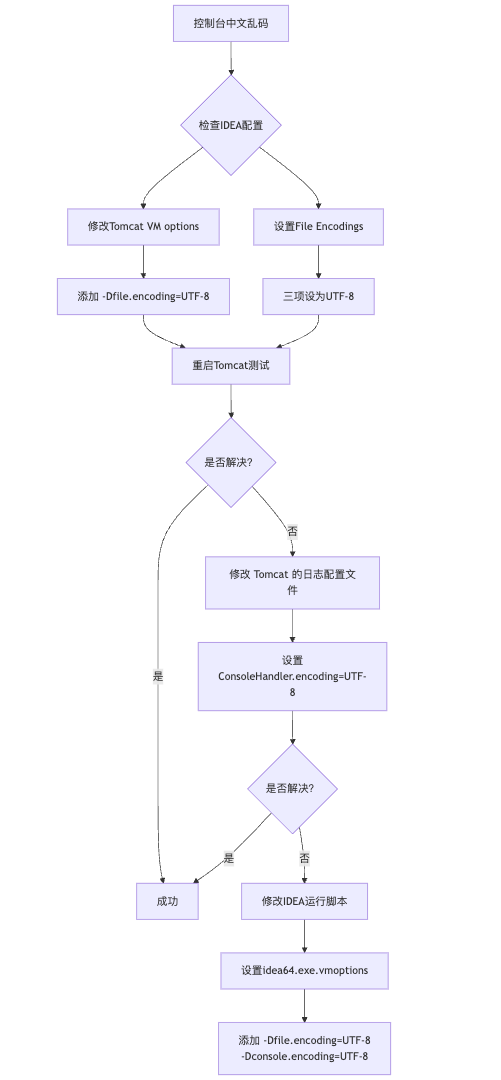

IDEA运行Tomcat出现乱码问题解决汇总

最近正值期末周,有很多同学在写期末Java web作业时,运行tomcat出现乱码问题,经过多次解决与研究,我做了如下整理:

原因:

IDEA本身编码与tomcat的编码与Windows编码不同导致,Windows 系统控制台…

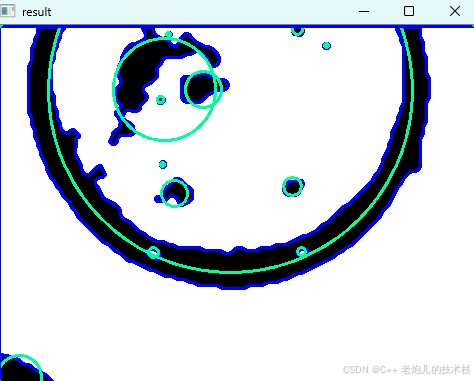

利用最小二乘法找圆心和半径

#include <iostream>

#include <vector>

#include <cmath>

#include <Eigen/Dense> // 需安装Eigen库用于矩阵运算 // 定义点结构

struct Point { double x, y; Point(double x_, double y_) : x(x_), y(y_) {}

}; // 最小二乘法求圆心和半径 …

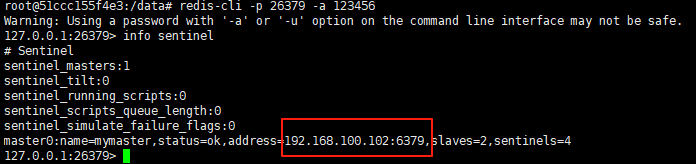

使用docker在3台服务器上搭建基于redis 6.x的一主两从三台均是哨兵模式

一、环境及版本说明

如果服务器已经安装了docker,则忽略此步骤,如果没有安装,则可以按照一下方式安装: 1. 在线安装(有互联网环境): 请看我这篇文章 传送阵>> 点我查看 2. 离线安装(内网环境):请看我这篇文章 传送阵>> 点我查看

说明:假设每台服务器已…

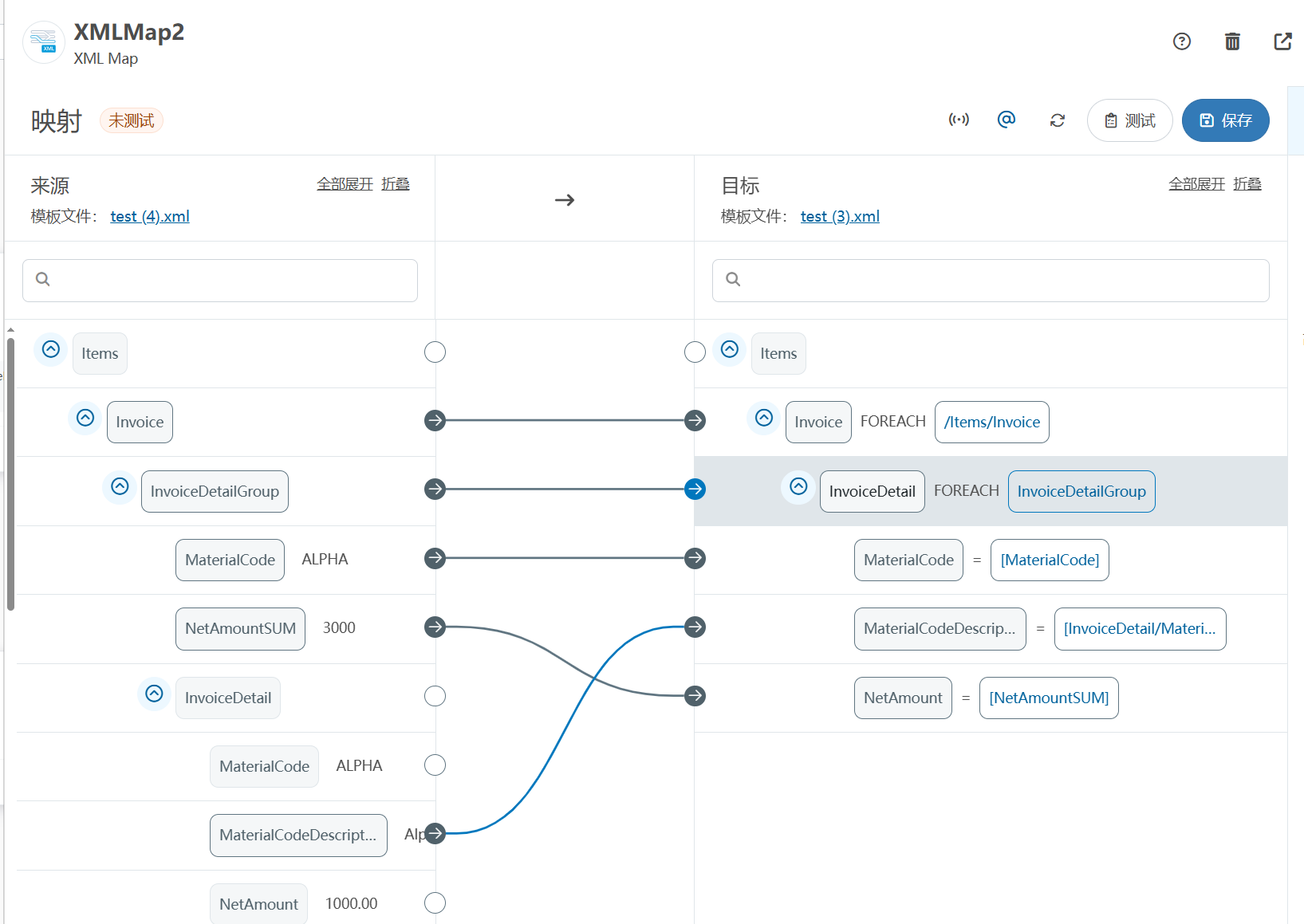

XML Group端口详解

在XML数据映射过程中,经常需要对数据进行分组聚合操作。例如,当处理包含多个物料明细的XML文件时,可能需要将相同物料号的明细归为一组,或对相同物料号的数量进行求和计算。传统实现方式通常需要编写脚本代码,增加了开…

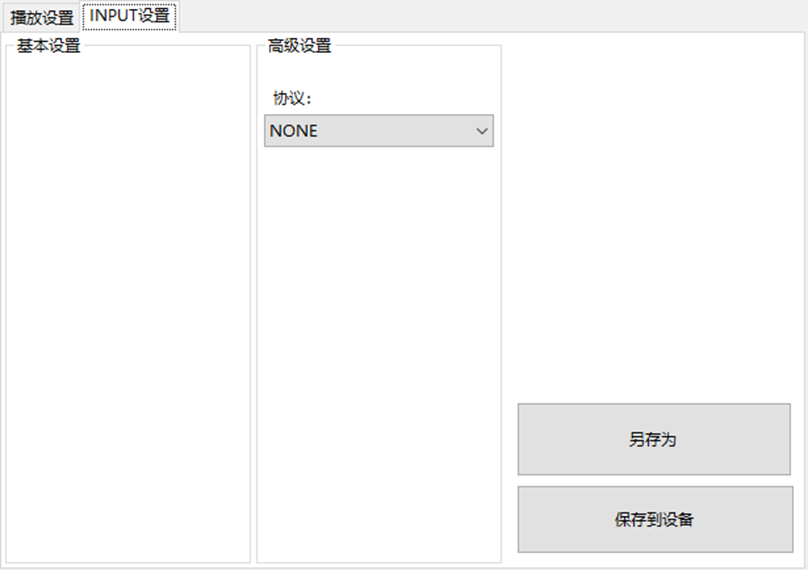

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器的上位机配置操作说明

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器专为工业环境精心打造,完美适配AGV和无人叉车。同时,集成以太网与语音合成技术,为各类高级系统(如MES、调度系统、库位管理、立库等)提供高效便捷的语音交互体验。

L…

(LeetCode 每日一题) 3442. 奇偶频次间的最大差值 I (哈希、字符串)

题目:3442. 奇偶频次间的最大差值 I 思路 :哈希,时间复杂度0(n)。 用哈希表来记录每个字符串中字符的分布情况,哈希表这里用数组即可实现。

C版本:

class Solution {

public:int maxDifference(string s) {int a[26]…

【大模型RAG】拍照搜题技术架构速览:三层管道、两级检索、兜底大模型

摘要

拍照搜题系统采用“三层管道(多模态 OCR → 语义检索 → 答案渲染)、两级检索(倒排 BM25 向量 HNSW)并以大语言模型兜底”的整体框架: 多模态 OCR 层 将题目图片经过超分、去噪、倾斜校正后,分别用…

【Axure高保真原型】引导弹窗

今天和大家中分享引导弹窗的原型模板,载入页面后,会显示引导弹窗,适用于引导用户使用页面,点击完成后,会显示下一个引导弹窗,直至最后一个引导弹窗完成后进入首页。具体效果可以点击下方视频观看或打开下方…

接口测试中缓存处理策略

在接口测试中,缓存处理策略是一个关键环节,直接影响测试结果的准确性和可靠性。合理的缓存处理策略能够确保测试环境的一致性,避免因缓存数据导致的测试偏差。以下是接口测试中常见的缓存处理策略及其详细说明:

一、缓存处理的核…

龙虎榜——20250610

上证指数放量收阴线,个股多数下跌,盘中受消息影响大幅波动。 深证指数放量收阴线形成顶分型,指数短线有调整的需求,大概需要一两天。 2025年6月10日龙虎榜行业方向分析 1. 金融科技

代表标的:御银股份、雄帝科技

驱动…

观成科技:隐蔽隧道工具Ligolo-ng加密流量分析

1.工具介绍

Ligolo-ng是一款由go编写的高效隧道工具,该工具基于TUN接口实现其功能,利用反向TCP/TLS连接建立一条隐蔽的通信信道,支持使用Let’s Encrypt自动生成证书。Ligolo-ng的通信隐蔽性体现在其支持多种连接方式,适应复杂网…

铭豹扩展坞 USB转网口 突然无法识别解决方法

当 USB 转网口扩展坞在一台笔记本上无法识别,但在其他电脑上正常工作时,问题通常出在笔记本自身或其与扩展坞的兼容性上。以下是系统化的定位思路和排查步骤,帮助你快速找到故障原因:

背景:

一个M-pard(铭豹)扩展坞的网卡突然无法识别了,扩展出来的三个USB接口正常。…

未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?

编辑:陈萍萍的公主一点人工一点智能 未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?RWM通过双自回归机制有效解决了复合误差、部分可观测性和随机动力学等关键挑战,在不依赖领域特定归纳偏见的条件下实现了卓越的预测准…

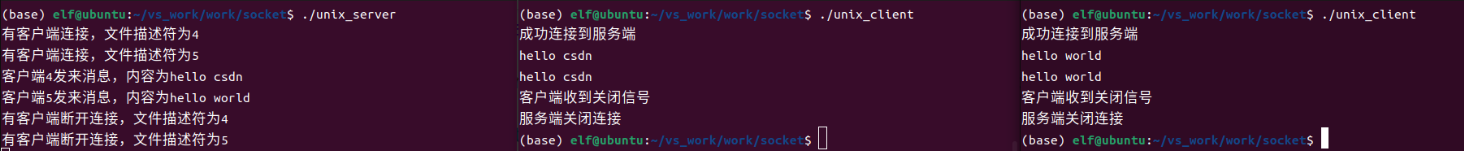

Linux应用开发之网络套接字编程(实例篇)

服务端与客户端单连接

服务端代码

#include <sys/socket.h>

#include <sys/types.h>

#include <netinet/in.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <arpa/inet.h>

#include <pthread.h>

…

华为云AI开发平台ModelArts

华为云ModelArts:重塑AI开发流程的“智能引擎”与“创新加速器”!

在人工智能浪潮席卷全球的2025年,企业拥抱AI的意愿空前高涨,但技术门槛高、流程复杂、资源投入巨大的现实,却让许多创新构想止步于实验室。数据科学家…

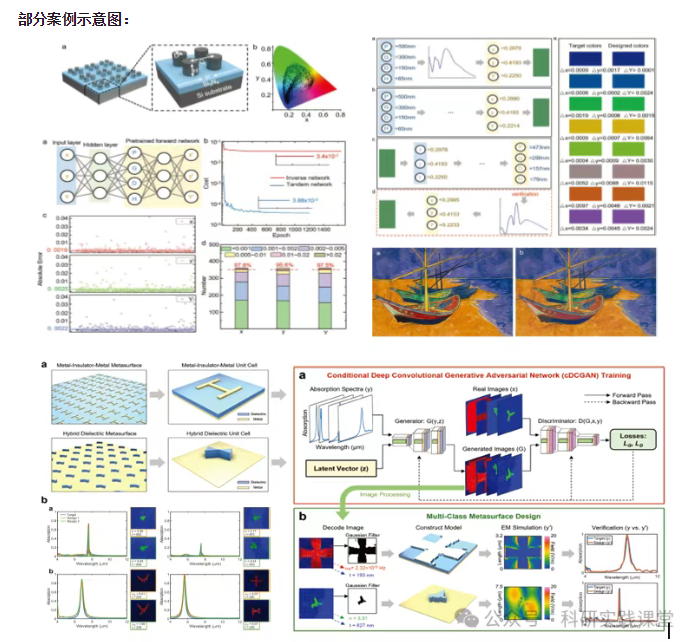

深度学习在微纳光子学中的应用

深度学习在微纳光子学中的主要应用方向

深度学习与微纳光子学的结合主要集中在以下几个方向:

逆向设计 通过神经网络快速预测微纳结构的光学响应,替代传统耗时的数值模拟方法。例如设计超表面、光子晶体等结构。

特征提取与优化 从复杂的光学数据中自…