Building a Big Data Analytics Platform on Kubernetes: A Complete Spark on K8s Configuration Guide (with a Flink Integration Approach)

2026/3/28 11:14:22

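Kubernetes Big Data Platform in Practice: Containerized Deployment and Optimization of Spark and Flink

Containerized deployment of big data processing frameworks has become a common baseline for enterprise data platforms. This article walks through building Spark and Flink clusters on Kubernetes, from basic configuration to advanced tuning, as a single reference for big data engineers.

1. Environment Preparation and Base Architecture Design

The first step in building a big data platform on Kubernetes is planning a sensible cluster architecture. For production, a cluster of at least three worker nodes is recommended, each with sufficient CPU, memory, and storage. GPU nodes are deployed separately according to the needs of machine learning workloads.

Base component checklist:

- Kubernetes cluster, version 1.20+
- Helm package manager, version 3.0+
- Network plugin: Calico, Flannel, or Cilium
- Storage solution: for example Rook/Ceph, or a cloud provider storage class
- Monitoring: Prometheus-Operator + Grafana

Tip: in production, configure both the Cluster Autoscaler (CA) and the Horizontal Pod Autoscaler (HPA) so the platform can absorb bursty workloads.

For GPU resource management, install the NVIDIA device plugin in advance:

```shell
kubectl create -f https://raw.githubusercontent.com/NVIDIA/k8s-device-plugin/v0.12.2/nvidia-device-plugin.yml
```

2. Spark on Kubernetes: Configuration in Depth

2.1 Building a Custom Spark Image

The stock Spark image often does not cover company-specific requirements, so a custom image that bundles the necessary dependencies is needed. An example Dockerfile:

```dockerfile
FROM eclipse-temurin:11-jre-jammy

ARG SPARK_VERSION=3.3.2
ARG HADOOP_VERSION=3

# wget downloads the Spark archive; tini is required by Spark's Kubernetes entrypoint script
RUN apt-get update && \
    apt-get install -y python3 python3-pip wget tini && \
    rm -rf /var/lib/apt/lists/*

WORKDIR /opt
RUN wget -q https://archive.apache.org/dist/spark/spark-${SPARK_VERSION}/spark-${SPARK_VERSION}-bin-hadoop${HADOOP_VERSION}.tgz && \
    tar xzf spark-${SPARK_VERSION}-bin-hadoop${HADOOP_VERSION}.tgz && \
    ln -s spark-${SPARK_VERSION}-bin-hadoop${HADOOP_VERSION} spark && \
    rm spark-${SPARK_VERSION}-bin-hadoop${HADOOP_VERSION}.tgz

ENV SPARK_HOME=/opt/spark
ENV PATH=$PATH:$SPARK_HOME/bin
ENV PYSPARK_PYTHON=python3

# Install Python dependencies
COPY requirements.txt .
RUN pip install -r requirements.txt

# Driver and executor pods are launched through the entrypoint script bundled with Spark
RUN cp $SPARK_HOME/kubernetes/dockerfiles/spark/entrypoint.sh /opt/entrypoint.sh && \
    chmod +x /opt/entrypoint.sh

WORKDIR /opt/spark/work-dir
ENTRYPOINT ["/opt/entrypoint.sh"]
```

After the build, push the image to a private registry:

```shell
docker build -t your-registry/spark:3.3.2-custom .
docker push your-registry/spark:3.3.2-custom
```

2.2 Deploying with the Spark Operator

The Spark Operator greatly simplifies managing Spark applications on Kubernetes. Install the operator with Helm (the project now lives under the Kubeflow organization, so verify the chart repository URL for the version you install):

```shell
helm repo add spark-operator https://googlecloudplatform.github.io/spark-on-k8s-operator
helm install spark-operator spark-operator/spark-operator --namespace spark-operator --create-namespace
```

A typical Spark application manifest:

```yaml
apiVersion: sparkoperator.k8s.io/v1beta2
kind: SparkApplication
metadata:
  name: etl-pipeline
spec:
  type: Python
  mode: cluster
  image: your-registry/spark:3.3.2-custom
  mainApplicationFile: local:///opt/spark/work-dir/main.py
  sparkVersion: "3.3.2"
  restartPolicy:
    type: OnFailure
    onFailureRetries: 3
    onFailureRetryInterval: 10
  driver:
    cores: 1
    memory: 2G
    serviceAccount: spark
    labels:
      version: "3.3.2"
    annotations:
      spark.apache.org/version: "3.3.2"
  executor:
    cores: 2
    instances: 3
    memory: 4G
    labels:
      version: "3.3.2"
```

2.3 Performance Optimization Strategies

Resource configuration matrix:

| Workload type | Driver resources | Executor resources | Instances | Parallelism factor |
|---|---|---|---|---|
| Batch ETL | 4 CPU / 8 GB | 4 CPU / 16 GB | 10-20 | cores × 3 |
| Stream processing | 2 CPU / 4 GB | 2 CPU / 8 GB | 5-10 | partitions × 1.2 |
| Machine learning | 8 CPU / 16 GB | 8 CPU / 32 GB + GPU | 3-5 | number of data shards |

Key configuration parameters:

```properties
spark.kubernetes.executor.request.cores=2
# Deprecated since Spark 3.3.0 in favor of spark.driver.memoryOverheadFactor
# and spark.executor.memoryOverheadFactor
spark.kubernetes.memoryOverheadFactor=0.2
spark.executor.instances=5
spark.sql.shuffle.partitions=200
spark.default.parallelism=100
```

3. Flink on Kubernetes: Deployment in Practice

3.1 Deploying a Flink Session Cluster

The manifests below deploy a session cluster from the official Flink image. They run a single JobManager replica, so real high availability additionally requires configuring Flink's HA services. The TaskManagers reach the JobManager through the name flink-jobmanager, so a Service with that name exposing the JobManager ports must also exist; it is not shown here.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: flink-jobmanager
spec:
  replicas: 1
  selector:
    matchLabels:
      app: flink
      component: jobmanager
  template:
    metadata:
      labels:
        app: flink
        component: jobmanager
    spec:
      containers:
        - name: jobmanager
          image: flink:1.16.1-scala_2.12
          args: ["jobmanager"]
          ports:
            - containerPort: 6123
              name: rpc
            - containerPort: 6124
              name: blob
            - containerPort: 8081
              name: ui
          env:
            - name: JOB_MANAGER_RPC_ADDRESS
              value: flink-jobmanager
          resources:
            requests:
              cpu: 2
              memory: 4Gi
            limits:
              cpu: 4
              memory: 8Gi
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: flink-taskmanager
spec:
  replicas: 3
  selector:
    matchLabels:
      app: flink
      component: taskmanager
  template:
    metadata:
      labels:
        app: flink
        component: taskmanager
    spec:
      containers:
        - name: taskmanager
          image: flink:1.16.1-scala_2.12
          args: ["taskmanager"]
          ports:
            - containerPort: 6122
              name: data
          env:
            - name: JOB_MANAGER_RPC_ADDRESS
              value: flink-jobmanager
          resources:
            requests:
              cpu: 4
              memory: 8Gi
            limits:
              cpu: 8
              memory: 16Gi
```

3.2 Using the Flink Kubernetes Operator

For production, the Flink Kubernetes Operator is recommended for lifecycle management:

```shell
helm repo add flink-operator https://downloads.apache.org/flink/flink-kubernetes-operator-1.4.0/
helm install flink-operator flink-operator/flink-kubernetes-operator
```

An example Flink job deployment (values under flinkConfiguration are strings, so numbers are quoted):

```yaml
apiVersion: flink.apache.org/v1beta1
kind: FlinkDeployment
metadata:
  name: streaming-job
spec:
  image: flink:1.16.1-scala_2.12
  flinkVersion: v1_16
  flinkConfiguration:
    taskmanager.numberOfTaskSlots: "4"
    state.backend: rocksdb
    state.checkpoints.dir: s3://your-bucket/checkpoints
  podTemplate:
    spec:
      containers:
        - name: flink-main-container
          resources:
            requests:
              memory: 8Gi
              cpu: 2
            limits:
              memory: 16Gi
              cpu: 4
  jobManager:
    resource:
      memory: 4Gi
      cpu: 1
  taskManager:
    resource:
      memory: 8Gi
      cpu: 2
  job:
    jarURI: local:///opt/flink/usrlib/streaming-job.jar
    parallelism: 8
    upgradeMode: stateless
```

4. Mixed Workload Scheduling and Resource Optimization

4.1 Resource Isolation and Quota Management

Priority classes and a resource quota provide resource isolation between Spark and Flink on Kubernetes:

```yaml
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: high-priority
value: 1000000
globalDefault: false
description: For critical batch jobs
---
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: medium-priority
value: 500000
globalDefault: false
description: For stream processing jobs
---
apiVersion: v1
kind: ResourceQuota
metadata:
  name: spark-quota
spec:
  hard:
    pods: "50"
    requests.cpu: "40"
    requests.memory: 160Gi
    limits.cpu: "80"
    limits.memory: 320Gi
```

4.2 Dynamic Resource Allocation Strategies

Spark dynamic allocation settings:

```properties
spark.dynamicAllocation.enabled=true
spark.dynamicAllocation.shuffleTracking.enabled=true
spark.dynamicAllocation.minExecutors=3
spark.dynamicAllocation.maxExecutors=20
spark.dynamicAllocation.initialExecutors=5
```

Flink autoscaling settings:

```yaml
spec:
  flinkConfiguration:
    kubernetes.operator.job.autoscaler.enabled: "true"
    kubernetes.operator.job.autoscaler.target.utilization: "0.7"
    kubernetes.operator.job.autoscaler.stabilization.interval: 1min
```

4.3 Monitoring and Alerting

Deploy the Prometheus monitoring stack:

```shell
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm install prometheus prometheus-community/kube-prometheus-stack
```

Key monitoring metrics:

| Component | Core metric | Alert threshold |
|---|---|---|
| Spark | Driver/Executor memory usage | > 85% for 5 minutes |
| Spark | Task failure rate | > 5% |
| Flink | Checkpoint success rate | < 90% |
| Flink | Backpressure | High backpressure for 10 minutes |
| K8s | Node CPU/memory utilization | > 80% for 15 minutes |
| K8s | Pod restart count | > 3 per hour |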
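Several of the thresholds above only fire when a metric stays out of range for a sustained period (for example, memory usage above 85% for 5 minutes). In Prometheus alerting rules the `for` clause plays this role. The following is a minimal Python sketch of the same idea, intended only to illustrate the logic; the function name `sustained_breach`, the sample layout, and the numbers are hypothetical and do not come from the article or from any monitoring library.

```python
from typing import Sequence, Tuple

# A sample is (timestamp in seconds, metric value); both fields are illustrative.
Sample = Tuple[float, float]


def sustained_breach(samples: Sequence[Sample], threshold: float, window_s: float) -> bool:
    """Return True if the most recent run of samples above `threshold`
    spans at least `window_s` seconds, ending at the newest sample.

    `samples` is assumed to be sorted by timestamp, oldest first.
    """
    if not samples:
        return False

    latest_ts = samples[-1][0]
    breach_start = None

    # Walk backwards from the newest sample while the threshold is exceeded.
    for ts, value in reversed(samples):
        if value <= threshold:
            break
        breach_start = ts

    if breach_start is None:
        return False
    return latest_ts - breach_start >= window_s


if __name__ == "__main__":
    # Made-up memory usage, one sample per minute, as a fraction of the limit.
    memory_usage = [
        (0, 0.80), (60, 0.88), (120, 0.90), (180, 0.91),
        (240, 0.89), (300, 0.92), (360, 0.93),
    ]
    # Usage has been above 0.85 from t=60 to t=360, i.e. for 300 seconds.
    print(sustained_breach(memory_usage, threshold=0.85, window_s=300))  # True
```

Requiring a sustained breach rather than a single sample keeps short spikes, such as a brief memory peak during a shuffle, from paging anyone, at the cost of reacting a few minutes later to a real problem.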

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。如若转载,请注明出处:http://www.coloradmin.cn/o/2420580.html

如若内容造成侵权/违法违规/事实不符,请联系多彩编程网进行投诉反馈,一经查实,立即删除!相关文章

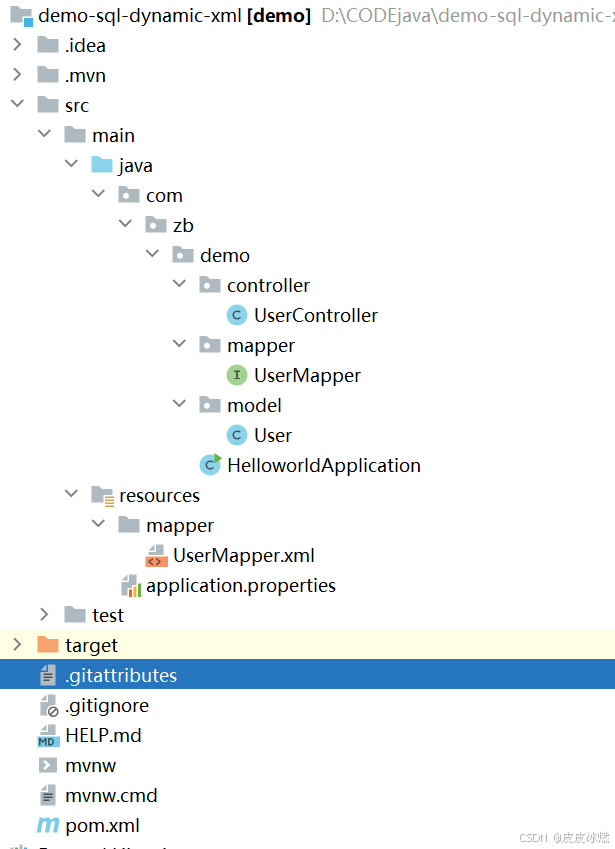

SpringBoot-17-MyBatis动态SQL标签之常用标签

文章目录 1 代码1.1 实体User.java1.2 接口UserMapper.java1.3 映射UserMapper.xml1.3.1 标签if1.3.2 标签if和where1.3.3 标签choose和when和otherwise1.4 UserController.java2 常用动态SQL标签2.1 标签set2.1.1 UserMapper.java2.1.2 UserMapper.xml2.1.3 UserController.ja…

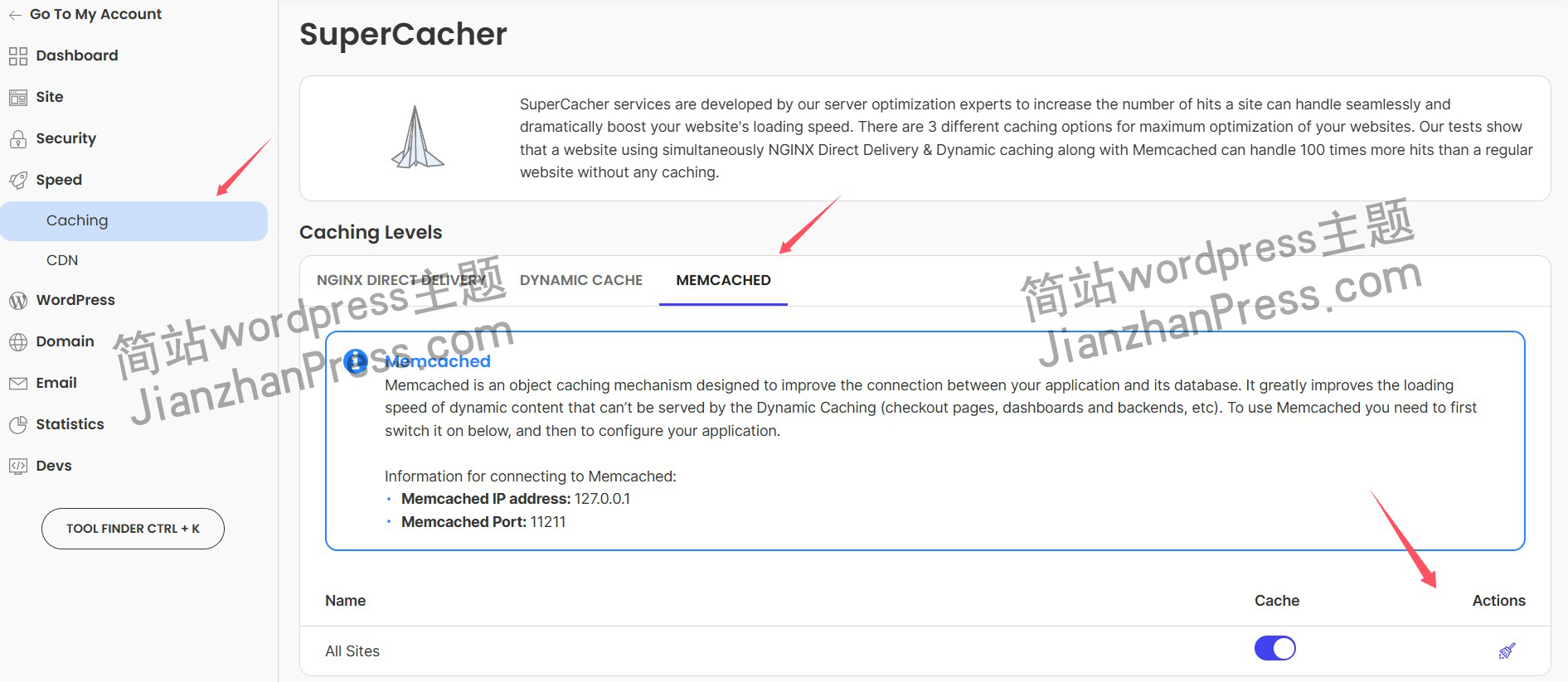

wordpress后台更新后 前端没变化的解决方法

使用siteground主机的wordpress网站,会出现更新了网站内容和修改了php模板文件、js文件、css文件、图片文件后,网站没有变化的情况。

不熟悉siteground主机的新手,遇到这个问题,就很抓狂,明明是哪都没操作错误&#x…

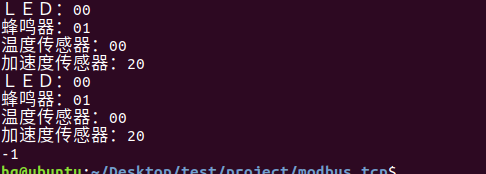

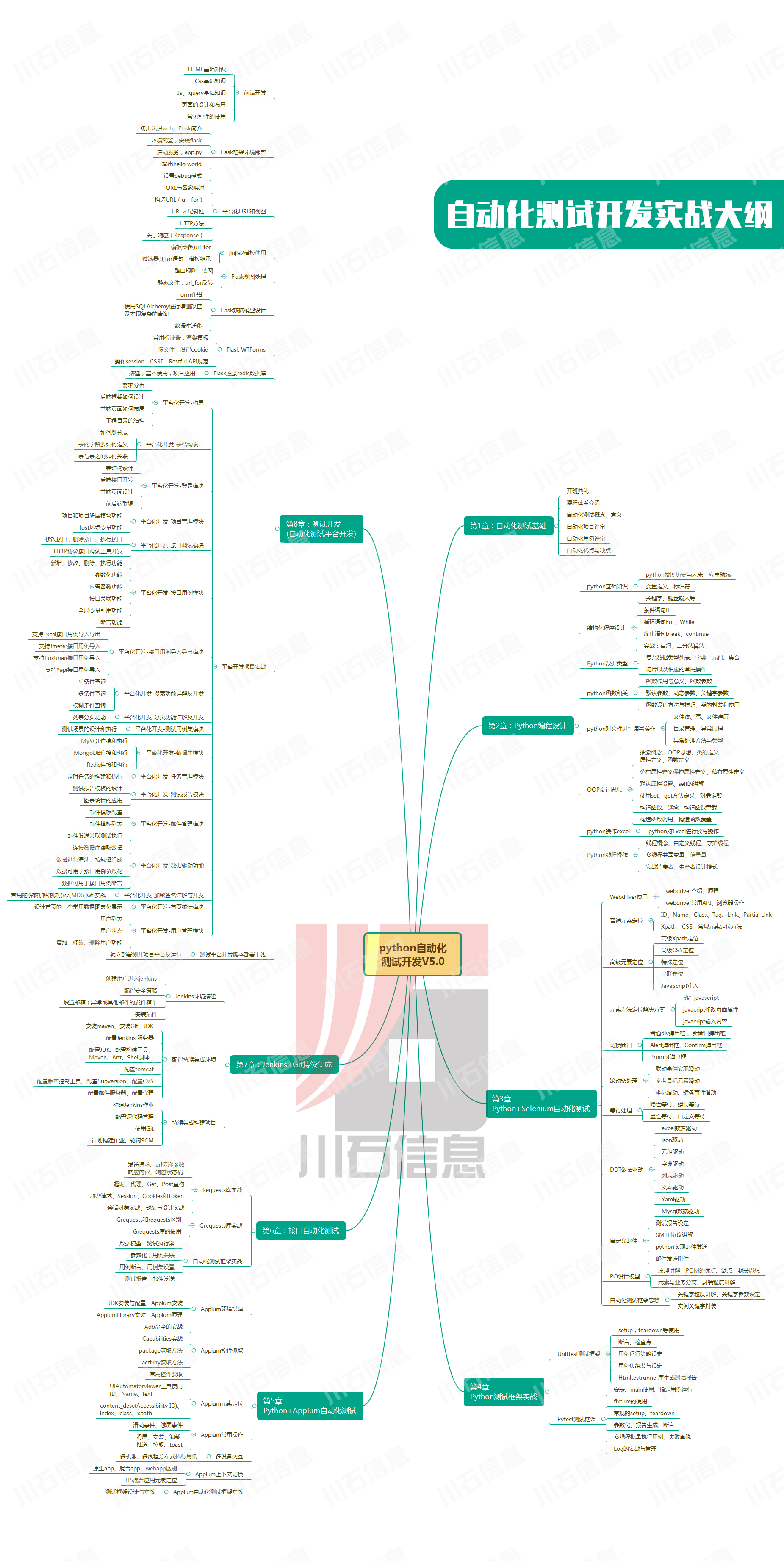

网络编程(Modbus进阶)

思维导图 Modbus RTU(先学一点理论)

概念 Modbus RTU 是工业自动化领域 最广泛应用的串行通信协议,由 Modicon 公司(现施耐德电气)于 1979 年推出。它以 高效率、强健性、易实现的特点成为工业控制系统的通信标准。 包…

UE5 学习系列(二)用户操作界面及介绍

这篇博客是 UE5 学习系列博客的第二篇,在第一篇的基础上展开这篇内容。博客参考的 B 站视频资料和第一篇的链接如下:

【Note】:如果你已经完成安装等操作,可以只执行第一篇博客中 2. 新建一个空白游戏项目 章节操作,重…

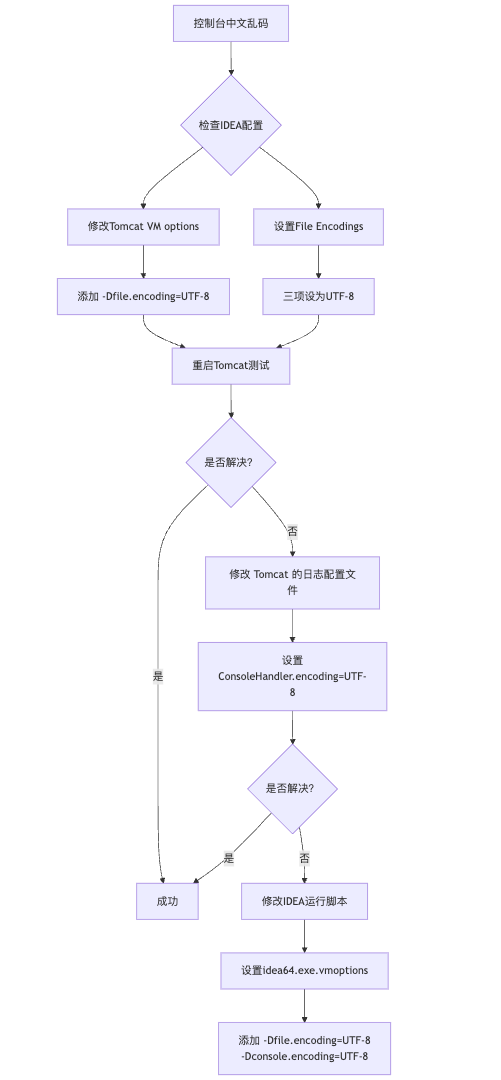

IDEA运行Tomcat出现乱码问题解决汇总

最近正值期末周,有很多同学在写期末Java web作业时,运行tomcat出现乱码问题,经过多次解决与研究,我做了如下整理:

原因:

IDEA本身编码与tomcat的编码与Windows编码不同导致,Windows 系统控制台…

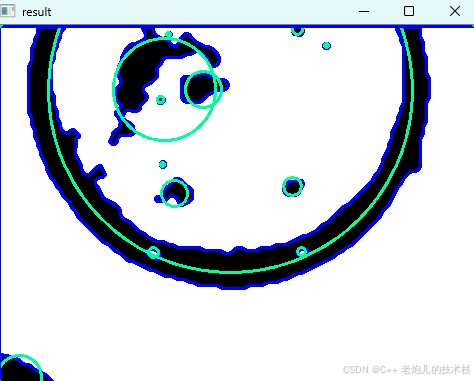

利用最小二乘法找圆心和半径

#include <iostream>

#include <vector>

#include <cmath>

#include <Eigen/Dense> // 需安装Eigen库用于矩阵运算 // 定义点结构

struct Point { double x, y; Point(double x_, double y_) : x(x_), y(y_) {}

}; // 最小二乘法求圆心和半径 …

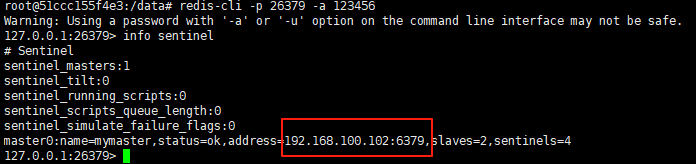

使用docker在3台服务器上搭建基于redis 6.x的一主两从三台均是哨兵模式

一、环境及版本说明

如果服务器已经安装了docker,则忽略此步骤,如果没有安装,则可以按照一下方式安装: 1. 在线安装(有互联网环境): 请看我这篇文章 传送阵>> 点我查看 2. 离线安装(内网环境):请看我这篇文章 传送阵>> 点我查看

说明:假设每台服务器已…

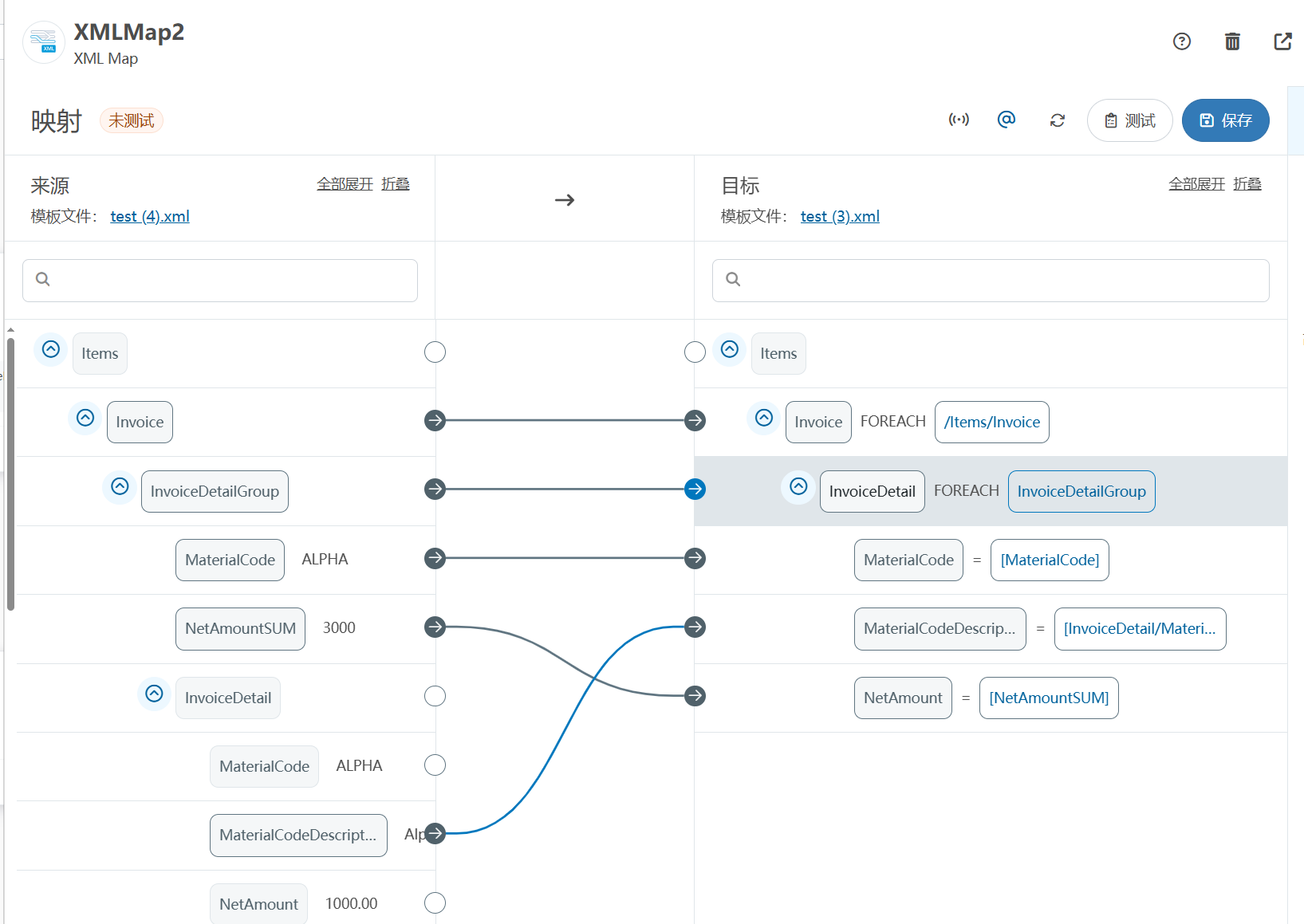

XML Group端口详解

在XML数据映射过程中,经常需要对数据进行分组聚合操作。例如,当处理包含多个物料明细的XML文件时,可能需要将相同物料号的明细归为一组,或对相同物料号的数量进行求和计算。传统实现方式通常需要编写脚本代码,增加了开…

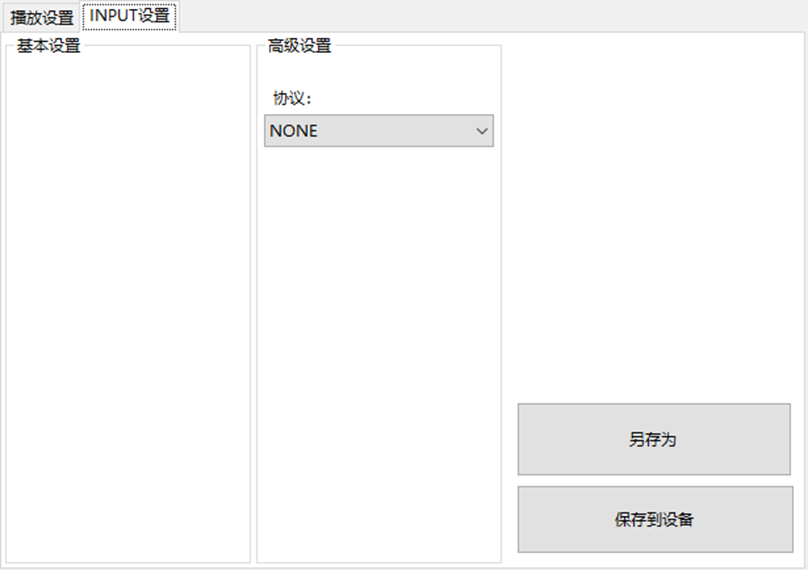

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器的上位机配置操作说明

LBE-LEX系列工业语音播放器|预警播报器|喇叭蜂鸣器专为工业环境精心打造,完美适配AGV和无人叉车。同时,集成以太网与语音合成技术,为各类高级系统(如MES、调度系统、库位管理、立库等)提供高效便捷的语音交互体验。

L…

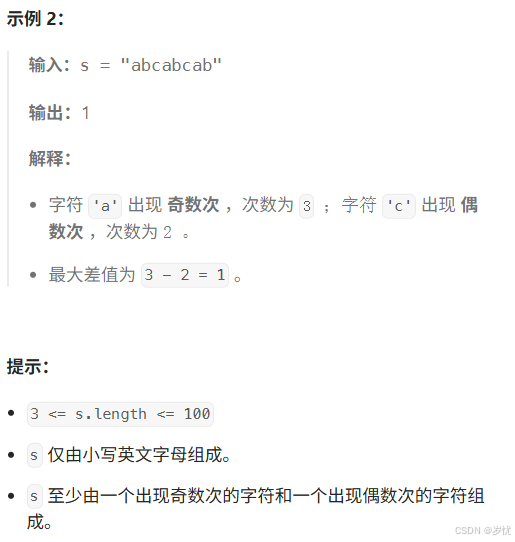

(LeetCode 每日一题) 3442. 奇偶频次间的最大差值 I (哈希、字符串)

题目:3442. 奇偶频次间的最大差值 I 思路 :哈希,时间复杂度0(n)。 用哈希表来记录每个字符串中字符的分布情况,哈希表这里用数组即可实现。

C版本:

class Solution {

public:int maxDifference(string s) {int a[26]…

【大模型RAG】拍照搜题技术架构速览:三层管道、两级检索、兜底大模型

摘要

拍照搜题系统采用“三层管道(多模态 OCR → 语义检索 → 答案渲染)、两级检索(倒排 BM25 向量 HNSW)并以大语言模型兜底”的整体框架: 多模态 OCR 层 将题目图片经过超分、去噪、倾斜校正后,分别用…

【Axure高保真原型】引导弹窗

今天和大家中分享引导弹窗的原型模板,载入页面后,会显示引导弹窗,适用于引导用户使用页面,点击完成后,会显示下一个引导弹窗,直至最后一个引导弹窗完成后进入首页。具体效果可以点击下方视频观看或打开下方…

接口测试中缓存处理策略

在接口测试中,缓存处理策略是一个关键环节,直接影响测试结果的准确性和可靠性。合理的缓存处理策略能够确保测试环境的一致性,避免因缓存数据导致的测试偏差。以下是接口测试中常见的缓存处理策略及其详细说明:

一、缓存处理的核…

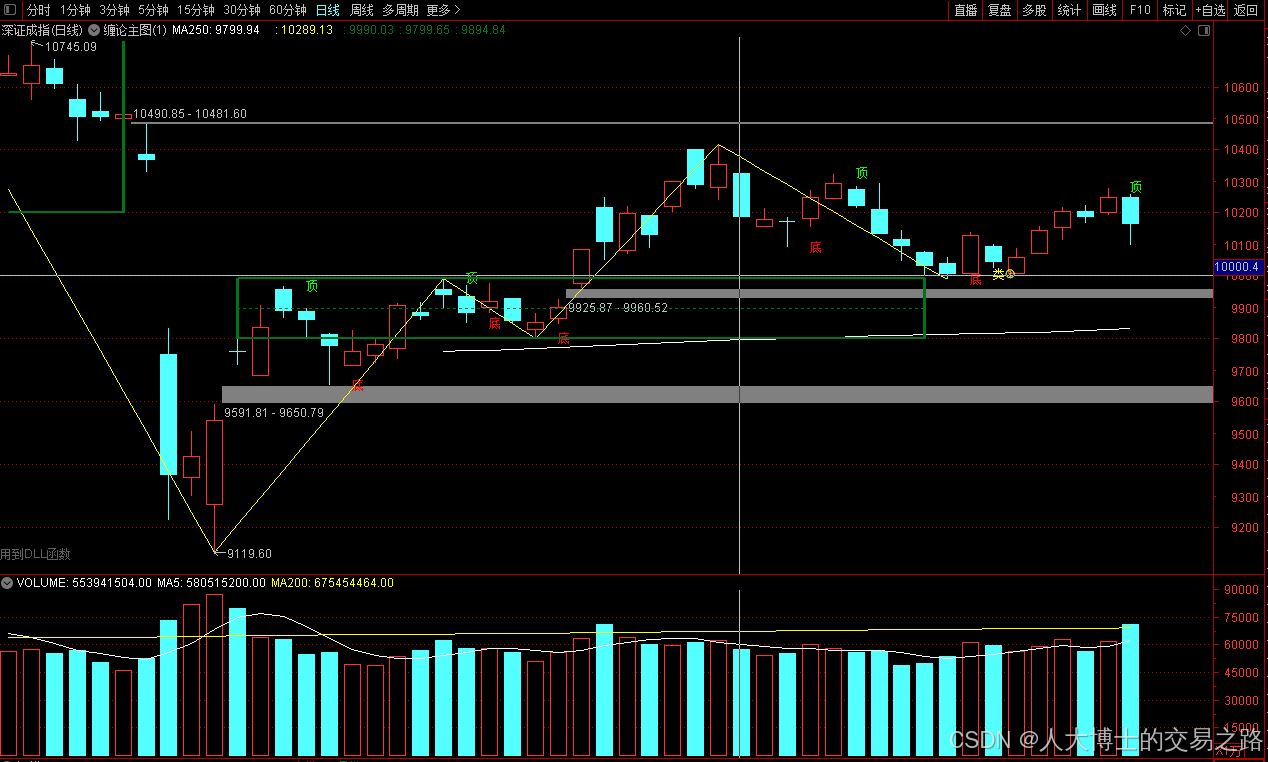

龙虎榜——20250610

上证指数放量收阴线,个股多数下跌,盘中受消息影响大幅波动。 深证指数放量收阴线形成顶分型,指数短线有调整的需求,大概需要一两天。 2025年6月10日龙虎榜行业方向分析 1. 金融科技

代表标的:御银股份、雄帝科技

驱动…

观成科技:隐蔽隧道工具Ligolo-ng加密流量分析

1.工具介绍

Ligolo-ng是一款由go编写的高效隧道工具,该工具基于TUN接口实现其功能,利用反向TCP/TLS连接建立一条隐蔽的通信信道,支持使用Let’s Encrypt自动生成证书。Ligolo-ng的通信隐蔽性体现在其支持多种连接方式,适应复杂网…

铭豹扩展坞 USB转网口 突然无法识别解决方法

当 USB 转网口扩展坞在一台笔记本上无法识别,但在其他电脑上正常工作时,问题通常出在笔记本自身或其与扩展坞的兼容性上。以下是系统化的定位思路和排查步骤,帮助你快速找到故障原因:

背景:

一个M-pard(铭豹)扩展坞的网卡突然无法识别了,扩展出来的三个USB接口正常。…

未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?

编辑:陈萍萍的公主一点人工一点智能 未来机器人的大脑:如何用神经网络模拟器实现更智能的决策?RWM通过双自回归机制有效解决了复合误差、部分可观测性和随机动力学等关键挑战,在不依赖领域特定归纳偏见的条件下实现了卓越的预测准…

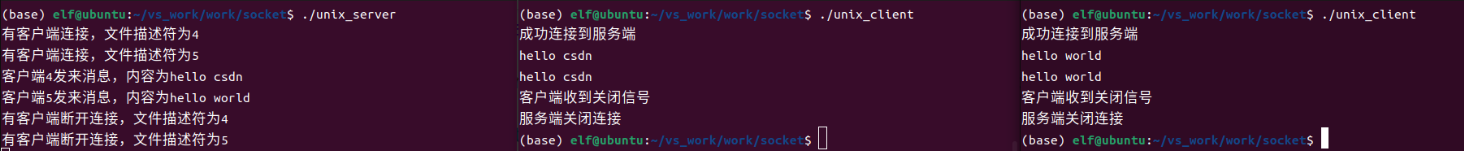

Linux应用开发之网络套接字编程(实例篇)

服务端与客户端单连接

服务端代码

#include <sys/socket.h>

#include <sys/types.h>

#include <netinet/in.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <arpa/inet.h>

#include <pthread.h>

…

华为云AI开发平台ModelArts

华为云ModelArts:重塑AI开发流程的“智能引擎”与“创新加速器”!

在人工智能浪潮席卷全球的2025年,企业拥抱AI的意愿空前高涨,但技术门槛高、流程复杂、资源投入巨大的现实,却让许多创新构想止步于实验室。数据科学家…

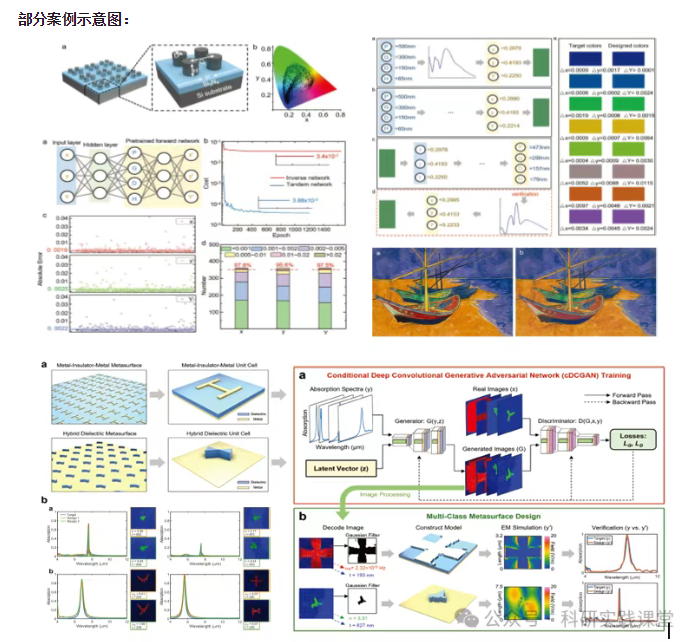

深度学习在微纳光子学中的应用

深度学习在微纳光子学中的主要应用方向

深度学习与微纳光子学的结合主要集中在以下几个方向:

逆向设计 通过神经网络快速预测微纳结构的光学响应,替代传统耗时的数值模拟方法。例如设计超表面、光子晶体等结构。

特征提取与优化 从复杂的光学数据中自…