I. How Max Pooling Works

II. Max Pooling Example

import torch
import torchvision
from torch import nn
from torch.nn import MaxPool2d
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# Download the CIFAR10 test set and convert every image to a tensor
dataset = torchvision.datasets.CIFAR10("../chihua", train=False, download=True,
                                       transform=torchvision.transforms.ToTensor())
dataloader = DataLoader(dataset, batch_size=64)  # load the dataset in batches of 64

Build a network with a single max pooling layer:

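class SUN(nn.Module):
    def __init__(self):
        super(SUN, self).__init__()
        # 3x3 pooling window; the stride defaults to kernel_size.
        # ceil_mode=False discards windows that do not fit entirely inside the input.
        self.maxpool1 = MaxPool2d(kernel_size=3, ceil_mode=False)

    def forward(self, input):
        output = self.maxpool1(input)
        return output

sun = SUN()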

Display the images with TensorBoard:

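writer = SummaryWriter("../logs_maxpool")
step = 0
for data in dataloader:
    imgs, targets = data
    # imgs is a 4D batch (N, C, H, W); add_image expects "CHW" by default,
    # so the layout has to be stated explicitly to avoid a shape mismatch
    writer.add_image("input", imgs, step, dataformats="NCHW")
    output = sun(imgs)
    writer.add_image("output", output, step, dataformats="NCHW")  # same layout for the pooled batch
    step += 1

writer.close()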

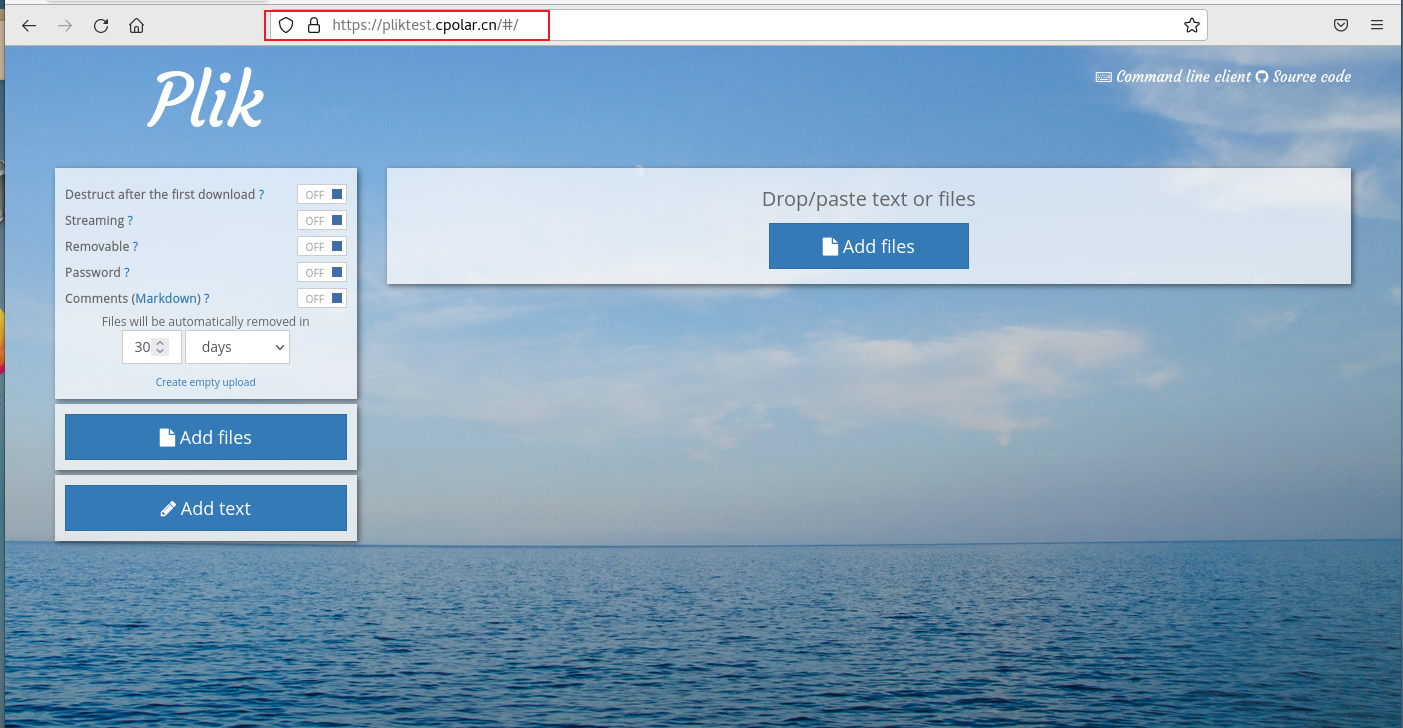

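Result shown in TensorBoard:

Pooling reduces the number of pixels: with kernel_size=3, each 32x32 CIFAR10 image shrinks to 10x10. In exchange, the image becomes blurrier.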
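The size reduction can be checked with simple arithmetic. The sketch below defines a hypothetical helper, `pool_output_size` (it is not a PyTorch function), that follows the output-size formula given in the `MaxPool2d` documentation, assuming a dilation of 1. It also applies the documented rule that, with `ceil_mode=True`, a window starting in the right padding is ignored.

```python
import math

def pool_output_size(size, kernel_size, stride=None, padding=0, ceil_mode=False):
    """Output length along one spatial dimension of a max pooling layer (dilation 1).

    Hypothetical helper for illustration only; it mirrors the MaxPool2d formula.
    """
    if stride is None:
        stride = kernel_size  # MaxPool2d defaults the stride to the kernel size
    span = size + 2 * padding - kernel_size
    if ceil_mode:
        out = math.ceil(span / stride) + 1
        # A window that would start in the right padding is dropped
        if (out - 1) * stride >= size + padding:
            out -= 1
    else:
        out = span // stride + 1
    return out

# CIFAR10 images are 32x32; the example network uses kernel_size=3, ceil_mode=False
print(pool_output_size(32, 3))                  # 10
print(pool_output_size(32, 3, ceil_mode=True))  # 11
print(pool_output_size(5, 3))                   # 1
print(pool_output_size(5, 3, ceil_mode=True))   # 2
```

With these settings a 32x32 image keeps only 10x10 = 100 of its 1024 pixel positions per channel, which is why the output looks coarser.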

III. Nonlinear Activation

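The main purpose of nonlinear activation is to add nonlinearity to the model. Without it, stacked linear layers collapse into a single linear transformation; with more nonlinearity, the model can be trained to fit a wider variety of features, which improves its generalization ability.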
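A tiny scalar example can make this concrete. The sketch below uses two made-up one-dimensional "layers" (the coefficients are arbitrary assumptions) and checks that stacking them directly is still a single affine function, while inserting a ReLU between them is not.

```python
def relu(x):
    return max(0.0, x)

def layer1(x):
    return 2.0 * x + 1.0   # arbitrary illustrative weights

def layer2(x):
    return -3.0 * x + 4.0  # arbitrary illustrative weights

def linear_stack(x):
    # Two layers without activation: algebraically equal to -6x + 1
    return layer2(layer1(x))

def relu_stack(x):
    # The same two layers with a ReLU in between
    return layer2(relu(layer1(x)))

def is_affine_on(f, a, b):
    """An affine function maps the midpoint of a and b to the midpoint of f(a) and f(b)."""
    return f((a + b) / 2) == (f(a) + f(b)) / 2

print(all(linear_stack(x) == -6.0 * x + 1.0 for x in (-2.0, -0.5, 0.0, 1.0)))  # True
print(is_affine_on(linear_stack, -2.0, 1.0))  # True
print(is_affine_on(relu_stack, -2.0, 1.0))    # False
```

The midpoint check fails for the ReLU version because the activation bends the function at the point where `layer1` crosses zero, something no single linear layer can express.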

IV. Nonlinear Activation Example

import torch
import torchvision
from torch import nn
from torch.nn import ReLU, Sigmoid
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

input = torch.tensor([[1, -0.5],
                      [-1, 3]])
input = torch.reshape(input, (-1, 1, 2, 2))  # (batch, channel, height, width)
print(input.shape)  # torch.Size([1, 1, 2, 2])

dataset = torchvision.datasets.CIFAR10("../datas", train=False, download=True,
                                       transform=torchvision.transforms.ToTensor())
dataloader = DataLoader(dataset, batch_size=64)

class SUN(nn.Module):
    def __init__(self):
        super(SUN, self).__init__()
        self.relu1 = ReLU()  # add the activation layers
        self.sigmoid = Sigmoid()

    def forward(self, input):
        output = self.sigmoid(input)  # Sigmoid is used here; relu1 is defined but not applied
        return output

sun = SUN()

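step = 0
write = SummaryWriter("../logs_relu")
for data in dataloader:
    imgs, targets = data
    # imgs is a 4D batch, so dataformats="NCHW" is needed (add_image defaults to "CHW")
    write.add_image("input", imgs, global_step=step, dataformats="NCHW")
    output = sun(imgs)
    write.add_image("output", output, global_step=step, dataformats="NCHW")
    step += 1

write.close()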

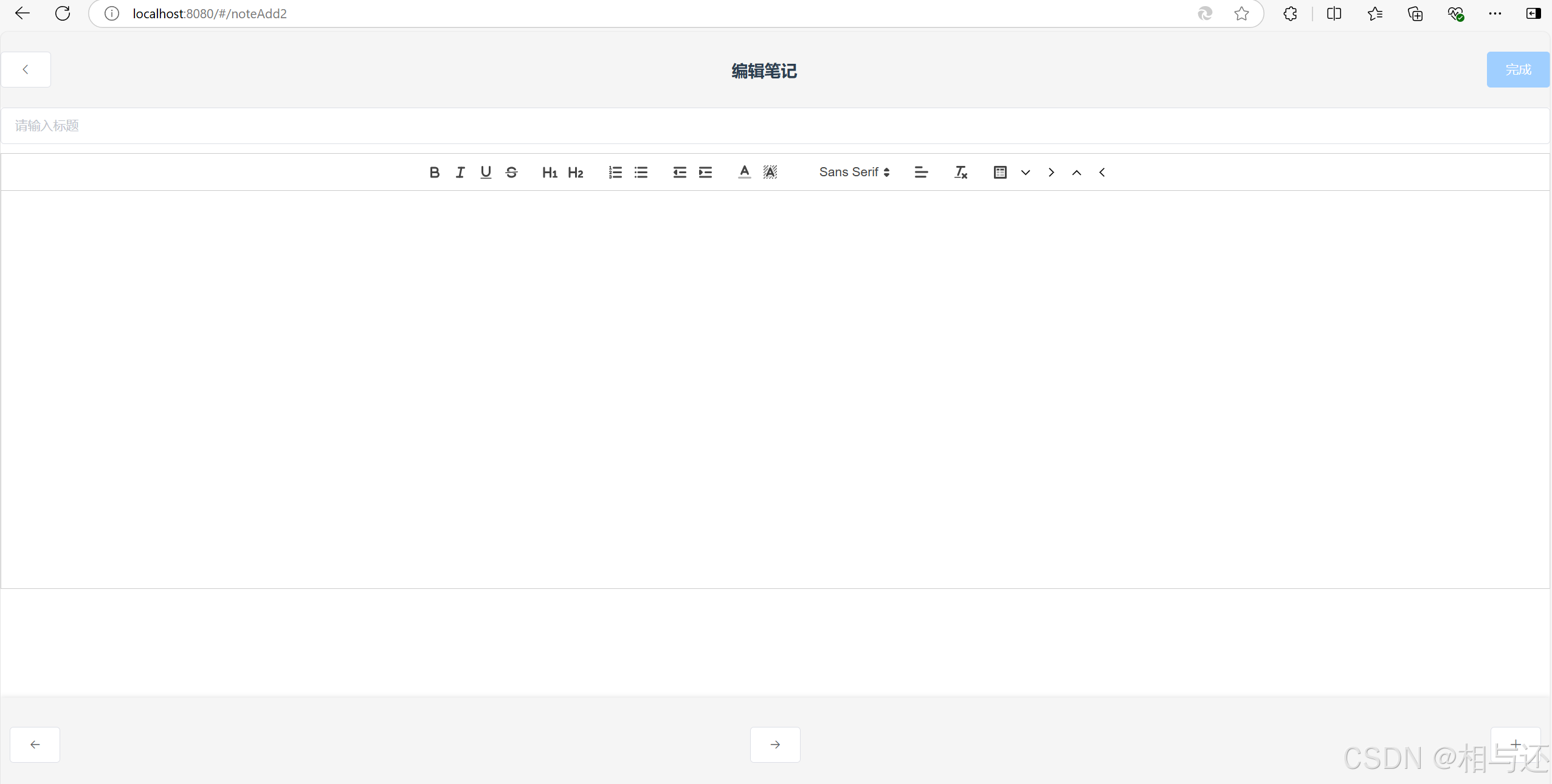

Result
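The small 2x2 tensor defined at the start of this example is never passed through the network, so here is a minimal sketch that applies both activations to the same values. It is only an illustration of what `ReLU` and `Sigmoid` do element by element.

```python
import torch
from torch.nn import ReLU, Sigmoid

x = torch.tensor([[1.0, -0.5],
                  [-1.0, 3.0]])

relu_out = ReLU()(x)        # negative entries become 0, positive ones pass through
sigmoid_out = Sigmoid()(x)  # every entry is squashed into the open interval (0, 1)

print(relu_out)     # values: [[1, 0], [0, 3]]
print(sigmoid_out)
```

This also suggests why Sigmoid is the more visible choice for the image demo: `ToTensor()` scales pixels to [0, 1], where ReLU changes nothing, whereas Sigmoid compresses that range to roughly [0.5, 0.73] and the output looks washed out.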

V. Linear Layer

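A linear layer applies a linear transformation that maps the input data into a new space. This changes the dimensionality of the data (from in_features to out_features), which makes it easier for subsequent layers to process.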
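As a minimal sketch of that mapping, the snippet below builds a small `Linear` layer (the sizes 4 and 2 are arbitrary assumptions) and compares its output with the transformation written out by hand as `x @ W.T + b`.

```python
import torch
from torch.nn import Linear

torch.manual_seed(0)  # only to make the random weights repeatable

layer = Linear(in_features=4, out_features=2)
x = torch.randn(3, 4)  # a batch of 3 samples with 4 features each

y = layer(x)
manual = x @ layer.weight.T + layer.bias  # the same computation written out

print(layer.weight.shape)  # torch.Size([2, 4])
print(y.shape)             # torch.Size([3, 2])
print(torch.allclose(y, manual, atol=1e-6))
```

Only the last dimension changes (4 to 2); the batch dimension in front is carried through untouched.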

VI. Linear Layer Example

import torch
import torchvision
from torch import nn
from torch.nn import Linear
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10("../datalinear", train=False,
                                       transform=torchvision.transforms.ToTensor(), download=True)
# Important: drop_last=True must be set here. The test set has 10000 images, so the last
# batch holds only 16 of them (16 * 3 * 32 * 32 = 49152 values) and would otherwise raise:
# RuntimeError: mat1 and mat2 shapes cannot be multiplied (1x49152 and 196608x10)
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)

class SUN(nn.Module):
    def __init__(self):
        super(SUN, self).__init__()
        # 196608 = 64 * 3 * 32 * 32, i.e. one whole batch flattened into a single vector
        self.linear1 = Linear(196608, 10)

    def forward(self, input):
        output = self.linear1(input)
        return output

sun = SUN()

# writer = SummaryWriter("../logslinear")
# step = 0
for data in dataloader:
    imgs, targets = data
    print(imgs.shape)  # torch.Size([64, 3, 32, 32])
    # output = torch.reshape(imgs, (1, 1, 1, -1))
    # Flatten the whole batch into a 1-D tensor
    output = torch.flatten(imgs)
    print(output.shape)  # torch.Size([196608])
    output = sun(output)
    print(output.shape)  # torch.Size([10])

Result:

The linear layer maps the 196608 in_features to 10 out_features.
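Because the example flattens the entire batch into one vector, the layer size depends on the batch size and the network produces a single 10-value output per batch. A common alternative, shown here only as a sketch and not as the approach used above, is to flatten each image separately with `nn.Flatten`, so that in_features is 3 * 32 * 32 = 3072 and `drop_last=True` is no longer needed. The class name `FlattenLinear` is hypothetical, and random tensors stand in for real CIFAR10 batches.

```python
import torch
from torch import nn

class FlattenLinear(nn.Module):  # hypothetical name, for illustration only
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()                 # flattens every dimension except the batch one
        self.linear1 = nn.Linear(3 * 32 * 32, 10)   # 3072 features per image

    def forward(self, x):
        return self.linear1(self.flatten(x))

model = FlattenLinear()

full_batch = torch.rand(64, 3, 32, 32)  # stand-in for a full CIFAR10 batch
last_batch = torch.rand(16, 3, 32, 32)  # stand-in for the 16 leftover test images

print(model(full_batch).shape)  # torch.Size([64, 10])
print(model(last_batch).shape)  # torch.Size([16, 10])
```

With this layout every image gets its own 10-value output, and the smaller final batch passes through without a shape error.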