文章目录

- 前言

- 一、模型说明

- 二、示例

- 1.求解步骤

- 2.示例代码

- 总结

前言

介绍了如何处理多维特征的输入问题

一、模型说明

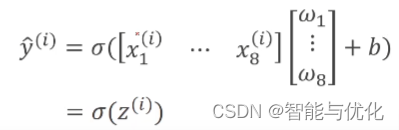

多维问题分类模型

二、示例

1.求解步骤

1.载入数据集:数据集用路径D:\anaconda\Lib\site-packages\sklearn\datasets\data下的diabetes.csv,输入有8个维度

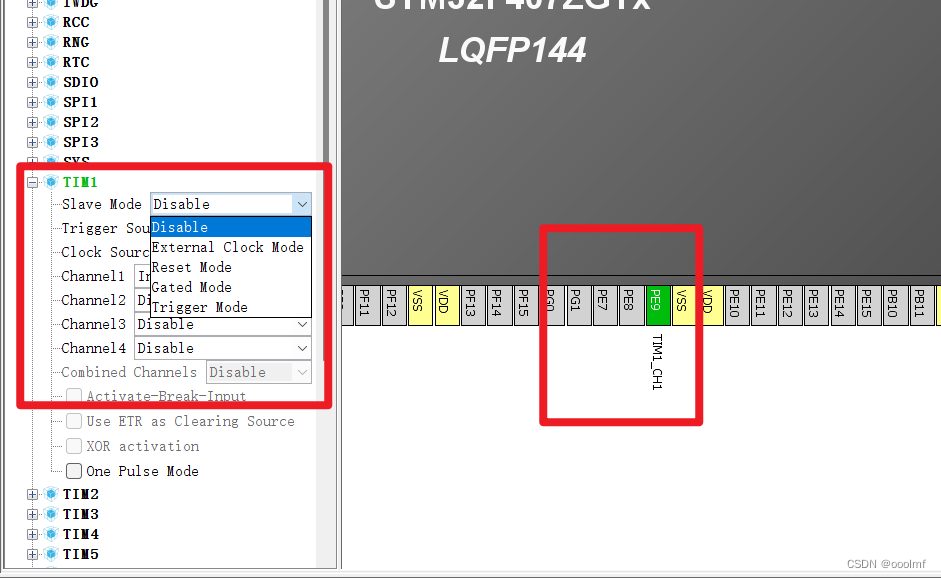

2.创建模型:维度8-6-4-2-1

3.选择损失函数和优化器

3.进行训练

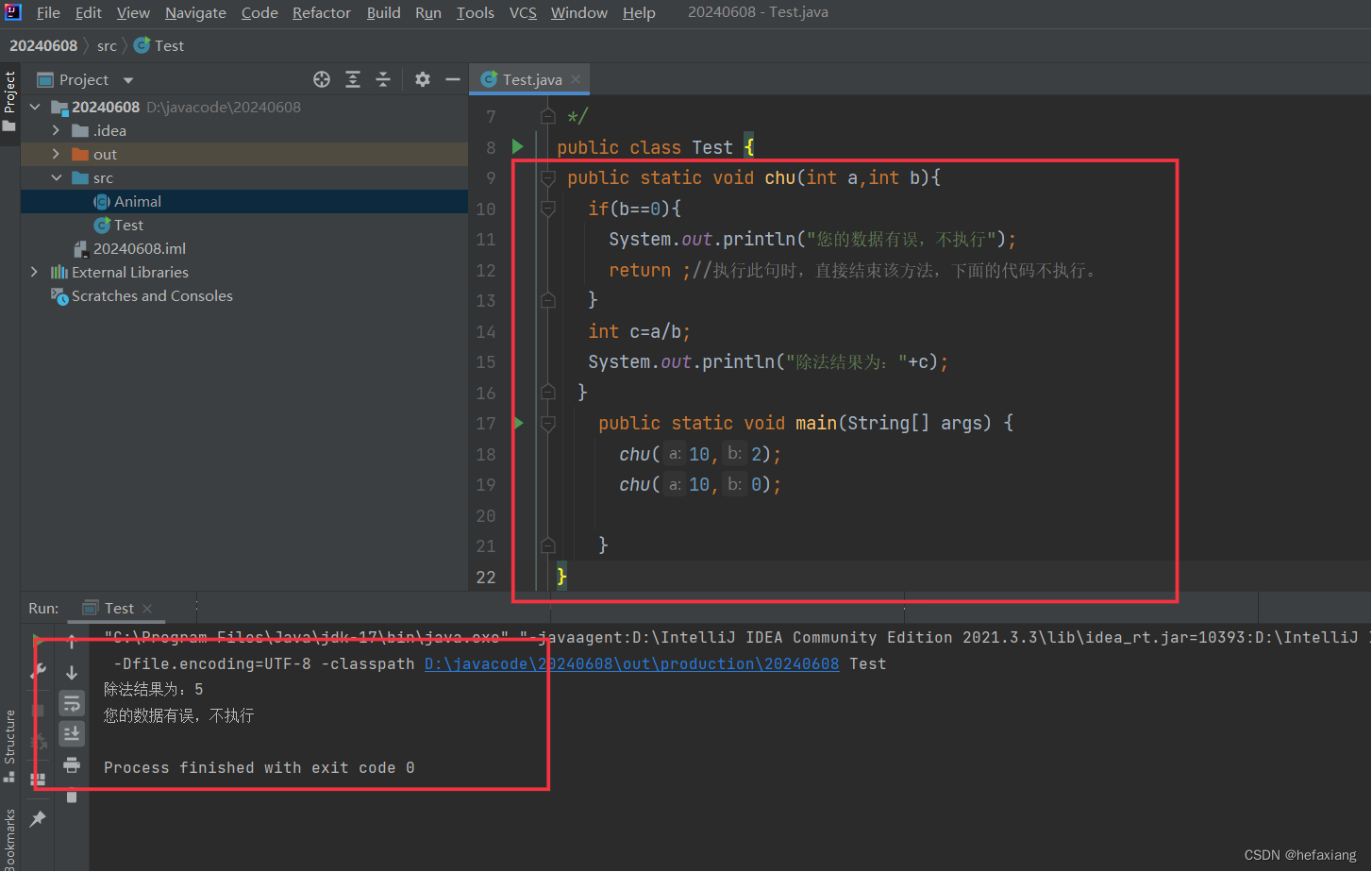

2.示例代码

代码如下(示例):

import numpy as np

import torch

import matplotlib.pyplot as plt

# prepare dataset

xy = np.loadtxt('diabetes.csv', delimiter=',', dtype=np.float32)

x_data = torch.from_numpy(xy[:, :-1]) # 第一个‘:’是指读取所有行,第二个‘:’是指从第一列开始,最后一列不要

print("input data.shape", x_data.shape)

y_data = torch.from_numpy(xy[:, [-1]]) # [-1] 最后得到的是个矩阵

# print(x_data.shape)

# design model using class

class Model(torch.nn.Module):

def __init__(self):

super(Model, self).__init__()

self.linear1 = torch.nn.Linear(8, 6)

self.linear2 = torch.nn.Linear(6, 4)

self.linear3 = torch.nn.Linear(4, 2)

self.linear4 = torch.nn.Linear(2, 1)

self.sigmoid = torch.nn.Sigmoid()

def forward(self, x):

x = self.sigmoid(self.linear1(x))

x = self.sigmoid(self.linear2(x))

x = self.sigmoid(self.linear3(x)) # y hat

x = self.sigmoid(self.linear4(x)) # y hat

return x

model = Model()

# construct loss and optimizer

criterion = torch.nn.BCELoss(size_average = True)

optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

epoch_list = []

loss_list = []

# training cycle forward, backward, update

for epoch in range(1000):

y_pred = model(x_data)

loss = criterion(y_pred, y_data)

# print(epoch, loss.item())

print(epoch, loss.item())

epoch_list.append(epoch)

loss_list.append(loss.item())

optimizer.zero_grad()

loss.backward()

optimizer.step()

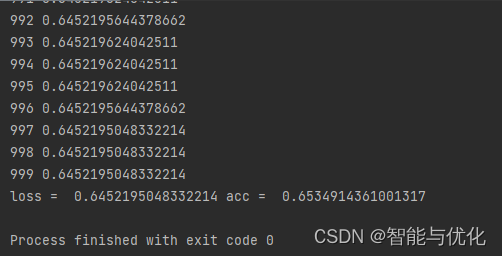

if epoch % 100 == 99:

y_pred_label = torch.where(y_pred >= 0.5, torch.tensor([1.0]), torch.tensor([0.0]))

acc = torch.eq(y_pred_label, y_data).sum().item() / y_data.size(0)

print("loss = ", loss.item(), "acc = ", acc)

plt.plot(epoch_list, loss_list)

plt.ylabel('loss')

plt.xlabel('epoch')

plt.show()

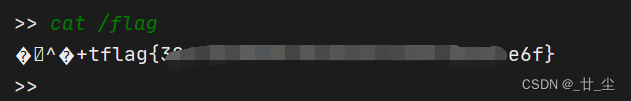

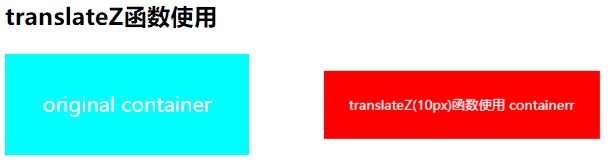

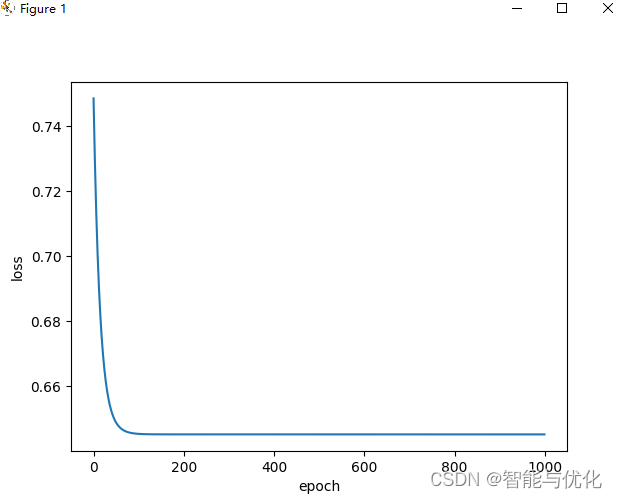

得到如下结果:

总结

PyTorch学习6:多维特征输入