[Paper] https://arxiv.org/abs/1705.03098v2

[PyTorch] (the code in this post follows this repository) weigq/3d_pose_baseline_pytorch: A simple baseline for 3d human pose estimation in PyTorch. (github.com)

[TensorFlow] https://github.com/una-dinosauria/3d-pose-baseline

This paper is essentially the pioneering work for the pipeline that lifts 2D human pose to 3D.

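1. Core idea

3D pose estimation is decoupled into two sub-problems: the well-studied problem of 2D pose estimation [30,50], and the problem of estimating 3D pose from 2D joint detections.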
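
As a rough illustration of this decoupling, the sketch below chains a 2D detector and a 2D-to-3D lifter. Both `detect_2d_joints` and `lift_to_3d` are hypothetical placeholders that return dummy values (a real system would use a trained 2D detector and a trained lifting network); only the data flow and the shapes are the point. The joint count of 16 is taken from the PyTorch code later in this post.

```python
from typing import List, Tuple

NUM_JOINTS = 16  # matches input_size = 16 * 2 in the code later in this post

Joint2D = Tuple[float, float]
Joint3D = Tuple[float, float, float]


def detect_2d_joints(image) -> List[Joint2D]:
    """Hypothetical stage 1: stand-in for any off-the-shelf 2D pose detector."""
    return [(float(i), float(i)) for i in range(NUM_JOINTS)]  # dummy detections


def lift_to_3d(flat_2d: List[float]) -> List[float]:
    """Hypothetical stage 2: stand-in for the 2D-to-3D lifting network."""
    assert len(flat_2d) == NUM_JOINTS * 2
    out = []
    for j in range(NUM_JOINTS):
        x, y = flat_2d[2 * j], flat_2d[2 * j + 1]
        out.extend([x, y, 0.0])  # dummy depth; a trained model would predict it
    return out


def estimate_3d_pose(image) -> List[Joint3D]:
    joints_2d = detect_2d_joints(image)                  # 16 joints x 2 coordinates
    flat_2d = [c for joint in joints_2d for c in joint]  # flattened to 32 values
    flat_3d = lift_to_3d(flat_2d)                        # 48 values
    # regroup the flat output into 16 joints x 3 coordinates
    return [tuple(flat_3d[3 * j:3 * j + 3]) for j in range(NUM_JOINTS)]


pose = estimate_3d_pose(image=None)
print(len(pose), len(pose[0]))
```

Because the second stage only ever sees joint coordinates, it can be trained and evaluated independently of whichever 2D detector is used.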

1.1 Network design

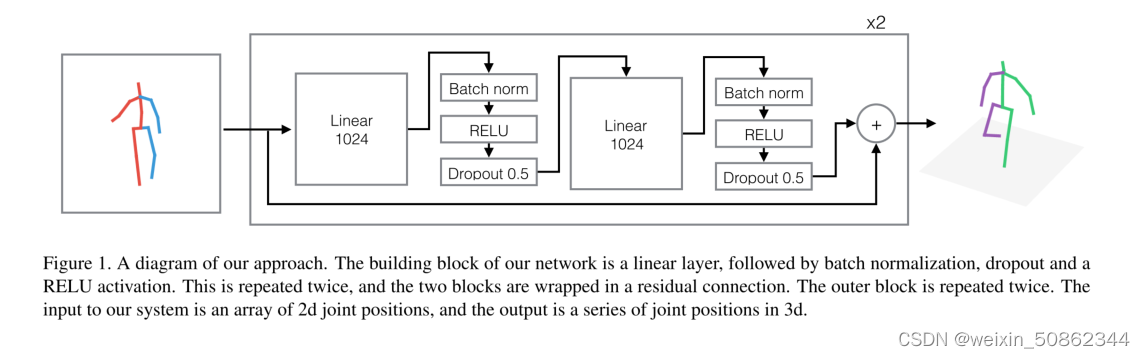

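The building block of the network is a linear layer followed by batch normalization, dropout, and a ReLU activation. This block is repeated twice, and the two blocks are wrapped in a residual connection. The outer block is itself repeated twice. The input to the system is an array of 2D joint positions, and the output is a set of 3D joint positions.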
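
The nesting described above can be unrolled into a flat list of operations. This is only a sketch in plain Python: the names are made up for illustration, the ReLU-before-dropout ordering follows the PyTorch code later in this post, and the input and output projections (`linear_in`, `linear_out`) are included as they appear in that code.

```python
def describe_network(num_stage=2):
    """Return the operation sequence of the network as a flat list of strings."""
    # input projection: 2D joints -> hidden width
    ops = ["linear_in", "batch_norm", "relu", "dropout"]
    for s in range(num_stage):           # outer residual block, repeated num_stage times
        ops.append(f"stage{s}: save residual")
        for k in (1, 2):                 # inner building block, repeated twice
            ops.append(f"stage{s}: linear{k}")
            ops.append(f"stage{s}: batch_norm{k}")
            ops.append(f"stage{s}: relu")
            ops.append(f"stage{s}: dropout")
        ops.append(f"stage{s}: add residual")
    ops.append("linear_out")             # output projection: hidden width -> 3D joints
    return ops


ops = describe_network()
num_linear = sum("linear" in op for op in ops)
print(num_linear)  # 6 linear layers: 1 input + 2 stages x 2 + 1 output
```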

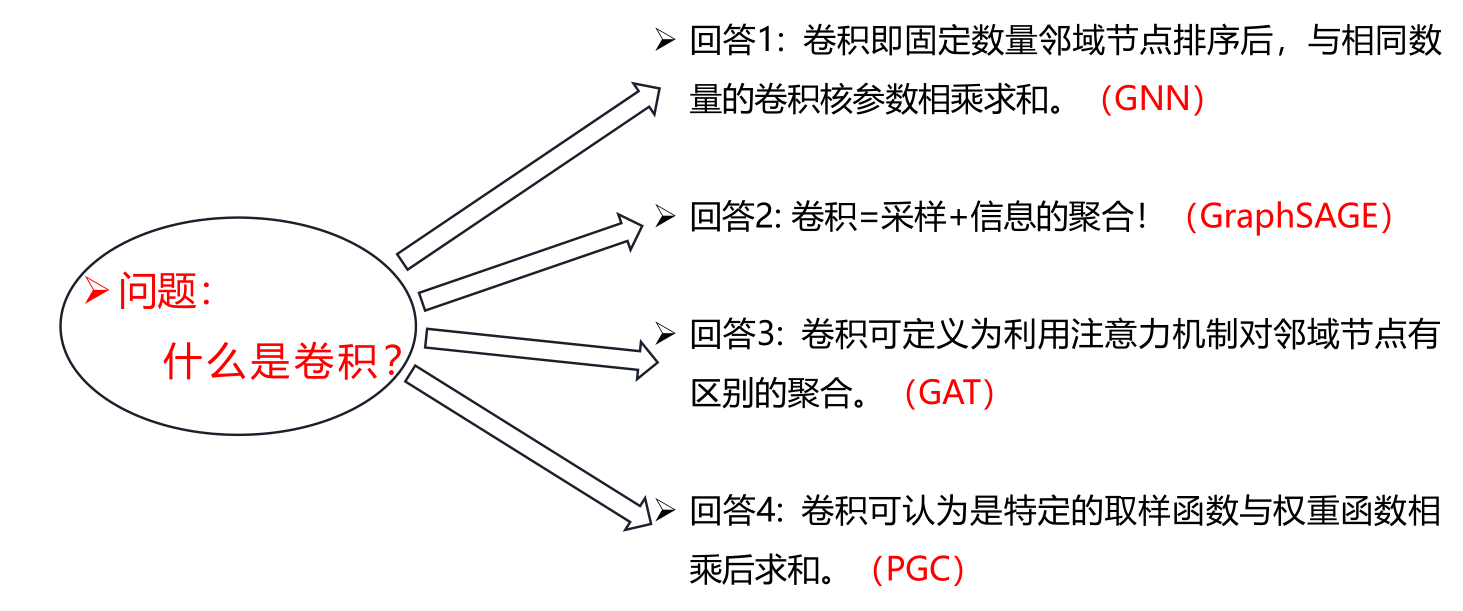

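1.1.1 Why linear layers?

Most deep learning approaches to 3D human pose estimation are based on convolutional neural networks, which learn translation-invariant filters that can be applied to entire images [13,24,32,33,45] or to 2D joint-location heatmaps [33,56]. Here, however, both the inputs and the outputs are low-dimensional points, so simpler and computationally cheaper linear layers can be used instead. ReLUs [29] are the standard choice for adding non-linearity to deep neural networks.

2. Layer structure

Each layer is a linear layer with a residual connection. It contains two identical sub-layers, each with a Linear-BN-ReLU-dropout structure.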

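    import torch.nn as nn


    class Linear(nn.Module):
        """Residual block: two Linear-BN-ReLU-dropout sub-layers plus a skip connection."""

        def __init__(self, linear_size, p_dropout=0.5):
            super(Linear, self).__init__()
            self.l_size = linear_size

            # ReLU and dropout have no learnable parameters, so both sub-layers share them
            self.relu = nn.ReLU(inplace=True)
            self.dropout = nn.Dropout(p_dropout)

            # first sub-layer
            self.w1 = nn.Linear(self.l_size, self.l_size)
            self.batch_norm1 = nn.BatchNorm1d(self.l_size)

            # second sub-layer
            self.w2 = nn.Linear(self.l_size, self.l_size)
            self.batch_norm2 = nn.BatchNorm1d(self.l_size)

        def forward(self, x):
            y = self.w1(x)
            y = self.batch_norm1(y)
            y = self.relu(y)
            y = self.dropout(y)

            y = self.w2(y)
            y = self.batch_norm2(y)
            y = self.relu(y)
            y = self.dropout(y)

            # residual connection: input and output have the same width
            out = x + y
            return out

3. Overall model structure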

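A pre-processing layer is added before the basic residual layers and an output layer after them. The pre-processing layer also has a Linear-BN-ReLU-dropout structure; it only maps from the input dimension input_size (16 * 2), i.e. the 2D positions of 16 keypoints, to the hidden dimension linear_size. The output layer is a single linear layer (no BN, ReLU, or dropout in the code below); it only maps from linear_size to the output dimension output_size (16 * 3), i.e. the 3D positions of 16 keypoints.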

    import torch.nn as nn

    # Linear is the residual block defined in section 2


    class LinearModel(nn.Module):
        def __init__(self,
                     linear_size=1024,
                     num_stage=2,
                     p_dropout=0.5):
            super(LinearModel, self).__init__()

            self.linear_size = linear_size
            self.p_dropout = p_dropout
            self.num_stage = num_stage

            # 2d joints
            self.input_size = 16 * 2
            # 3d joints
            self.output_size = 16 * 3

            # process input to linear size
            self.w1 = nn.Linear(self.input_size, self.linear_size)
            self.batch_norm1 = nn.BatchNorm1d(self.linear_size)

            # residual stages, registered through nn.ModuleList so their parameters are tracked
            self.linear_stages = nn.ModuleList(
                [Linear(self.linear_size, self.p_dropout) for _ in range(num_stage)]
            )

            # post processing
            self.w2 = nn.Linear(self.linear_size, self.output_size)

            self.relu = nn.ReLU(inplace=True)
            self.dropout = nn.Dropout(self.p_dropout)

        def forward(self, x):
            # pre-processing
            y = self.w1(x)
            y = self.batch_norm1(y)
            y = self.relu(y)
            y = self.dropout(y)

            # linear layers
            for stage in self.linear_stages:
                y = stage(y)

            # output projection to the 3D joint coordinates
            y = self.w2(y)
            return y
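
To get a feel for how small this model is, the trainable parameters can be counted by hand. The sketch below uses plain Python arithmetic rather than PyTorch; the helper names are made up for illustration, and the defaults mirror the constructor arguments above (linear_size=1024, num_stage=2, 16 joints).

```python
def linear_params(n_in, n_out):
    """Parameters of a fully connected layer: weight matrix plus bias."""
    return n_in * n_out + n_out


def batch_norm_params(n):
    """Learnable scale and shift; running statistics are buffers, not parameters."""
    return 2 * n


def count_params(linear_size=1024, num_stage=2, num_joints=16):
    input_size, output_size = num_joints * 2, num_joints * 3
    # pre-processing: Linear + BN
    pre = linear_params(input_size, linear_size) + batch_norm_params(linear_size)
    # one residual stage: two (Linear + BN) sub-layers
    stage = 2 * (linear_params(linear_size, linear_size) + batch_norm_params(linear_size))
    # output: a single Linear layer
    post = linear_params(linear_size, output_size)
    return pre + num_stage * stage + post


total = count_params()
print(total)  # 4291632
```

Under these assumptions the count comes to roughly 4.3 million parameters, almost all of them in the four 1024 x 1024 linear layers inside the residual stages.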