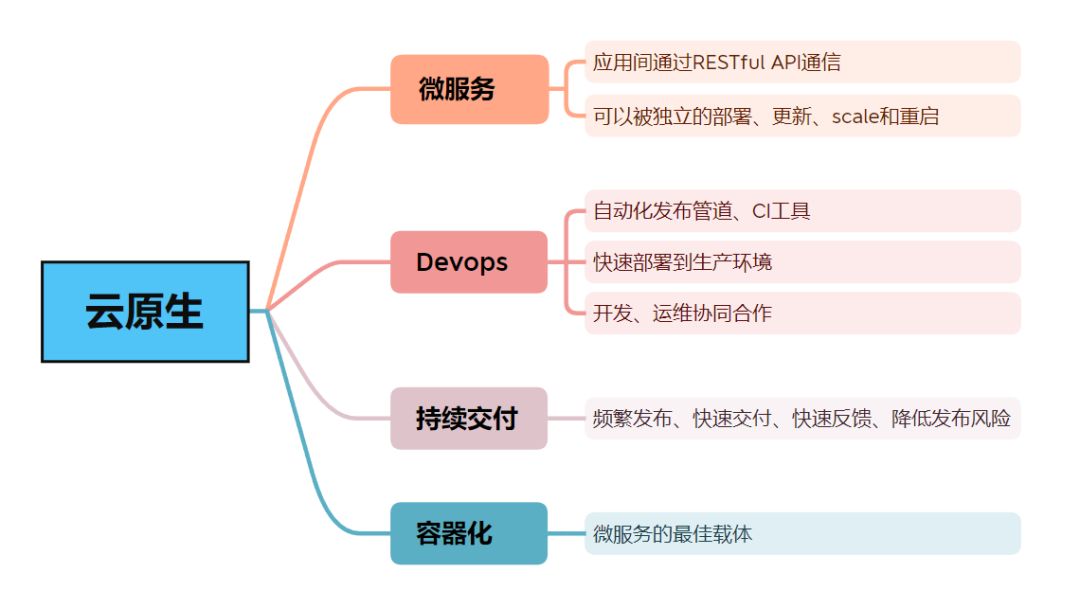

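Deploying a Service on Istio

This article describes how to deploy a Java Spring Cloud microservice application into an Istio mesh.

Preparing the base environment

Kind is used to simulate a Kubernetes environment. Reference article: https://blog.csdn.net/qq_52397471/article/details/135715485

Installing Istio in the Kubernetes cluster

Create a k8s cluster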

# Check the kind version
$ kind --version
kind version 0.22.0

# Check the docker version
$ docker --version
Docker version 26.0.0, build 2ae903e

# Create the cluster
$ kind create cluster --name istio-k8s-cluster

# Output like the following means the cluster was created successfully
Creating cluster "istio-k8s-cluster" ...
✓ Ensuring node image (kindest/node:v1.29.2) 🖼
✓ Preparing nodes 📦
✓ Writing configuration 📜
✓ Starting control-plane 🕹️
✓ Installing CNI 🔌
✓ Installing StorageClass 💾
Set kubectl context to "kind-istio-k8s-cluster"
You can now use your cluster with:

kubectl cluster-info --context kind-istio-k8s-cluster

Have a nice day! 👋
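
As the output above shows, kind registers the kubectl context under the cluster name prefixed with `kind-`. A minimal sketch of deriving that context name in a script follows; `kind_context` is a hypothetical helper introduced here for illustration, not part of kind or kubectl.

```shell
# Hypothetical helper: kind names the kubectl context "kind-<cluster name>".
kind_context() {
  printf 'kind-%s\n' "$1"
}

ctx=$(kind_context istio-k8s-cluster)
echo "$ctx"
# With the cluster running, the context can then be passed explicitly:
#   kubectl get nodes --context "$ctx"
```

Passing `--context` explicitly avoids acting on the wrong cluster when several kubeconfig contexts exist on the same machine.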

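Setting up a dashboard for Kind

Unlike minikube, kind does not ship with a built-in dashboard. You can still deploy the web-based Kubernetes Dashboard to inspect the cluster.

Resource manifest: https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml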

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque
---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.7.0
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.8
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

Deploy the dashboard using the manifest above or with the following command:

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

Verify that kubernetes-dashboard is running with the following command:

root@yuluo-Inspiron-3647:/kubernetes/kind# kubectl get pod -n kubernetes-dashboard
NAME READY STATUS RESTARTS AGE
dashboard-metrics-scraper-5657497c4c-jdqfg 1/1 Running 0 67s
kubernetes-dashboard-78f87ddfc-phmt6 1/1 Running 0 67s

Create a ServiceAccount and a ClusterRoleBinding to grant administrative access to the newly created cluster.

$ kubectl create serviceaccount -n kubernetes-dashboard admin-user
$ kubectl create clusterrolebinding -n kubernetes-dashboard admin-user --clusterrole cluster-admin --serviceaccount=kubernetes-dashboard:admin-user

A Bearer Token is required to sign in to the dashboard. Save the token to a variable with the following command.

$ kind_console_token=$(kubectl -n kubernetes-dashboard create token admin-user)

Use echo to display the token, then copy it for signing in to the dashboard.

$ echo $kind_console_token

Run the following kubectl command to make the dashboard reachable:

$ kubectl proxy
Starting to serve on 127.0.0.1:8001

Notes

By default, kubectl proxy only accepts connections on the localhost address. To allow access from other addresses, run kubectl proxy --address='0.0.0.0' --accept-hosts='^*$'.

Dashboard address: http://localhost:8001/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy/
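
The dashboard address follows the API server's service-proxy path layout: namespace, then `scheme:service-name:port-name`, then `/proxy/`. The sketch below rebuilds that URL from its parts so the pattern can be reused for other Services. `proxy_url` is a hypothetical helper written for this article, and the default host `localhost:8001` assumes `kubectl proxy` is running with its default port.

```shell
# Hypothetical helper that assembles a kubectl-proxy URL for a Service.
# $1 namespace, $2 service name, $3 scheme (default: https),
# $4 port name (default: empty), $5 proxy host (default: localhost:8001)
proxy_url() {
  printf 'http://%s/api/v1/namespaces/%s/services/%s:%s:%s/proxy/\n' \
    "${5:-localhost:8001}" "$1" "${3:-https}" "$2" "${4:-}"
}

# Reproduces the dashboard address used in this article.
proxy_url kubernetes-dashboard kubernetes-dashboard
```

The `https:` prefix tells the API server to talk HTTPS to the backend Service, which is needed here because the Dashboard Service targets port 8443.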

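With that in place the Kubernetes Dashboard login page can be reached from another machine, but signing in does not succeed:

For any address other than localhost, the Dashboard only allows sign-in over HTTPS. To use the Dashboard from a machine other than the host, a different access method is therefore required.

Here an SSH tunnel is used. This requires SSH access to the k8s master, which in this setup is the machine hosting kind.

ssh -L localhost:8001:localhost:8001 -NT root@192.168.2.10

Open the Kubernetes Dashboard and click through to view the Deployments and Services.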

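Installing Istio

Download: https://github.com/istio/istio/releases/tag/1.21.0

Reference: https://istio.io/latest/zh/docs/setup/getting-started/#download

- Move to the Istio package directory. For example, if the package is istio-1.21.0:

  $ cd istio-1.21.0

  The installation directory contains:

  - sample applications in the samples/ directory
  - the istioctl client binary in the bin/ directory

- Add the istioctl client to your path (Linux or macOS):

  $ export PATH=$PWD/bin:$PATH

Install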

# Install Istio
root@yuluo-Inspiron-3647:/kubernetes/istio-1.21.0# istioctl install --set profile=demo -y
✔ Istio core installed
✔ Istiod installed
✔ Ingress gateways installed
✔ Egress gateways installed
✔ Installation complete
Made this installation the default for injection and validation.

# Check the Istio installation
root@yuluo-Inspiron-3647:/kubernetes/istio-1.21.0# kubectl get ns
NAME STATUS AGE
default Active 65m
istio-system Active 2m23s
kube-node-lease Active 65m
kube-public Active 65m
kube-system Active 65m
kubernetes-dashboard Active 59m
local-path-storage Active 65m

root@yuluo-Inspiron-3647:/kubernetes/istio-1.21.0# kubectl get all -n istio-system
NAME READY STATUS RESTARTS AGE
pod/istio-egressgateway-b569895b5-j4hlt 1/1 Running 0 2m9s
pod/istio-ingressgateway-694c4b4d85-dhst9 1/1 Running 0 2m9s
pod/istiod-8596844f7d-7tvpj 1/1 Running 0 2m30s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
service/istio-egressgateway ClusterIP 10.96.217.217 <none> 80/TCP,443/TCP 2m8s
service/istio-ingressgateway LoadBalancer 10.96.60.122 <pending> 15021:31862/TCP,80:30459/TCP,443:30695/TCP,31400:32052/TCP,15443:30184/TCP 2m8s
service/istiod ClusterIP 10.96.141.1 <none> 15010/TCP,15012/TCP,443/TCP,15014/TCP 2m30s

NAME READY UP-TO-DATE AVAILABLE AGE
deployment.apps/istio-egressgateway 1/1 1 1 2m9s
deployment.apps/istio-ingressgateway 1/1 1 1 2m9s
deployment.apps/istiod 1/1 1 1 2m30s

NAME DESIRED CURRENT READY AGE
replicaset.apps/istio-egressgateway-b569895b5 1 1 1 2m9s
replicaset.apps/istio-ingressgateway-694c4b4d85 1 1 1 2m9s
replicaset.apps/istiod-8596844f7d 1 1 1 2m30s

# Label the namespace so that Istio automatically injects the Envoy sidecar proxy when applications are deployed:
kubectl label namespace default istio-injection=enabled

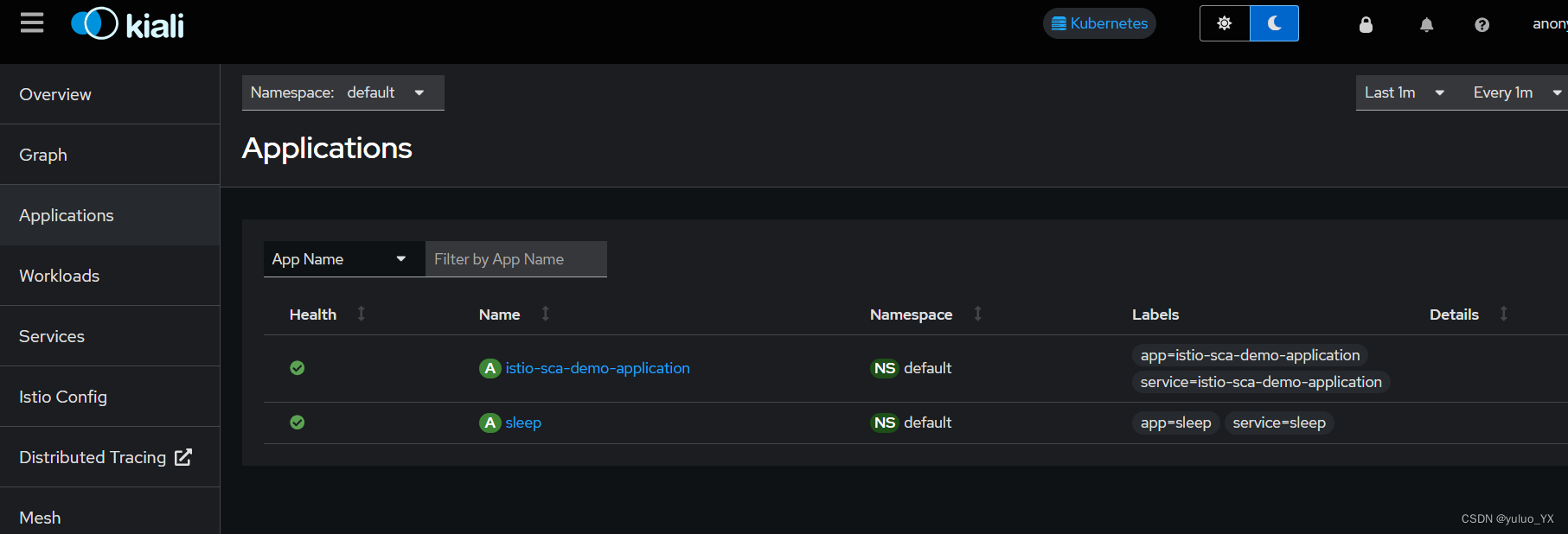

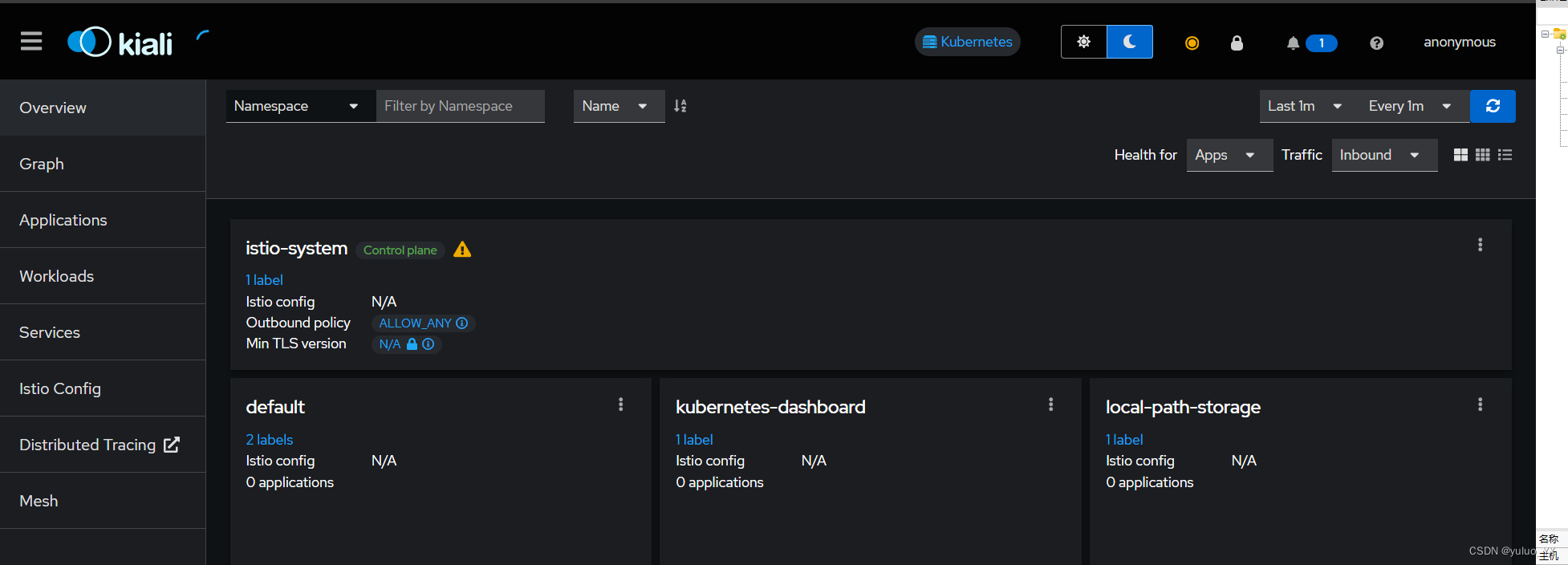

Installing the Istio dashboard, Kiali

kubectl apply -f samples/addons
kubectl rollout status deployment/kiali -n istio-system

# Wait for the rollout to complete
root@yuluo-Inspiron-3647:/kubernetes/istio-1.21.0# kubectl rollout status deployment/kiali -n istio-system
Waiting for deployment "kiali" rollout to finish: 0 of 1 updated replicas are available...
deployment "kiali" successfully rolled out

# List all installed Istio components
kubectl get service -n istio-system

# Open the Kiali dashboard
istioctl dashboard kiali

# Or access it through port forwarding; the Grafana and Prometheus ports need to be forwarded as well
kubectl port-forward -n istio-system service/kiali --address=0.0.0.0 20001:20001

Visit: http://ip:port/kiali
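
Since Grafana and Prometheus need the same treatment as Kiali, the forwarding commands can be generated from one list. The sketch below only prints the commands instead of running them. `addon_forward_cmds` is a hypothetical helper, and the Grafana (3000) and Prometheus (9090) ports are assumptions based on the defaults in samples/addons; confirm them with `kubectl get service -n istio-system` before relying on them.

```shell
# Hypothetical helper: print one port-forward command per addon Service.
# Port numbers for grafana and prometheus are assumed defaults; kiali's
# 20001 is the port used earlier in this article.
addon_forward_cmds() {
  for entry in kiali:20001 grafana:3000 prometheus:9090; do
    name=${entry%%:*}   # text before the colon
    port=${entry##*:}   # text after the colon
    echo "kubectl port-forward -n istio-system service/$name --address=0.0.0.0 $port:$port"
  done
}

addon_forward_cmds
```

Each `kubectl port-forward` blocks its terminal, so the printed commands are best run in separate terminals or as background jobs.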

Deploying the application

Preparing the SCA application

pom.xml

<dependencies>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-web</artifactId>
    </dependency>
</dependencies>

<dependencyManagement>
    <dependencies>
        <dependency>
            <groupId>org.springframework.cloud</groupId>
            <artifactId>spring-cloud-dependencies</artifactId>
            <version>${spring-cloud.version}</version>
            <type>pom</type>
            <scope>import</scope>
        </dependency>
        <dependency>
            <groupId>com.alibaba.cloud</groupId>
            <artifactId>spring-cloud-alibaba-dependencies</artifactId>
            <version>${spring-cloud-alibaba.version}</version>
            <type>pom</type>
            <scope>import</scope>
        </dependency>
    </dependencies>
</dependencyManagement>

<build>
    <plugins>
        <plugin>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-maven-plugin</artifactId>
        </plugin>
    </plugins>
</build>

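controller.java

import jakarta.annotation.Resource;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

// Exposes GET /app/svc and delegates to ProviderService.
@RestController
@RequestMapping("/app")
public class ProviderController {

    @Resource
    protected ProviderService providerService;

    @GetMapping("/svc")
    public String providerA() {
        return providerService.service();
    }
}

service.java

import org.springframework.stereotype.Service;

// Returns the fixed response body served by the controller.
@Service
public class ProviderServiceImpl implements ProviderService {

    @Override
    public String service() {
        return "This response from service application";
    }
}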

Writing the Dockerfile

FROM registry.cn-hangzhou.aliyuncs.com/yuluo-yx/java-base:V20240116

LABEL author="yuluo" \
      email="karashouk.pan@gmail.com"

COPY app.jar /app.jar
COPY application-k8s.yml /application-k8s.yml

ENTRYPOINT ["java", "-jar", "app.jar", "--spring.profiles.active=${SPRING_PROFILES_ACTIVE}"]

Build the image and push it to the Alibaba Cloud Container Registry.

Writing the k8s deployment manifests

ConfigMap that supplies the externalized configuration:

apiVersion: v1
data:
  spring-profiles-active: 'k8s'
kind: ConfigMap
metadata:
  name: istio-sca-demo-cm-cm

Application YAML

apiVersion: v1
kind: Service
metadata:
  name: istio-sca-demo-application-svc
  labels:
    app: istio-sca-demo-application
    service: istio-sca-demo-application
spec:
  ports:
    - port: 8080
      targetPort: 8080
      name: http
  type: ClusterIP
  selector:
    app: istio-sca-demo-application
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: istio-sca-demo-application
  labels:
    app: istio-sca-demo-application
    versions: v1
  # Add the following annotations to enable Istio sidecar injection
  annotations:
    sidecar.istio.io/inject: "true"
spec:
  replicas: 1
  selector:
    matchLabels:
      app: istio-sca-demo-application
  template:
    metadata:
      labels:
        app: istio-sca-demo-application
    spec:
      containers:
        - env:
            - name: SPRING_PROFILES_ACTIVE
              valueFrom:
                configMapKeyRef:
                  name: istio-sca-demo-cm-cm
                  key: spring-profiles-active
            # jvm arguments
            # - name: JAVA_OPTION
            #   value: "-Dfile.encoding=UTF-8 -XX:+UseParallelGC -XX:+PrintGCDetails -Xloggc:/var/log/devops-example.gc.log -XX:+HeapDumpOnOutOfMemoryError -XX:+DisableExplicitGC"
            # - name: XMX
            #   value: "128m"
            # - name: XMS
            #   value: "128m"
            # - name: XMN
            #   value: "64m"
          name: istio-sca-demo-application
          image: registry.cn-hangzhou.aliyuncs.com/yuluo-yx/istio-sca-demo-application:latest
          ports:
            - containerPort: 8080
          livenessProbe:
            httpGet:
              path: /actuator/health
              port: 30001
              scheme: HTTP
            initialDelaySeconds: 20
            periodSeconds: 10

Deployment

Enable Istio automatic injection in the default namespace:

kubectl label namespace default istio-injection=enabled

root@yuluo-Inspiron-3647:/kubernetes/sca-application# kubectl get ns -L istio-injection
NAME STATUS AGE ISTIO-INJECTION
default Active 179m enabled
istio-system Active 116m
kube-node-lease Active 179m
kube-public Active 179m
kube-system Active 179m
kubernetes-dashboard Active 173m
local-path-storage Active 179m

Run the following commands to deploy the service:

kubectl apply -f istio-sca-demo-cm.yaml
kubectl apply -f istio-sca-demo-application.yaml

Check the result:

root@yuluo-Inspiron-3647:/kubernetes/sca-application# kubectl get pods -l app=istio-sca-demo-application
NAME READY STATUS RESTARTS AGE
istio-sca-demo-application-797684cc96-5tl7b 2/2 Running 0 85s

With Istio automatic injection enabled, the pod runs two containers: the application itself and the sidecar proxy. That is why READY shows 2/2.
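
Besides reading the READY column, a script can confirm injection by looking for the `istio-proxy` container name. The sketch below checks a space-separated list of container names; `has_sidecar` is a hypothetical helper, and the sample list is hard-coded so the snippet runs without a cluster.

```shell
# Hypothetical helper: succeeds when the space-separated container
# name list in $1 contains an entry named exactly "istio-proxy".
has_sidecar() {
  case " $1 " in
    *" istio-proxy "*) return 0 ;;
    *) return 1 ;;
  esac
}

# Sample input; against a live cluster the list could come from:
#   kubectl get pod <pod-name> -o jsonpath='{.spec.containers[*].name}'
names='istio-sca-demo-application istio-proxy'
if has_sidecar "$names"; then
  echo "sidecar injected"
else
  echo "no sidecar found"
fi
```

Padding both the list and the pattern with spaces keeps the match exact, so a container called something like `my-istio-proxy-helper` would not count as a sidecar.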

The describe output shows the injected container:

$ kubectl describe pod istio-sca-demo-application-797684cc96-5tl7b

# Excerpt of the output; this container is the Istio sidecar
istio-proxy:
  Container ID: containerd://26e95eaa56904faeb183f0cad53165354b789b44294aa898c16ab3da0d0f09b3
  Image: docker.io/istio/proxyv2:1.21.0
  Image ID: docker.io/istio/proxyv2@sha256:1b10ab67aa311bcde7ebc18477d31cc73d8169ad7f3447d86c40a2b056c456e4
  Port: 15090/TCP
  Host Port: 0/TCP
  Args:
    proxy
    sidecar
    --domain
    $(POD_NAMESPACE).svc.cluster.local
    --proxyLogLevel=warning
    --proxyComponentLogLevel=misc:error
    --log_output_level=default:info
  State: Running
    Started: Mon, 25 Mar 2024 14:00:02 +0800
  Ready: True
  Restart Count: 0

In Kiali, the log lines from the injected Envoy sidecar appear interleaved with the application log:

:: Spring Boot :: (v3.0.9)
2024-03-25T06:00:03.486Z INFO 1 --- [ main] c.a.cloud.k8s.IstioDemoApplication : Starting IstioDemoApplication v2024.01.08 using Java 17.0.10 with PID 1 (/app.jar started by root in /)
2024-03-25T06:00:03.489Z INFO 1 --- [ main] c.a.cloud.k8s.IstioDemoApplication : The following 1 profile is active: "k8s"
# The next two lines come from the sidecar
2024-03-25T06:00:03.985996Z info Readiness succeeded in 1.703126315s
2024-03-25T06:00:03.988340Z info Envoy proxy is ready
2024-03-25T06:00:05.314Z INFO 1 --- [ main] o.s.b.w.embedded.tomcat.TomcatWebServer : Tomcat initialized with port(s): 8080 (http)

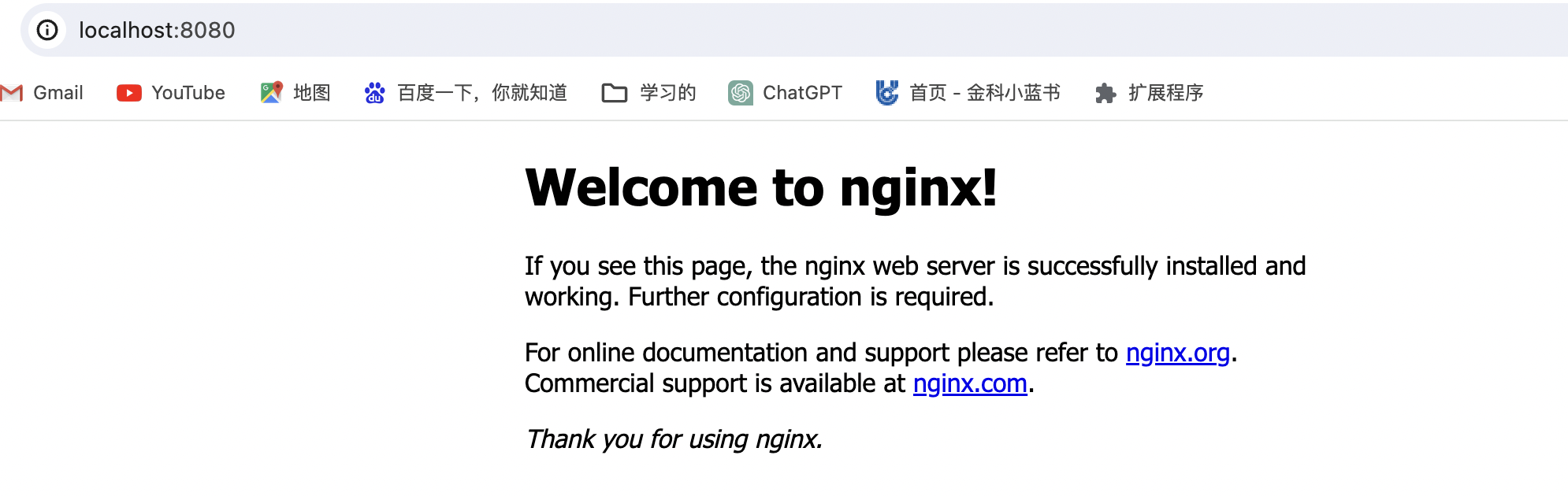

Testing access

kubectl port-forward svc/istio-sca-demo-application-svc --address 0.0.0.0 8080:8080

root@yuluo-Inspiron-3647:/kubernetes/kind# curl 192.168.2.10:8080/app/svc
This response from service application

The service was deployed successfully!
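
The manual curl check can be turned into a small scripted smoke test. The response body carries no trailing newline, so comparing it through command substitution is more reliable than eyeballing terminal output. `check_body` is a hypothetical helper, and the sample call uses a literal string so the snippet runs without the port-forward.

```shell
# Expected body, taken from ProviderServiceImpl in this article.
expected='This response from service application'

# Hypothetical helper: succeeds only on an exact match with $expected.
check_body() {
  [ "$1" = "$expected" ]
}

# Against the live port-forward the body would come from curl:
#   check_body "$(curl -s 192.168.2.10:8080/app/svc)"
if check_body 'This response from service application'; then
  echo "smoke test passed"
else
  echo "smoke test failed"
fi
```

The exit status of `check_body` makes it usable in a CI step or a readiness script without parsing any output.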

Summary

root@yuluo-Inspiron-3647:/kubernetes/sca-application# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

default istio-sca-demo-application-797684cc96-5tl7b 2/2 Running 0 5m38s

default loki-0 2/2 Running 0 129m

default sleep-7656cf8794-dx7zm 2/2 Running 0 14m

istio-system grafana-6f68dfd8f4-pf48z 1/1 Running 0 129m

istio-system istio-egressgateway-b569895b5-j4hlt 1/1 Running 0 147m

istio-system istio-ingressgateway-694c4b4d85-dhst9 1/1 Running 0 147m

istio-system istiod-8596844f7d-7tvpj 1/1 Running 0 147m

istio-system jaeger-7d7d59b9d-s5chv 1/1 Running 0 129m

istio-system kiali-588bc98cd-gqmnr 1/1 Running 0 129m

istio-system prometheus-7545dd48db-kvmrm 2/2 Running 0 129m

kube-system coredns-76f75df574-jxl2w 1/1 Running 0 3h30m

kube-system coredns-76f75df574-r8bxm 1/1 Running 0 3h30m

kube-system etcd-istio-k8s-cluster-control-plane 1/1 Running 0 3h30m

kube-system kindnet-zh4t9 1/1 Running 0 3h30m

kube-system kube-apiserver-istio-k8s-cluster-control-plane 1/1 Running 0 3h30m

kube-system kube-controller-manager-istio-k8s-cluster-control-plane 1/1 Running 0 3h31m

kube-system kube-proxy-kn666 1/1 Running 0 3h30m

kube-system kube-scheduler-istio-k8s-cluster-control-plane 1/1 Running 0 3h30m

kubernetes-dashboard dashboard-metrics-scraper-5657497c4c-jdqfg 1/1 Running 0 3h24m

kubernetes-dashboard kubernetes-dashboard-78f87ddfc-phmt6 1/1 Running 0 3h24m

local-path-storage local-path-provisioner-7577fdbbfb-sjp54 1/1 Running 0 3h30m

These are all the pods deployed over the course of this article. Kiali shows the details of the application: