Combining Word Embedding Vectors and Positional Encoding Vectors

flyfish

Text sequence -> word embedding vectors (Word Embedding Vector) -> word vectors + positional encoding vectors (Positional Encoding Vector)

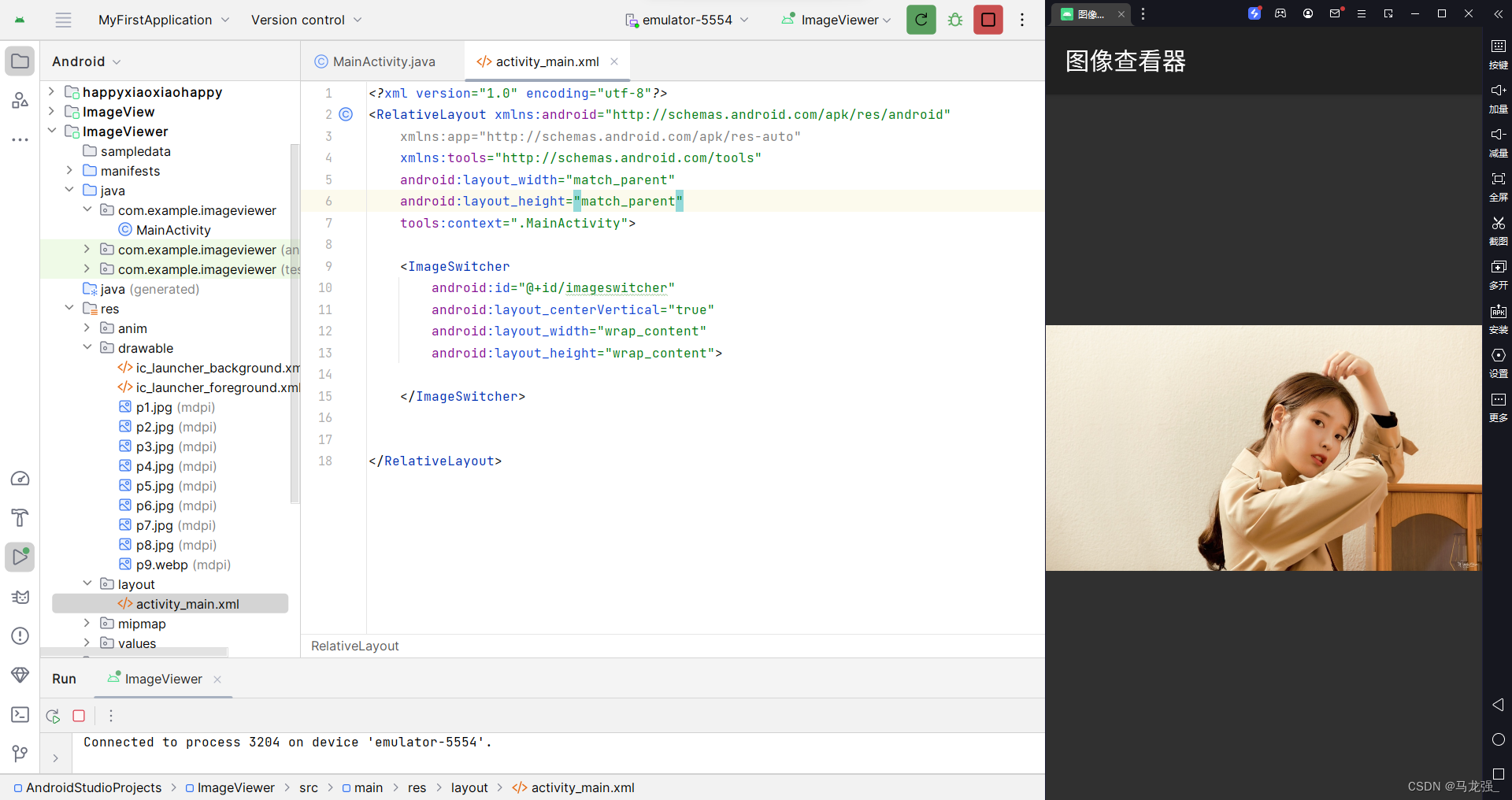

The embedding dimension is set to 3 here so that the tensors are small enough to print and inspect.

from collections import Counter

import numpy as np
import torch
import torch.nn as nn

# Define the TranslationCorpus class
class TranslationCorpus:
    def __init__(self, sentences):
        self.sentences = sentences
        # Maximum sentence length of the source and target languages, plus 1 and 2
        # respectively, to leave room for padding and the special tokens
        self.src_len = max(len(sentence[0].split()) for sentence in sentences) + 1
        self.tgt_len = max(len(sentence[1].split()) for sentence in sentences) + 2
        # Build the source and target vocabularies
        self.src_vocab, self.tgt_vocab = self.create_vocabularies()
        # Build the index-to-word mappings
        self.src_idx2word = {v: k for k, v in self.src_vocab.items()}
        self.tgt_idx2word = {v: k for k, v in self.tgt_vocab.items()}

    # Build the vocabularies
    def create_vocabularies(self):
        # Count word frequencies in the source and target languages
        src_counter = Counter(word for sentence in self.sentences for word in sentence[0].split())
        tgt_counter = Counter(word for sentence in self.sentences for word in sentence[1].split())
        # Build both vocabularies, assigning each word a unique index
        src_vocab = {'<pad>': 0, **{word: i + 1 for i, word in enumerate(src_counter)}}
        tgt_vocab = {'<pad>': 0, '<sos>': 1, '<eos>': 2,
                     **{word: i + 3 for i, word in enumerate(tgt_counter)}}
        return src_vocab, tgt_vocab

    # Build a batch of data
    def make_batch(self, batch_size, test_batch=False):
        input_batch, output_batch, target_batch = [], [], []
        # Randomly pick sentence indices
        sentence_indices = torch.randperm(len(self.sentences))[:batch_size]
        for index in sentence_indices:
            src_sentence, tgt_sentence = self.sentences[index]
            # Convert the source and target sentences to index sequences
            src_seq = [self.src_vocab[word] for word in src_sentence.split()]
            tgt_seq = ([self.tgt_vocab['<sos>']]
                       + [self.tgt_vocab[word] for word in tgt_sentence.split()]
                       + [self.tgt_vocab['<eos>']])
            # Pad the source and target sequences
            src_seq += [self.src_vocab['<pad>']] * (self.src_len - len(src_seq))
            tgt_seq += [self.tgt_vocab['<pad>']] * (self.tgt_len - len(tgt_seq))
            # Add the processed sequences to the batch
            input_batch.append(src_seq)
            # For a test batch the decoder input is <sos> followed by padding only
            output_batch.append([self.tgt_vocab['<sos>']] + [self.tgt_vocab['<pad>']] * (self.tgt_len - 2)
                                if test_batch else tgt_seq[:-1])
            target_batch.append(tgt_seq[1:])
        # Convert the batches to LongTensor
        input_batch = torch.LongTensor(input_batch)
        output_batch = torch.LongTensor(output_batch)
        target_batch = torch.LongTensor(target_batch)
        return input_batch, output_batch, target_batch

sentences = [
    ['like tree like fruit', '羊毛 出在 羊身上'],
    ['East west home is best', '金窝 银窝 不如 自己的 草窝'],
]

d_embedding = 3  # embedding dimension
# Create an instance of the corpus class
corpus = TranslationCorpus(sentences)

# Build the sinusoidal positional encoding table, used to inject position information into the Transformer
def get_sin_enc_table(n_position, embedding_dim):
    #------------------------- Dimensions ------------------------------
    # n_position: maximum length of the input sequence
    # embedding_dim: dimension of the word embedding vectors
    #-------------------------------------------------------------------
    # Initialize the table from the position and dimension sizes
    sinusoid_table = np.zeros((n_position, embedding_dim))
    # Loop over every position and dimension and compute the angle
    for pos_i in range(n_position):
        for hid_j in range(embedding_dim):
            angle = pos_i / np.power(10000, 2 * (hid_j // 2) / embedding_dim)
            sinusoid_table[pos_i, hid_j] = angle
    # Apply sine and cosine
    sinusoid_table[:, 0::2] = np.sin(sinusoid_table[:, 0::2])  # dim 2i, even dimensions
    sinusoid_table[:, 1::2] = np.cos(sinusoid_table[:, 1::2])  # dim 2i+1, odd dimensions
    #------------------------- Dimensions ------------------------------
    # sinusoid_table has shape [n_position, embedding_dim]
    #-------------------------------------------------------------------
    return torch.FloatTensor(sinusoid_table)  # return the sinusoidal positional encoding table
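The nested loops in `get_sin_enc_table` can also be written with NumPy broadcasting. The following is a minimal sketch under that assumption, not a replacement taken from the original post; the name `sin_enc_table_vectorized` is hypothetical, and the function is only meant to produce the same values as the loop version.

```python
import numpy as np
import torch


def sin_enc_table_vectorized(n_position, embedding_dim):
    # Hypothetical helper: same formula as get_sin_enc_table, without Python loops.
    positions = np.arange(n_position)[:, None]   # shape [n_position, 1]
    dims = np.arange(embedding_dim)[None, :]     # shape [1, embedding_dim]
    # Dimensions 2i and 2i+1 share one frequency, hence the integer division by 2.
    angles = positions / np.power(10000, 2 * (dims // 2) / embedding_dim)
    table = np.zeros((n_position, embedding_dim))
    table[:, 0::2] = np.sin(angles[:, 0::2])     # even dimensions use sine
    table[:, 1::2] = np.cos(angles[:, 1::2])     # odd dimensions use cosine
    return torch.tensor(table, dtype=torch.float32)


table = sin_enc_table_vectorized(7, 3)
print(table.shape)
```

Position 0 always maps to 0 in the sine columns and 1 in the cosine columns, which gives a quick sanity check on either version.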

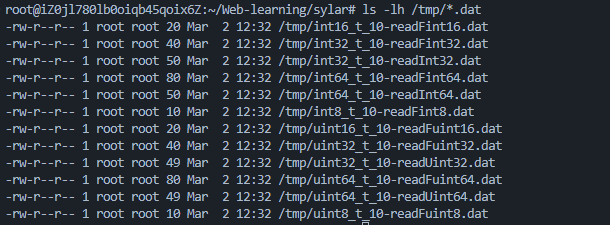

print(corpus.src_len)  # 6
print(corpus.src_vocab)  # {'<pad>': 0, 'like': 1, 'tree': 2, 'fruit': 3, 'East': 4, 'west': 5, 'home': 6, 'is': 7, 'best': 8}
print("get_sin_enc_table:", get_sin_enc_table(corpus.src_len + 1, d_embedding))

src_emb = nn.Embedding(len(corpus.src_vocab), d_embedding)  # word embedding layer
pos_emb = nn.Embedding.from_pretrained(
    get_sin_enc_table(corpus.src_len + 1, d_embedding), freeze=True)  # positional embedding layer
# src_emb: Embedding(9, 3)
# pos_emb: Embedding(7, 3)
print("src_emb:", src_emb.weight)
print("pos_emb:", pos_emb.weight)

#-----------------------------------------------------------------
enc_inputs, dec_inputs, target_batch = corpus.make_batch(batch_size=1, test_batch=True)
print("enc_inputs:", enc_inputs)
# Create a sequence of position indices from 1 to source_len
pos_indices = torch.arange(1, enc_inputs.size(1) + 1).unsqueeze(0).to(enc_inputs)
print("pos_indices:", pos_indices)
#------------------------- Dimensions ------------------------------
# pos_indices has shape [1, source_len]
#-----------------------------------------------------------------
# Add the word embeddings and positional embeddings of the input: [batch_size, source_len, embedding_dim]
a = src_emb(enc_inputs)
b = pos_emb(pos_indices)
print("src_emb(enc_inputs)", a)
print("pos_emb(pos_indices)", b)
enc_outputs = a + b
print("enc_outputs:", enc_outputs)

6
{'<pad>': 0, 'like': 1, 'tree': 2, 'fruit': 3, 'East': 4, 'west': 5, 'home': 6, 'is': 7, 'best': 8}
get_sin_enc_table: tensor([[ 0.0000,  1.0000,  0.0000],
        [ 0.8415,  0.5403,  0.0022],
        [ 0.9093, -0.4161,  0.0043],
        [ 0.1411, -0.9900,  0.0065],
        [-0.7568, -0.6536,  0.0086],
        [-0.9589,  0.2837,  0.0108],
        [-0.2794,  0.9602,  0.0129]])
src_emb: Parameter containing:
tensor([[-1.2816e+00, -6.5114e-01,  2.9241e-01],
        [-9.8684e-01, -7.9353e-01,  5.9976e-01],
        [-1.1094e+00,  7.7750e-02,  7.6362e-01],
        [-1.4485e+00, -7.4217e-01,  1.5868e+00],
        [ 6.2311e-02,  3.3751e-01, -2.4862e-01],
        [-2.1817e-01, -4.4703e-01, -6.0495e-01],
        [ 1.0660e+00, -1.3480e-03, -1.8772e-01],
        [ 1.0988e+00, -1.7080e-01, -2.7901e-01],
        [-8.7047e-01,  1.2164e+00, -1.3154e+00]], requires_grad=True)
pos_emb: Parameter containing:
tensor([[ 0.0000,  1.0000,  0.0000],
        [ 0.8415,  0.5403,  0.0022],
        [ 0.9093, -0.4161,  0.0043],
        [ 0.1411, -0.9900,  0.0065],
        [-0.7568, -0.6536,  0.0086],
        [-0.9589,  0.2837,  0.0108],
        [-0.2794,  0.9602,  0.0129]])
enc_inputs: tensor([[4, 5, 6, 7, 8, 0]])
pos_indices: tensor([[1, 2, 3, 4, 5, 6]])
src_emb(enc_inputs) tensor([[[ 0.0623,  0.3375, -0.2486],
         [-0.2182, -0.4470, -0.6049],
         [ 1.0660, -0.0013, -0.1877],
         [ 1.0988, -0.1708, -0.2790],
         [-0.8705,  1.2164, -1.3154],
         [-1.2816, -0.6511,  0.2924]]], grad_fn=<EmbeddingBackward0>)
pos_emb(pos_indices) tensor([[[ 0.8415,  0.5403,  0.0022],
         [ 0.9093, -0.4161,  0.0043],
         [ 0.1411, -0.9900,  0.0065],
         [-0.7568, -0.6536,  0.0086],
         [-0.9589,  0.2837,  0.0108],
         [-0.2794,  0.9602,  0.0129]]])
enc_outputs: tensor([[[ 0.9038,  0.8778, -0.2465],
         [ 0.6911, -0.8632, -0.6006],
         [ 1.2071, -0.9913, -0.1813],
         [ 0.3420, -0.8244, -0.2704],
         [-1.8294,  1.5001, -1.3047],
         [-1.5610,  0.3090,  0.3053]]], grad_fn=<AddBackward0>)
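In the output above, `pos_emb(pos_indices)` has a leading dimension of 1 because `pos_indices` has shape [1, source_len]. With a batch size larger than 1 the same positional vectors are broadcast across the batch. The sketch below illustrates only this shape behavior: the sizes are copied from the toy corpus, and a random table stands in for the sinusoidal one, which is an assumption made to keep the example short.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # only to make the random weights repeatable

vocab_size, source_len, d_embedding = 9, 6, 3  # sizes of the toy corpus
src_emb = nn.Embedding(vocab_size, d_embedding)
# Placeholder table (assumption): random values instead of the sinusoidal table,
# because only the shapes matter for this illustration.
pos_emb = nn.Embedding.from_pretrained(
    torch.randn(source_len + 1, d_embedding), freeze=True)

# Both source sentences of the toy corpus as padded index sequences
enc_inputs = torch.tensor([[1, 2, 1, 3, 0, 0],
                           [4, 5, 6, 7, 8, 0]])
pos_indices = torch.arange(1, enc_inputs.size(1) + 1).unsqueeze(0)  # [1, source_len]

word_part = src_emb(enc_inputs)      # [2, source_len, d_embedding]
pos_part = pos_emb(pos_indices)      # [1, source_len, d_embedding]
enc_outputs = word_part + pos_part   # broadcast over the batch dimension
print(word_part.shape, pos_part.shape, enc_outputs.shape)
```

Every sentence in the batch therefore receives the same positional offsets, which is what one would expect from an encoding that depends on position only.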

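Suppose the Transformer uses 512-dimensional word embeddings. The step is the same as above: the word embeddings and positional embeddings of the input are added, giving a tensor of shape [batch_size, source_len, embedding_dim]:

enc_outputs = src_emb(enc_inputs) + pos_emb(pos_indices)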
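As a rough illustration of the 512-dimensional case, the sketch below repeats the addition with `d_model = 512`. The vocabulary size, sequence length, and input indices are reused from the toy corpus as assumptions, and the sinusoidal table is rebuilt inline with torch operations so that the block is self-contained.

```python
import torch
import torch.nn as nn

d_model = 512     # embedding dimension mentioned in the text
vocab_size = 9    # assumption: vocabulary size of the toy corpus
source_len = 6    # assumption: source length of the toy corpus

# Sinusoidal table with the same formula as get_sin_enc_table
positions = torch.arange(source_len + 1, dtype=torch.float32).unsqueeze(1)  # [7, 1]
dims = torch.arange(d_model)
exponent = 2 * torch.div(dims, 2, rounding_mode="floor") / d_model          # [512]
angles = positions / (10000.0 ** exponent)                                  # [7, 512]
table = torch.zeros(source_len + 1, d_model)
table[:, 0::2] = torch.sin(angles[:, 0::2])  # even dimensions use sine
table[:, 1::2] = torch.cos(angles[:, 1::2])  # odd dimensions use cosine

src_emb = nn.Embedding(vocab_size, d_model)                 # trainable word embeddings
pos_emb = nn.Embedding.from_pretrained(table, freeze=True)  # fixed positional table

enc_inputs = torch.tensor([[4, 5, 6, 7, 8, 0]])
pos_indices = torch.arange(1, enc_inputs.size(1) + 1).unsqueeze(0)
enc_outputs = src_emb(enc_inputs) + pos_emb(pos_indices)
print(enc_outputs.shape)
```

Only the last dimension changes compared with the 3-dimensional run; the batch and sequence dimensions stay the same.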