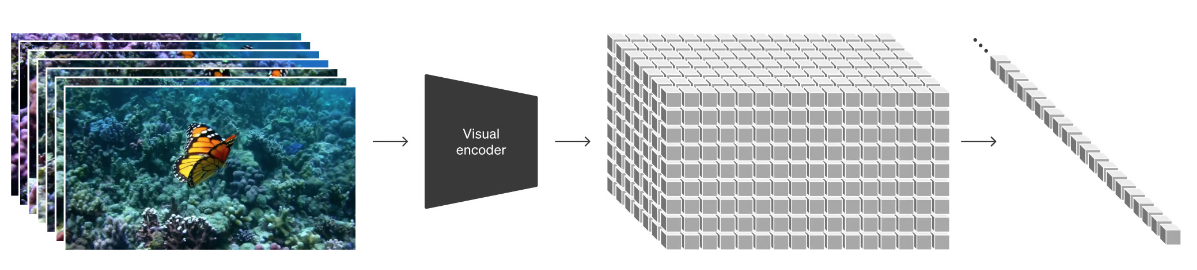

Scaled Dot-Product Attention

flyfish

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V$$

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class ScaledDotProductAttention(nn.Module):
        ''' Scaled Dot-Product Attention '''

        def __init__(self, temperature, attn_dropout=0.1):
            super().__init__()
            # temperature is the scaling divisor, typically sqrt(d_k)
            self.temperature = temperature
            self.dropout = nn.Dropout(attn_dropout)

        def forward(self, q, k, v, mask=None):
            # q, k, v are 4-D: transpose(2, 3) swaps the last two dims of k
            attn = torch.matmul(q / self.temperature, k.transpose(2, 3))

            if mask is not None:
                # Where mask == 0 the score becomes very negative,
                # so softmax assigns those positions ~0 weight
                attn = attn.masked_fill(mask == 0, -1e9)

            attn = self.dropout(F.softmax(attn, dim=-1))
            output = torch.matmul(attn, v)

            return output, attn
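
The module above can be exercised with a small sketch like the following. It assumes `q`, `k`, `v` are shaped `(batch, heads, seq_len, d_k)`, which is what `k.transpose(2, 3)` implies, and that `temperature` is set to `sqrt(d_k)` so the computation matches the formula. The function name `attention` and the tensor sizes are illustrative assumptions, not part of the original code; dropout is left out so the result is deterministic.

```python
import math
import torch
import torch.nn.functional as F

def attention(q, k, v, temperature, mask=None):
    # Same steps as the module's forward(), without dropout.
    scores = torch.matmul(q / temperature, k.transpose(-2, -1))
    if mask is not None:
        scores = scores.masked_fill(mask == 0, -1e9)
    attn = F.softmax(scores, dim=-1)
    return torch.matmul(attn, v), attn

torch.manual_seed(0)
batch, heads, seq_len, d_k = 2, 4, 5, 8  # illustrative sizes
q = torch.randn(batch, heads, seq_len, d_k)
k = torch.randn(batch, heads, seq_len, d_k)
v = torch.randn(batch, heads, seq_len, d_k)

# Lower-triangular mask: position i may only attend to positions <= i.
# Its (seq_len, seq_len) shape broadcasts over batch and heads.
mask = torch.tril(torch.ones(seq_len, seq_len))

output, attn = attention(q, k, v, temperature=math.sqrt(d_k), mask=mask)
print(output.shape)  # torch.Size([2, 4, 5, 8])
print(attn.shape)    # torch.Size([2, 4, 5, 5])
```

Each row of `attn` is a probability distribution over key positions, so it sums to 1, and the masked (future) positions receive effectively zero weight.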

The official PyTorch implementation

torch.nn.functional.scaled_dot_product_attention

    import math
    import torch

    # Efficient implementation equivalent to the following:
    def scaled_dot_product_attention(query, key, value, attn_mask=None, dropout_p=0.0, is_causal=False, scale=None) -> torch.Tensor:
        L, S = query.size(-2), key.size(-2)
        scale_factor = 1 / math.sqrt(query.size(-1)) if scale is None else scale
        attn_bias = torch.zeros(L, S, dtype=query.dtype)
        if is_causal:
            assert attn_mask is None
            # Lower-triangular mask: query i may attend only to keys j <= i
            temp_mask = torch.ones(L, S, dtype=torch.bool).tril(diagonal=0)
            attn_bias.masked_fill_(temp_mask.logical_not(), float("-inf"))
            # .to() returns a new tensor, so the result must be assigned
            attn_bias = attn_bias.to(query.dtype)

        if attn_mask is not None:
            if attn_mask.dtype == torch.bool:
                # True means "attend"; False positions are set to -inf
                attn_bias.masked_fill_(attn_mask.logical_not(), float("-inf"))
            else:
                # Out-of-place add so a mask with batch dims can broadcast
                attn_bias = attn_mask + attn_bias

        attn_weight = query @ key.transpose(-2, -1) * scale_factor
        attn_weight += attn_bias
        attn_weight = torch.softmax(attn_weight, dim=-1)
        attn_weight = torch.dropout(attn_weight, dropout_p, train=True)
        return attn_weight @ value
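
As a sanity check, the reference code above can be compared against the built-in function. The sketch below assumes PyTorch 2.0 or later, where `torch.nn.functional.scaled_dot_product_attention` is available. `reference_sdpa` is a hypothetical helper name that keeps only the causal path and omits dropout and `attn_mask`; the tensor sizes are arbitrary.

```python
import math
import torch
import torch.nn.functional as F

def reference_sdpa(query, key, value, is_causal=False):
    # Plain-PyTorch version of softmax(Q K^T / sqrt(d_k)) V,
    # without dropout and without an explicit attn_mask.
    L, S = query.size(-2), key.size(-2)
    scores = query @ key.transpose(-2, -1) / math.sqrt(query.size(-1))
    if is_causal:
        causal = torch.ones(L, S, dtype=torch.bool).tril(diagonal=0)
        scores = scores.masked_fill(causal.logical_not(), float("-inf"))
    return torch.softmax(scores, dim=-1) @ value

torch.manual_seed(0)
q = torch.randn(2, 4, 6, 16)  # (batch, heads, seq_len, head_dim), illustrative
k = torch.randn(2, 4, 6, 16)
v = torch.randn(2, 4, 6, 16)

expected = reference_sdpa(q, k, v, is_causal=True)
actual = F.scaled_dot_product_attention(q, k, v, is_causal=True)

# The two results should agree up to floating-point tolerance.
print(torch.allclose(expected, actual, atol=1e-4))
```

The built-in version may dispatch to a fused kernel, so small floating-point differences are expected; comparing with a tolerance rather than exact equality accounts for that.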

Dot product

Calculation process

(1, 2, 3) • (7, 9, 11) = 1×7 + 2×9 + 3×11 = 58

(1, 2, 3) • (8, 10, 12) = 1×8 + 2×10 + 3×12 = 64

(4, 5, 6) • (7, 9, 11) = 4×7 + 5×9 + 6×11 = 139

(4, 5, 6) • (8, 10, 12) = 4×8 + 5×10 + 6×12 = 154
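
The four dot products above are exactly the entries of $QK^{T}$ if the left-hand vectors are taken as the rows of $Q$ and the right-hand vectors as the rows of $K$. That reading is an assumption made here to connect the arithmetic to the attention formula. A minimal pure-Python sketch (the helper names `dot` and `softmax` are illustrative):

```python
import math

Q = [[1, 2, 3], [4, 5, 6]]     # rows are the left-hand vectors above
K = [[7, 9, 11], [8, 10, 12]]  # rows are the right-hand vectors above

def dot(a, b):
    # Sum of element-wise products of two equal-length vectors.
    return sum(x * y for x, y in zip(a, b))

# scores[i][j] = Q[i] . K[j], i.e. the matrix product Q K^T
scores = [[dot(q_row, k_row) for k_row in K] for q_row in Q]
print(scores)  # [[58, 64], [139, 154]]

def softmax(row):
    # Subtract the row maximum before exponentiating for numerical stability.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

# Scale by sqrt(d_k) and normalise each row, as in the attention formula.
d_k = len(Q[0])
weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
```

Each row of `weights` sums to 1; multiplying it by a value matrix $V$ would give the attention output for that query.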