1.8 Spark编程入门

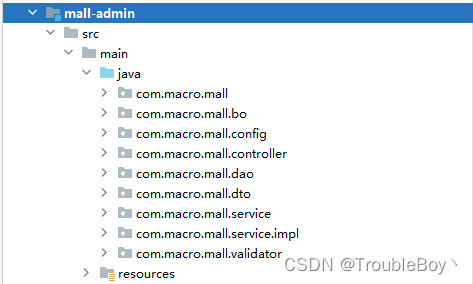

1.8.1 通过IDEA创建Spark工程

ps:工程创建之前步骤省略,在scala中已经讲解,直接默认是创建好工程的 导入Pom文件依赖

<!-- 声明公有的属性 -->

<properties>

<maven.compiler.source>1.8</maven.compiler.source>

<maven.compiler.target>1.8</maven.compiler.target>

<encoding>UTF-8</encoding>

<scala.version>2.12.8</scala.version>

<spark.version>3.1.2</spark.version>

<hadoop.version>3.2.1</hadoop.version>

<scala.compat.version>2.12</scala.compat.version>

</properties>

<!-- 声明并引入公有的依赖 -->

<dependencies>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.12</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

</dependencies>

<!-- 配置构建信息 -->

<build>

<!-- 资源文件夹 -->

<sourceDirectory>src/main/scala</sourceDirectory>

<!-- 声明并引入构建的插件 -->

<plugins>

<!-- 用于编译Scala代码到class -->

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.2</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

<configuration>

<args>

<arg>-dependencyfile</arg>

<arg>${project.build.directory}/.scala_dependencies</arg>

</args>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<!-- 程序打包 -->

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.4.3</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<!-- 过滤掉以下文件,不打包 :解决包重复引用导致的打包错误-->

<filters>

<filter><artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers>

<!-- 打成可执行的jar包 的主方法入口-->

<transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass></mainClass>

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

1.8.2 Scala实现WordCount

package com.qianfeng.sparkcore

import org.apache.spark.{SparkConf, SparkContext}

/**

* 使用Spark统计单词个数

*/

object Demo01_SparkWC {

def main(args: Array[String]): Unit = {

//1、获取spark上下文环境 local[n] : n代表cpu核数,*代表可用的cpu数量;如果打包服务器运行,则需要注释掉.setMaster()

val conf = new SparkConf().setAppName("spark-wc").setMaster("local[*]")

val sc = new SparkContext(conf)

//2、初始化数据

val rdd = sc.textFile("/Users/liyadong/data/sparkdata/test.txt")

//3、对数据进行加工

val sumRDD = rdd

.filter(_.length >= 10)

.flatMap(_.split("\t"))

.map((_, 1))

.reduceByKey(_ + _)

//4、对数据进行输出

println(sumRDD.collect().toBuffer)

sumRDD.foreach(println(_))

//5、关闭sc对象

sc.stop()

}

}

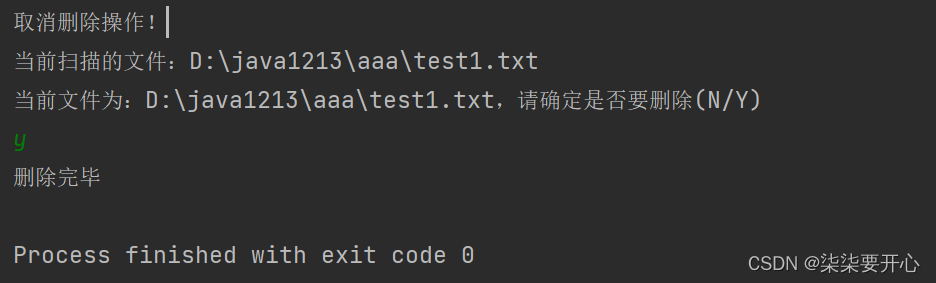

1.8.3 程序打包上传集群

在Spark安装目录中的bin目录进行提交作业操作

spark-submit \

--class com.qianfeng.sparkcore.Demo01_SparkWC \

--master yarn \

--deploy-mode client \

/home/original-hn-bigdata-1.0.jar hdfs://qianfeng01:9820/words hdfs://qianfeng01:9820/output/0901注意:如果HDFS集群中有数据文件直接使用集群的数据文件即可,如果没有的话使用【hdfs dfs -put /home/words /】从Linux系统中将文件上传到HDFS,查看集群中运行之后的结果【hdfs dfs -tail output/0901/*】

Guff_hys_python数据结构,大数据开发学习,python实训项目-CSDN博客